The NVIDIA Titan V Preview - Titanomachy: War of the Titans

by Ryan Smith & Nate Oh on December 20, 2017 11:30 AM EST

Synthetic Graphics Performance

Moving on, let’s take a look at the synthetic graphics performance of the Titan V. As this is the first graphics-enabled product using the GV100 GPU, this can potentially give us some insight into the yet-untapped graphics capabilities of the Volta architecture. However, the usual disclaimer about early drivers and early hardware is very much in effect here: we don’t know for sure how consumer Volta parts will be configured, and in the meantime it’s not clear just how well the Volta drivers have been optimized for graphics.

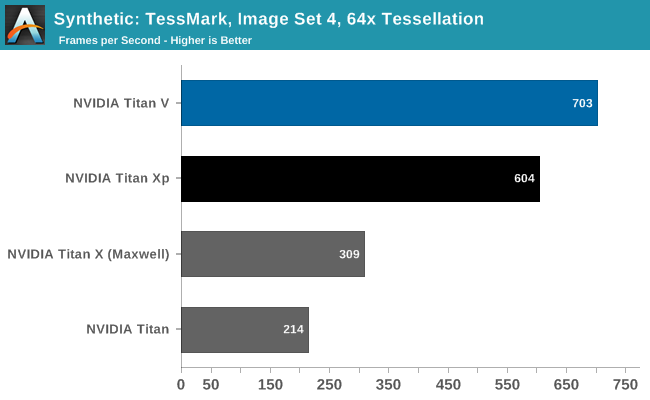

Right now the graphics aspects of the Volta architecture are something of a black box, and tessellation performance with TessMark reflects this. On Pascal, NVIDIA has one Polymorph engine per TPC; if this is the same on GV100, then Titan V has around 40% more geometry hardware than Titan Xp. On the other hand, the actual throughput rate of Polymorph engines has varied over the years as NVIDIA has dialed it up or down in line with their performance goals and the number of such hardware units available. So for all we know, Titan V’s Polymorph engines don’t pack the same punch as Titan Xp’s.

At any rate, Titan V once again comes away with a win. But the 16% lead is not a night-and-day difference, unlike what we’ve seen with compute.

Beyond3D Suite

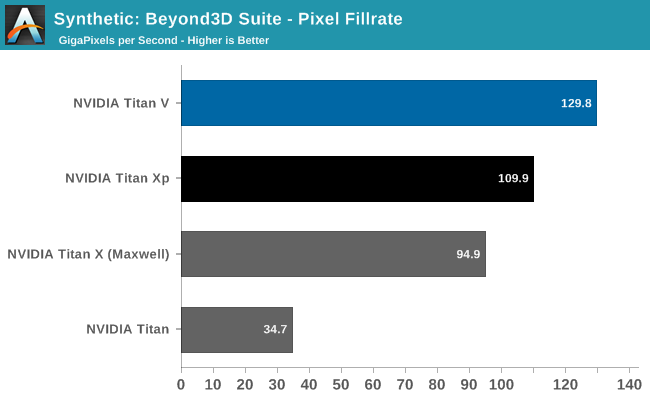

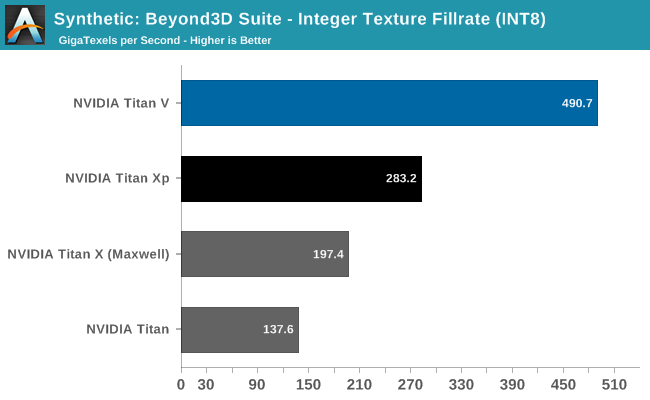

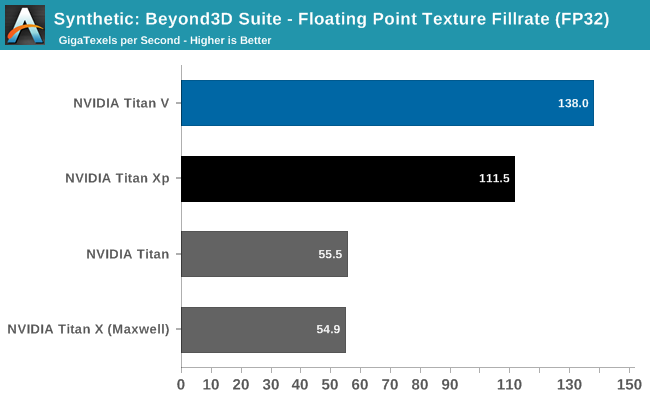

Meanwhile, for looking at texel and pixel fillrate, we have the Beyond3D Test Suite.

Starting with the pixel throughput benchmark, I’m actually a bit surprised the difference is as great as it is. As discussed earlier, on paper the Titan V has lower ROP throughput than the Titan Xp, owing to lower clockspeeds. And yet in this case it’s still in the lead by 18%. It’s not a massive difference, but it means the picture is more complex than a naïve interpretation of GV100 as a bigger Pascal would suggest. This may be an under-the-hood efficiency improvement (such as compression), or it could just be due to Titan V’s raw bandwidth advantage. This will be worth keeping an eye on once NVIDIA starts talking about consumer Volta cards.

As for texel throughput, it’s interesting that INT8 and FP32 texture throughput gains are so divergent. Titan V is showing much greater gains in INT8 texture throughput than in FP32, and Titan Xp was no slouch in that department to begin with. I’m curious whether this is a product of separating the integer ALUs from the FP ALUs on Volta, or whether NVIDIA has made some significant changes to their texture mapping units.

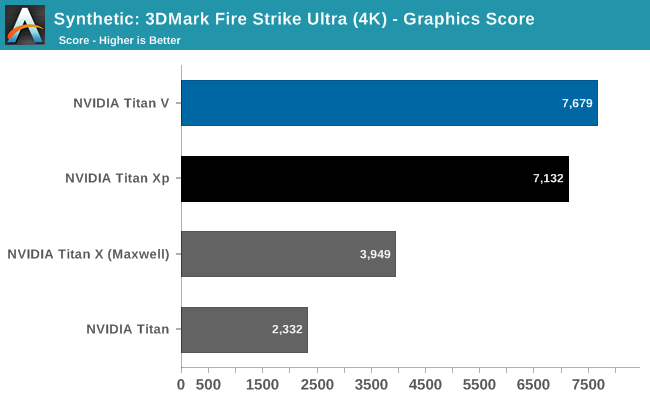

3DMark Fire Strike Ultra

I’ve also gone ahead and run Futuremark’s 3DMark Fire Strike Ultra benchmark on the Titans. This benchmark is a bit older, but it’s a better understood (and better optimized) DX11 benchmark.

The results here find the Titan V in the lead, but not by much: just 8%. Relative to Pascal/Titan Xp, Titan V’s performance advantages and architectural improvements are inconsistent, inasmuch as not everything has been improved to the same degree. These results point to a bottleneck elsewhere – perhaps the ROPs? – but don’t isolate it. More significantly though, it’s an indication that while Titan V can do graphics, perhaps it’s not well-built (or at least well-optimized) for the task.

SPECViewPerf 12.1.1

Finally, while SPECViewPerf’s component software packages are more for the professional visualization side of the market – and NVIDIA doesn’t optimize the GeForce driver stack for these programs – I wanted to quickly run all of the Titans through the test all the same, just to see what we’d get.

NVIDIA Titan Cards SPECviewperf 12.1 FPS Scores

| Viewset | Titan V | Titan Xp | GTX Titan X | GTX Titan |
|---|---|---|---|---|
| 3dsmax-05 | 176.3 | 173.7 | 133.1 | 100.3 |
| catia-04 | 180.3 | 181.4 | 71.3 | 24.1 |
| creo-01 | 119.4 | 122.1 | 41.0 | 30.1 |
| energy-01 | 27.7 | 21.2 | 8.7 | 5.8 |
| maya-04 | 159.0 | 152.0 | 131.6 | 98.6 |
| medical-01 | 89.4 | 91.9 | 39.9 | 27.7 |
| showcase-01 | 175.5 | 167.0 | 98.7 | 67.0 |
| snx-02 | 223.2 | 225.3 | 7.37 | 4.1 |
| sw-03 | 110.1 | 100.7 | 52.2 | 41.3 |

The end result is actually quite interesting. The Titan V only wins a bit more than it loses, as the card doesn’t pick up much in the way of performance versus the Titan Xp. Professional visualization tasks tend to be more ROP-bound, so this may be a consequence of that, and I wouldn’t read too much into this versus anything Quadro (or what a Quadro GV100 card could do). But it illustrates once again how Titan V has improved at some tasks by more than it has in others.
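To make the "wins a bit more than it loses" observation concrete, here is a minimal Python sketch that computes the Titan V's per-viewset lead over the Titan Xp from the scores in the table above. The names `SCORES`, `relative_gain`, and `summarize` are hypothetical helpers introduced for illustration; they are not part of SPECviewperf or of our test tooling.

```python
# FPS scores copied from the SPECviewperf table above: (Titan V, Titan Xp).
SCORES = {
    "3dsmax-05": (176.3, 173.7),
    "catia-04": (180.3, 181.4),
    "creo-01": (119.4, 122.1),
    "energy-01": (27.7, 21.2),
    "maya-04": (159.0, 152.0),
    "medical-01": (89.4, 91.9),
    "showcase-01": (175.5, 167.0),
    "snx-02": (223.2, 225.3),
    "sw-03": (110.1, 100.7),
}


def relative_gain(titan_v, titan_xp):
    """Percent by which Titan V leads (positive) or trails (negative) Titan Xp."""
    return (titan_v / titan_xp - 1.0) * 100.0


def summarize(scores):
    """Return per-viewset gains plus the number of Titan V wins and losses."""
    gains = {name: relative_gain(v, xp) for name, (v, xp) in scores.items()}
    wins = sum(1 for g in gains.values() if g > 0)
    losses = sum(1 for g in gains.values() if g < 0)
    return gains, wins, losses


if __name__ == "__main__":
    gains, wins, losses = summarize(SCORES)
    for name, gain in gains.items():
        print(f"{name:12s} {gain:+6.1f}%")
    print(f"Titan V wins {wins}, loses {losses}")
```

With the table's numbers, the Titan V comes out ahead in five of the nine viewsets and behind in four, and energy-01 is the only test where the gap reaches double digits.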

111 Comments

mode_13h - Wednesday, December 27, 2017 - link

I don't know if you've heard of OpenCL, but there's no reason why a GPU needs to be programmed in a proprietary language.

It's true that OpenCL has some minor issues with performance portability, but the main problem is Nvidia's stubborn refusal to support anything past version 1.2.

Anyway, lots of businesses know about vendor lock-in and would rather avoid it, so it sounds like you have some growing up to do if you don't understand that.

CiccioB - Monday, January 1, 2018 - link

Grow up.

I repeat. None is wasting millions in using not certified, supported libraries. Let's avoid talking about entire frameworks.

If you think that researches with budgets of millions are nerds working in a garage with avoiding lock-in strategies as their first thought in the morning, well, grow up kid.

Nvidia provides the resources to allow them to exploit their expensive HW at the most of its potential reducing time and other associated costs. Also when upgrading the HW with a better one. That's what counts when investing millions for a job.

For you kid's home made AI joke, you can use whatever alpha library with zero support and certification. Others have already grown up.

mode_13h - Friday, January 5, 2018 - link

No kid here. I've shipped deep-learning based products to paying customers for a major corporation.

I've no doubt you're some sort of Nvidia shill. Employee? Maybe you bought a bunch of their stock? Certainly sounds like you've drunk their kool aid.

Your line of reasoning reminds me of how people used to say businesses would never adopt Linux. Now, it overwhelmingly dominates cloud, embedded, and underpins the Android OS running on most of the world's handsets. Not to mention it's what most "researchers with budgets of millions" use.

tuxRoller - Wednesday, December 20, 2017 - link

"The integer units have now graduated their own set of dedicates cores within the GPU design, meaning that they can be used alongside the FP32 cores much more freely."

Yay! Nvidia caught up to gcn 1.0!

Seriously, this goes to show how good the gcn arch was. It was probably too ambitious for its time as those old gpus have aged really well it took a long time for games to catch up.

CiccioB - Thursday, December 21, 2017 - link

"Nvidia caught up to gcn 1.0!"

Yeah! It is known to the entire universe that it is nvidia that trails AMD performances.

Luckly they managed to get this Volta out in time before the bankruptcy.

tuxRoller - Wednesday, December 27, 2017 - link

I'm speaking about architecture not performance.

CiccioB - Monday, January 1, 2018 - link

New bigger costier architectures with lower performance = fail

tuxRoller - Monday, January 1, 2018 - link

Ah, troll.

CiccioB - Wednesday, December 20, 2017 - link

Useless card

Vega = #poorvolta

StrangerGuy - Thursday, December 21, 2017 - link

AMD can pay me half their marketing budget and I will still do better than them...by doing exactly nothing. Their marketing is worse than being in a state of non-existence.