Tensor

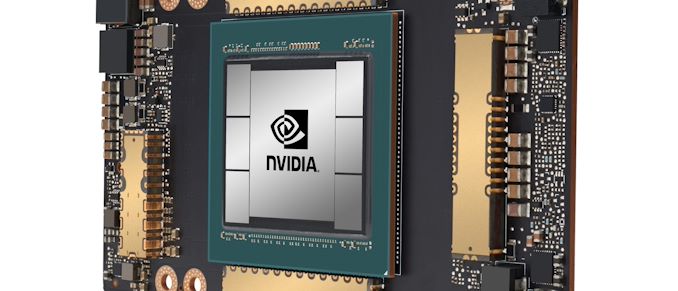

While NVIDIA’s usual presentation efforts for the year were dashed by the current coronavirus outbreak, the company’s march towards developing and releasing newer products has continued unabated. To that end, at today’s now-digital GPU Technology Conference 2020 keynote, the company and its CEO Jensen Huang are taking to the virtual stage to announce NVIDIA’s next-generation GPU architecture, Ampere, and the first products that will use it.

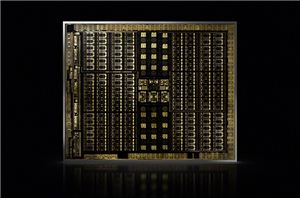

NVIDIA Reveals Next-Gen Turing GPU Architecture: NVIDIA Doubles-Down on Ray Tracing, GDDR6, & More

Moments ago at NVIDIA’s SIGGRAPH 2018 keynote presentation, company CEO Jensen Huang formally unveiled the company’s much-awaited (and much-rumored) Turing GPU architecture. The next generation of NVIDIA’s...

84 by Ryan Smith on 8/13/2018

NVIDIA Unveils & Gives Away New Limited Edition 32GB Titan V "CEO Edition"

NVIDIA’s CEO Jensen Huang has over the years become increasingly known for his giveaway antics at AI conferences. In recent years the CEO has unveiled both the NVIDIA Titan...

38 by Ryan Smith on 6/21/2018

Google Announces Cloud TPU v2 Beta Availability for Google Cloud Platform

This week, Google announced Cloud TPU beta availability on the Google Cloud Platform (GCP), accessible through their Compute Engine infrastructure-as-a-service. Using the second generation of Google’s tensor processing units...

8 by Nate Oh on 2/15/2018

The NVIDIA Titan V Preview - Titanomachy: War of the Titans

Today we're taking a preview look at NVIDIA's new compute accelerator and video card, the $3000 NVIDIA Titan V. In Greek mythology Titanomachy was the war of the Titans...

113 by Ryan Smith & Nate Oh on 12/20/2017

NVIDIA Announces "NVIDIA Titan V" Video Card: GV100 for $3000, On Sale Now

Out of nowhere, NVIDIA has revealed the NVIDIA Titan V today at the 2017 Neural Information Processing Systems conference, with CEO Jen-Hsun Huang flashing out the card on stage...

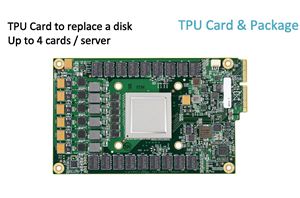

160 by Ryan Smith & Nate Oh on 12/7/2017

Hot Chips: Google TPU Performance Analysis Live Blog (3pm PT, 10pm UTC)

Another Hot Chips talk, now talking Google TPU.

30 by Ian Cutress on 8/22/2017

NVIDIA Volta Unveiled: GV100 GPU and Tesla V100 Accelerator Announced

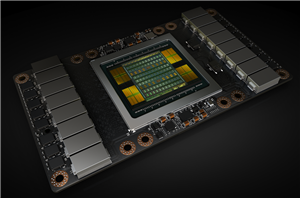

Today at their annual GPU Technology Conference keynote, NVIDIA's CEO Jen-Hsun Huang announced the company's first Volta GPU and Volta products. Taking aim at the very high end of...

179 by Ryan Smith on 5/10/2017