AMD Zen 2 Microarchitecture Analysis: Ryzen 3000 and EPYC Rome

by Dr. Ian Cutress on June 10, 2019 7:22 PM EST

Posted in: CPUs, AMD, Ryzen, EPYC, Infinity Fabric, PCIe 4.0, Zen 2, Rome, Ryzen 3000, Ryzen 3rd Gen

Floating Point

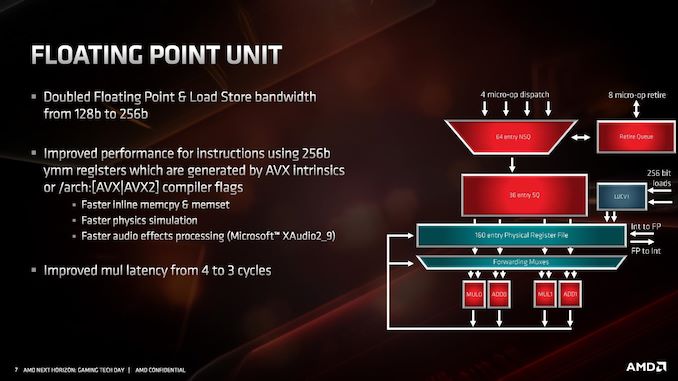

The key improvement for floating point performance is full AVX2 support. AMD has increased the execution unit width from 128-bit to 256-bit, so a 256-bit AVX2 calculation can be handled as a single operation in one cycle, rather than being cracked into two operations over two cycles. This is backed by 256-bit loads and stores, so the FMA units can be fed continuously. AMD states that due to its energy-aware scheduling, there is no predefined frequency drop when using AVX2 instructions. Frequency may still be reduced depending on temperature and voltage requirements, but that’s automatic regardless of the instructions used.
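To make the width change concrete, here is a minimal Python sketch that counts how many operations a 256-bit AVX2 calculation needs on a 128-bit versus a 256-bit datapath, and how many single-precision FLOPs one FMA operation covers at each width. The function names are hypothetical, and the model assumes datapath width is the only factor; it is an illustration, not a description of AMD's actual scheduler.

```python
def ops_per_vector_instruction(vector_bits: int, datapath_bits: int) -> int:
    """Operations needed when a vector instruction is wider than the datapath.

    Uses integer ceiling division: a 256-bit instruction on a 128-bit
    datapath has to be cracked into two halves.
    """
    return -(-vector_bits // datapath_bits)


def fma_flops_per_op(datapath_bits: int, element_bits: int = 32) -> int:
    """FLOPs done by one FMA operation: one multiply plus one add per lane."""
    lanes = datapath_bits // element_bits
    return 2 * lanes


for label, width in (("128-bit datapath", 128), ("256-bit datapath", 256)):
    ops = ops_per_vector_instruction(256, width)
    flops = fma_flops_per_op(width)
    print(f"{label}: {ops} op(s) per 256-bit instruction, "
          f"{flops} FP32 FLOPs per FMA op")
```

Under these assumptions the 128-bit design needs two operations for every 256-bit instruction, while the 256-bit design needs one, which is where the doubling in per-cycle AVX2 work comes from.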

In the floating point unit, the queues accept up to four micro-ops per cycle from the dispatch unit, which feed into a 160-entry physical register file. From there the micro-ops move into four execution units, which can be fed with 256-bit data through the load and store mechanism.

Beyond doubling the width, other tweaks have been made to the FMA units. AMD states that it has increased raw performance in memory allocations, repetitive physics calculations, and certain audio processing techniques.

Another key update is a decrease in FP multiplication latency from 4 cycles to 3 cycles. That is quite a significant improvement. AMD has stated that it is keeping a lot of the detail under wraps, as it wants to present it at Hot Chips in August. We’ll be running a full instruction analysis for our reviews on July 7th.
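A rough way to see why one cycle of latency matters is to look at a chain of dependent multiplies, where each one has to wait for the previous result. The Python sketch below assumes a purely latency-bound chain and ignores every other pipeline effect. The function names are illustrative, and the figure of two multiply-capable pipes is an assumption that this section does not state.

```python
def dependent_chain_cycles(n_ops: int, latency_cycles: int) -> int:
    """Cycles for n_ops multiplies where each depends on the previous result."""
    return n_ops * latency_cycles


def chains_needed_to_saturate(latency_cycles: int, pipes: int) -> int:
    """Independent chains needed to keep every pipe busy each cycle.

    Each pipe can start one op per cycle, but a chain can only start a new
    op every latency_cycles, so latency * pipes chains must be in flight.
    """
    return latency_cycles * pipes


MUL_PIPES = 2  # assumption for illustration, not taken from this section

old_cycles = dependent_chain_cycles(1000, 4)  # 4-cycle multiply
new_cycles = dependent_chain_cycles(1000, 3)  # 3-cycle multiply
print(f"1000 dependent multiplies: {old_cycles} -> {new_cycles} cycles "
      f"({old_cycles / new_cycles:.2f}x faster)")
print(f"independent chains to saturate {MUL_PIPES} pipes: "
      f"{chains_needed_to_saturate(4, MUL_PIPES)} -> "
      f"{chains_needed_to_saturate(3, MUL_PIPES)}")
```

In this simplified model, latency-bound code gets about a third faster, and throughput-bound code needs fewer independent operations in flight to keep the multipliers busy.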

216 Comments

JohnLook - Monday, June 10, 2019 - link

@Ian Cutress Are you sure the IO dies are on TSMC's 14 & 12 nm processes? All info so far was that they were on GloFo's 14 nm ...

Ian Cutress - Monday, June 10, 2019 - link

Sorry, glofo 14 and 12. Matisse IO die is Glofo 12nm. We triple confirmed.

JohnLook - Monday, June 10, 2019 - link

Thanks :-)

scineram - Tuesday, June 11, 2019 - link

It still says Epyc is TSMC.

John_M - Tuesday, June 11, 2019 - link

It would be nice if the article was updated as not everyone reads the comments section and AnandTech articles do often get cited in Wikipedia articles.

Smell This - Wednesday, June 12, 2019 - link

I feel safe in saying that Wiki-Dom will be right on it . . .;-)

So __ those little white lines are the Infinity Scalable Data Fabric (SDF) and the Infinity Scalable Control Fabric (SCF), connecting "Core" chiplets to the I/O core.

"The SDF might have dozens of connecting points hooking together things such as PCIe PHYs, memory controllers, USB hub, and the various computing and execution units."

"The SDF is a superset of what was previously HyperTransport. The SCF is a complementary plane that handles the transmission ..."

https://en.wikichip.org/wiki/amd/infinity_fabric

Of course, I counted them (rolling eyes at myself), and determined there were 32 connecting a single core chiplet to the I/O core. I'm smelling a rational relationship between those 32, and other such stuff. Are the number of IF links a proprietary secret to AMD?

Yah know? It would be a nice 'get' if a tech writer interviewed someone in that former Sea Micro bunch, and spilled a few beans . . .

Smell This - Wednesday, June 12, 2019 - link

Might be 36 ... LOL

Smell This - Wednesday, June 12, 2019 - link

Could be 42 or 46 IF links on the right (I'll stop obsessing)

sweetca - Thursday, June 13, 2019 - link

I don't understand anything you said 🙂

Smell This - Sunday, June 16, 2019 - link

I was (am) trolling Ian/AT for a **Deep(er) Dive** on the Infinity Fabric -- its past, and its future. The EPYC Rome processors have 8 "Core" chiplets connecting to the I/O core. Right? Those 'little white lines' (32- to 46?) from each chiplet, presumably, scale to ... infinity?

AMD purchased SeaMicro 7 years ago as the "Freedom Fabric" platform was developed. Initially the SM15000 'stitched' together 512 compute cores, 160 gigabits of I/O networking and 5+ petabytes of storage to form a 'very-high-density server.'

And then . . . they went dark.

https://www.anandtech.com/show/9170/amd-exits-dens...

(see the last comment on that link)