Solving the Automotive Bandwidth Problem: Aquantia Partners with NVIDIA for 10GbE

by Ian Cutress on January 29, 2018 9:00 AM EST

Posted in: Automotive, Networking, SoCs, NVIDIA, Aquantia, Xavier, 10GbE, Autonomous

One of the lesser-known challenges in fully autonomous vehicles is moving data around the car. There are usually two options: transport raw image and sensor data at very low latency but with high bandwidth requirements, or use encoders and DSPs to send fewer bits at a higher latency. As development moves toward the first Level 4 (near autonomous) and Level 5 (fully autonomous) vehicle systems, low latency has won out for safety and response-time reasons. This means shifting data around, and a lot of it.

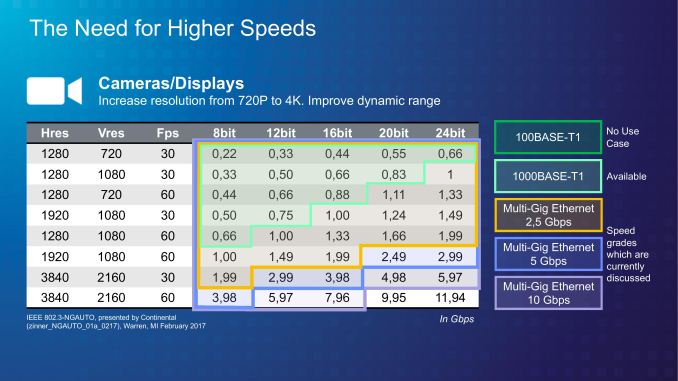

Bandwidth required, in Gbps, for raw video at a given resolution, frame rate, and color depth. For example, 720p30 at 24-bit RGB (8 bits per color) is 0.66 Gbps.

Raw camera data is big: a 1080p60 video stream with 8 bits of color per channel requires a bandwidth of 0.373 GB/s. That is gigabytes per second, or the equivalent of 2.99 gigabits per second, per camera. Now strap anywhere from 4 to 8 of these sensors on board, add the switches needed to manage them and the redundancy required for autonomy to keep working if one element goes offline, and we hit a bandwidth problem. Gigabit Ethernet simply isn't enough.
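The arithmetic behind these figures can be checked with a short sketch. The function name `raw_video_gbps` is hypothetical, and the calculation assumes 1 Gb = 10^9 bits and counts only active pixels (no blanking intervals or link protocol overhead), so real links would need somewhat more headroom.

```python
def raw_video_gbps(width, height, fps, bits_per_pixel=24):
    """Uncompressed video bandwidth in gigabits per second (1 Gb = 1e9 bits).

    Assumption: only active pixels are counted; blanking and any
    transport overhead are ignored.
    """
    return width * height * fps * bits_per_pixel / 1e9


# 1080p60 at 24-bit RGB (8 bits per color channel)
gbps_1080p60 = raw_video_gbps(1920, 1080, 60)
gbytes_1080p60 = gbps_1080p60 / 8  # gigabytes per second

# 720p30 at 24-bit RGB, the example from the table caption
gbps_720p30 = raw_video_gbps(1280, 720, 30)

print(f"1080p60: {gbps_1080p60:.2f} Gbps ({gbytes_1080p60:.3f} GB/s)")
print(f"720p30:  {gbps_720p30:.2f} Gbps")

# Aggregate raw bandwidth for the 4 to 8 cameras mentioned in the text
for cameras in (4, 8):
    print(f"{cameras} cameras at 1080p60: {cameras * gbps_1080p60:.1f} Gbps")
```

Even a single 1080p60 stream is roughly three times what a gigabit link can carry, before redundancy is considered.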

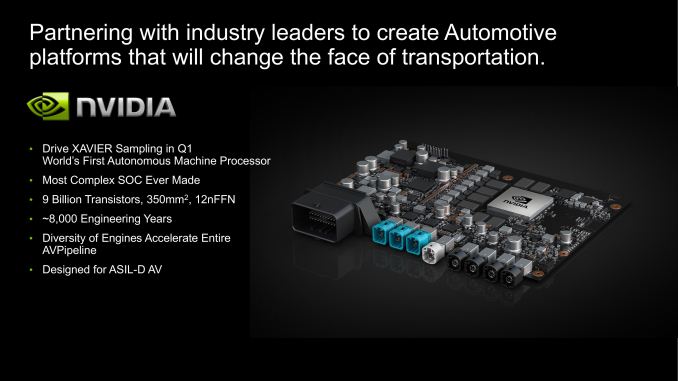

The announcement today is twofold. First, NVIDIA and Aquantia are announcing a partnership under which Aquantia-based network controllers and PHYs will be used inside NVIDIA's DrivePX Xavier platform, and subsequently the Pegasus platform as well. Second, Aquantia is announcing its new automotive product stack, AQcelerate, consisting of three chips that cover different automotive networking requirements.

Aquantia AQcelerate for Automotive

| Part | Type | Input | Output | Use Case | Package Size (FCBGA) |
|---|---|---|---|---|---|
| AQC100 | PHY | 2500Base-X, USXGMII, XFI, KR | 10GbE, 5GbE, 2.5GbE | ADAS, Cameras, Parking Assist, Sensors, Telematics, Audio/Video, Infotainment | - |
| AQVC100 | MAC | XFI | PCIe 2/3 x2/x4 | (as above) | 7x11 mm |
| AQVC107 | Both | PCIe 2/3 x1/x2/x4 | 10GbE, 5GbE, 2.5GbE | (as above) | 12x14 mm |

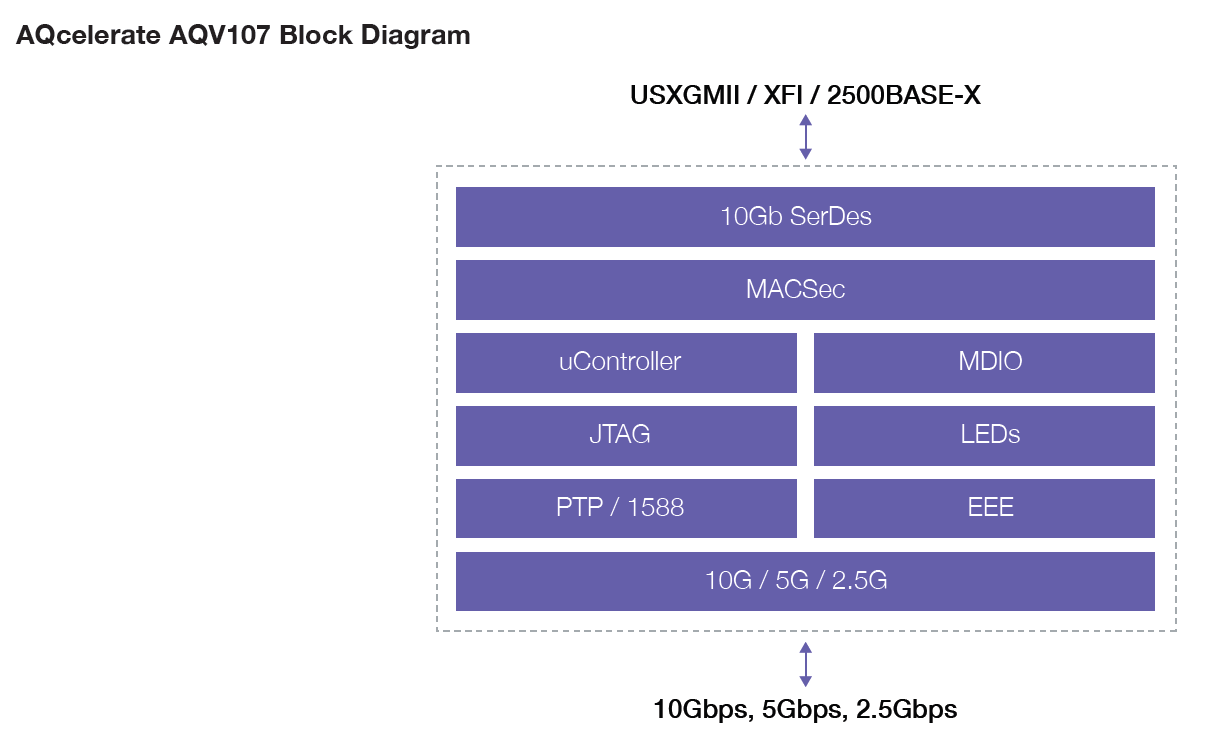

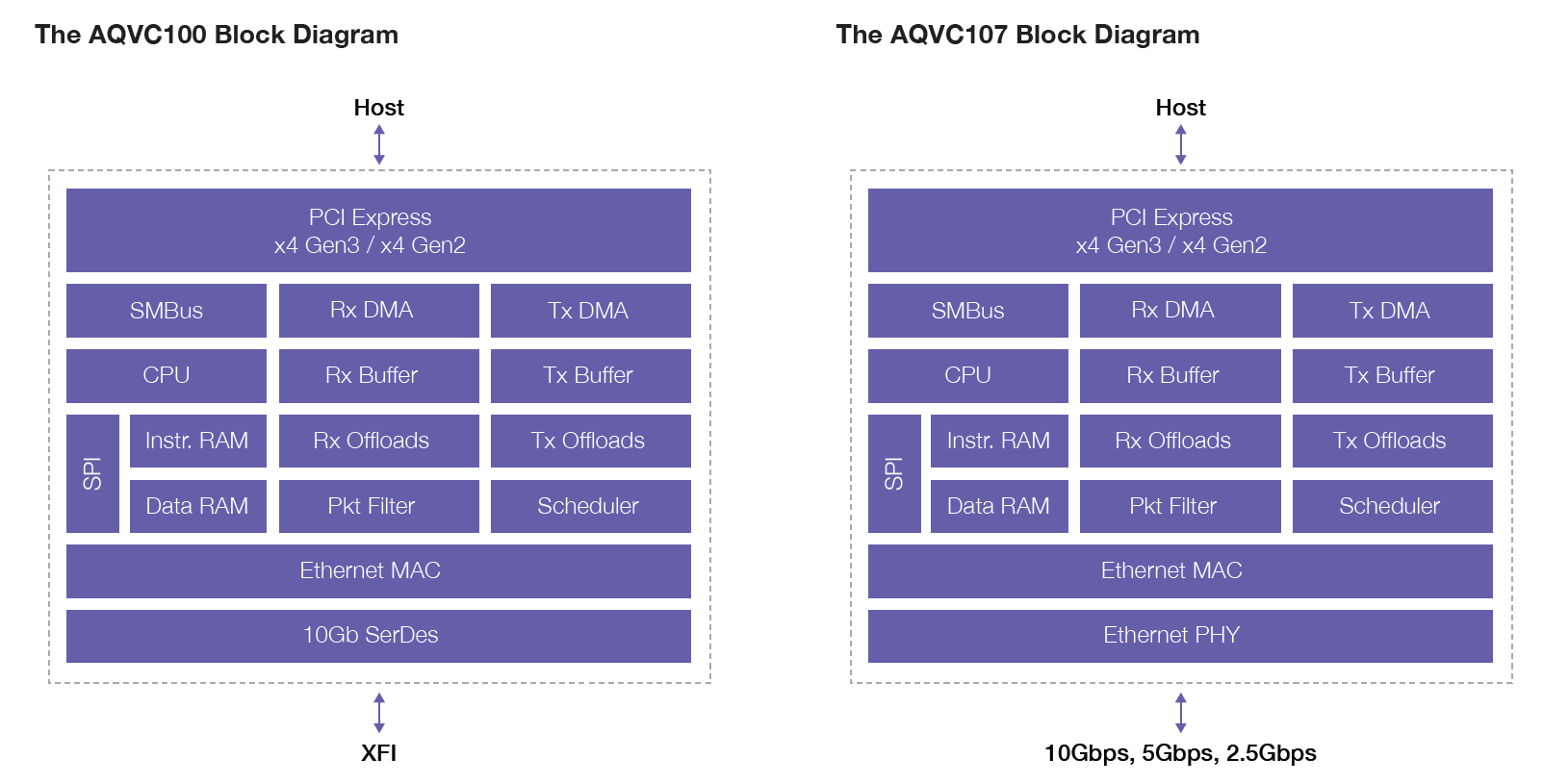

Of the three new chips, one is a PHY, one is a PCIe network controller, and the third combines the two. The PHY can take standard camera inputs (2500BASE-X, USXGMII, and XFI) and send the data over multi-gigabit Ethernet as required. The controller can take standard XFI 10 Gb SerDes data and output directly to PCIe, while the combination chip acts as a regular MAC+PHY combo, converting Ethernet data to PCIe. All three chips are built on a 28nm process (Aquantia works with both TSMC and GlobalFoundries, but stated that the fab for these products is not being announced) and are qualified to the AEC-Q100 industry standard.


The benefit of using multi-gigabit, as explained to us by Aquantia, is that it allows for a 2.5G connection over a single standard twisted pair, 5G over two pairs, and up to 10G over four pairs. Current automotive networking systems are based on single-pair 100/1000 Mbit technology, which is insufficient for the high-bandwidth, low-latency requirements that companies like NVIDIA build into their Level 4/5 systems.
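To illustrate how the pair count maps onto raw camera streams, here is a minimal sketch. The pair-to-rate table comes from Aquantia's description above; the helper names (`pairs_needed`, `raw_video_gbps`) are hypothetical, and the comparison assumes the full line rate is available as payload, ignoring Ethernet framing overhead.

```python
# Link rate in Gbps for each twisted-pair count, as described by Aquantia.
LINK_GBPS_BY_PAIRS = {1: 2.5, 2: 5.0, 4: 10.0}


def raw_video_gbps(width, height, fps, bits_per_pixel=24):
    """Uncompressed video bandwidth in Gbps (active pixels only)."""
    return width * height * fps * bits_per_pixel / 1e9


def pairs_needed(stream_gbps):
    """Return the fewest pairs whose link rate covers the stream.

    Returns None when even the four-pair 10G link is too slow.
    Assumption: line rate equals usable payload rate.
    """
    for pairs in sorted(LINK_GBPS_BY_PAIRS):
        if LINK_GBPS_BY_PAIRS[pairs] >= stream_gbps:
            return pairs
    return None


pairs_720p30 = pairs_needed(raw_video_gbps(1280, 720, 30))
pairs_1080p60 = pairs_needed(raw_video_gbps(1920, 1080, 60))
pairs_4k60 = pairs_needed(raw_video_gbps(3840, 2160, 60))

print("720p30 :", pairs_720p30)
print("1080p60:", pairs_1080p60)
print("4K60   :", pairs_4k60)
```

Under these assumptions a raw 720p30 stream fits on a single pair, a raw 1080p60 stream needs the dual-pair 5G link, and a raw 24-bit 4K60 stream would not fit on a single 10G link at all.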

These chips were on Aquantia's roadmap before its collaboration with NVIDIA; NVIDIA approached Aquantia looking for a solution, given Aquantia's lead over its rivals in multi-gigabit Ethernet. We are told that the silicon doesn't do anything specific to NVIDIA, which leaves other companies interested in automotive technology free to use Aquantia as well. With Aquantia ahead of the likes of Intel, Qualcomm, and Realtek in the multi-gigabit Ethernet space, it seems that something like this is the only option at this point for wired connectivity if you need to send raw data. The lead time for the collaboration seems to be substantial, however: Aquantia stated that NVIDIA's Gary Shapiro recorded promotional material for them in the middle of last year, but Xavier was announced in 2016, so it is likely that Aquantia and NVIDIA were looking at integration before then.

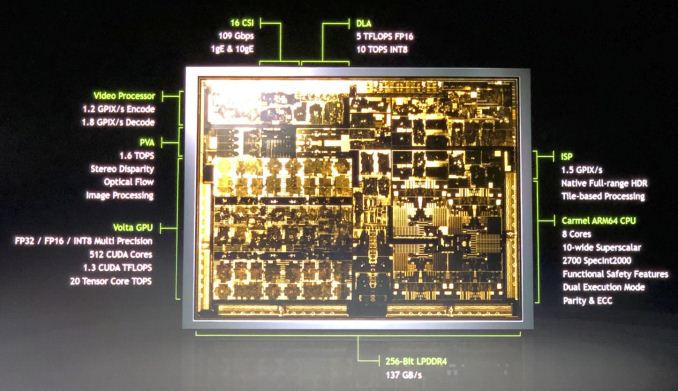

A quick side discussion on managing all this data. If there is 16 GB/s of data from all the sensors flying around, the internal switches and SoCs have to be able to handle it. At CES, NVIDIA provided a basic block diagram of the Xavier SoC, including some details about its custom ARM cores, its GPU, its DSPs, and a little about the networking.

Image via CNX-Software

The slide shows that the silicon has gigabit and 10 gigabit Ethernet embedded (so it just needs a PHY to work), as well as 109 Gbps of total networking support. The video processor supports 1.8 gigapixel/s decode, which, if we plug in some numbers (1080p60 = 124 MPixel/s), allows for about a dozen or so cameras at 8-bit color, or a combination of 4K cameras and other sensors. The images of Xavier also show the ISP, capable of 1.5 gigapixel/s.
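The camera count follows from dividing the pixel budget by the per-stream pixel rate. The sketch below works that through; the function names are hypothetical, the 4K case assumes 3840x2160 at 60 fps (the text does not specify a 4K frame rate), and it treats the quoted gigapixel/s figures as budgets that can be split evenly across streams.

```python
def pixel_rate(width, height, fps):
    """Pixels per second for one video stream."""
    return width * height * fps


def max_streams(budget_pixels_per_s, width, height, fps):
    """Whole number of identical streams that fit in a pixel-rate budget."""
    return int(budget_pixels_per_s // pixel_rate(width, height, fps))


DECODE_BUDGET = 1.8e9  # video processor decode rate quoted on the slide
ISP_BUDGET = 1.5e9     # ISP rate quoted on the slide

rate_1080p60 = pixel_rate(1920, 1080, 60)
decode_1080p60 = max_streams(DECODE_BUDGET, 1920, 1080, 60)
isp_1080p60 = max_streams(ISP_BUDGET, 1920, 1080, 60)
# Assumption: "4K" means 3840x2160 at 60 fps
decode_4k60 = max_streams(DECODE_BUDGET, 3840, 2160, 60)

print(f"1080p60 pixel rate: {rate_1080p60 / 1e6:.0f} MPixel/s")
print(f"1080p60 streams within decode budget: {decode_1080p60}")
print(f"1080p60 streams within ISP budget:    {isp_1080p60}")
print(f"4K60 streams within decode budget:    {decode_4k60}")
```

On these assumptions the decode budget covers 14 streams at 1080p60 and the ISP budget covers 12, which is consistent with the "dozen or so" estimate.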

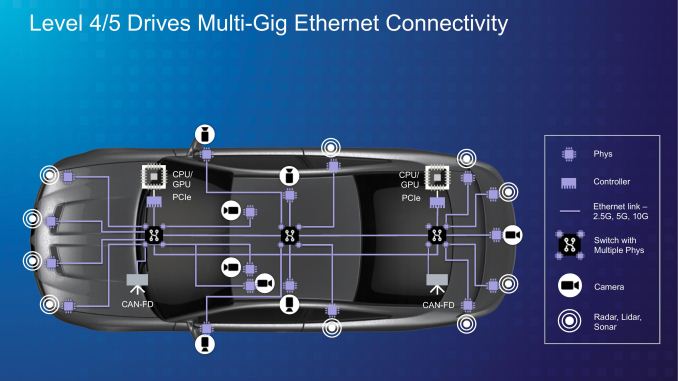

A mockup example from Aquantia showed a potential Level 4/5 autonomous arrangement, with 10 RADAR/LIDAR/SONAR sensors, 8 cameras, and a total of 18 PHYs, two controllers, and three switches. Bearing in mind that there is a level of redundancy in these systems (cameras and sensors should connect to at least two switches, if one CPU fails then another can take over, etc.), this is a lot of networking silicon to go into a single car, and a large opportunity for anyone who can get multi-gigabit data transfer done right. The question then comes down to power, which Aquantia is not revealing at this time, preferring instead to let NVIDIA quote a system-wide power figure.

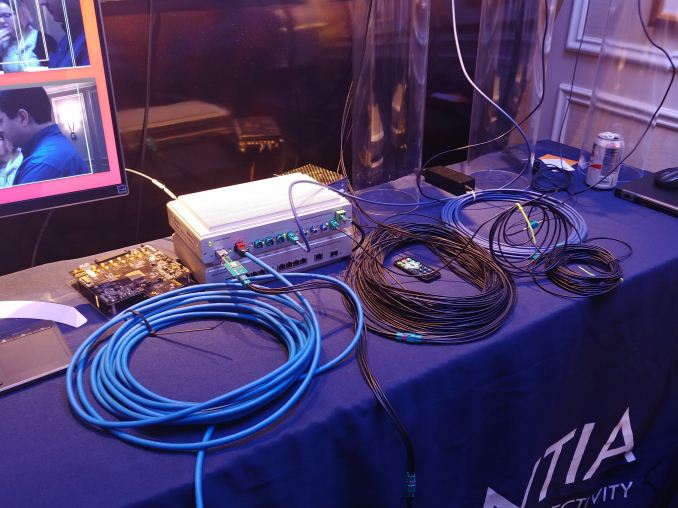

The image at the top is the setup shown to us by Aquantia at CES, demonstrating a switch using AQcelerate silicon capable of supporting various cables, including the vital 2.5 Gbps over a single pair.

Related Reading

- Aquantia Launches New 2.5G/5G Multi-Gigabit Network Controllers for PCs

- Aquantia Launch AQtion 5G/2.5G/1G Multi-Gigabit Ethernet Cards (NICs) for PCIe

- Lower Cost 10GBase-T Switches Coming: 4, 5 and 8-port Aquantia Solutions at ~$30/Port

- Dell Now Offers Aquantia AQtion AQN-108-Based 5 GbE Cards with Select PCs

Source: Aquantia

25 Comments


dlum - Monday, January 29, 2018

Wishing all the best to Aquantia for being the first to give us hope for widespread adoption of multi-GB Ethernet, esp. in general/consumer-level/non-enterprise sectors. Hope such strong collaboration/partnership makes them stronger and brings closer the moment when any non-lowest-end-budget motherboard comes equipped with 2.5 or 5 GbE.

peevee - Monday, January 29, 2018

10GbE is not multi-GB (GB being GigaByte, unlike Gb which is Gigabit). Its real throughput is almost exactly 1 GB. And there is nothing new in 10GbE.

dlum - Monday, January 29, 2018

Yes I meant multi-Gb (2.5/5Gb or more). Why should it be new? It's still far cry from being standard in consumer MBs.

Santoval - Monday, January 29, 2018

It is not new in the server and datacenter market, but it is still almost non existent in the consumer market - thus the "widespread adoption" mention.

Duncan Macdonald - Monday, January 29, 2018

The high resolutions and dynamic range quoted MUST not be required - even a light mist (still OK for human driving) will drop the resolution to VGA levels and dynamic range to 6 bits or less. If the control system cannot handle such conditions then it is not suitable for road use.

Billy Tallis - Monday, January 29, 2018

You're thinking in terms of the dynamic range exhibited by a single frame of video data. But you want the car to be able to get those 6+ bits of contrast in every frame, even as lighting conditions change quickly and drastically. You don't want to wait for a feedback loop to adjust sensor gain over the course of several frames every time you go into a tunnel.

syxbit - Monday, January 29, 2018

I wish Nvidia would make a new Shield device with the Xavier SoC.

Amandtec - Monday, January 29, 2018

With small plastic wheels bundled in? Half of the Xavier SOC is for self driving cars only. I think what you actually want is the latest GPU into your shield device when it launches later this year.

Threska - Monday, January 29, 2018

One would think fiber optics would be a better fit. Automotive is a harsh, noisy environment. The electrical isolation of fiber would help as well. Also moving processing closer to the respective sensors would help.

Ian Cutress - Monday, January 29, 2018

Optics can't be bent and molded the same way copper can, especially when it's a tight fit. I addressed the issue of moving processing closer to sensors in the first paragraph: if you encode at the sensor then decode at the SoC, it adds latency. Now if you want to process frames at every sensor, you'll need an SoC at every sensor, and find a way to ensure that the system can work when one of those sensors is knocked out. At this point in time, centralized processing for Level 4/5 is the preferred fit.