The NVIDIA Titan V Deep Learning Deep Dive: It's All About The Tensor Cores

by Nate Oh on July 3, 2018 10:15 AM EST

DeepBench Training: GEMM and RNN

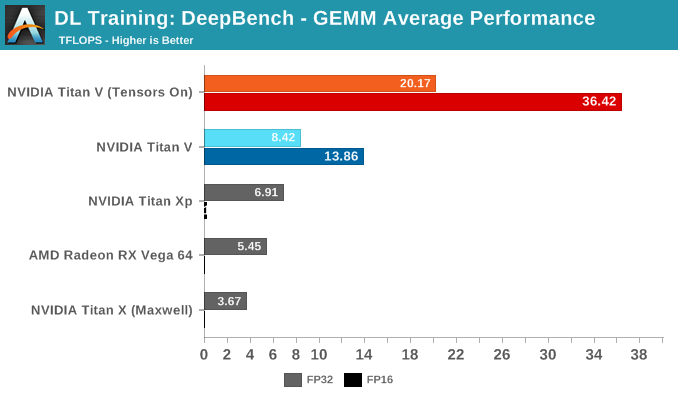

Opening our DeepBench results are the GEMM tests, an operation we've already seen in pure synthetic form. Because these tests use kernels and GEMM operations drawn from actual deep learning applications (DeepSpeech, Speaker ID, and Language Modelling), performance here is a little more representative than running pure matrix-matrix multiplications through cuBLAS.

To preface, the NVIDIA Titan Xp has crippled half precision, while the GeForce GTX Titan X (Maxwell) only supports single precision. According to Baidu, DeepBench also tests an 'FP32 with tensor cores' mode for Volta, where 32-bit inputs undergo 16-bit multiplication and 32-bit accumulation. The specifics of this are somewhat unclear, but we've gone ahead and included those results. Otherwise, FP16 with tensor cores is the 'standard' Volta mixed precision.
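To make the "16-bit multiplication and 32-bit accumulation" description a little more concrete, here is a minimal Python sketch of one plausible reading: inputs are rounded to FP16, multiplied, and the products are summed in an FP32 accumulator. This is a software emulation built on the standard library's `struct` half-precision format, not Baidu's or NVIDIA's implementation, and the helper names (`to_fp16`, `to_fp32`, `dot_fp16_mul_fp32_acc`, `dot_fp16_acc`) are ours for illustration only.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def dot_fp16_mul_fp32_acc(a, b):
    """Dot product with FP16 inputs and an FP32 accumulator (assumed mixed-precision model)."""
    acc = 0.0
    for x, y in zip(a, b):
        # The product of two FP16 values fits in FP32 without rounding.
        product = to_fp16(x) * to_fp16(y)
        # Round the running sum to FP32 after every addition.
        acc = to_fp32(acc + product)
    return acc


def dot_fp16_acc(a, b):
    """Same dot product, but the products and the accumulator are rounded to FP16."""
    acc = 0.0
    for x, y in zip(a, b):
        product = to_fp16(to_fp16(x) * to_fp16(y))
        acc = to_fp16(acc + product)
    return acc


if __name__ == "__main__":
    ones = [1.0] * 4096
    print("FP32 accumulate:", dot_fp16_mul_fp32_acc(ones, ones))
    print("FP16 accumulate:", dot_fp16_acc(ones, ones))
```

With 4096 products of 1.0, the FP32 accumulator reaches 4096, while the all-FP16 accumulator stalls at 2048, because 2049 is not representable in half precision. That gap is the usual motivation for accumulating in 32 bits.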

The average results of all sub-tests seem unsurprising: enabling tensor cores results in large performance increases all around. Digging into the details reveals just how specific tensor core acceleration is to certain types of matrix-matrix multiplications.

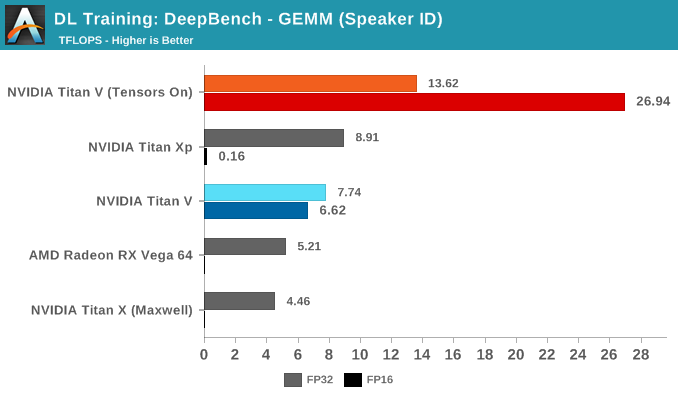

Splitting up the GEMM tests by DL application, we can start to see how tensor cores fare in ideal (and non-ideal) circumstances.

The Speaker ID GEMM workloads actually consist of only two kernels, and they run so briefly that a difference of 10 microseconds translates to a difference of around 1 TFLOPS. At that scale, the Titan Xp's higher result is within normal variance.
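The sensitivity to a few microseconds follows directly from how GEMM throughput is computed: an m x n x k multiplication performs 2·m·n·k floating point operations, and TFLOPS is that count divided by the kernel time. The sketch below uses hypothetical dimensions and timings (not the actual Speaker ID kernels) purely to show the arithmetic.

```python
def gemm_tflops(m: int, n: int, k: int, seconds: float) -> float:
    """Throughput of an m x n x k GEMM, counting one multiply and one add per term."""
    flops = 2 * m * n * k
    return flops / seconds / 1e12


# Hypothetical kernel size and run times, chosen only for illustration.
m = n = k = 1024
fast = gemm_tflops(m, n, k, 150e-6)  # 150 microseconds
slow = gemm_tflops(m, n, k, 160e-6)  # 160 microseconds

if __name__ == "__main__":
    print(f"150 us: {fast:.2f} TFLOPS")
    print(f"160 us: {slow:.2f} TFLOPS")
    print(f"difference: {fast - slow:.2f} TFLOPS")
```

For a kernel this short, a 10 microsecond swing moves the result by a bit under 1 TFLOPS, so small run-to-run timing noise shows up as a visible gap on the chart.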

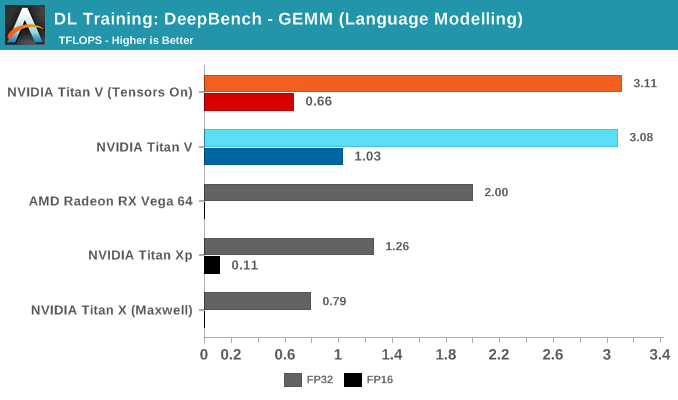

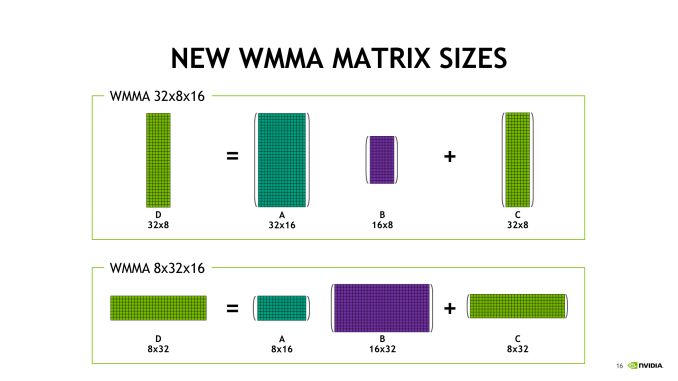

Looking into the Language Modelling kernels explains the poor performance of tensor cores. The sizes of those kernel matrices are m = 512 or 1024, n = 8 or 16, and k = 500000, and the small size of n compared to the very large k is notable. While each number is technically divisible by 8 – one of the basic requirements to qualify for tensor core acceleration – the shape of these matrices isn't a neat fit with the supported basic WMMA shapes: 16x16x16, 32x8x16, and 8x32x16. Nor does it go well with 8x8x8, if we're assuming that tensor cores truly operate on an independent 8x8x8 level.

So tensor cores are being pulled into action on very lopsided matrices that, with n = 8 or 16, can't easily be broken up, at least not without performance penalties.
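As a quick sanity check on the numbers quoted above, the following Python sketch enumerates the four Language Modelling shapes, confirms that every dimension is divisible by 8, and reports how lopsided each one is in terms of k relative to n. The function names are ours, and the divisibility test is only the basic eligibility rule mentioned in the text, not a model of how cuBLAS actually picks kernels.

```python
# Language Modelling GEMM shapes as described: m = 512 or 1024, n = 8 or 16, k = 500000.
LANGUAGE_MODELLING_SHAPES = [
    (m, n, 500_000) for m in (512, 1024) for n in (8, 16)
]


def divisible_by_8(m: int, n: int, k: int) -> bool:
    """Basic tensor core eligibility rule: every dimension is a multiple of 8."""
    return all(dim % 8 == 0 for dim in (m, n, k))


def k_to_n_ratio(m: int, n: int, k: int) -> float:
    """How many times larger k is than n; a rough measure of lopsidedness."""
    return k / n


if __name__ == "__main__":
    for shape in LANGUAGE_MODELLING_SHAPES:
        m, n, k = shape
        print(
            f"m={m:5d} n={n:3d} k={k}: "
            f"divisible by 8 = {divisible_by_8(*shape)}, "
            f"k/n = {k_to_n_ratio(*shape):.0f}"
        )
```

Every shape passes the divisibility rule, yet k is tens of thousands of times larger than n, which is the lopsidedness described above.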

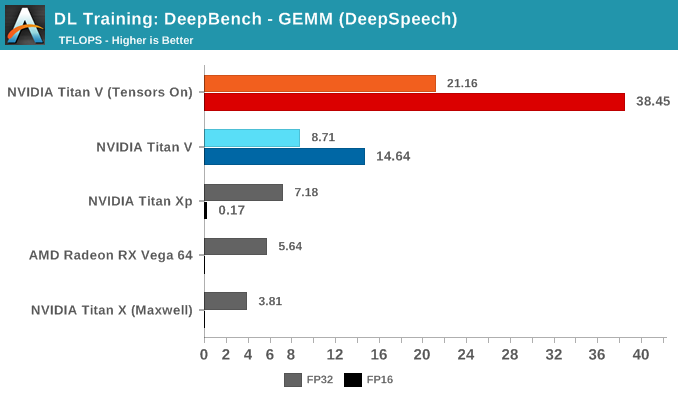

Meanwhile, the tensor cores have runaway performance on the DeepSpeech kernels.

On average, this works out to an impressive number of TFLOPS. Kernel by kernel, though, the same penalty for poorly proportioned matrices is occurring. When matrices fit the tensor core proportions, performance can jump to more than 90 TFLOPS. When they don't, and the right transpositions are not in play, performance can drop to below 1 TFLOPS.

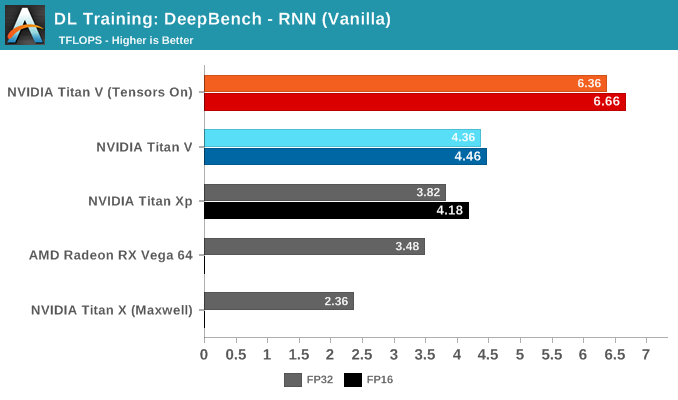

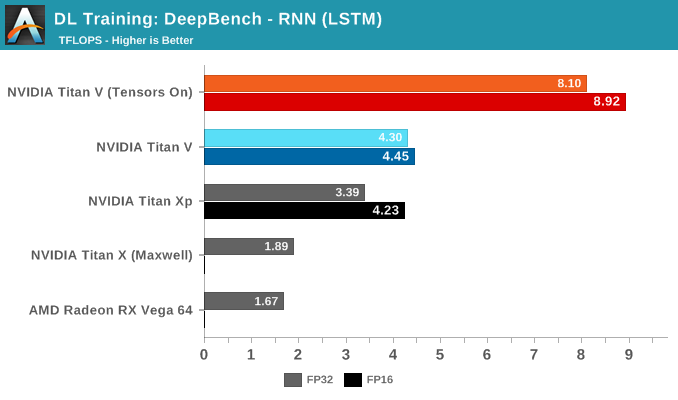

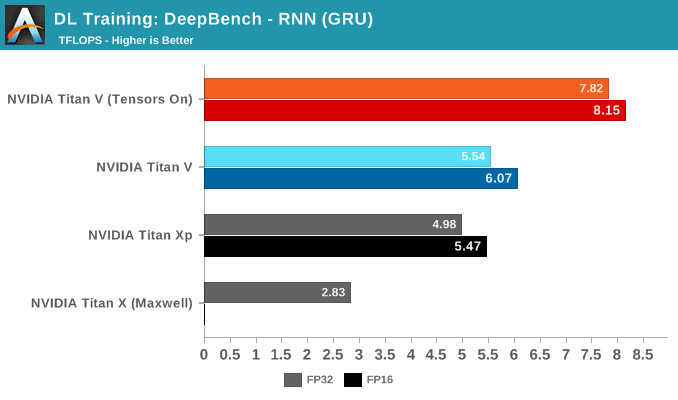

For the DeepBench RNN kernels, there is no drastic divergence between RNN types. But within each RNN type, the same patterns can be seen when judging kernel by kernel.

What is interesting is how close the Titan Xp can come to non-tensor core accelerated Titan V performance. We know that the Titan Xp's superior clockspeeds are doing it a favor here, but one of the Titan V's big advantages – HBM2 – is also partially mitigated by its 3 controller configuration. Comparing bandwidth, the Titan V theoretically has only around 100GB/s more, with the same amount of VRAM. And while Volta's HBM controller efficiency has improved over Pascal P100, the Titan Xp presumably features NVIDIA's 2nd generation GDDR5X controller.

65 Comments

krazyfrog - Saturday, July 7, 2018 - link

I don't think so. https://www.anandtech.com/show/12170/nvidia-titan-...

mode_13h - Saturday, July 7, 2018 - link

Yeah, I mean why else do you think they built the DGX Station? https://www.nvidia.com/en-us/data-center/dgx-stati...

They claim "AI", but I'm sure it was just an excuse they told their investors.

keg504 - Tuesday, July 3, 2018 - link

"With Volta, there has little detail of anything other than GV100 exists..." (First page)

What is this sentence supposed to be saying?

Nate Oh - Tuesday, July 3, 2018 - link

Apologies, was a brain fart :)

I've reworked the sentence, but the gist is: GV100 is the only Volta silicon that we know of (outside of an upcoming Drive iGPU)

junky77 - Tuesday, July 3, 2018 - link

Thanks

Any thoughts about Google TPUv2 in comparison?

mode_13h - Tuesday, July 3, 2018 - link

TPUv2 is only 45 TFLOPS/chip. They initially grabbed a lot of attention with a 180 TFLOPS figure, but that turned out to be per-board.

I'm not sure if they said how many TFLOPS/w.

SirPerro - Thursday, July 5, 2018 - link

TPUv3 was announced in May with 8x the performance of TPUv2 for a total of a 1 PF per pod

tuxRoller - Tuesday, July 3, 2018 - link

Since utilization is, apparently, an issue with these workloads, I'm interested in seeing how radically different architectures, such as tpu2+ and the just announced ibm ai accelerator (https://spectrum.ieee.org/tech-talk/semiconductors... which looks like a monster.

MDD1963 - Wednesday, July 4, 2018 - link

4 ordinary people will buy this....by mistake, thinking it is a gamer. :)

philehidiot - Wednesday, July 4, 2018 - link

"With DL researchers and academics successfully using CUDA to train neural network models faster, it was only a matter of time before NVIDIA released their cuDNN library of optimized deep learning primitives, of which there was ample precedent with the HPC-focused BLAS (Basic Linear Algebra Subroutines) and corresponding cuBLAS. So cuDNN abstracted away the need for researchers to create and optimize CUDA code for DL performance. As for AMD’s equivalent to cuDNN, MIOpen was only released last year under the ROCm umbrella, though currently is only publicly enabled in Caffe."

Whatever drugs you're on that allow this to make any sense, I need some. Being a layman, I was hoping maybe 1/5th of this might make sense. I'm going back to the porn. </headache>