The NVIDIA Titan V Deep Learning Deep Dive: It's All About The Tensor Cores

by Nate Oh on July 3, 2018 10:15 AM EST

DeepBench Inference: Convolutions

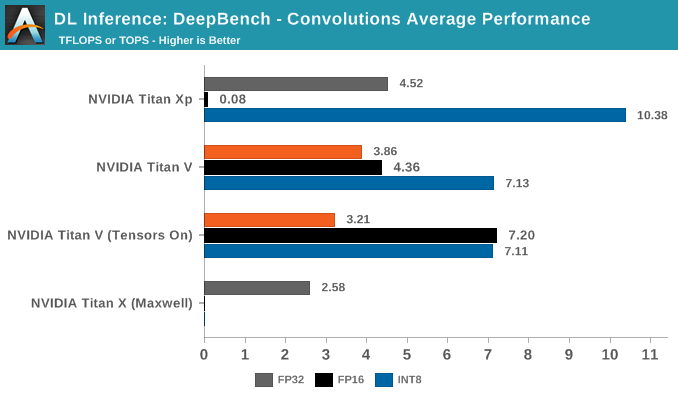

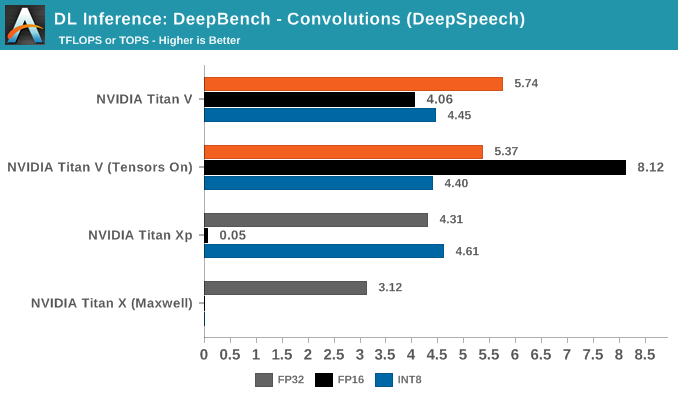

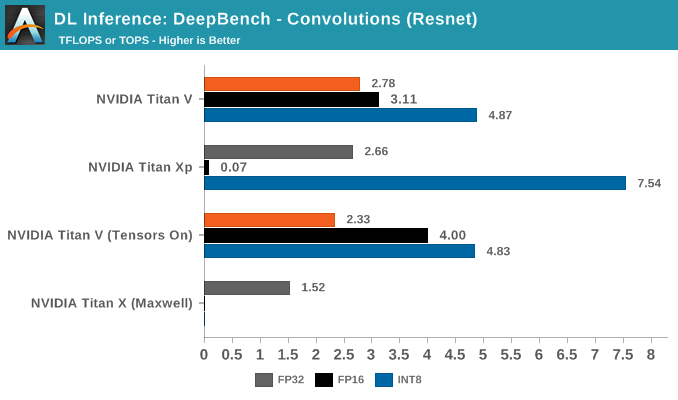

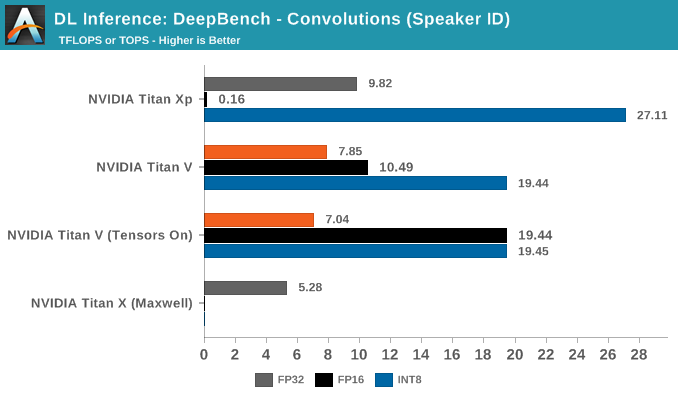

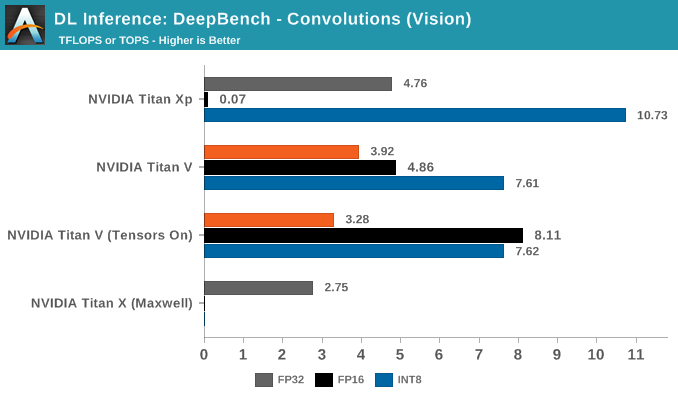

Moving on to convolutions, 8-bit multiply/32-bit accumulate again makes an appearance with INT8 inferencing.

The most striking aspect of the average convolution performance is the Titan Xp's superior INT8 throughput. The numbers are comparable to the published DeepBench Titan Xp inference results, so they appear to be correct. Nor is padding responsible for the disparity.

Breaking out the convolutions into application-specific workloads, we see that Resnet, Speaker ID, and Vision showcase Titan Xp's superior INT8 performance.

Nothing obvious stands out in the kernels, but if anything, this is likely due to the greater maturity of DP4A library/driver support on Pascal compared to its Volta implementation. There's also the chance that Volta is handling it solely through its INT cores.
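For readers unfamiliar with the term, the "8-bit multiply/32-bit accumulate" operation behind DP4A can be sketched in a few lines. This is a plain Python emulation of the arithmetic only, not NVIDIA's implementation: the function names (`to_int8`, `to_int32`, `dp4a`, `int8_dot`) are hypothetical, the operands are passed as lists rather than as the packed 32-bit words the CUDA `__dp4a` intrinsic takes, and the wrap-around of the accumulator is an assumption about ordinary 32-bit integer behavior.

```python
def to_int8(x):
    """Wrap an integer into the signed 8-bit range [-128, 127]."""
    x &= 0xFF
    return x - 256 if x >= 128 else x


def to_int32(x):
    """Wrap an integer into the signed 32-bit range (assumed accumulator width)."""
    x &= 0xFFFFFFFF
    return x - (1 << 32) if x >= (1 << 31) else x


def dp4a(a, b, acc):
    """Emulate one 4-element dot product with accumulate.

    a, b: sequences of four 8-bit signed values.
    acc:  32-bit signed accumulator.
    Returns acc + a[0]*b[0] + a[1]*b[1] + a[2]*b[2] + a[3]*b[3].
    """
    if len(a) != 4 or len(b) != 4:
        raise ValueError("dp4a operates on exactly four 8-bit lanes")
    total = acc
    for x, y in zip(a, b):
        # Each product of two int8 values can need up to 16 bits,
        # which is why the sum is kept in a wider 32-bit accumulator.
        total += to_int8(x) * to_int8(y)
    return to_int32(total)


def int8_dot(a, b):
    """Dot product of two int8 vectors by chaining dp4a over groups of four."""
    if len(a) != len(b) or len(a) % 4 != 0:
        raise ValueError("vectors must have equal length, a multiple of 4")
    acc = 0
    for i in range(0, len(a), 4):
        acc = dp4a(a[i:i + 4], b[i:i + 4], acc)
    return acc
```

The wider accumulator is the point of the design: four products of values near ±127 already sum to well beyond what 8 or even 16 bits can hold, so an INT8 convolution keeps its running sum in 32 bits and only the inputs are stored at low precision.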

65 Comments


krazyfrog - Saturday, July 7, 2018

I don't think so. https://www.anandtech.com/show/12170/nvidia-titan-...

mode_13h - Saturday, July 7, 2018

Yeah, I mean why else do you think they built the DGX Station? https://www.nvidia.com/en-us/data-center/dgx-stati...

They claim "AI", but I'm sure it was just an excuse they told their investors.

keg504 - Tuesday, July 3, 2018

"With Volta, there has little detail of anything other than GV100 exists..." (First page) What is this sentence supposed to be saying?

Nate Oh - Tuesday, July 3, 2018

Apologies, was a brain fart :) I've reworked the sentence, but the gist is: GV100 is the only Volta silicon that we know of (outside of an upcoming Drive iGPU).

junky77 - Tuesday, July 3, 2018

Thanks. Any thoughts about Google TPUv2 in comparison?

mode_13h - Tuesday, July 3, 2018

TPUv2 is only 45 TFLOPS/chip. They initially grabbed a lot of attention with a 180 TFLOPS figure, but that turned out to be per-board. I'm not sure if they said how many TFLOPS/W.

SirPerro - Thursday, July 5, 2018

TPUv3 was announced in May with 8x the performance of TPUv2 for a total of 1 PF per pod

tuxRoller - Tuesday, July 3, 2018

Since utilization is, apparently, an issue with these workloads, I'm interested in seeing how radically different architectures, such as TPUv2+ and the just-announced IBM AI accelerator (https://spectrum.ieee.org/tech-talk/semiconductors...), which looks like a monster, perform.

MDD1963 - Wednesday, July 4, 2018

4 ordinary people will buy this....by mistake, thinking it is a gamer. :)

philehidiot - Wednesday, July 4, 2018

"With DL researchers and academics successfully using CUDA to train neural network models faster, it was only a matter of time before NVIDIA released their cuDNN library of optimized deep learning primitives, of which there was ample precedent with the HPC-focused BLAS (Basic Linear Algebra Subroutines) and corresponding cuBLAS. So cuDNN abstracted away the need for researchers to create and optimize CUDA code for DL performance. As for AMD’s equivalent to cuDNN, MIOpen was only released last year under the ROCm umbrella, though currently is only publicly enabled in Caffe."

Whatever drugs you're on that allow this to make any sense, I need some. Being a layman, I was hoping maybe 1/5th of this might make sense. I'm going back to the porn. </headache>