The NVIDIA Titan V Preview - Titanomachy: War of the Titans

by Ryan Smith & Nate Oh on December 20, 2017 11:30 AM EST

Power, Temperature, & Noise

Finally, let's talk about power, temperature, and noise. At a high level, the Titan V should not be substantially different from other high-end NVIDIA cards. It has the same 250W TDP, and the cooler is nearly identical to NVIDIA’s other vapor chamber cooler designs. In short, NVIDIA has carved out a specific niche on power consumption that the Titan V should fall nicely into.

Unfortunately, no utilities seem to be reporting the voltage or HBM temperature of Titan V at this time. These would be of particular interest, considering that Volta is fabbed on TSMC's bespoke 12FFN process as opposed to 16nm FinFET. This also marks the first time NVIDIA's HBM2 has been put to gaming use, where HBM temperatures or voltages could be illuminating.

NVIDIA Titan V and Xp Average Clockspeeds

| | NVIDIA Titan V | NVIDIA Titan Xp | Percent Difference |
|---|---|---|---|
| Idle | 135MHz | 139MHz | - |
| Boost Clocks | 1455MHz | 1582MHz | -8.0% |
| Max Observed Boost | 1785MHz | 1911MHz | -6.6% |
| LuxMark Max Boost | 1355MHz | 1911MHz | -29.0% |
| Battlefield 1 | 1651MHz | 1767MHz | -6.6% |
| Ashes: Escalation | 1563MHz | 1724MHz | -9.3% |
| DOOM | 1561MHz | 1751MHz | -10.9% |
| Ghost Recon | 1699MHz | 1808MHz | -6.0% |
| Deus Ex (DX11) | 1576MHz | 1785MHz | -11.7% |
| GTA V | 1674MHz | 1805MHz | -7.3% |
| Total War (DX11) | 1621MHz | 1759MHz | -7.8% |
| FurMark | 1200MHz | 1404MHz | -14.5% |

Interestingly, LuxMark only brings Titan V to 1355MHz instead of its maximum boost clock, a behavior that differs from every other card we've benched in recent memory. Other compute and gaming tasks do bring the clocks higher, with a reported peak of 1785MHz.

The other takeaway is that Titan V is consistently outclocked by Titan Xp. In terms of gaming, Volta's performance gains do not seem to be coming from clockspeed improvements, unlike the bulk of Pascal's performance improvement over Maxwell.
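As a quick check on the "consistently outclocked" observation, the percent-difference column can be recomputed from the two clock columns. This is a minimal sketch, assuming the column uses the Titan Xp as the baseline, which agrees with the published figures to within about a tenth of a percent; the names `GAME_CLOCKS` and `percent_difference` are illustrative only.

```python
# Average game clocks in MHz, copied from the table above: (Titan V, Titan Xp).
GAME_CLOCKS = {
    "Battlefield 1": (1651, 1767),
    "Ashes: Escalation": (1563, 1724),
    "DOOM": (1561, 1751),
    "Ghost Recon": (1699, 1808),
    "Deus Ex (DX11)": (1576, 1785),
    "GTA V": (1674, 1805),
    "Total War (DX11)": (1621, 1759),
}


def percent_difference(titan_v_mhz, titan_xp_mhz):
    """Titan V clock relative to Titan Xp, as a signed percentage.

    Assumption: the Titan Xp is the baseline (denominator).
    """
    return (titan_v_mhz - titan_xp_mhz) / titan_xp_mhz * 100.0


# Per-game clock deficit and the unweighted mean across the seven games.
deficits = {
    game: percent_difference(v, xp) for game, (v, xp) in GAME_CLOCKS.items()
}
mean_deficit = sum(deficits.values()) / len(deficits)

for game, pct in deficits.items():
    print(f"{game:<18} {pct:+.1f}%")
print(f"{'Mean (7 games)':<18} {mean_deficit:+.1f}%")
```

Every game lands in negative territory, with an unweighted mean deficit of roughly 8 to 9%, so any gaming gains over Titan Xp have to come from somewhere other than clockspeed.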

Meanwhile, it's worth noting that the HBM2 memory on the Titan V has only one observed clock state: 850MHz. This never deviates, even during FurMark or extended compute or gameplay sessions. By comparison, the other consumer/prosumer graphics cards with HBM2, AMD's Vega cards, downclock their HBM2 in high-temperature situations like FurMark, and also feature a low-power 167MHz idle state.

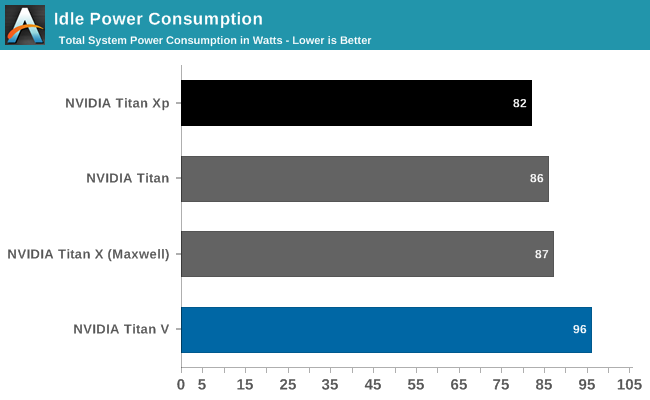

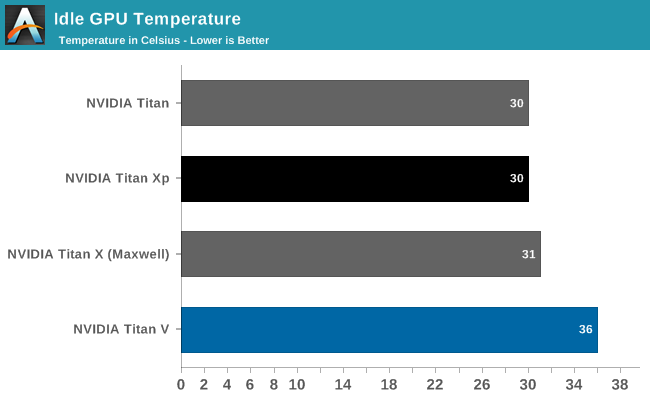

Measured at the wall, Titan V's high idle and lower load power readings jump out.

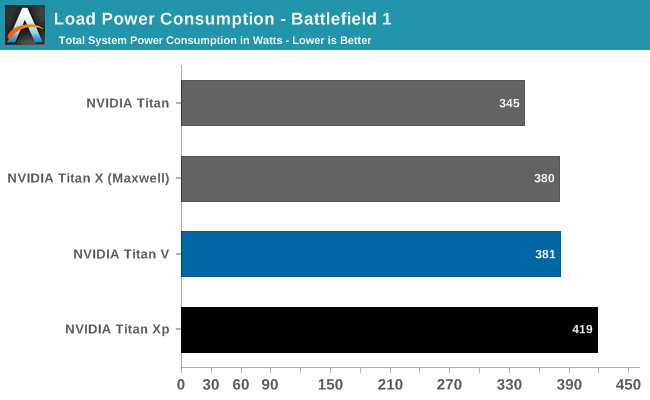

Meanwhile, under load, the Titan V's power consumption at the wall is slightly but consistently lower than the Titan Xp's. This is despite the fact that both cards have the same TDP, and NVIDIA's figures tend to be pretty consistent here since Maxwell implemented better power management.

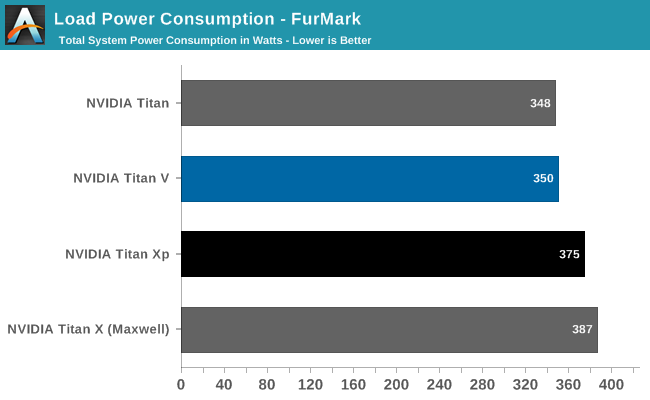

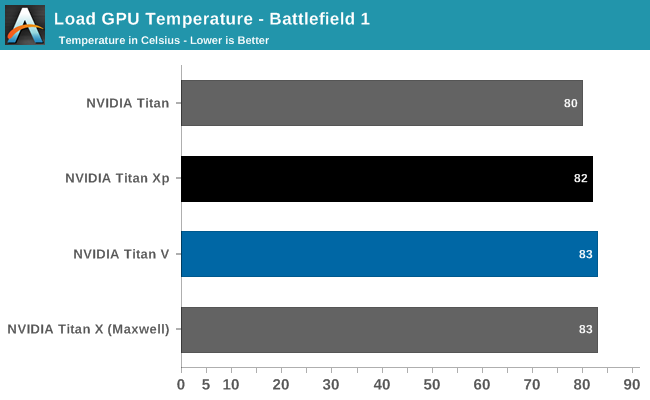

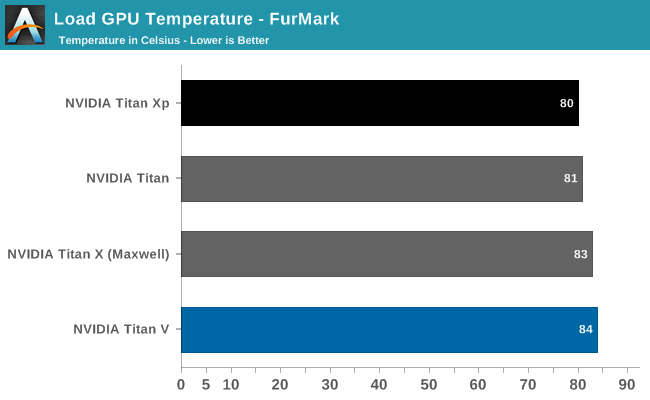

During the course of benchmarking, GPU-Z reported a significant amount of thermal throttling on the Titan V. That continued in Battlefield 1, where the card oscillated between being capped by GPU underutilization and by temperature. In FurMark, the Titan V was consistently temperature-limited.

Without HBM2 voltages, it is hard to say if the constant 850MHz clocks are related to Titan V's higher idle system draw. At 815mm², the die is quite large, but then again elements like Volta's tensor cores are not being utilized in gaming. In Battlefield 1, system power draw is actually lower than with Titan Xp, but GPU-Z would suggest that thermal limits are the cause. Typically, what we've seen with other NVIDIA 250W TDP cards is that they hit their TDP limits more often than they hit their temperature limits, so this is an unusual development.
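For readers who want to log this kind of behavior themselves, here is a minimal sketch that polls `nvidia-smi` rather than GPU-Z (which is what was used for the observations above). It assumes the driver exposes the query fields listed in `FIELDS`, as shown by `nvidia-smi --help-query-gpu`; availability varies by driver version and card, and whether Titan V reports each one is not confirmed here. The helper names are hypothetical.

```python
import subprocess
import time

# Query fields as listed by `nvidia-smi --help-query-gpu`. Assumption: the
# installed driver supports all of them for the card being polled.
FIELDS = [
    "clocks.gr",                                    # graphics clock, MHz
    "clocks.mem",                                   # memory clock, MHz
    "temperature.gpu",                              # core temperature, C
    "power.draw",                                   # board power, W
    "clocks_throttle_reasons.sw_thermal_slowdown",  # "Active" / "Not Active"
    "clocks_throttle_reasons.sw_power_cap",         # "Active" / "Not Active"
]


def parse_sample(line):
    """Turn one CSV line of nvidia-smi output into a dict keyed by field."""
    values = [v.strip() for v in line.split(",")]
    if len(values) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} values, got {len(values)}")
    return dict(zip(FIELDS, values))


def read_sample(gpu_index=0):
    """Query the GPU once and return the parsed sample."""
    result = subprocess.run(
        [
            "nvidia-smi",
            f"--id={gpu_index}",
            "--query-gpu=" + ",".join(FIELDS),
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_sample(result.stdout.strip().splitlines()[0])


def poll(count, interval_s=1.0, gpu_index=0):
    """Collect `count` samples, sleeping `interval_s` seconds between them."""
    samples = []
    for _ in range(count):
        samples.append(read_sample(gpu_index))
        time.sleep(interval_s)
    return samples


def distinct_memory_clocks(samples):
    """Sorted list of every memory clock value seen in the samples."""
    return sorted({s["clocks.mem"] for s in samples})


def thermal_limited_share(samples):
    """Fraction of samples in which the thermal slowdown flag was active."""
    key = "clocks_throttle_reasons.sw_thermal_slowdown"
    active = sum(1 for s in samples if s[key] == "Active")
    return active / len(samples)
```

Running `poll(60)` during a benchmark pass and feeding the result to `distinct_memory_clocks` would show whether the memory clock ever leaves a single state, while `thermal_limited_share` compared against the same ratio for the power-cap flag gives a rough sense of which limit the card hits more often.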

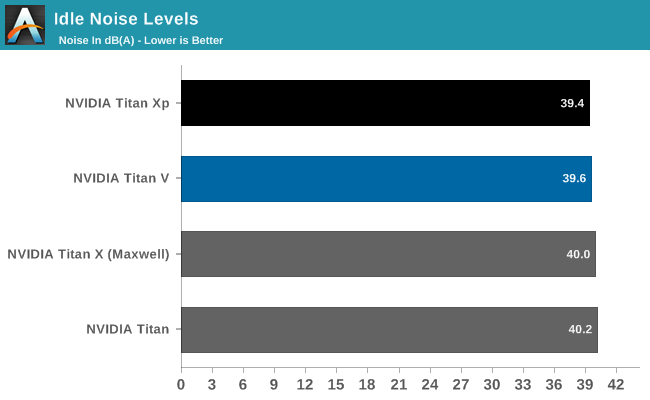

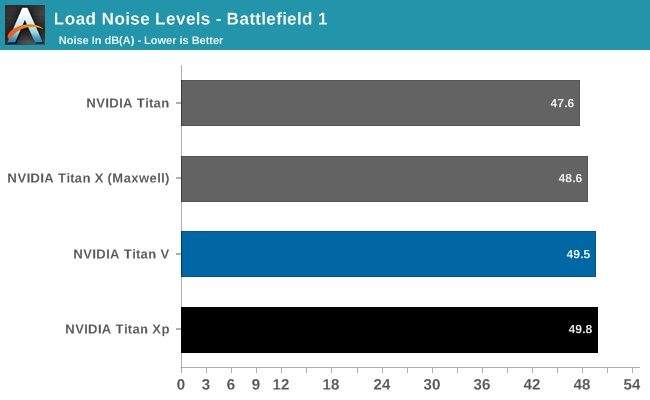

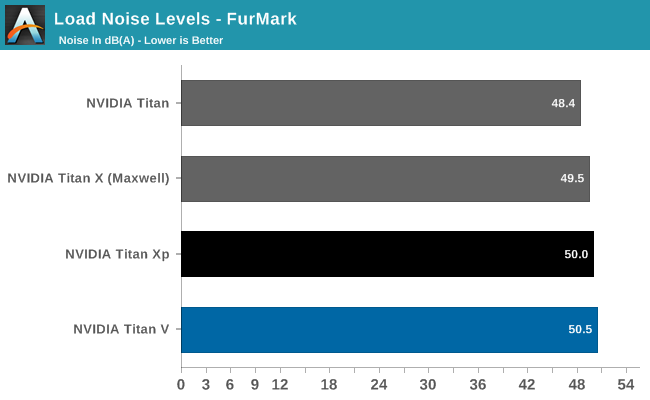

Featuring an improved cooler, Titan V essentially manages the same noise metrics as its Titan siblings.

111 Comments

Ryan Smith - Wednesday, December 20, 2017

The "But can it run Crysis" joke started with the original Crysis in 2007. So it was only appropriate that we use it for that test. Especially since it let us do something silly like running 4x supersample anti-aliasing.

crysis3? - Wednesday, December 20, 2017

ah

SirPerro - Wednesday, December 20, 2017

They make it pretty clear everywhere this card is meant for ML training.

It's the only scenario where it makes sense financially.

Gaming is a big NO at 3K dollars per card. Mining is a big NO with all the cheaper specific chips for the task.

On ML it may mean halving or cutting by 4 the training time on a workstation, and if you have it running 24/7 for hyperparameter tuning it pays itself compared to the accumulated costs of Amazon or Google cloud machines.

An SLI of titans and you train huge models under a day in a local machine. That's a great thing to have.

mode_13h - Wednesday, December 27, 2017

The FP64 performance indicates it's also aimed at HPC. One has to wonder how much better it could be at each, if it didn't also have to do the other.

And for multi-GPU, you really want NVLink - not SLI.

takeshi7 - Wednesday, December 20, 2017

Will game developers be able to use these tensor cores to make the AI in their games smarter? That would be cool if AI shifted from the CPU to the GPU.

DanNeely - Wednesday, December 20, 2017

First and foremost, that depends if mainstream Volta cards get tensor cores.

Beyond that I'm not sure how much it'd help there directly, AFAIK what Google/etc are doing with machine learning and neural networks is very different from typical game AI.

tipoo - Wednesday, December 20, 2017

They're more for training the neural nets than actually executing a game's AI routine.

hahmed330 - Wednesday, December 20, 2017

Finally a card that can properly nail Crysis!

crysis3? - Wednesday, December 20, 2017

closer to 55fps if it were crysis 3 maxed out

crysis3? - Wednesday, December 20, 2017

because he benchmarked the first crysis