The NVIDIA Titan V Preview - Titanomachy: War of the Titans

by Ryan Smith & Nate Oh on December 20, 2017 11:30 AM EST

Gaming Performance

Sure, compute is useful. But be honest: you came here for the 4K gaming benchmarks, right?

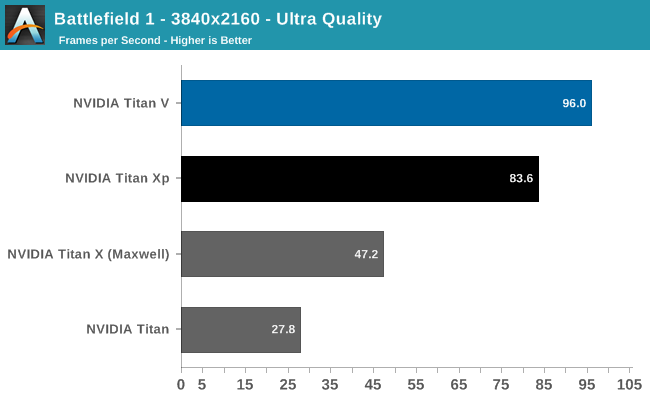

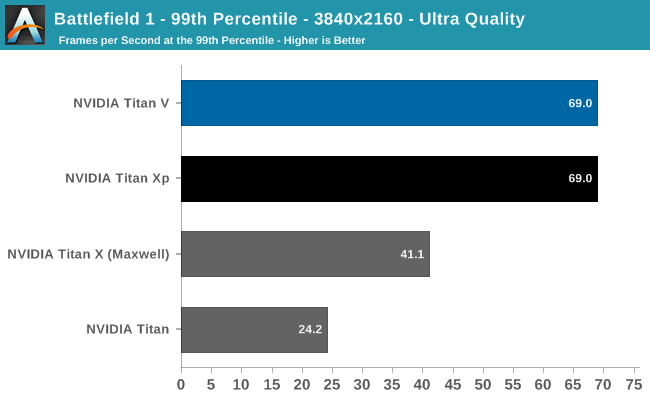

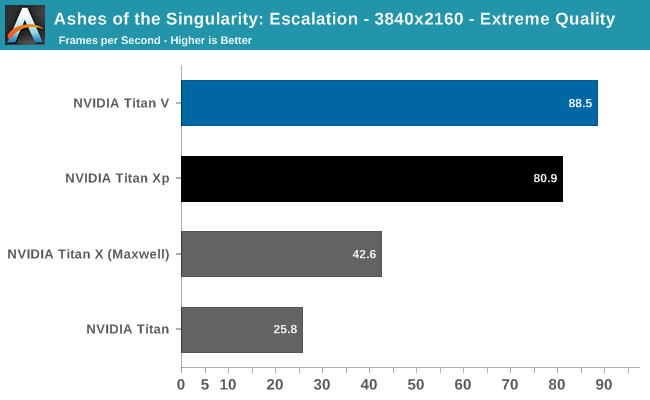

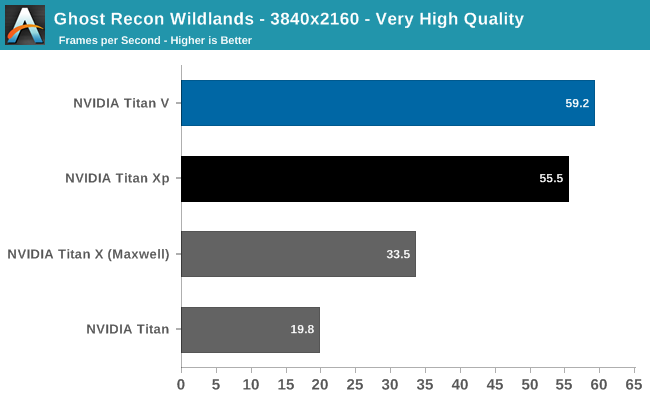

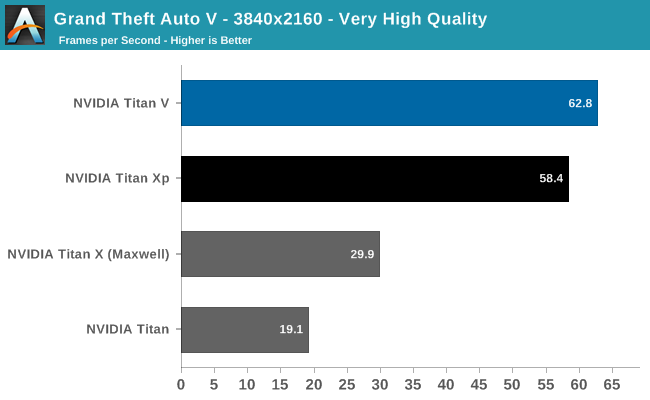

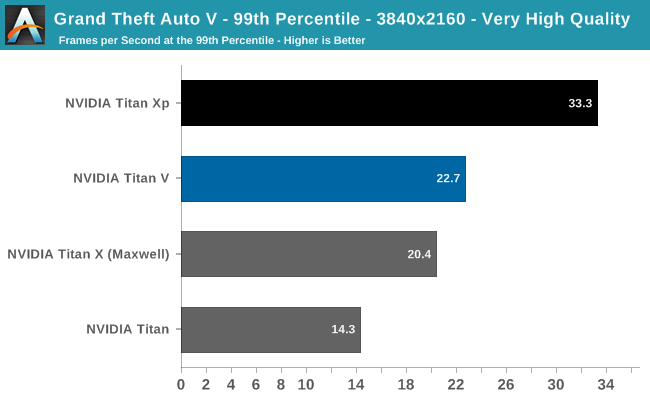

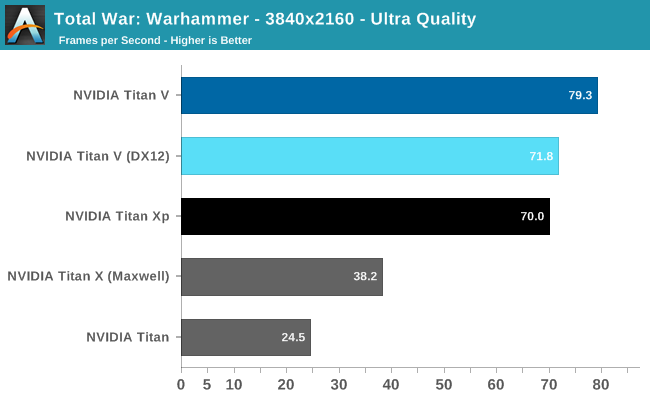

Even after just Battlefield 1 (DX11) and Ashes (DX12), we can see that Titan V is not a monster gaming card, though it is still faster than Titan Xp. This is not unexpected, as Titan V's focus is much further from gaming than that of the previous Titan cards.

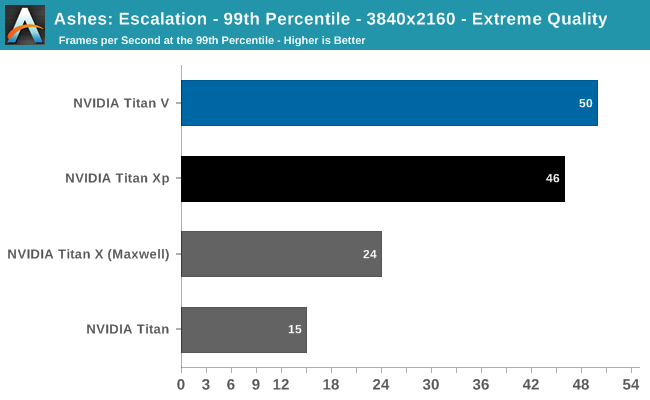

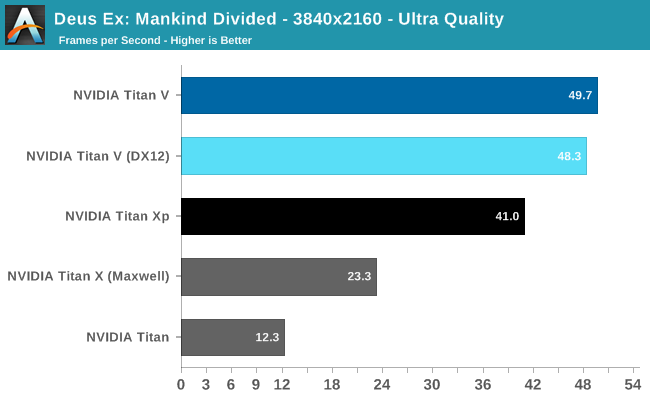

Despite being generally ahead of Titan Xp, it's clear Titan V is suffering from a lack of gaming optimization. And for that matter, the launch drivers definitely have bugs in them as far as gaming is concerned. Titan V on Deus Ex produced small black box artifacts during the benchmark, Ghost Recon Wildlands experienced sporadic but persistent hitching, and Ashes occasionally suffered from fullscreen flickering.

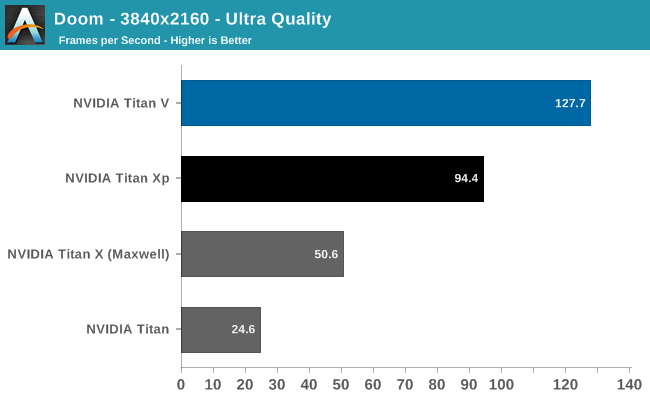

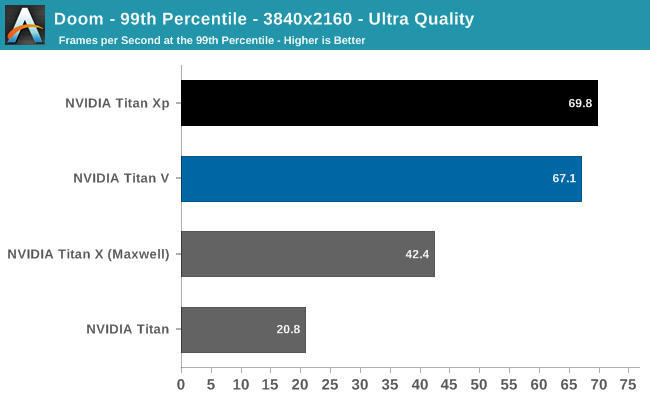

And despite the impressive three-digit average framerate in the Vulkan-powered DOOM, the card actually falls behind Titan Xp in 99th percentile framerates. With average framerates this high, even a 67fps 99th percentile can reduce perceived smoothness. Meanwhile, running Titan V under DX12 for Deus Ex and Total War: Warhammer resulted in lower performance. But with immature gaming drivers, it is too early to say whether these results are representative of low-level API performance on Volta itself.
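To make the gap between average and 99th percentile framerates concrete, here is a minimal Python sketch. The frame times are hypothetical, not measurements from this review, and the nearest-rank percentile method is an assumption rather than a description of how the charts above were produced.

```python
import math


def fps_summary(frame_times_ms, percentile=99.0):
    """Return (average_fps, percentile_fps) from per-frame render times in ms.

    percentile_fps is the framerate implied by the frame time at the given
    percentile (nearest-rank method), i.e. the pace of the slowest ~1% of frames.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS: frames rendered divided by total elapsed time in seconds.
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds

    # Nearest-rank percentile: sort ascending, take the frame time at that rank.
    ordered = sorted(frame_times_ms)
    rank = max(1, math.ceil(percentile * len(ordered) / 100.0))
    slow_frame_ms = ordered[rank - 1]
    percentile_fps = 1000.0 / slow_frame_ms

    return average_fps, percentile_fps


# Hypothetical run: 98 frames at 5 ms (200 fps pace), 2 frames at 15 ms.
frame_times = [5.0] * 98 + [15.0] * 2
avg_fps, p99_fps = fps_summary(frame_times)
print(f"average: {avg_fps:.1f} fps, 99th percentile: {p99_fps:.1f} fps")
```

In this made-up run, two slow frames out of a hundred barely move the average but set the 99th percentile figure on their own, which is why a card can post a very high average and still feel less smooth.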

Overall, the Titan V averages out to around 15% faster than the Titan Xp, excluding 99th percentiles, but with the aforementioned caveats. Titan V's high average framerates in DOOM and Deus Ex are somewhat marred by stagnant 99th percentiles and minor but noticeable artifacting, respectively.

So as a pure gaming card, our preview results indicate that this would not be the best gaming purchase at $3000. Typically, a $1800 premium for around 10-20% faster gaming over the Titan Xp wouldn't be enticing, but it seems there are always some who insist.
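As a rough illustration of that value argument, the short Python sketch below works through the arithmetic using only the figures quoted above: the $3000 price, the $1800 premium (which implies a $1200 Titan Xp), and a 10-20% speedup. The function names are hypothetical, and treating "performance" as a single relative number is a simplification.

```python
def cost_per_performance(price_usd, relative_performance):
    """Dollars paid per unit of relative gaming performance."""
    if relative_performance <= 0:
        raise ValueError("relative performance must be positive")
    return price_usd / relative_performance


TITAN_V_PRICE = 3000                      # from the text
PREMIUM = 1800                            # from the text
TITAN_XP_PRICE = TITAN_V_PRICE - PREMIUM  # implied by the two figures above


def compare(speedup):
    """Return (cost ratio vs Titan Xp, premium dollars per percentage point)."""
    xp_cost = cost_per_performance(TITAN_XP_PRICE, 1.0)
    v_cost = cost_per_performance(TITAN_V_PRICE, 1.0 + speedup)
    return v_cost / xp_cost, PREMIUM / (speedup * 100.0)


for speedup in (0.10, 0.15, 0.20):
    ratio, per_point = compare(speedup)
    print(f"{speedup:.0%} faster: {ratio:.2f}x the cost per unit of performance, "
          f"${per_point:.0f} per percentage point gained")
```

Under these assumptions, even at the optimistic end of the range the Titan V costs roughly twice as much per unit of gaming performance as the Titan Xp.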

111 Comments

CiccioB - Thursday, December 21, 2017

Absolute boost frequency is meaningless as we have already seen that those values are not respected anywhere with the use of boost 3.0. You can be higher or lower.

What is important is the power draw and the temperatures. These are the limiting factors to reach and sustain the boost frequency and beyond.

With a 800+mm^2 die and 21 billions of transistor you may expect that the consumption is not that low as for a 14 billion die, and the frequencies cannot be sustained that much.

What is promising is that if these are the power drain of such a monster chip, the consumer grade chips made on the same PP will really be fresh and that the frequencies can be pushed really high to suck all the thermal and power drain gap.

Just imagine a Volta/Ampere GP104 consuming 300+W, same as the new Vega GPU based custom cards.

#poorVega

croc - Wednesday, December 20, 2017

I can't take a titan-as-compute seriously if its double precision is disabled. That, to me, makes it only aimed at graphics. Yet this whole article is expressing the whole titan family as 'compute machines'...

MrSpadge - Thursday, December 21, 2017

It has all compute capability enabled: FP16, FP32, FP64 and the tensor cores. The double speed FP16 is just not (yet?) exposed to graphics applications.

CiccioB - Thursday, December 21, 2017

In fact this one has 1/2 FP64 computing capacity with respect to FP32.

At least read the first chapter of the review before commenting.

mode_13h - Wednesday, December 27, 2017

The original Titan/Black/Z and this Titan V have uncrippled fp64. It's only the middle two generations - Titan X and Titan Xp - that use consumer GPUs with a fraction of the fp64 units.

Zoolook13 - Thursday, December 21, 2017

The figure for tensor operations seems fishy, it's not based on tf.complex128 I guess, more probably tf.uint8 or tf.int8, and then it's no longer FLOPS, maybe TOPS?

I hope you take a look at that when you flesh out the tensorflow part.

If they can do 110 TFLOPS of tf.float16, then it's very impressive but I doubt that.

Ryan Smith - Thursday, December 21, 2017

It's float 16. Specifically, CUDA_R_16F.

http://docs.nvidia.com/cuda/cublas/index.html#cubl...

CheapSushi - Thursday, December 21, 2017

Would be amazing if tensor core support was incorporated into game AI and also OS AI assistants, like Cortana.

edzieba - Thursday, December 21, 2017

"Each SM is, in turn, contains 64 FP32 CUDA cores, 64 INT32 CUDA cores, 32 FP64 CUDA cores, 8 tensor cores, and a significant quantity of cache at various levels."

IIRC, the '64 FP32 cores' and '32 FP64 cores' are one and the same: the FP64 cores can operate as a pair of FP32 cores (same as GP100 can do two FP16 operations with the FP32 cores).

Ryan Smith - Thursday, December 21, 2017

According to NVIDIA, the FP64 CUDA cores are distinct silicon. They are not the FP32 cores.