Intel’s Enterprise Extravaganza 2019: Launching Cascade Lake, Optane DCPMM, Agilex FPGAs, 100G Ethernet, and Xeon D-1600

by Ian Cutress on April 2, 2019 1:03 PM EST

Today is the big day in 2019 for Intel’s Enterprise product announcements, combining some products that should be available from today and a few others set to be available in the next few months. Rather than go for a staggered approach, we have it all in one: processors, accelerators, networking, and edge compute. Here’s a quick run-down of what’s happening today, along with links to all of our deeper dive articles, our reviews, and announcement analysis.

Cascade Lake: Intel’s New Server and Enterprise CPU

The headliner for this festival is Intel’s new second-generation Xeon Scalable processor, Cascade Lake. This is the processor that Intel will promote heavily across its enterprise portfolio, especially as OEMs such as Dell, HP, Lenovo, Supermicro, QCT, and others all update their product lines with the new hardware. (You can read some of the announcements here: Dell on AT, Supermicro on AT, Lenovo on AT, Lenovo at Lenovo.)

While these new CPUs do not use a new microarchitecture compared to the first generation Skylake-based Xeon Scalable processors, Intel surprised most of the press at its Tech Day with the sheer number of improvements in other areas of Cascade Lake. Not only are there more hardware mitigations against Spectre and Meltdown than we expected, but there is also Optane DC Persistent Memory support. The high-volume processors get a performance boost from up to 25% extra cores, and every processor gets double the memory support (and faster memory, too). The latest manufacturing technologies allow for frequency improvements, which, when combined with new AVX-512 modes, show some drastic increases in machine learning performance for those that can use them.
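To give a feel for what the new AVX-512 VNNI INT8 mode does, here is a minimal sketch in Python that models a single 32-bit lane of a fused multiply-accumulate over four byte pairs. The function name is our own, and the non-saturating wraparound is modeled on the VPDPBUSD instruction as we understand it; treat it as an illustration rather than a description of Intel's implementation.

```python
def dot_accumulate_lane(acc, a_u8, b_s8):
    """Model one 32-bit lane: acc + sum of four (unsigned byte * signed byte) products.

    Hypothetical helper for illustration only; real code would use compiler
    intrinsics or a library that emits the VNNI instructions.
    """
    if len(a_u8) != 4 or len(b_s8) != 4:
        raise ValueError("each lane consumes exactly four byte pairs")
    if any(not 0 <= a <= 255 for a in a_u8):
        raise ValueError("first operand must hold unsigned 8-bit values")
    if any(not -128 <= b <= 127 for b in b_s8):
        raise ValueError("second operand must hold signed 8-bit values")

    total = acc + sum(a * b for a, b in zip(a_u8, b_s8))

    # Wrap to a signed 32-bit result, as a non-saturating accumulate would.
    total &= 0xFFFFFFFF
    return total - (1 << 32) if total >= (1 << 31) else total


if __name__ == "__main__":
    # 10 + (1*1) + (2*-1) + (3*2) + (4*-2) = 7
    print(dot_accumulate_lane(10, [1, 2, 3, 4], [1, -1, 2, -2]))
```

The appeal for inference workloads is that the multiplies and the accumulate collapse into one step per lane, which is where the claimed machine learning gains for INT8 would come from.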

Intel Xeon Scalable

| AnandTech | 2nd Gen Cascade Lake | 1st Gen Skylake-SP |
| --- | --- | --- |
| Released | April 2019 | July 2017 |
| Cores | [8200] Up to 28; [9200] Up to 56 | [8100] Up to 28 |
| Cache | 1 MB L2 per core; Up to 38.5 MB Shared L3 | 1 MB L2 per core; Up to 38.5 MB Shared L3 |
| PCIe 3.0 | Up to 48 Lanes | Up to 48 Lanes |
| DRAM Support | Six Channels; Up to DDR4-2933; 1.5 TB Standard | Six Channels; Up to DDR4-2666; 768 GB Standard |
| Optane Support | Up to 4.5 TB Per Processor | - |
| Vector Compute | AVX-512 VNNI with INT8 | AVX-512 |
| Spectre/Meltdown Fixes | Variant 2, 3, 3a, 4, and L1TF | - |
| TDP | [8200] Up to 205 W; [9200] Up to 400 W | Up to 205 W |

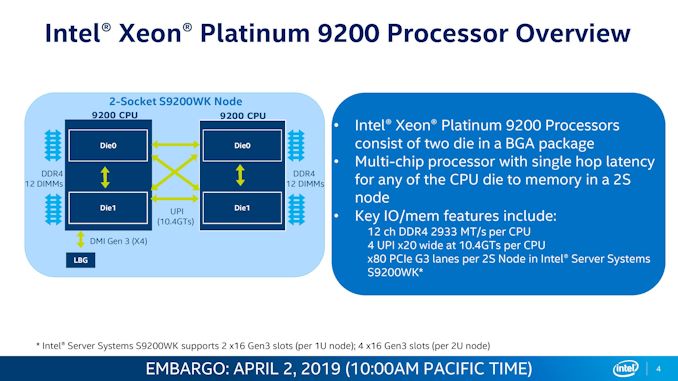

New to the Xeon Scalable family is the AP line of processors. Intel hinted at these late last year, but we finally got some of the details. The new Xeon Platinum 9200 family of parts combines two 28-core bits of silicon into a single package, offering up to 56 cores and 112 threads with 12 channels of memory, in a thermal envelope up to 400W. This is essentially a 2P configuration on a single chip, and is designed for high-density deployments. These BGA-only CPUs will only be sold with an underlying Intel-designed platform straight from OEMs, and will not have a direct price – customers will pay for ‘the solution’, rather than the product.

For this generation, Intel will not be producing models with ‘F’ Omnipath fabric on board. Instead, users will have some ‘M’ models with 2 TB memory support and ‘L’ models with 4.5 TB memory support, aimed at the Optane markets. There will also be other letter designations, some of them new:

- M = Medium Memory Support (2.0 TB)

- L = Large Memory Support (4.5 TB)

- Y = Speed Select Models (see below)

- N = Networking/NFV Specialized

- V = Virtual Machine Density Value Optimized

- T = Long Life Cycle / Thermal

- S = Search Optimized

Out of all of these, the Speed Select ‘Y’ models are the most interesting. These have additional power monitoring tools that allow applications to be pinned to certain cores that can boost higher than other cores – distributing the available power across the cores based on what needs to be prioritized. These parts also allow for three different OEM-specified base and turbo frequency settings, so that one system can be focused on three different types of workloads.

We are currently in the process of writing our main review, and plan to tackle the topic from several different angles in a number of stories. Stay tuned for that. We do have the SKU lists and our launch day news found here:

The Intel Second Generation Xeon Scalable: Cascade Lake, Now with Up To 56-Cores and Optane!

The other key element to the processors is the Optane support, discussed next.

Optane DCPMM: Data Center Persistent Memory Modules

If you’re confused about Optane, you are not the only one.

Broadly speaking, Intel has two different types of Optane: Optane Storage, and Optane DIMMs. The storage products have already been in the market for some time, both in consumer and enterprise, showing exceptional random access latency above and beyond anything NAND can provide, albeit for a price. For users that can amortize the cost, it makes for a great product for that market.

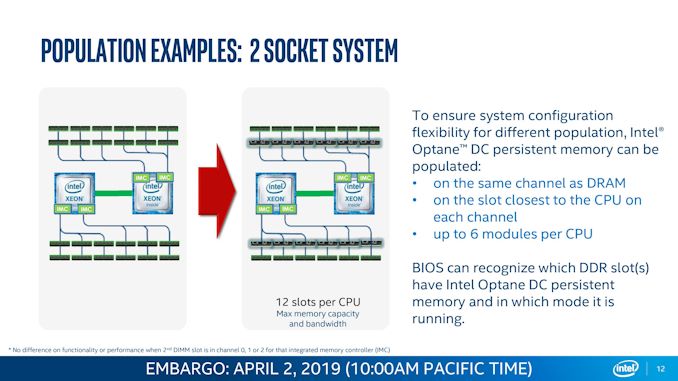

Optane in the memory module form factor actually works on the DDR4-T standard. The product is aimed at the Enterprise market, and while Intel has talked about ‘Optane DIMMs’ for a while, today is the ‘official launch’. Select customers are already testing and using it, while general availability is due in the next couple of months.

Me with a 128 GB module of Optane. Picture by Patrick Kennedy

Optane DC Persistent Memory, to give it its official title, comes in a DDR4 form factor and works with Cascade Lake processors to enable large amounts of memory in a single system – up to 6 TB in a dual socket platform. The Optane DCPMM is slightly slower than traditional DRAM, but allows for a much higher memory density per socket. Intel is set to offer three different sized modules: 128 GB, 256 GB, or 512 GB. Optane doesn’t replace DDR4 entirely – you need at least one module of standard DDR4 in the system to get it to work (it acts like a buffer), but it means customers can pair 128 GB of DDR4 with 512 GB of Optane for 640 GB total, rather than looking at 256 GB of pure DDR4 backed with NVMe.
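As a sanity check on the headline capacities, the arithmetic can be sketched in a few lines of Python. The slot layout here is our assumption, not something Intel spelled out in this announcement: six memory channels per socket, each populated with one DRAM DIMM and one Optane module. The helper name is hypothetical.

```python
# Assumption (ours): six channels per socket, one DRAM DIMM plus one
# Optane DCPMM per channel. Module sizes are the three Intel announced.
CHANNELS_PER_SOCKET = 6
DCPMM_SIZES_GB = (128, 256, 512)


def socket_capacity_gb(dram_dimm_gb, dcpmm_gb, channels=CHANNELS_PER_SOCKET):
    """Return DRAM, Optane, and combined capacity for one socket, in GB."""
    if dcpmm_gb not in DCPMM_SIZES_GB:
        raise ValueError(f"unsupported DCPMM size: {dcpmm_gb} GB")
    dram = dram_dimm_gb * channels
    optane = dcpmm_gb * channels
    return {"dram_gb": dram, "optane_gb": optane, "total_gb": dram + optane}


if __name__ == "__main__":
    top = socket_capacity_gb(dram_dimm_gb=256, dcpmm_gb=512)
    print(top["total_gb"] / 1024, "TB per socket")        # 4.5 TB per socket
    print(2 * top["optane_gb"] / 1024, "TB of Optane, dual socket")  # 6.0 TB
```

Under that layout, the 4.5 TB per-processor figure in the table and the 6 TB dual-socket Optane figure both fall out of the largest module sizes.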

With Optane DCPMM in a system, it can be used in two modes: Memory Mode and App Direct.

The first mode is the simplest to think about: as DRAM. The system will see the large DRAM allocation, but in reality it will use the Optane DCPMM as the main memory store and the DDR4 as a buffer to it. If the buffer contains the data needed straight away, it makes for a standard fast DRAM read/write, while if the data is in the Optane, access is slightly slower. How this is negotiated is between the DDR4 controller and the Optane DCPMM controller on the module, but this ultimately works great for large DRAM installations, rather than keeping everything in slower NVMe.
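One way to reason about Memory Mode is as a simple two-level hierarchy: a fraction of accesses hit the DDR4 buffer, and the rest fall through to Optane. The sketch below computes a weighted-average latency under that model. The latency figures are placeholders we picked for illustration, not measurements or Intel numbers, and the real controller behavior is more involved than a single hit rate.

```python
def effective_latency_ns(hit_rate, dram_ns, optane_ns):
    """Average access latency when DRAM fronts Optane as a buffer.

    hit_rate is the fraction of accesses served from the DDR4 buffer.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * dram_ns + (1.0 - hit_rate) * optane_ns


if __name__ == "__main__":
    # Placeholder latencies (assumptions, not measured values).
    DRAM_NS, OPTANE_NS = 80.0, 300.0
    for hit_rate in (1.0, 0.9, 0.5):
        avg = effective_latency_ns(hit_rate, DRAM_NS, OPTANE_NS)
        print(f"hit rate {hit_rate:.0%}: about {avg:.0f} ns")
```

The takeaway is that workloads with a hot working set that fits in the DDR4 buffer should see close to DRAM behavior, while workloads that stream across the whole address space will lean on Optane latency.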

The second mode is App Direct. In this instance, the Optane DCPMM acts like a big storage drive that is as fast as a RAM Disk. This disk, while not bootable, will keep the data stored on it between startups (an advantage of the memory being persistent), enabling very quick restarts to avoid serious downtime. App Direct mode is a little more esoteric than ‘just a big amount of DRAM’, as developers may have to re-architect their software stack in order to take advantage of the DRAM-like speeds this disk will enable. It’s essentially a big RAM Disk that holds its data. (ed: I’ll take two)
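To illustrate the programming model behind App Direct, here is a rough Python sketch that keeps a counter in a memory-mapped file and finds it intact on the next run. An ordinary temporary file stands in for persistent memory here; on real hardware we would expect the file to sit on a filesystem backed by the Optane modules, and production software would more likely use a dedicated persistent-memory library such as PMDK. The function name and file path are hypothetical.

```python
import mmap
import os
import struct
import tempfile

COUNTER = struct.Struct("<Q")  # one little-endian 64-bit counter


def bump_counter(path):
    """Increment a counter stored in a memory-mapped file and return it."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        # Size the backing file on first use; new bytes read back as zero.
        if os.fstat(fd).st_size < COUNTER.size:
            os.ftruncate(fd, COUNTER.size)
        with mmap.mmap(fd, COUNTER.size) as region:
            (value,) = COUNTER.unpack_from(region, 0)
            COUNTER.pack_into(region, 0, value + 1)  # plain store into the mapping
            region.flush()  # push the update to the backing store
            return value + 1
    finally:
        os.close(fd)


if __name__ == "__main__":
    # Stand-in path; a real deployment would point at persistent memory.
    path = os.path.join(tempfile.mkdtemp(), "counter.bin")
    print(bump_counter(path))  # 1
    print(bump_counter(path))  # 2, the state survived the first mapping
```

The point of the sketch is the shape of the code: the application reads and writes its data structure in place through loads and stores, rather than serializing it through a storage stack, which is why existing software may need re-architecting to benefit.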

One of the issues when Optane was first announced was whether it would support enough read/write cycles to act as DRAM, given that the same technology was also being used for storage. To alleviate fears, Intel is going to guarantee every Optane module for 3 years, even if that module is run at peak writes for the entire warranty period. Not only does this mean Intel is placing its faith and honor in its own product, it even convinced the very skeptical Charlie from SemiAccurate, who has been a long-time critic of the technology (mostly due to the lack of pre-launch information, but he seems satisfied for now).

Pricing for Intel’s Optane DCPMM is undisclosed at this point. The official line is that there is no specific MSRP for the different sized modules – it is likely to depend on which customers end up buying into the platform, how much, what level of support, and how Intel might interact with them to optimize the setup. We’re likely to see cloud providers offer instances backed with Optane DCPMM, and OEMs like Dell say they have systems planned for general availability in June. Dell stated that they expect users who can take advantage of the large memory mode to start using it first, with those who might be able to accelerate a workflow with App Direct mode taking some time to rewrite their software.

It should be noted that not all of Intel's second generation Xeon Scalable CPUs support Optane. Only the Xeon Platinum 8200 family, Xeon Gold 6200 family, Xeon Gold 5200 family, and the Xeon Silver 4215 do. The Xeon Platinum 9200 family does not.

Intel has given us remote access into a couple of systems with Optane DCPMM installed. We’re still going through the process of finding the best way to benchmark the hardware, so stay tuned for that.

Intel Agilex: The New Breed of Intel FPGA

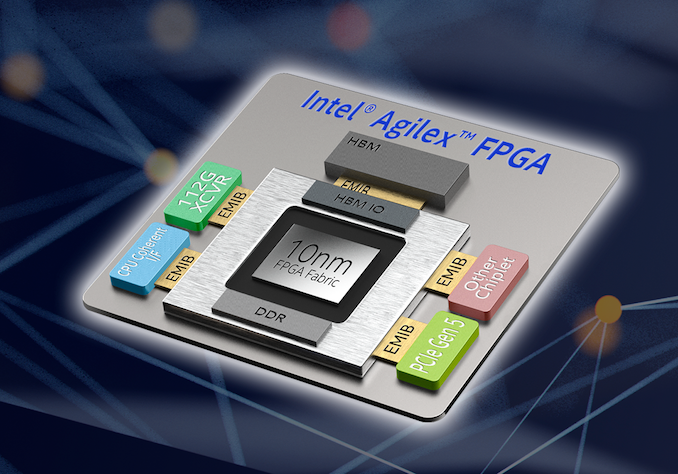

The acquisition of Altera a couple of years ago was big news for Intel. The idea was to introduce FPGAs into Intel’s product family and eventually realize a number of synergies between the two, integrating the portfolio while also aiming to take advantage of Intel’s manufacturing facilities and corporate sales channels. Despite that happening in 2015, every product since was developed prior to that acquisition, prior to the integration of the two companies – until today. The new Agilex family of FPGAs is the first developed and produced wholly under the Intel name.

The announcement for Agilex is today; however, the first 10nm samples will be available in Q3. The role of the FPGA has been evolving of late, from offering general purpose spatial compute hardware to offering hardened accelerators and enabling new technologies. With Agilex, Intel aims to offer that mix of acceleration and configuration, not only with the core array of gates, but also by virtue of additional chiplet extensions enabled through Intel’s Embedded Multi-Die Interconnect Bridge (EMIB) technology. These chiplets can be custom third-party IP, PCIe 5.0, HBM, 112G transceivers, or even Intel’s new Compute eXpress Link cache coherent architecture. Intel is promoting up to 40 TFLOPs of DSP performance, and is promoting its use in mixed precision machine learning, with hardened support for bfloat16 and INT2 to INT8.
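For readers unfamiliar with bfloat16, the format keeps the sign bit and all eight exponent bits of a 32-bit float but only the top seven mantissa bits, so a value can be converted by keeping the upper 16 bits. The Python sketch below shows that conversion using plain truncation; that rounding choice is our simplification (hardware commonly rounds to nearest), and the function names are our own.

```python
import struct


def float32_to_bfloat16_bits(x):
    """Return the 16-bit bfloat16 pattern for x, using simple truncation."""
    (bits,) = struct.unpack("<I", struct.pack("<f", x))
    return bits >> 16  # keep sign, 8 exponent bits, top 7 mantissa bits


def bfloat16_bits_to_float(bits16):
    """Expand a bfloat16 bit pattern back to a Python float."""
    (value,) = struct.unpack("<f", struct.pack("<I", (bits16 & 0xFFFF) << 16))
    return value


if __name__ == "__main__":
    bits = float32_to_bfloat16_bits(3.14159265)
    print(hex(bits), bfloat16_bits_to_float(bits))  # 0x4049 3.140625
```

The range matches a 32-bit float while the precision drops to roughly two or three decimal digits, which is the trade-off that makes the format attractive for machine learning and explains why throughput figures quoted in bfloat16 are not directly comparable to 32-bit floating point numbers.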

Intel will be launching Agilex in three product families: F, I, and M, in that order of both time and complexity. The Intel Quartus Prime software to program these devices will be updated for support during April, but the first F models will be available in Q3.

Columbiaville: Going for 100GbE with Intel 800-Series Controllers

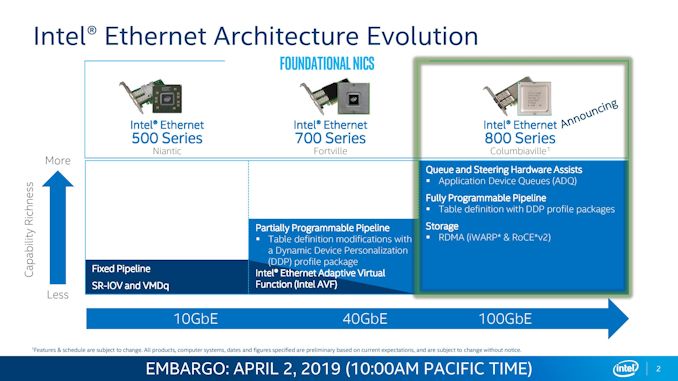

Intel currently offers a lot of 10 gigabit Ethernet and 25 gigabit Ethernet infrastructure in the data center. The company launched 100G Omnipath a few years ago as an early alternative, and is looking towards a second generation of Omnipath to double that speed. In the meantime Intel has developed and is going to launch Columbiaville, its controller offering for the 100G Ethernet market, labeled as the Intel 800-Series.

Introducing faster networking to the data center infrastructure is certainly a positive; however, Intel is keen to promote a few new technologies with the product. Application Device Queues (ADQ) are in place to help the hardware prioritize important packets to ensure consistent performance, while Dynamic Device Personalization (DDP) enables additional programmability within packet processing, allowing unique networking setups to add functionality and/or security.

The dual-port 100G card will be called the E810-CQDA2, and we’re still waiting for information about the chip: die size, cost, process, etc. Intel states that its 100 GbE offerings will be available in Q3.

Xeon D-1600: A Generational Efficiency Improvement for Edge Acceleration

One of Intel’s key product areas is the edge, both in terms of compute and networking. One of the products that Intel has focused on in this area is Xeon D, which covers both high efficiency compute with accelerated networking and cryptography (D-1500) and high throughput compute with accelerated networking and cryptography (D-2100). The former is Broadwell based and the latter is Skylake based. Intel’s new Xeon D-1600 is a direct D-1500 successor: a true single-die solution taking advantage of an additional frequency and efficiency bump in the manufacturing process. It is still built on the same manufacturing process as D-1500, allowing Intel’s partners to easily drop in the new version without many functional changes.

Related Reading

- Intel Xeon Scalable Cascade Lake: Now with Optane!

- Intel Agilex: 10nm FPGAs with PCIe 5.0, DDR5, and CXL

- Intel Columbiaville: 800 Series Ethernet at 100G, with ADQ and DDP

- Intel Launches the Xeon D-1600 Family: Upgrades to Xeon D-1500

- Lenovo’s New Cascade Lake ThinkSystem Servers: Up to 8 Sockets with Optane

- Dell PowerEdge Updates: Upgrade to Cascade Lake and Optane

- Supermicro Calvinballs Into Cascade Lake: Over 100 New and Updated Offerings

38 Comments


HStewart - Tuesday, April 2, 2019

I think the news of Intel Agilex FPGA is significant.

1. Intel 10nm in Q3

2. PCIe 5.0 (not 4.0) in 2019

3. EMiB is used

4. 40TFLOPs

I wonder if this chip is planned to be used in new Cray Supercomputers.

I am also curious what difference a 56 core 9200 would be compared to dual socket 28 core xeon. It just seems to me that industry is stuck in making more cores on cpu - but at least these modules have more than 8. For example with same cores what is the difference of the following

1. single 16 core cpu

2. single die with 2 8 core cpus

3. dual socket 8 core cpus.

My guess and only that is the level of performance is 1 is at top, follow by 2 and then 3

bubblyboo - Tuesday, April 2, 2019

If you mean the new German Cray system then they already decided on Intel Stratix 10.

HStewart - Tuesday, April 2, 2019

https://www.anandtech.com/show/14112/intels-xeon-x...

ksec - Tuesday, April 2, 2019

The 10nm is likely from Custom Foundry, i.e. not the same 10nm used in Icelake.

But Altera literally gave up competing with Xilinx ever since Intel acquired them. Can't wait to see how it compares to Xilinx's Everest

FreckledTrout - Tuesday, April 2, 2019

Yes but that is just sampling in 2019.

CharonPDX - Friday, April 5, 2019

From other articles about the 56-core 9200, it sounds like it essentially is a dual-socket-in-one-mount solution. It's also BGA-only. It's designed for ultra-high-density servers (blade servers, etc,) to have the effective nature of dual-socket in far less physical space. It's 12 memory channels per socket, compared to 6 per socket for the 8200-series, so it's almost certainly just two 8200-series dies in one package. It's also nearly double the wattage.

And it can have a two-package solution (I would say "two socket," but it's soldered not socketed,) to have a four-socket-equivalent system in less space than a two-socket system.

As for performance on the various types, it depends on how the CPUs are architected, and the workload. If your workload isn't heavily multithreaded, but is *VERY* memory intensive, then fewer cores-per-socket with more sockets (each with their own memory channels) may be better for you than more cores in one socket. And again, the 9200 is more like your option 3, just in a single physical package, than even like your option 2.

Dolan - Monday, April 8, 2019

1. em, no

2. serdes is still 16/20 nm so it will have same feature level as competitors.

3. sure but any volume product would be success, not these prototype parts

4. 40 in bfloat16. In regular 32b IEEE 754 it is just 10 TFLOPs!!! This is what they originally advertised for Stratix 10.

Btw.: FPGA is pretty small. Only 30% more elements compared to Stratix 10. Where is claimed 2,7x better density?

40% performance gain is based on estimates in highest speed grade compared to Stratix 10 in -2 without mentioned voltage. Point is that these settings can cause double digit performance difference by itself.

This is shame. It is probably worst FPGA in Altera's history.

People, please. Stop eating Intel's propaganda.

Diogene7 - Tuesday, April 2, 2019

@Ian Cutress : I am really looking forward to see Storage Class Memory (SCM) like 3D X-Point being used on the memory bus of consumer laptop computers and smartphones, and that it could be used as a bootable RAMDisk : in theory, it should help lower latency of several order of magnitude and bring some noticeable responsiveness improvements a bit like replacing a Hard Disk Drive (HDD) by a Solid State Drive (SSD) did.

I know it is hard to predict the future, but approximatively what year do you think it would begin to be possible for consumers to buy a laptop / smartphone with 256GB or more of SCM plugged on a memory bus channel ?

I would think 2022 / 2023 at the earliest (probably even later than that) as it probably requires some maturing of memory agnostic protocol like Gen-Z...

What do you think Ian ?

nandnandnand - Tuesday, April 2, 2019

There have already been laptops with configurations like 4 GB RAM + 16 GB 3D XPoint. I don't know if the XPoint is on the memory bus, but even that small amount should be enough to hold the OS and some applications.

I don't know if describing XPoint as "slightly slower" than DRAM is accurate. That seems like Intel marketing speak. Certainly, there is room for another post-NAND technology to improve beyond XPoint and bridge the gap between memory and storage.

And while XPoint would be good for increasing the amount of "memory", the real hotness in the next 10 years will be stacking and later integrating memory directly into the CPU. This could allow CPU performance to increase by orders of magnitude even with small amounts (4 GB) of DRAM. Source: https://www.darpa.mil/attachments/3DSoCProposersDa...

In addition to DRAM, XPoint or another post-NAND technology could also be added for another level of cache.

Diogene7 - Tuesday, April 2, 2019

@nandnandnand : The configuration that you are describing for the laptop is 16GB DDR-Ram + 16GB NVMe Storage Class Memory (SCM) (Optane storage memory)

Although the NVMe protocol is faster than the SATA protocol, from what I read on different websites, it still adds much more latency than the same SCM plugged on the memory bus.

Launching a game application that may take 60s to load from an NVMe SSD could be 5 to 10 times faster without much software optimization, so could take from 6s/12s...

Launching any current size application / rebooting any Operating System (OS) would feel significantly shorter / near instantaneous, which in terms of overall customer experience would be amazing !!!