AMD Rome Second Generation EPYC Review: 2x 64-core Benchmarked

by Johan De Gelas on August 7, 2019 7:00 PM EST

First Impressions

Due to bad luck and timing issues, we have not been able to test the latest Intel and AMD server CPUs in our most demanding workloads. However, the benchmarks we were able to run show that AMD is offering a product that outperforms Intel and steals the show on performance per dollar.

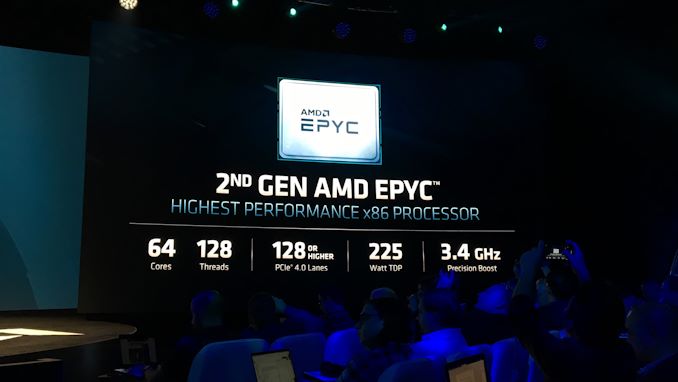

For those with little time: at the high end of socketed x86 server CPUs, AMD offers 50 to 100% higher performance at a 40% lower price. Unless you go for low-end server CPUs, there is no contest: AMD offers much better performance for a much lower price than Intel, with more memory channels and more than twice as many PCIe lanes, which are PCIe 4.0 lanes at that. Want more than 2 TB of RAM in your dual-socket server? Then the discount in AMD's favor grows to 50%.
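As a rough worked example using only the figures quoted above, the relative performance per dollar follows directly. It assumes a simple one-to-one comparison between an AMD SKU and a competing Intel SKU, with "performance" and "price" as single scalar numbers. Here $\Delta p$ is AMD's fractional performance advantage and $\Delta c$ is its fractional price reduction.

```latex
% Relative performance per dollar, AMD vs. Intel (illustrative, figures from the text)
\frac{\mathrm{perf}_{\mathrm{AMD}} / \mathrm{price}_{\mathrm{AMD}}}
     {\mathrm{perf}_{\mathrm{Intel}} / \mathrm{price}_{\mathrm{Intel}}}
  = \frac{1 + \Delta p}{1 - \Delta c},
  \qquad \Delta p \in [0.5,\ 1.0],\quad \Delta c = 0.4

% Lower bound: (1 + 0.5) / (1 - 0.4) = 1.5 / 0.6 = 2.5
% Upper bound: (1 + 1.0) / (1 - 0.4) = 2.0 / 0.6 \approx 3.33
```

Under those assumptions, AMD delivers roughly 2.5x to 3.3x the performance per dollar at the high end.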

We can only applaud this enthusiastically, as it empowers all the professionals who lack the negotiating power of the Amazons, Azures and other large-scale players of this world. Spend about $4k and you get 64 second-generation EPYC cores. The single-socket (1P) offerings are even better deals for those on a tight budget.

So has AMD done the unthinkable? Has it beaten Intel by such a large margin that there is no contest? For now, based on our preliminary testing, that is the case. The launch of AMD's second-generation EPYC processors is nothing short of historic, beating the competition by a large margin in almost every metric: performance, performance per watt and performance per dollar.

Industry analysts have stated that AMD expects to double its share of the server market by Q2 2020, and there is every reason to believe that AMD will succeed. EPYC is an extremely attractive server platform with an unbeatable performance-per-dollar ratio.

Intel's most likely immediate defense will be to lower prices for a select number of important customers, and those discounts won't be made public. The company is also likely to showcase its 56-core Xeon Platinum 9200 series processors. These aren't socketed, are only available from a limited number of vendors, and are listed without pricing, so there is no firm way to judge their value. Ultimately, if Intel wanted a core-for-core comparison here, we would have expected them to reach out and offer a Xeon 9200 system to test. That didn't happen. But keep an eye on Intel's messaging over the next few months.

As you know, Ice Lake is Intel's most promising response, and that chip should be available sometime in mid-2020. Ice Lake promises 18% higher IPC and eight instead of six memory channels. Because it will use Intel's most advanced 10 nm process, it should be able to offer 56 or more cores in a reasonable power envelope. The big questions will be around the implementation of the design: whether it uses chiplets, how the memory works, and what frequencies it can reach.

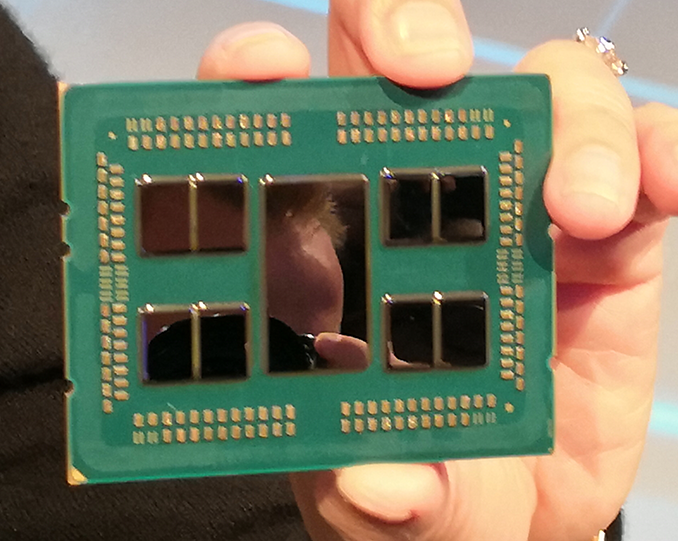

Overall, AMD has done a stellar job. The city may be built on seven hills, but Rome's 8x8-core chiplet design is a true cultural phenomenon of the semiconductor industry.

We'll be revisiting more big data benchmarks through August and September, and hopefully have individual chip benchmark reviews coming soon. Stay tuned for those as and when we're able to acquire the other hardware.

Can't wait? Then read our interview with AMD's SVP and GM of the Datacenter and Embedded Solutions Group, Forrest Norrod, where we talk about Naples, Rome, Milan, and Genoa. It's all coming up EPYC.

An Interview with AMD’s Forrest Norrod: Naples, Rome, Milan, & Genoa

180 Comments


steepedrostee - Thursday, August 8, 2019

if i had to guess who is more full of crapola, i would think you

Smell This - Thursday, August 8, 2019

Pooper Lake?

Cascade is obviously, The Mistake By The Lake

Chipzillah will certainly strike back but it reminds me of the old 'virgin' joke. "My first wife was an OB/GYN, and all she wanted to do was look at it. My second wife was a psychiatrist, and all she wanted to do was talk about it, and ...

My third wife was an Intel Fan Girl, and all she could say was, "Wait until next year!"

HA!

RSAUser - Thursday, August 8, 2019

Do we finally have a contender to run Crysis on max?

Tunnah - Thursday, August 8, 2019

I'd normally just ignore this but this really needs proofreading, There's multiple mistakes on every page, becomes a bit difficult to follow.

cerealspiller - Thursday, August 8, 2019

Well, 50% of the sentences in your post have a grammatical error, so I will just try to ignore it.

GreenReaper - Friday, August 9, 2019

He's a reader, not a writer. ;-)

Oliseo - Thursday, August 8, 2019

Pot. Meet Kettle.

steepedrostee - Thursday, August 8, 2019

wow amd !

umano - Thursday, August 8, 2019

Intel people will welcome "Rome" like gladiators: "Ave, Caesar, morituri te salutant" (Hail, Caesar, those who are about to die salute you).

I am really happy for Amd and I really hope their sells will be a lot more than they could ever dream.

Because they become more than competitive with the most rightful strategy, just deliver an awesome product. The fact they think that they will just double their shares shows how sick the market(s) are. The epyc is faster, greener and way way cheaper.

29a - Thursday, August 8, 2019

"AMD does not blow fuses on cheaper SKUs to create artificial 'value' for buying more expensive SKUs"

I like this guy, more reviews from him. He's not afraid to bite the hand that feeds him.