The AMD 3rd Gen Ryzen Deep Dive Review: 3700X and 3900X Raising The Bar

by Andrei Frumusanu & Gavin Bonshor on July 7, 2019 9:00 AM EST

Section by Dr. Ian Cutress (Original article)

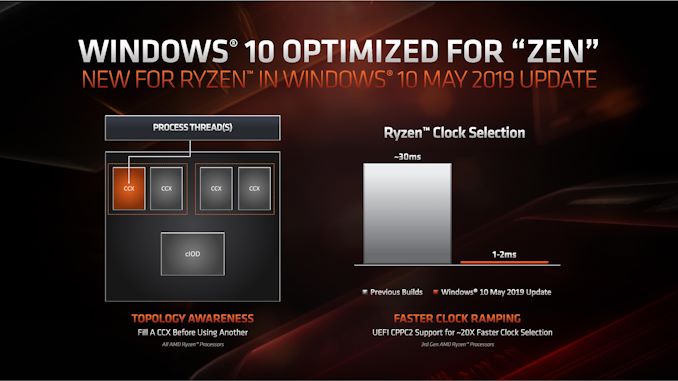

Windows Optimizations

One of the long-standing pain points for non-Intel processors running Windows has been the optimizations and scheduler arrangements in the operating system. We’ve seen in the past how Windows has not been kind to non-Intel microarchitecture layouts, such as AMD’s previous module design in Bulldozer, the Qualcomm hybrid CPU strategy with Windows on Snapdragon, and more recently the multi-die arrangements on Threadripper that introduce different memory latency domains into consumer computing.

Obviously AMD has a close relationship with Microsoft when it comes to identifying a non-regular core topology in a processor, and the two companies work towards ensuring that thread and memory assignments, absent program-driven direction, attempt to make the most of the system. With the Windows 10 May 2019 Update, some additional features have been put in place to get the most out of the upcoming Zen 2 microarchitecture and Ryzen 3000 silicon layouts.

The optimizations come on two fronts, both of which are reasonably easy to explain.

Thread Grouping

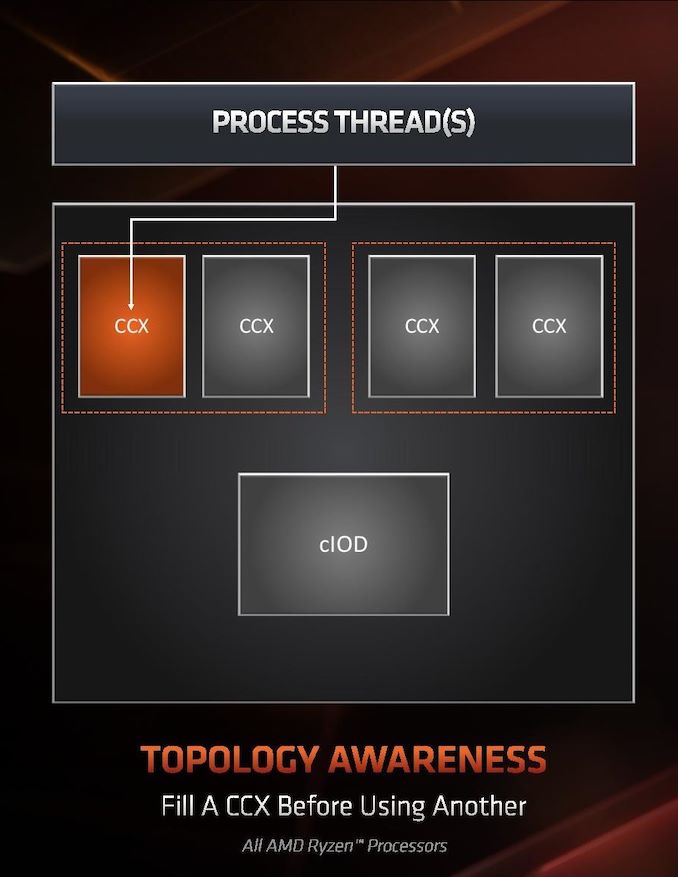

The first is thread allocation. When a processor has different ‘groups’ of CPU cores, there are different ways in which threads are allocated, all of which have pros and cons. The two extremes for thread allocation come down to thread grouping and thread expansion.

Thread grouping is where, as new threads are spawned, they are allocated onto cores directly next to cores that already have threads. This keeps the threads close together for thread-to-thread communication; however, it can create regions of high power density, especially when there are many cores on the processor but only a couple are active.

Thread expansion is where threads are placed on cores as far away from each other as possible. In AMD’s case, this would mean a second thread spawning on a different chiplet, or a different core complex/CCX, as far away as possible. This allows the CPU to maintain high performance by not having regions of high power density, typically providing the best turbo performance across multiple threads.

The danger of thread expansion is when a program spawns two threads that end up on different sides of the CPU. In Threadripper, this could even mean that the second thread was on a part of the CPU that had a long memory latency, causing an imbalance in the potential performance between the two threads, even though the cores those threads were on would have been at the higher turbo frequency.

Because modern software, and video games in particular, now spawns multiple threads rather than relying on a single thread, and those threads need to talk to each other, AMD is moving from a hybrid thread expansion technique to a thread grouping technique. This means that one CCX will fill up with threads before another CCX is even accessed. AMD believes that the potential for high power density within one chiplet, while the other might be inactive, is a price worth paying for overall performance.
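To make the trade-off between the two extremes concrete, here is a minimal sketch of both placement policies. It is a toy model, not AMD’s or Microsoft’s scheduler logic: the topology (2 chiplets, 2 CCXs each, 4 cores per CCX) and all function names are assumptions chosen for illustration. It counts how many thread pairs end up talking across a CCX boundary, and uses the busiest CCX as a rough stand-in for power density.

```python
from itertools import combinations

# Hypothetical topology for illustration only: 2 chiplets x 2 CCXs x 4 cores.
CHIPLETS, CCX_PER_CHIPLET, CORES_PER_CCX = 2, 2, 4


def all_cores():
    """Return every core as a (chiplet, ccx, core) tuple in physical order."""
    return [(d, x, c)
            for d in range(CHIPLETS)
            for x in range(CCX_PER_CHIPLET)
            for c in range(CORES_PER_CCX)]


def group_threads(n):
    """Thread grouping: fill one CCX completely before touching the next."""
    return all_cores()[:n]


def expand_threads(n):
    """Thread expansion: round-robin across CCXs, alternating chiplets first."""
    # Order the CCXs so that consecutive picks land on different chiplets.
    ccx_order = [(d, x) for x in range(CCX_PER_CHIPLET) for d in range(CHIPLETS)]
    placed = []
    for i in range(n):
        d, x = ccx_order[i % len(ccx_order)]
        placed.append((d, x, i // len(ccx_order)))
    return placed


def cross_ccx_pairs(placement):
    """Count thread pairs that would communicate across a CCX boundary."""
    return sum(1 for a, b in combinations(placement, 2) if a[:2] != b[:2])


def busiest_ccx(placement):
    """Largest number of active cores in any one CCX (a power-density proxy)."""
    counts = {}
    for d, x, _ in placement:
        counts[(d, x)] = counts.get((d, x), 0) + 1
    return max(counts.values())


if __name__ == "__main__":
    for n in (2, 4, 6):
        g, e = group_threads(n), expand_threads(n)
        print(n, "threads | grouping:", cross_ccx_pairs(g), "cross-CCX pairs,",
              busiest_ccx(g), "cores in busiest CCX | expansion:",
              cross_ccx_pairs(e), "cross-CCX pairs,",
              busiest_ccx(e), "cores in busiest CCX")
```

With four threads in this model, grouping keeps every pair inside one CCX at the cost of a fully loaded CCX, while expansion spreads the heat but makes every pair cross a CCX boundary. That is the same trade AMD describes making in favor of communication locality.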

For Matisse, this should afford a nice improvement for limited thread scenarios, and on the face of the technology, gaming. It will be interesting to see how much of an effect this has on the upcoming EPYC Rome CPUs or future Threadripper designs. The single benchmark AMD provided in its explanation was Rocket League at 1080p Low, which reported a +15% frame rate gain.

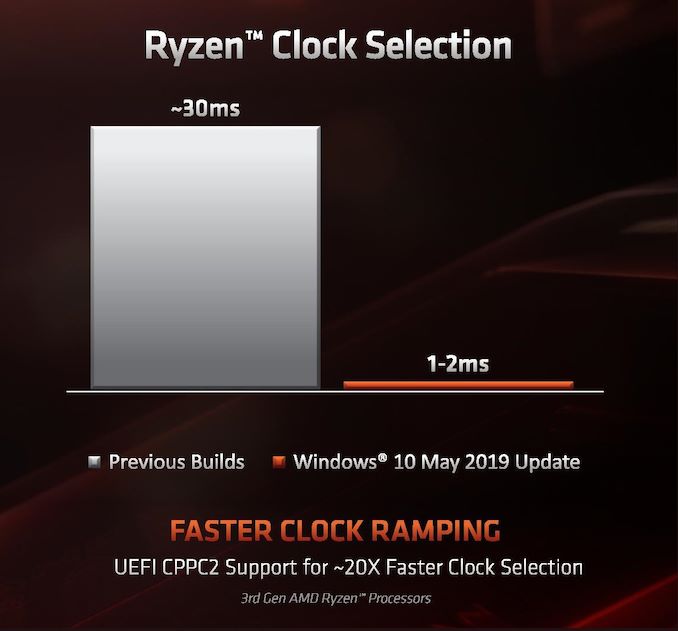

Clock Ramping

Readers familiar with our Skylake microarchitecture deep dive may remember that Intel introduced a new feature called Speed Shift that enabled the processor to adjust between different P-states more freely, as well as ramp from idle to load very quickly: from 100 ms to 40 ms in the first version in Skylake, then down to 15 ms with Kaby Lake. It did this by handing P-state control back from the OS to the processor, which reacted based on instruction throughput and request. With Zen 2, AMD is now enabling the same feature.

AMD already has significantly finer granularity in its frequency adjustments than Intel, allowing for 25 MHz steps rather than 100 MHz steps. However, enabling a faster ramp-to-load frequency jump is going to help AMD when it comes to very burst-driven workloads, such as WebXPRT (Intel’s favorite for this sort of demonstration). According to AMD, the way that this has been implemented with Zen 2 will require BIOS updates as well as moving to the Windows 10 May 2019 Update, but it will reduce frequency ramping from ~30 milliseconds on Zen to ~1-2 milliseconds on Zen 2. It should be noted that this is much faster than the numbers Intel tends to provide.
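A quick back-of-the-envelope sketch shows why both numbers matter. The step sizes (25 MHz and 100 MHz) and ramp times (~30 ms and ~2 ms) come from the figures above; the target frequency, the 50 ms burst length, and the function names are hypothetical values picked purely for illustration, and the model ignores everything except step rounding and ramp time.

```python
def quantize(target_mhz, step_mhz):
    """Round a target frequency down to the nearest available step."""
    return (target_mhz // step_mhz) * step_mhz


def ramp_fraction(ramp_ms, burst_ms):
    """Fraction of a short burst that elapses before full clocks are reached."""
    return min(ramp_ms, burst_ms) / burst_ms


if __name__ == "__main__":
    # Hypothetical frequency the power/thermal budget would allow, in MHz.
    target = 4365
    print("25 MHz steps :", quantize(target, 25), "MHz")    # 4350 MHz
    print("100 MHz steps:", quantize(target, 100), "MHz")   # 4300 MHz

    # Hypothetical 50 ms burst, e.g. a short interactive task.
    burst = 50
    print("~30 ms ramp:", f"{ramp_fraction(30, burst):.0%} of the burst spent ramping")
    print("~2 ms ramp :", f"{ramp_fraction(2, burst):.0%} of the burst spent ramping")
```

In this simplified picture, finer steps leave less frequency on the table when the budget falls between two steps, and a shorter ramp means a short burst spends almost all of its time at the higher clock rather than getting there.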

The technical name for AMD’s implementation is CPPC2, or Collaborative Power Performance Control 2, and AMD’s metrics state that this can improve performance in burst workloads and in application loading. AMD cites a +6% performance gain in application launch times using PCMark10’s app launch sub-test.

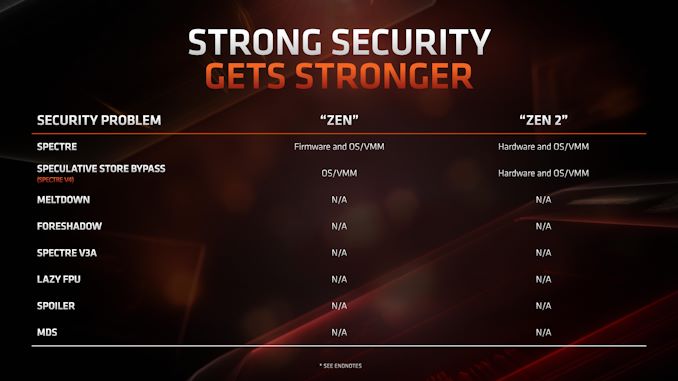

Hardened Security for Zen 2

Another aspect of Zen 2 is AMD’s approach to the heightened security requirements of modern processors. As has been reported, a good number of the recent side-channel exploits do not affect AMD processors, primarily because of how AMD manages its TLBs, which have always required additional security checks, even before most of this became an issue. Nonetheless, for the issues to which AMD is vulnerable, it has implemented a full hardware-based security platform.

The change here concerns Speculative Store Bypass, known as Spectre v4, for which AMD now has additional hardware that works in conjunction with the OS or virtual memory managers such as hypervisors to control it. AMD doesn’t expect any performance change from these updates. Newer issues such as Foreshadow and Zombieload do not affect AMD processors.

447 Comments

shakazulu667 - Sunday, July 7, 2019 - link

Is there a compilation test coming for chromium or another big source tree, that would show if new IO arch brings wider benefits for such CPU+IO workloads?

Andrei Frumusanu - Sunday, July 7, 2019 - link

We'll be re-adding the Chromium compile test in the next few days - there were a few technical hiccups when running it.

shakazulu667 - Sunday, July 7, 2019 - link

Thanks, I'm looking forward to it, especially curious if AMD can utilize NVMe better for this kind of workload.

Andrei Frumusanu - Sunday, July 7, 2019 - link

Unfortunately we don't test the CPU suite with different SSDs for this.

shakazulu667 - Sunday, July 7, 2019 - link

Is there another test in your suite that could show improvements with IO, incl. NVMe?

RSAUser - Monday, July 8, 2019 - link

But one of the big features is PCIe 4 support, so testing with an NVMe drive as well to show the difference would be important? People spending $490 on a CPU alone are probably going to be buying an NVMe SSD.

A5 - Monday, July 8, 2019 - link

There aren't any PCIe 4 SSDs for them to test with.

0ldman79 - Monday, July 8, 2019 - link

Yep, PCIe 4.0 NVMe is going to be beta at this point at best. Last I read, the first 4.0 NVMe drive to be released is essentially running an overclocked 3.0 interface, and the list of NVMe drives that can saturate 3.0 is pretty short as it is.

RSAUser - Tuesday, July 9, 2019 - link

That's because these are the first PCIe 4 slots that exist; you can't release a product that can't even be used. Using an overclocked drive in lieu of a true 4.0 one is the proper thing to do.

Kevin G - Tuesday, July 9, 2019 - link

For consumers yes, but the first PCIe 4.0 host system was the IBM POWER9, released ~18 months ago. As such there are a handful of NICs and accelerators for servers out there today. The real oddity is that nVidia doesn’t support PCIe 4.0. Volta’s nvLink has a PHY based upon PCIe 4.0. Turing should as well, though nVidia doesn’t pair those chips with the previously mentioned POWER9.