The AMD 3rd Gen Ryzen Deep Dive Review: 3700X and 3900X Raising The Bar

by Andrei Frumusanu & Gavin Bonshor on July 7, 2019 9:00 AM EST

** = Old results marked were performed with the original BIOS & boost behaviour as published on 7/7.

Benchmarking Performance: CPU System Tests

Our System Test section focuses significantly on real-world testing and user experience, with a slight nod to throughput. In this section we cover application loading time, image processing, simple scientific physics, emulation, neural simulation, optimized compute, and 3D model development, with a combination of readily available and custom software. For some of these tests, the bigger suites such as PCMark do cover them (we publish those values in our office section), although multiple perspectives are always beneficial. In all our tests we explain in depth what is being tested and how we are testing it.

All of our benchmark results can also be found in our benchmark engine, Bench.

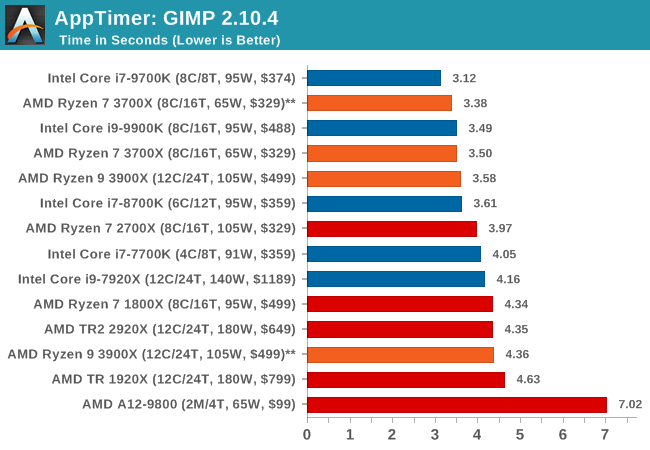

Application Load: GIMP 2.10.4

One of the most important aspects of user experience and workflow is how fast a system responds. A good test of this is to see how long it takes for an application to load. Most applications these days, when on an SSD, load almost instantly; however, some office tools require asset pre-loading before being available. Most operating systems employ caching as well, so when certain software is loaded repeatedly (web browser, office tools), it can be initialized much quicker.

In our last suite, we tested how long it took to load a large PDF in Adobe Acrobat. Unfortunately this test was a nightmare to program for, and didn’t transfer over to Win10 RS3 easily. In the meantime we discovered an application that can automate this test, and we put it up against GIMP, a popular free open-source photo editing tool and the major alternative to Adobe Photoshop. We set it to load a large 50MB design template, and perform the load 10 times with 10 seconds in between each. Because of caching, the first 3-5 results are often slower than the rest, and the time to cache can be inconsistent, so we take the average of the last five results to show CPU processing on cached loading.
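The measurement loop described above can be sketched roughly as follows. This is only an illustration of the method (10 loads, 10 seconds apart, averaging the last five), not the automation tool we actually use; `load_once` is a hypothetical callable standing in for "launch the application and wait until the template has finished loading".

```python
import statistics
import time
from typing import Callable, List, Sequence


def time_repeated_loads(load_once: Callable[[], None],
                        runs: int = 10,
                        pause_s: float = 10.0) -> List[float]:
    """Time `runs` consecutive loads, pausing `pause_s` seconds between them."""
    durations: List[float] = []
    for i in range(runs):
        start = time.perf_counter()
        load_once()  # hypothetical: start the app and block until it is ready
        durations.append(time.perf_counter() - start)
        if i < runs - 1:
            time.sleep(pause_s)
    return durations


def cached_load_score(durations: Sequence[float], keep_last: int = 5) -> float:
    """Average only the last `keep_last` runs, discarding the cache warm-up runs."""
    return statistics.mean(durations[-keep_last:])
```

Discarding the early runs means the reported figure reflects cached loading, where the CPU rather than storage is the limiting factor.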

Application loading is typically single thread limited, but we see here that at some point it also becomes core-resource limited. Having access to more resources per thread in a non-HT environment helps the 8C/8T and 6C/6T processors get ahead of both of the 5.0 GHz parts in our testing.

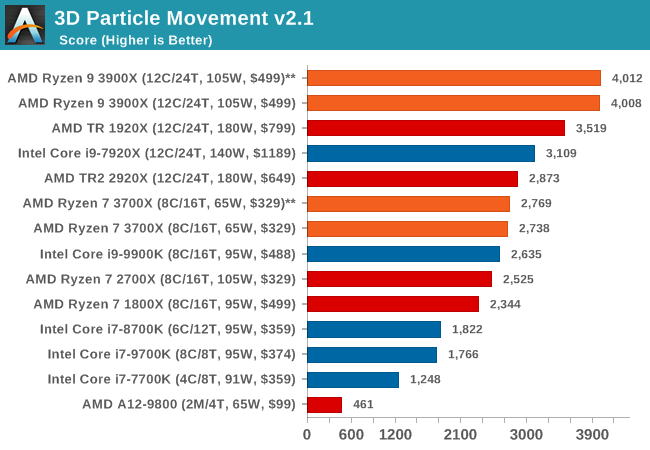

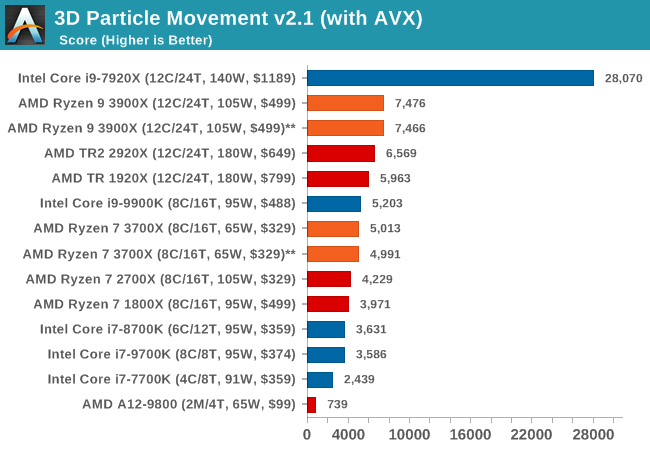

3D Particle Movement v2.1: Brownian Motion

Our 3DPM test is a custom built benchmark designed to simulate six different particle movement algorithms of points in a 3D space. The algorithms were developed as part of my PhD, and while they ultimately perform best on a GPU, they provide a good idea of how instruction streams are interpreted by different microarchitectures.

A key part of the algorithms is the random number generation: we use relatively fast generation, which ends up creating dependency chains in the code. The upgrade over the naïve first version of this code resolved false sharing in the caches, a major bottleneck. We are also looking at AVX2 and AVX512 versions of this benchmark for future reviews.

For this test, we run a stock particle set over the six algorithms for 20 seconds apiece, with 10 second pauses, and report the total rate of particle movement, in millions of operations (movements) per second. We have a non-AVX version and an AVX version, with the latter implementing AVX512 and AVX2 where possible.
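To make the reported metric concrete, here is a minimal single-threaded sketch of the idea: move a set of particles for a fixed time budget and report millions of movements per second. The random-walk kernel below is a generic stand-in, not one of the six 3DPM algorithms, and all function names are assumptions made for illustration.

```python
import math
import random
import time


def random_unit_step(rng):
    """Return a uniformly distributed direction on the unit sphere."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(1.0 - z * z)
    return r * math.cos(phi), r * math.sin(phi), z


def run_walk(duration_s, n_particles=1000, seed=0):
    """Move every particle one unit step per pass until the time budget expires."""
    rng = random.Random(seed)
    particles = [[0.0, 0.0, 0.0] for _ in range(n_particles)]
    moves = 0
    start = time.perf_counter()
    while time.perf_counter() - start < duration_s:
        for p in particles:
            dx, dy, dz = random_unit_step(rng)
            p[0] += dx
            p[1] += dy
            p[2] += dz
        moves += n_particles
    elapsed = time.perf_counter() - start
    return moves, elapsed


def million_moves_per_second(moves, elapsed_s):
    """Convert a raw movement count into the millions-per-second score."""
    return moves / elapsed_s / 1e6
```

Each step depends on the output of the random number generator, which is the kind of dependency chain mentioned earlier; a real implementation would run one such loop per thread and sum the rates.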

3DPM v2.1 can be downloaded from our server: 3DPMv2.1.rar (13.0 MB)

With a non-AVX code base, the 9900K shows the IPC and frequency improvements over the R7 2700X, although in reality it is not as big of a percentage jump as you might imagine. The processors without HT get pushed back a bit here.

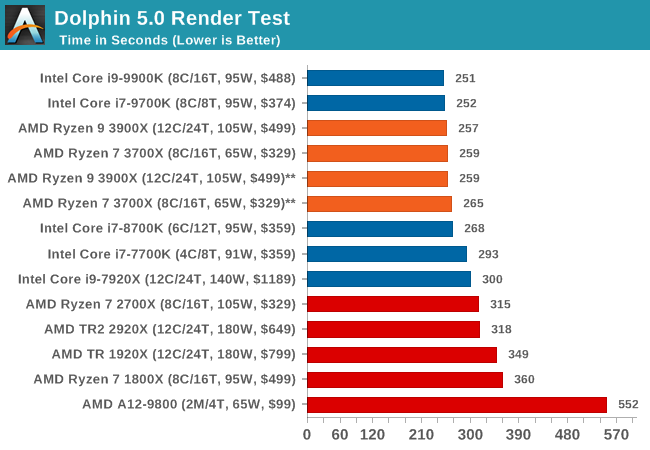

Dolphin 5.0: Console Emulation

One of the most requested tests in our suite concerns console emulation. Being able to pick up a game from an older system and run it as expected depends on the overhead of the emulator: it takes a significantly more powerful x86 system to accurately emulate an older non-x86 console, especially if code for that console was made to abuse certain physical bugs in the hardware.

For our test, we use the popular Dolphin emulation software, and run a compute project through it to determine how closely our processors can emulate a standard console system. In this test, a Nintendo Wii would take around 1050 seconds.

The latest version of Dolphin can be downloaded from https://dolphin-emu.org/

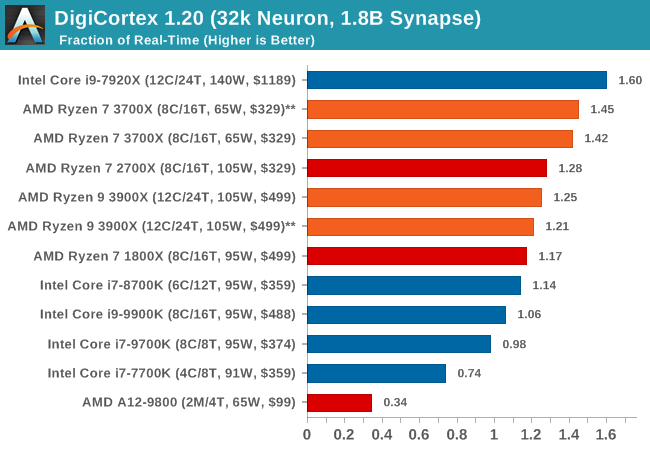

DigiCortex 1.20: Sea Slug Brain Simulation

This benchmark was originally designed for simulation and visualization of neuron and synapse activity, as is commonly found in the brain. The software comes with a variety of benchmark modes, and we take the small benchmark which runs a 32k neuron / 1.8B synapse simulation, equivalent to a Sea Slug.

Example of a 2.1B neuron simulation

We report the results as the ability to simulate the data as a fraction of real-time, so anything above a ‘one’ is suitable for real-time work. Out of the two modes, a ‘non-firing’ mode which is DRAM heavy and a ‘firing’ mode which has CPU work, we choose the latter. Despite this, the benchmark is still affected by DRAM speed a fair amount.
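As a small worked example of the metric, the fraction of real-time is simply simulated time divided by wall-clock time. The function names below are hypothetical, and treating a factor of exactly one as real-time capable is our assumption.

```python
def real_time_factor(simulated_s, wall_clock_s):
    """Return simulated seconds per wall-clock second (1.0 means real-time)."""
    if wall_clock_s <= 0:
        raise ValueError("wall_clock_s must be positive")
    return simulated_s / wall_clock_s


def is_real_time_capable(factor):
    """Assumption: a factor of one or more keeps pace with real time."""
    return factor >= 1.0
```

For instance, a system that needs 4 seconds to simulate 2 seconds of neural activity scores 0.5, which is too slow for real-time work.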

DigiCortex can be downloaded from http://www.digicortex.net/

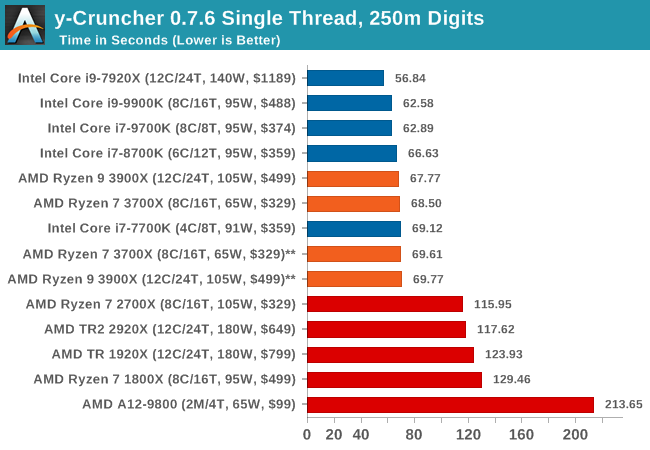

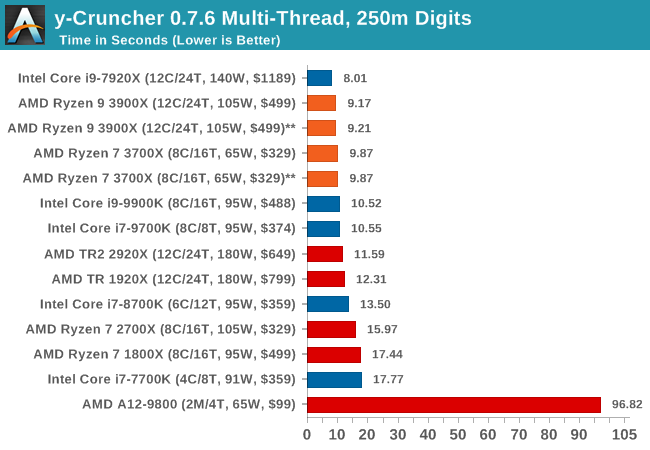

y-Cruncher v0.7.6: Microarchitecture Optimized Compute

I’ve known about y-cruncher for a while as a tool to compute various mathematical constants, but it wasn’t until I began talking with its developer, Alex Yee, a researcher from NWU and now a software optimization developer, that I realized how heavily he has optimized the software for performance. Naturally, any simulation that can take 20+ days can benefit from a 1% performance increase! Alex started y-cruncher as a high-school project, but it is now at a state where Alex is keeping it up to date to take advantage of the latest instruction sets before they are even made available in hardware.

For our test we run y-cruncher v0.7.6 through all the different optimized variants of the binary, including the AVX-512 optimized binaries. The test is to calculate 250 million digits of Pi, and we run both the single threaded and multi-threaded versions of this test.
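For readers curious what "calculating digits of Pi" involves at its simplest, the sketch below uses Machin's formula with plain integer arithmetic. It is emphatically not y-cruncher's algorithm or code, and it is far too slow for 250 million digits; it only illustrates the kind of arbitrary-precision work the benchmark stresses. The function names and the 10-digit guard are our own choices.

```python
def arctan_inverse(x, scale):
    """Return approximately scale * arctan(1/x) via the alternating Taylor series."""
    term = scale // x
    total = term
    x_squared = x * x
    n = 1
    sign = -1
    while term:
        term //= x_squared          # scale / x^(n+2), truncated
        n += 2
        total += sign * (term // n)
        sign = -sign
    return total


def pi_digits(n_digits):
    """Return Pi truncated to `n_digits` decimal places, as a string."""
    guard = 10  # extra digits to absorb truncation error in the series
    scale = 10 ** (n_digits + guard)
    # Machin's formula: pi = 16*arctan(1/5) - 4*arctan(1/239)
    pi_scaled = 16 * arctan_inverse(5, scale) - 4 * arctan_inverse(239, scale)
    digits = str(pi_scaled // 10 ** guard)
    return digits[0] + "." + digits[1:]
```

Calling `pi_digits(10)` returns `"3.1415926535"`. Production tools rely on much faster converging series and highly tuned big-number multiplication, which is where the instruction set optimizations come in.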

Users can download y-cruncher from Alex’s website: http://www.numberworld.org/y-cruncher/

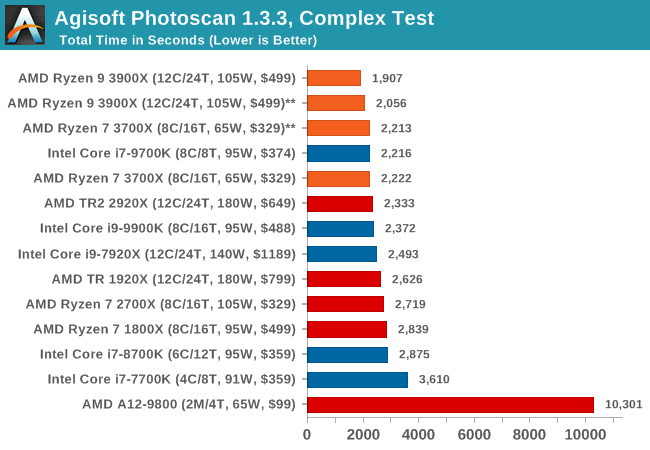

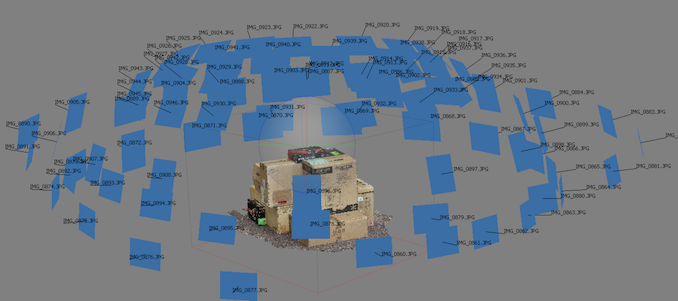

Agisoft Photoscan 1.3.3: 2D Image to 3D Model Conversion

One of the ISVs that we have worked with for a number of years is Agisoft, who develop software called PhotoScan that transforms a number of 2D images into a 3D model. This is an important tool in model development and archiving, and relies on a number of single threaded and multi-threaded algorithms to go from one side of the computation to the other.

In our test, we use version 1.3.3 of the software with a good sized data set of 84 x 18 megapixel photos, and push it through a reasonably fast variant of the algorithms. We report the total time to complete the process.

Agisoft’s Photoscan website can be found here: http://www.agisoft.com/

447 Comments


Daeros - Monday, July 15, 2019 - link

The only mitigation for MDS is to disable Hyper-Threading. I feel like there would be a pretty significant performance penalty for this.

Irata - Sunday, July 7, 2019 - link

Well, at least Ryzen 3000 CPU were tested with the latest Windows build that includes Ryzen optimizations, but tbh I find it a bit "lazy" at least to not test Intel CPU on the latest Windows release which forces security updates that *do* affect performance negatively.

This may or may not have changed the final results but would be more proper.

Oxford Guy - Sunday, July 7, 2019 - link

Lazy doesn't even begin to describe it.

Irata - Sunday, July 7, 2019 - link

Thing is I find this so completely unnecessary.

Not criticising the review per se, but you see AT staff going wild on Twitter over people accusing them of bias when simple things like testing both Intel and AMD systems on the same Windows version would be an easy way to protect themselves against criticism.

It's the same as the budget CPU review where the Pentium Gold was recommended due to its price/performance, but many posters pointed out that it simply was not available anywhere for even near the suggested price and AT failed to acknowledge that.

Zombieload ? Never heard of it.

This is what I mean by lazy - acknowledge these issues or at least give a logical reason why. This is much easier than being offended on Twitter. If you say why you did certain things, there is no reason to post "Because they crap over the comment sections with such vitriol; they're so incensed that we did XYZ, to the point where they're prepared to spend half an hour writing comments to that effect with the most condescending language." which basically comes down to saying "A ton of our readers are a*holes."

Sure, PC related comment sections can be extremely toxic, but doing things as proper as possible is a good way to safeguard against such comments or at least make those complaining look like ignorant fools rather than actually encouraging this.

John_M - Sunday, July 7, 2019 - link

A good point and you made it very well and in a very civil way.

Ryan Smith - Monday, July 8, 2019 - link

Thanks. I appreciate the feedback, as I know first hand it can sometimes be hard to write something useful.

When AMD told us that there were important scheduler changes in 1903, Ian and I both groaned a bit. We're glad AMD is getting some much-needed attention from Microsoft with regards to thread scheduling. But we generally would avoid using such a fresh OS, after the disasters that were the 1803 and 1809 launches.

And more to the point, the timeframe for this review didn't leave us nearly enough time to redo everything on 1903. With the AMD processors arriving on Wednesday, and with all the prep work required up to that, the best we could do in the time available was run the Ryzen 3000 parts on 1903, ensuring that we tested AMD's processor with the scheduler it was meant for. I had been pushing hard to try to get at least some of the most important stuff redone on 1903, but unfortunately that just didn't work out.

Ultimately laziness definitely was not part of the reason for anything we did. Andrei and Gavin went above and beyond, giving up their weekends and family time in order to get this review done for today. As it stands, we're all beat, and the work week hasn't even started yet...

(I'll also add that AnandTech is not a centralized operation; Ian is in London, I'm on the US west coast, etc. It brings us some great benefits, but it also means that we can't easily hand off hardware to other people to ramp up testing in a crunch period.)

RSAUser - Monday, July 8, 2019 - link

But you already had the Intel processors beforehand so could have tested them on 1903 without having to wait for the Ryzen CPU? Your argument is weird.

Daeros - Monday, July 15, 2019 - link

Exactly. They knew that they needed to re-test the Intel and older Ryzen chips on 1903 to have a level, relevant playing field. Knowing that it would penalize Intel disproportionately to have all the mitigations 1903 bakes in, they simply chose not to.

Targon - Monday, July 8, 2019 - link

Sorry, Ryan, but test beds are not your "daily drivers". With 1903 out for more than one month, a fresh install of 1903 (Windows 10 Media Creation tool comes in handy), with the latest chipset and device drivers, it should have been possible to fully re-test the Intel platform with all the latest security patches, BIOS updates, etc. The Intel platform should have been set and re-benchmarked before the samples from AMD even showed up.

It would have been good to see proper RAM used, because anyone who buys DDR4-3200 RAM with the intention of gaming would go with DDR4-3200CL14 RAM, not the CL16 stuff that was used in the new Ryzen setup. The only reason I went CL16 with my Ryzen setup was because when pre-ordering Ryzen 7 in 2017, it wasn't known at the time how significant CL14 vs. CL16 RAM would be in terms of performance and stability (and even the ability to run at DDR4-3200 speeds).

If I were doing reviews, I'd have DDR4-3200 in various flavors from the various systems being used. Taking the better stuff out of another system to do a proper test would be expected.

Ratman6161 - Thursday, July 11, 2019 - link

"who buys DDR4-3200 RAM with the intention of gaming would go with DDR4-3200CL14 RAM"

Well I can tell you who. First I'll address "the intention of gaming". There are a lot of us who could care less about games and I am one of them. Second, even for those who do play games, if you need 32 GB of RAM (like I do) the difference in price on New Egg between CAS 16 and CAS 14 for a 2x16 Kit is $115 (comparing RipJaws CAS 16 Vs Trident Z CAS 14 - both G-Skill obviously). That's approaching double the price. So I sort of appreciate reviews that use the RAM I would actually buy. I'm sure for gamers on a budget who either can't or don't want to spend the extra $115 or would rather put it against a better video card, the cheaper RAM is a good trade off.

Finally, there are going to be a zillion reviews of these processors over the next few days and weeks. We don't necessarily need to get every single possible configuration covered the first day :) Also, there are many other sites publishing reviews so it's easy to find sites using different configurations. All in all, I don't know why people are being so harsh on this (and other) reviews. It's not like I paid to read it :)