AMD Zen 2 Microarchitecture Analysis: Ryzen 3000 and EPYC Rome

by Dr. Ian Cutress on June 10, 2019 7:22 PM EST- Posted in

- CPUs

- AMD

- Ryzen

- EPYC

- Infinity Fabric

- PCIe 4.0

- Zen 2

- Rome

- Ryzen 3000

- Ryzen 3rd Gen

AMD Zen 2 Microarchitecture Overview

The Quick Analysis

At AMD’s Tech Day, on hand was Fellow and Chief Architect Mike Clark to go through the changes. Mike is a great engineer to talk to, although what always amuses me (for any company, not just AMD) is that engineers that talk about the latest products coming to market are already working one, two, or three generations ahead at the company. Mike remarked that it took him a while to think back to the specific Zen+ to Zen 2 changes, while his mind internally is already several generations down the line.

An interesting element to Zen 2 is around the intention. Initially Zen 2 was merely going to be a die shrink of Zen+, going from 12nm down to 7nm, similar to what we used to see with Intel in its tick-tock model for the initial part of the century. However, based on internal analysis and the time frame for 7nm, it was decided that Zen 2 would be used as a platform for better performance, taking advantage of 7nm in multiple ways rather than just redesigning the same layout on a new process node. As a result of the adjustments, AMD is promoting a +15% IPC improvement for Zen 2 over Zen+.

When it comes down to the exact changes in the microarchitecture, what we’re fundamentally looking at is still a similar floorplan to what Zen looks like. Zen 2 is a family member of the Zen family, and not a complete redesign or different paradigm on how to process x86 – as will other architectures that have familial updates, Zen 2 affords a more efficient core and a wider core, allowing better instruction throughput.

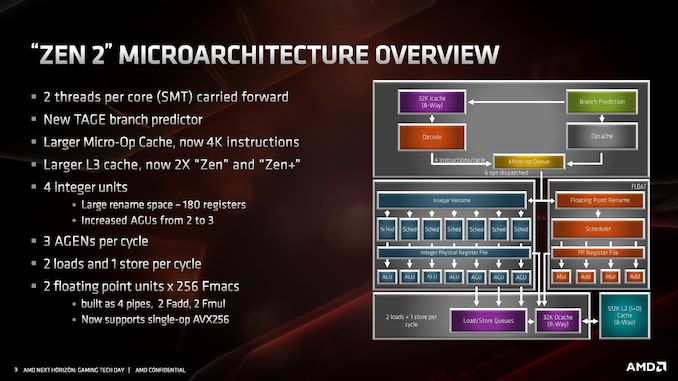

At a high level, the core looks very much the same. Highlights of the Zen 2 design include a different L2 branch predictor known as a TAGE predictor, a doubling of the micro-op cache, a doubling of the L3 cache, an increase in integer resources, an increase in load/store resources, and support for single-operation AVX-256 (or AVX2). AMD has stated that there is no frequency penalty for AVX2, based on its energy aware frequency platform.

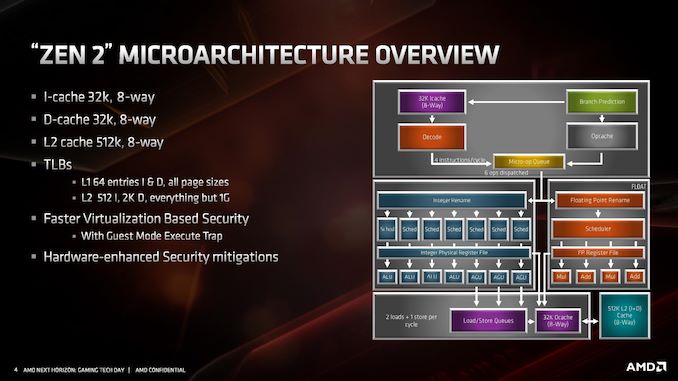

AMD has also made adjustments to the cache system, the most notable being for the L1 instruction cache, which has been halved to 32 kB, but associativity has doubled. This change was made for important reasons, which we’ll go into over the next pages. The L1 data cache and L2 caches are unchanged, however the translation lookaside buffers (TLBs) have increased support. AMD also states that it has added deeper virtualization support with respect to security, helping enable features further down the pipeline. As mentioned previously in this article, there are also security hardening updates.

For the quick analysis, it’s easy to tell that doubling the micro-op cache is going to offer a significant improvement to IPC in a number of scenarios, and combine that with an increase in load/store resources is going to help more instructions get pushed through. The double L3 cache is going to help in specific workloads, as would the AVX2 single-op support, but the improved branch predictor is also going to showcase raw performance uplift. All-in-all, for an on-paper analysis, AMD’s +15% IPC improvement seems like a very reasonable number to promote.

Over the next few pages, we’ll go deeper into how the microarchitecture has changed.

216 Comments

View All Comments

Smell This - Sunday, June 16, 2019 - link

AND ...

it might be 12- to 16 IF links or, another substrate ?

Targon - Thursday, June 13, 2019 - link

Epyc and Ryzen CCX units are TSMC, the true CPU cores. The I/O unit is the only part that comes from Global Foundries, and is probably at TSMC just to satisfy the contracts currently in place.YukaKun - Monday, June 10, 2019 - link

"Users focused on performance will love the new 16-core Ryzen 9 3950X, while the processor seems nice an efficient at 65W, so it will be interesting so see what happens at lower power."Shouldn't that be 105W?

And great read as usual.

Cheers!

jjj - Monday, June 10, 2019 - link

The big problem with this platform is that ST perf per dollar gains are from zero to minimal, depending on SKU.They give us around 20% ST gains (IPC+clocks) but at a cost. Would rather have 10-15% gains for free than to pay for 20%. Pretty much all SKUs need a price drop to become exciting, some about 50$, some a bit less and the 16 cores a lot more.

Got to wonder about memory BW with the 16 cores. 2 channels with 8 cores is one thing but at 16 cores, it might become a limiting factor here and there.

Threska - Tuesday, June 11, 2019 - link

That could be said of any processor. "Yeah, drop the price of whatever it is and we'll love you for it." Improvements cost, just like DVD's costed more than VHS.jjj - Tuesday, June 11, 2019 - link

In the semi business the entire point is to offer significantly more perf per dollar every year. That's what Moore's Law was, 2x the perf at same price every 2 years. Now progress is slower but consumers aren't getting anything anymore.And in pretty much all tech driven areas, products become better every year, even cars. When there is no innovation, it means that the market is dysfunctional. AMD certainly does not innovate here, except on the balance sheet. Innovation means that you get better value and that is missing here. TSMC gives them more perf per dollar, they have additional gains from packaging but those gains do not trickle down to us. At the end of the day even Intel tries to offer 10-15% perf per dollar gains every cycle.

AlyxSharkBite - Tuesday, June 11, 2019 - link

That’s not Moore’s Law at all. It stated that the number of transistors would double. Also it’s been dead a whileSandy bridge 4c 1.16b

Coffee lake 4c is 2.1b (can’t compare the 6c or 8c)

And that’s a lot more than 2 years.

mode_13h - Tuesday, June 11, 2019 - link

Yeah, but those two chips occupy different market segments. So, you should compare Sandybridge i7 vs. Coffelake i7.Teutorix - Tuesday, June 11, 2019 - link

The number of transistors in an IC, not the number of transistors per CPU core. This is an important distinction since a CPU core in Moore's day had very little in it besides registers and an ALU. They didn't integrate FPUs until relatively recently.It's about overall transistor density, nothing more. You absolutely can compare an 8c to a 4c chip, because they are both a single IC.

An 8 core coffee lake chip is 20% smaller than a quad core sandy bridge chip. That's double the CPU cores, double the GPU cores, with probably a massive increase in the transistors/core also.

Moore's law had a minor slowdown with intel stuck at 14nm but its not dead.

Wilco1 - Tuesday, June 11, 2019 - link

Moore's Law is actually accelerating. Just not at Intel. See https://en.wikipedia.org/wiki/Transistor_count - the largest chips now have ~20 Billion transistors, and with 7nm and 5nm it looks like we're getting some more doublings soon.