AMD Zen 2 Microarchitecture Analysis: Ryzen 3000 and EPYC Rome

by Dr. Ian Cutress on June 10, 2019 7:22 PM EST

Posted in: CPUs, AMD, Ryzen, EPYC, Infinity Fabric, PCIe 4.0, Zen 2, Rome, Ryzen 3000, Ryzen 3rd Gen

Fetch/Prefetch

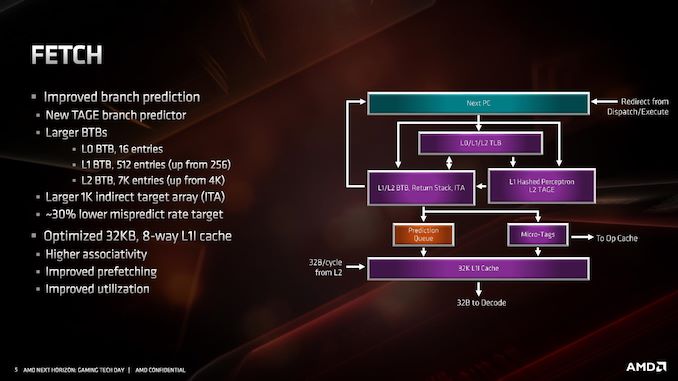

We start at the front end of the processor, with the prefetchers and branch predictors.

AMD’s primary advertised improvement here is the use of a TAGE predictor, although it is only used as the second-level (L2) branch predictor. This might not sound too impressive: AMD is still using a hashed perceptron engine for first-level (L1) predictions, which will cover as many fetches as possible, but the TAGE L2 branch predictor uses additional tagging to enable longer branch histories for better prediction pathways. This becomes more important for predictions that fall through to the L2 and beyond, with the hashed perceptron preferred for the short, fast L1 predictions on power grounds.
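To make the hashed perceptron idea more concrete, here is a minimal sketch of a perceptron-style branch predictor. It is a textbook-style toy, not AMD's design: the table size, history length, training threshold, and modulo hash are all illustrative assumptions, and `run_pattern` is a hypothetical helper added only to exercise it.

```python
class HashedPerceptronPredictor:
    """Toy perceptron branch predictor. All sizes are illustrative assumptions."""

    def __init__(self, table_size=64, history_len=8, threshold=16):
        self.table_size = table_size
        self.threshold = threshold
        # One weight vector per row: a bias weight plus one weight per history bit.
        self.weights = [[0] * (history_len + 1) for _ in range(table_size)]
        # Global branch history as +1 (taken) / -1 (not taken), most recent last.
        self.history = [-1] * history_len

    def _row(self, pc):
        # Hash the branch address into the weight table (assumed: simple modulo).
        return (pc >> 2) % self.table_size

    def _output(self, pc):
        w = self.weights[self._row(pc)]
        return w[0] + sum(wi * hi for wi, hi in zip(w[1:], self.history))

    def predict(self, pc):
        # A non-negative dot product means "predict taken".
        return self._output(pc) >= 0

    def update(self, pc, taken):
        y = self._output(pc)
        t = 1 if taken else -1
        # Train on a misprediction, or when the output is not yet confident.
        if (y >= 0) != taken or abs(y) <= self.threshold:
            w = self.weights[self._row(pc)]
            w[0] += t
            for i, hi in enumerate(self.history):
                w[i + 1] += t * hi
        # Shift the actual outcome into the global history.
        self.history = self.history[1:] + [t]


def run_pattern(predictor, pc, outcomes):
    """Hypothetical helper: returns one True/False per branch (prediction correct?)."""
    results = []
    for taken in outcomes:
        results.append(predictor.predict(pc) == taken)
        predictor.update(pc, taken)
    return results


# A branch that strictly alternates taken / not taken.
alternating = [i % 2 == 0 for i in range(200)]
results = run_pattern(HashedPerceptronPredictor(), 0x1000, alternating)
```

After a short warm-up the weights correlate each outcome with the recent history, so the alternating branch is predicted correctly. A TAGE-style predictor instead keeps several tagged tables indexed with progressively longer histories, which this sketch does not attempt to model.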

In the front end we also get larger BTBs, to help keep track of instruction branches and cache requests. The L1 BTB has doubled in size from 256 entries to 512 entries, and the L2 BTB has almost doubled, from 4K to 7K entries. The L0 BTB stays at 16 entries, but the indirect target array goes up to 1K entries. Overall, according to AMD, these changes afford a 30% lower mispredict rate, which saves power.
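As a rough illustration of why more BTB entries help, the sketch below models a direct-mapped branch target buffer and counts hits for two branches whose addresses collide in a 256-entry table but not in a 512-entry one. The direct-mapped organization, the index function, and the addresses are assumptions chosen for the example. Real BTBs are typically set-associative, and nothing here reflects AMD's actual indexing.

```python
class DirectMappedBTB:
    """Toy direct-mapped branch target buffer. The indexing scheme is an assumption."""

    def __init__(self, entries):
        self.entries = entries
        # Each slot holds (branch_pc, target) or None when empty.
        self.table = [None] * entries

    def _index(self, pc):
        return (pc >> 2) % self.entries

    def lookup(self, pc):
        slot = self.table[self._index(pc)]
        if slot is not None and slot[0] == pc:
            return slot[1]
        return None  # miss: empty slot or a different branch lives here

    def insert(self, pc, target):
        self.table[self._index(pc)] = (pc, target)


def count_hits(btb, branches, rounds=4):
    """Replay the same branches several times and count BTB hits."""
    hits = 0
    for _ in range(rounds):
        for pc, target in branches:
            if btb.lookup(pc) == target:
                hits += 1
            else:
                btb.insert(pc, target)
    return hits


# Two hypothetical branches that map to the same slot in a 256-entry table.
branches = [(0x1000, 0x2000), (0x1400, 0x3000)]
hits_256 = count_hits(DirectMappedBTB(256), branches)
hits_512 = count_hits(DirectMappedBTB(512), branches)
```

In the 256-entry table the two branches keep evicting each other, so every lookup misses. In the 512-entry table they land in different slots and hit on every pass after the first.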

One other major change is the L1 instruction cache. We noted that it is smaller for Zen 2: only 32 KB rather than 64 KB. However, the associativity has doubled, from 4-way to 8-way. Given the way a cache works, these two effects ultimately don’t cancel each other out, but the 32 KB L1-I cache should be more power efficient and see higher utilization. The L1-I cache hasn’t just shrunk in isolation: one of the benefits of reducing the size of the I-cache is that it has allowed AMD to double the size of the micro-op cache. These two structures are next to each other inside the core, and so even at 7nm we have an instance of space limitations causing a trade-off between structures within a core. AMD stated that this configuration, the smaller L1 with the larger micro-op cache, ended up being better in more of the scenarios it tested.
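The geometry behind that trade-off can be worked out with a few lines of arithmetic. The sketch below assumes a 64-byte cache line and the 4 KB base x86 page size; the function name and the returned fields are made up for this example.

```python
LINE_BYTES = 64    # assumed cache line size
PAGE_BYTES = 4096  # base x86 page size


def cache_geometry(size_kb, ways, line_bytes=LINE_BYTES):
    """Return the number of sets and the bytes covered by a single way."""
    size = size_kb * 1024
    sets = size // (ways * line_bytes)
    way_bytes = size // ways
    return {
        "sets": sets,
        "way_bytes": way_bytes,
        # True when one way spans no more than one 4 KB page.
        "way_fits_in_page": way_bytes <= PAGE_BYTES,
    }


zen1_l1i = cache_geometry(64, 4)  # 64 KB, 4-way
zen2_l1i = cache_geometry(32, 8)  # 32 KB, 8-way
```

Under these assumptions the 64 KB 4-way cache has 256 sets of 4 lines, with each way covering 16 KB, while the 32 KB 8-way cache has 64 sets of 8 lines, with each way covering exactly 4 KB. Halving the capacity while doubling the ways therefore cuts the set count by a factor of four, and brings the per-way size down to a single page.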

216 Comments


mikato - Tuesday, June 11, 2019

Hehe, yeah I saw that. That was a good one for the marketing team or whoever makes the slides.

Atari2600 - Wednesday, June 12, 2019

No, for each of those line items they should have said "Intel only"

zalves - Tuesday, June 11, 2019

I really don't understand how one can compare these AMD CPU's with Intel's HEDT, they lack PCIe Lanes and don't support quad-channel memory. And that a huge deal breaker for anyone that wants and needs some serious IO and multi tasking.

TheUnhandledException - Tuesday, June 11, 2019

Well that is what Threadripper is for. Can't wait to see the 3000 series Threadrippers.

John_M - Tuesday, June 11, 2019

So, 5th generation EPYC codename is going to be either Turin, Bolognia or Florence as Palermo has already been used for Sempron.

John_M - Tuesday, June 11, 2019

*that's Bologna, of course. It would be nice to be able to edit posts for typos.

WaltC - Tuesday, June 11, 2019

Great read!

John_M - Tuesday, June 11, 2019

What is the advantage in halving the L1 instruction cache? Was the change forced by the doubling of its associativity? According to the (I suspect somewhat oversimplified) Wikipedia article on CPU Cache, doubling the associativity increases the probability of a hit by about the same amount as doubling the cache size, but with more complexity. So how is this Zen2 configuration better than that in Zen and Zen+?

John_M - Tuesday, June 11, 2019

Ah! It's sort of explained at the bottom of page 7. I had glossed over that because the first two paragraphs were too technical for my understanding. I see that it was halved to make room for something else to be made bigger, which on balance seems to be a successful trade off.

arnd - Wednesday, June 12, 2019

More importantly, 32K 8-way is a sweet spot for an L1 cache. This is what AMD is using for the D$ already and what all modern Intel L1 caches (both I and D) are. With eight ways, this is the largest size you can have for a non-aliasing virtually indexed cache using the 4KB page size of the x86 architecture. Having more than eight ways has diminishing returns, so going beyond 32KB requires extra complexity for dealing with aliasing or physically indexed caches like the L2.