HiSilicon Kirin 970 - Android SoC Power & Performance Overview

by Andrei Frumusanu on January 22, 2018 9:15 AM EST

GPU Performance

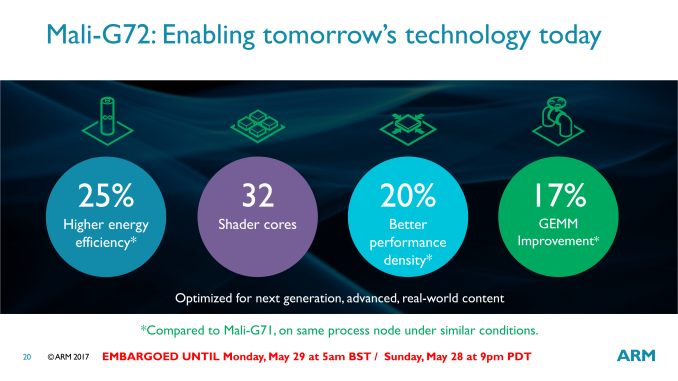

For 3D graphics and games, the Kirin 970 is the first SoC to make use of ARM’s second-generation Bifrost GPU architecture, the Mali G72 (codenamed Heimdall). The new IP is an evolutionary update over last year’s Mali G71, with density and efficiency improvements.

The density increase as well as the process node shrink allowed HiSilicon to increase the GPU core count by 50%, from 8 to 12, while still reducing the absolute silicon area of the GPU complex. There is no mincing words about last year’s G71 performance: the GPU unfortunately came nowhere near the efficiency goals projected by ARM, in either the Exynos 8895 or the Kirin 960. The Kirin 960 was especially remarkable in that devices powered by it reached until-then unheard-of average power figures at the peak performance states, at around the 9W mark for the Mate 9. I still remember that 2 years ago I had praised HiSilicon for implementing a GPU conservative enough that it could properly sustain its maximum performance state within the device thermal envelope, staying below 4W. Nevertheless, before continuing the power discussion with any figures for the Kirin 970, let’s go over the peak performance figures of the most commonly used industry 3D benchmarks.

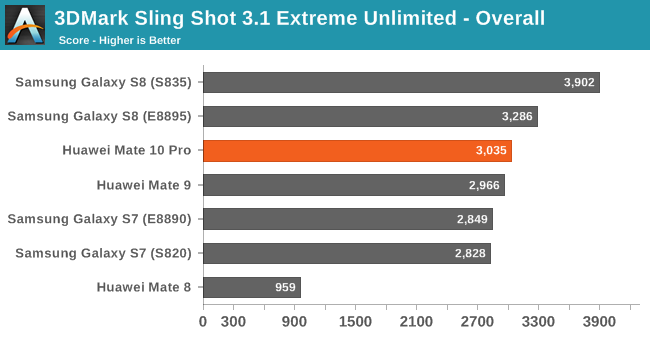

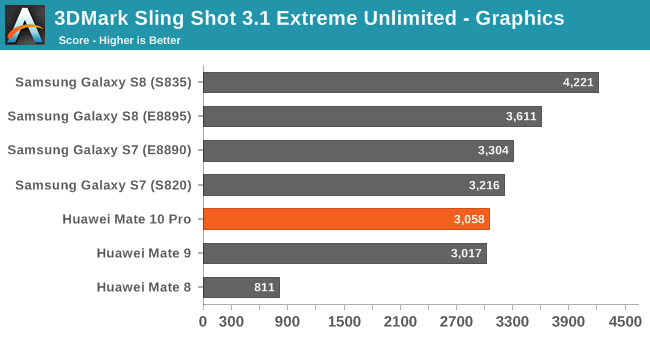

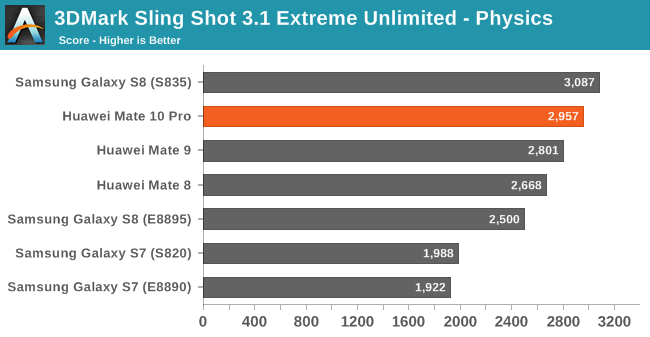

In 3DMark Sling Shot 3.1 Extreme Unlimited we see the G72 on the Kirin 970, oddly enough, not improving at all. I ran the benchmark several times and made sure thermals weren’t the cause, but the phone still wasn’t able to increase performance over the Kirin 960, save for a small increase in the physics score. I’m not yet sure what the cause is here. I wasn’t able to monitor GPU frequency as I haven’t rooted the device yet, so I can’t tell whether it’s using some kind of limitation mechanism.

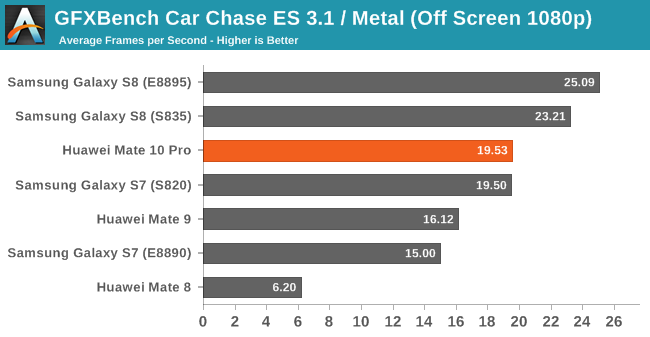

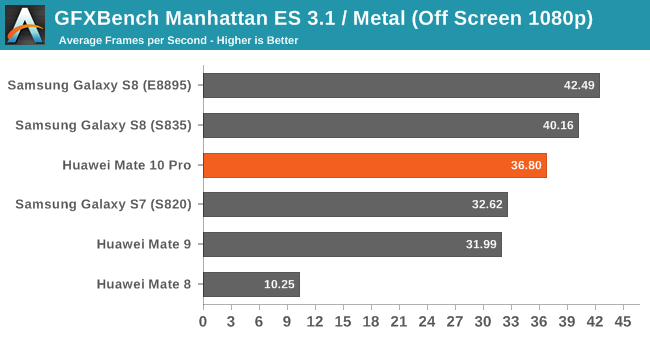

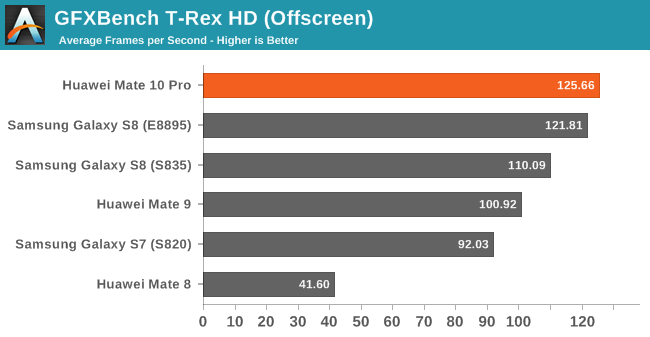

Moving on to Kishonti’s GFXBench we see the Kirin 970 achieve its theoretical gains of 15-20%. As a reminder, while the GPU core count increased 50% from 8 to 12 cores, the maximum frequency has been cut sharply from 1033MHz down to 746MHz, leaving only a more marginal performance upgrade to be expected.
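A quick back-of-the-envelope check helps put that 15-20% figure in context. The sketch below assumes, as a simplification not made in the article itself, that peak GPU throughput scales linearly with core count times clock frequency; the function name is purely illustrative.

```python
def raw_throughput_ratio(cores_new, mhz_new, cores_old, mhz_old):
    """Ratio of (cores x clock) between two GPU configurations.

    Simplifying assumption: throughput scales linearly with both core
    count and frequency, ignoring architecture, memory and thermals.
    """
    return (cores_new * mhz_new) / (cores_old * mhz_old)


# Kirin 970 (12 cores @ 746MHz) vs. Kirin 960 (8 cores @ 1033MHz)
ratio = raw_throughput_ratio(12, 746, 8, 1033)
print(f"Raw cores x clock change: {(ratio - 1) * 100:+.1f}%")  # +8.3%
```

Under that assumption the wider, slower configuration carries only about 8% more raw throughput, so the remainder of the observed gain would have to come from elsewhere, plausibly the G72's per-core improvements.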

The Kirin 970’s G72MP12 ends up slightly below the Exynos 8895’s G71MP20 and the Snapdragon 835’s Adreno 540 in more compute-bound workloads such as Manhattan 3.1 or Car Chase. In T-Rex the GPU has a slight lead over the Exynos 8895, but only when the device is cool, as it quickly starts throttling down from its maximum frequencies at slightly more elevated temperatures.

GPU Power Efficiency

GFXBench Manhattan 3.1 Offscreen Power Efficiency (System Active Power)

| Device (SoC) | Mfc. Process | FPS | Avg. Power (W) | Perf/W Efficiency |
|---|---|---|---|---|
| Galaxy S8 (Snapdragon 835) | 10LPE | 38.90 | 3.79 | 10.26 fps/W |
| LeEco Le Pro3 (Snapdragon 821) | 14LPP | 33.04 | 4.18 | 7.90 fps/W |
| Galaxy S7 (Snapdragon 820) | 14LPP | 30.98 | 3.98 | 7.78 fps/W |
| Huawei Mate 10 (Kirin 970) | 10FF | 37.66 | 6.33 | 5.94 fps/W |
| Galaxy S8 (Exynos 8895) | 10LPE | 42.49 | 7.35 | 5.78 fps/W |
| Meizu PRO 5 (Exynos 7420) | 14LPE | 14.45 | 3.47 | 4.16 fps/W |
| Nexus 6P (Snapdragon 810 v2.1) | 20SoC | 21.94 | 5.44 | 4.03 fps/W |
| Huawei Mate 8 (Kirin 950) | 16FF+ | 10.37 | 2.75 | 3.77 fps/W |
| Huawei Mate 9 (Kirin 960) | 16FFC | 32.49 | 8.63 | 3.77 fps/W |
| Huawei P9 (Kirin 955) | 16FF+ | 10.59 | 2.98 | 3.55 fps/W |

In terms of average platform active power consumption, the Mate 10 shows a significant improvement over last year’s Mate 9. In Manhattan we go down from 8.63W to 6.33W. In terms of efficiency at similar peak performance, the Kirin 970 manages to only slightly outpace the Exynos 8895 and its Mali G71. The architectural improvements that the G72 promises to bring are counteracted by the fact that the Exynos uses more cores at lower frequencies (and more efficient voltages), with both ending up at a similar performance and efficiency point. The same effect applies between the Kirin 960 and 970, but in reverse: here the addition of more cores at a lower frequency amplifies the process and architectural efficiency gains over the G71, resulting in an absolute efficiency gain of 57% at peak performance, which comes near Huawei’s stated claim of a 50% efficiency gain. It’s to be noted that the true efficiency gain at the same performance point is likely near the 100% mark, meaning that at the Kirin 960’s peak performance level the Kirin 970’s G72 implementation will be nearly twice as efficient.

Whilst this all might sound optimistic in terms of performance and efficiency gains, it’s all rather meaningless as the Mate 10 and Kirin 970 average power drains are still far above sustainable thermal envelopes at 6.3W.
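The perf/W figures and the peak-efficiency gain quoted above follow directly from the Manhattan 3.1 table. As a minimal sketch (the helper names are illustrative; the FPS and power values are copied from the table), the arithmetic looks like this:

```python
# (fps, average system active power in W) from the Manhattan 3.1 table
manhattan = {
    "Kirin 970": (37.66, 6.33),
    "Kirin 960": (32.49, 8.63),
    "Exynos 8895": (42.49, 7.35),
}


def fps_per_watt(fps, watts):
    """Perf/W efficiency as used in the table's last column."""
    return fps / watts


def gain_percent(new, old):
    """Relative change of `new` over `old`, in percent."""
    return (new / old - 1) * 100


eff = {soc: fps_per_watt(*row) for soc, row in manhattan.items()}
vs_960 = gain_percent(eff["Kirin 970"], eff["Kirin 960"])
vs_8895 = gain_percent(eff["Kirin 970"], eff["Exynos 8895"])

print(f"Kirin 970 vs. Kirin 960:   {vs_960:+.1f}% fps/W")
print(f"Kirin 970 vs. Exynos 8895: {vs_8895:+.1f}% fps/W")
```

Working from the unrounded FPS and power inputs gives a gain of about 58% over the Kirin 960, marginally above the 57% obtained from the rounded fps/W column, and a lead of only about 3% over the Exynos 8895.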

GFXBench T-Rex Offscreen Power Efficiency (System Active Power)

| Device (SoC) | Mfc. Process | FPS | Avg. Power (W) | Perf/W Efficiency |
|---|---|---|---|---|
| Galaxy S8 (Snapdragon 835) | 10LPE | 108.20 | 3.45 | 31.31 fps/W |
| LeEco Le Pro3 (Snapdragon 821) | 14LPP | 94.97 | 3.91 | 24.26 fps/W |
| Galaxy S7 (Snapdragon 820) | 14LPP | 90.59 | 4.18 | 21.67 fps/W |
| Galaxy S8 (Exynos 8895) | 10LPE | 121.00 | 5.86 | 20.65 fps/W |
| Galaxy S7 (Exynos 8890) | 14LPP | 87.00 | 4.70 | 18.51 fps/W |
| Huawei Mate 10 (Kirin 970) | 10FF | 127.25 | 7.93 | 16.04 fps/W |
| Meizu PRO 5 (Exynos 7420) | 14LPE | 55.67 | 3.83 | 14.54 fps/W |
| Nexus 6P (Snapdragon 810 v2.1) | 20SoC | 58.97 | 4.70 | 12.54 fps/W |
| Huawei Mate 8 (Kirin 950) | 16FF+ | 41.69 | 3.58 | 11.64 fps/W |
| Huawei P9 (Kirin 955) | 16FF+ | 40.42 | 3.68 | 10.98 fps/W |
| Huawei Mate 9 (Kirin 960) | 16FFC | 99.16 | 9.51 | 10.42 fps/W |

Again on T-Rex, which is less ALU heavy and more texture, fill-rate and triangle-rate bound, we see the Kirin 970 reach impressive performance levels at impressively bad power figures. At 7.93W the phone doesn’t seem able to sustain the peak frequencies for long, as even on a second consecutive run we see performance go down as thermal throttling kicks in. So while the Kirin 970 slightly outpaces the Exynos 8895 in performance, it does so at roughly 22% lower efficiency (16.04 vs. 20.65 fps/W).

As dire as the previous paragraph might sound, against the Kirin 960 it’s again a vast improvement. So disastrous was the peak power of the Mate 9 that even at 28% higher peak performance, the Mate 10 still manages to be 53% more efficient, again validating Huawei’s marketing claims. At iso-performance I again estimate that the Kirin 970 is likely nearly twice as efficient as the Kirin 960.
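The T-Rex comparisons can be reproduced the same way, this time reporting performance and efficiency deltas side by side. This is only a sketch: the `Result` class and `compare` helper are hypothetical names introduced for illustration, and the inputs are the FPS and average power values from the T-Rex table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    fps: float
    watts: float  # average system active power

    @property
    def efficiency(self):
        """Perf/W in fps per watt."""
        return self.fps / self.watts


def compare(a, b):
    """Return (performance delta %, efficiency delta %) of a relative to b."""
    perf = (a.fps / b.fps - 1) * 100
    eff = (a.efficiency / b.efficiency - 1) * 100
    return perf, eff


kirin_970 = Result(127.25, 7.93)
rivals = {
    "Kirin 960": Result(99.16, 9.51),
    "Exynos 8895": Result(121.00, 5.86),
}

for name, other in rivals.items():
    perf, eff = compare(kirin_970, other)
    print(f"Kirin 970 vs. {name}: performance {perf:+.1f}%, efficiency {eff:+.1f}%")
```

With the table values this works out to roughly +28% performance and +54% efficiency against the Kirin 960, and about +5% performance at about 22% lower efficiency against the Exynos 8895.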

In all this you’ll have probably noticed Qualcomm consistently at the top of the charts. Indeed, over the last few generations it seems Qualcomm is the only company that has managed to increase performance through architectural and process node improvements without blowing up the power budget. On the contrary, Qualcomm seems able to steadily lower average power generation after generation, reaching an extremely impressive 3.5-3.8W on the Snapdragon 835. It’s widely quoted that a mobile GPU’s power budget is 1.5-2W, but over the last few years the only high-end GPU able to achieve that seems to be Adreno, and the gap seems to be widening with every generation.

In my review of the Mate 8 there were a lot of users in the comments section who still deemed the performance of the T880MP4 in the Kirin 950 unsatisfactory and uncompetitive. Unfortunately this view is the common notion among most users and most media, and it was one of the main complaints about Huawei devices in the past. Today Huawei is able to compete at the top of the benchmarks, but at a rather ghastly hidden cost in efficiency and unsustainable power that is, to be perfectly honest, a lot harder to test and to communicate to users.

AnandTech is also partly guilty here; you just have to look at the top of the page. I really shouldn’t have published those performance benchmarks, as they’re outright misleading and reward the misplaced design decisions made by the silicon vendors. I’m still not sure what to do here or on whom the onus falls. As long as vendors refrain from configuring devices with unreachable and unsustainable performance states on 3D workloads and keep within reasonable levels, the whole topic becomes a non-issue. If things don’t improve then we’ll have to take a hard look at how to handle these situations. I’m considering simply no longer posting any GPU peak performance figures in device reviews and keeping them in separate, more technical SoC pieces such as this one.

Overall I think we’re at a critical point in time for the mobile GPU landscape. Qualcomm currently holds such an enormous lead in performance, density and efficiency that other silicon vendors who rely on IP vendors for their GPUs are in a tight and precarious situation in terms of their ability to offer competitive products. I see this as a key catalyst for why Apple has stated it plans to abandon Imagination as its GPU IP provider in upcoming SoCs, and why Samsung has accelerated efforts to replace Mali and introduce its in-house S-GPU, maybe as early as 2019. Over the course of the next 2 years we’ll be seeing some exciting shake-ups of the SoC GPU space, that’s for sure.

116 Comments

StormyParis - Monday, January 22, 2018 - link

If the Modem IP is Huawei's one true in-house part, why didn't you at least test it alongside the CPU and GPU? I'd assume in the real world, it too has a large impact on battery and performance?

Ian Cutress - Monday, January 22, 2018 - link

The kit to properly test a modem power/attenuation to battery is around $50-100k. We did borrow one once, a few years ago, but it was only a short-term loan. Not sure if/when we'll be able to do that testing again.

juicytuna - Monday, January 22, 2018 - link

How does Mali have so many design wins? Why did Samsung switch from PowerVR to Mali? Cost savings? Politics? Because it clearly wasn't a decision made on technical merit.

lilmoe - Tuesday, January 23, 2018 - link

Because OEMs like Samsung are not stupid? And Mali is actually very power efficient and competitive?

What are you basing your GPU decision on? Nothing in the articles provides evidence that Mali is less efficient than Adreno in UI acceleration or 60fps capped popular games (or even 60fps 1080p normalized T-Rex benchmark)...

Measuring the constant power draw of the GPU, which is supposed to be reached in very short bursts, is absolutely meaningless.

lilmoe - Tuesday, January 23, 2018 - link

***Measuring the max (constant) power draw of the GPU, which is supposed to be reached in very short bursts during a workload, is absolutely meaningless.

jospoortvliet - Saturday, January 27, 2018 - link

Your argument is half-way sensible for a CPU but not for a GPU.

A GPU should not even HAVE a boost clock - there is no point in that for typical GPU workloads. Where a CPU is often active in bursts, a GPU has to sustain performance in games - normal UI work barely taxes it anyway.

So yes the max sustained performance and associated efficiency is ALL that matters. And MALI, at least in the implementations we have seen, is behind.

lilmoe - Sunday, January 28, 2018 - link

I think you're confusing fixed function processing with general purpose GPUs. Modern GPU clocks behave just like CPU cores, and yes, with bursts, just like NVidia's and AMD's. Not all scenes rendered in a game, for example, need the same GPU power, and not all games have the same GPU power needs.

Yes, there is a certain performance envelope that most popular games target. That performance envelope/target is definitely not SlingShot nor T-Rex.

This is where Andrei's and your argument crumbles. You need to figure out that performance target and measure efficiency and power draw at that target. That's relatively easy to do; open up candy crush and asphalt 8 and measure in screen fps and power draw. That's how you measure efficiency on A SMARTPHONE SoC. Your problem is that you think people are using these SoCs like they would on a workstation. They don't. No one is going to render a 3dmax project on these phones, and there are no games that even saturate last year's flagship mobile gpu.

Not sure if you're not getting my simple and sensible point, or you're just being stubborn about it. Mobile SoC designers have argued for bursty GPU behavior for years. You guys need to get off your damn high horse and stop deluding yourself into thinking that you know better. What Apple or Qualcomm do isn't necessarily best, but it might be best for the GPU architecture THEY'RE using.

As for the CPU, you agree but Andrei insists on making the same mistake. You DON'T measure efficiency at max clocks. Again, max clocks are used in bursts and only for VERY short periods of time. You measure efficiency by measuring the time it takes to complete a COMMON workload and the total power it consumes at that. Another hint, that common workload is NOT geekbench, and it sure as hell isn't SPEC.

lilmoe - Sunday, January 28, 2018 - link

The A75 is achieving higher performance mostly with higher clocks. The Exynos M3 is a wide core WITH higher clocks. Do you really believe these guys are idiots? You really think that's going to affect efficiency negatively? You think Android OEMs will make the same "mistake" Apple did and not provide adequate and sustainable power delivery?

Laughable.

futrtrubl - Monday, January 22, 2018 - link

"The Kirin 970 in particular closes in on the efficiency of the Snapdragon 835, leapfrogging the Kirin 960 and Exynos SoCs."

Except according to the chart right above it the 960 is still more efficient.

Andrei Frumusanu - Monday, January 22, 2018 - link

The efficiency axis is portrayed as energy (joules) per performance (test score). In this case the less energy used, the more efficient, meaning the shorter the bars, the better.