The NVIDIA GeForce GTX 1070 Ti Founders Edition Review: GP104 Comes in Threes

by Nate Oh on November 2, 2017 9:00 AM EST

Posted in: GPUs, GeForce, NVIDIA, Pascal, GTX 1070 Ti

Power, Temperature, & Noise

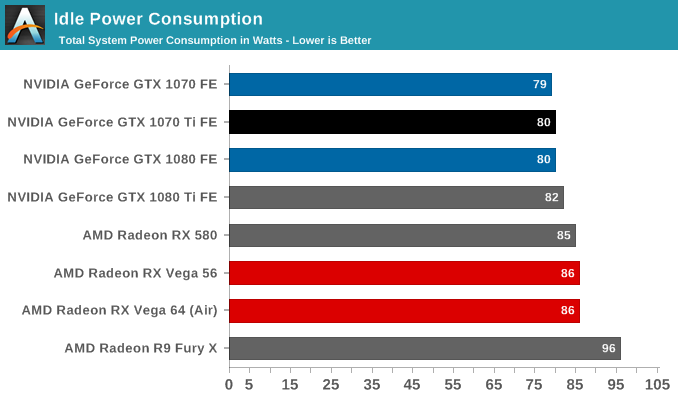

As always, we'll take a look at the inter-related metrics of power, temperature, and noise. Noise in particular can make even a decently performing card undesirable, a situation that usually goes hand-in-hand with high power consumption and heat output. As this is a new GPU, we will quickly review the GeForce GTX 1070 Ti's stock voltages as well.

GeForce Video Card Voltages

| GTX 1070 Ti Boost | GTX 1070 Boost | GTX 1070 Ti Idle | GTX 1070 Idle |
| --- | --- | --- | --- |
| 1.062V | 1.062V | 0.65V | 0.625V |

With the exception of a slightly higher idle voltage, everything remains the same in comparison to the GTX 1080 and 1070. The idle voltage actually matches the GTX 1080 Ti's idle voltage, but this isn't particularly significant as it seems to vary from card to card. In comparison to previous generations, these voltages are considerably lower because of the FinFET process used, something we went over in detail in our GTX 1080 and 1070 Founders Edition review. As we said then, the 16nm FinFET process requires these low voltages, unlike previous planar nodes, so this can be limiting in scenarios where a lot of power and voltage are needed, i.e. high clockspeeds and overclocking.

Fortunately for NVIDIA, GP104 has always been able to clock exceptionally high, at least the good chips, so with a slight knock on efficiency in the form of a 180W TDP, the GTX 1070 Ti Founders Edition is also able to clock exceptionally high, and then a little more. I also suspect the maturity of TSMC's 16nm FinFET process is playing a part here, but the card's higher TDP and NVIDIA's clockspeed choices make it hard to validate that point.

GeForce Video Card Average Clockspeeds

| Game | GTX 1070 Ti | GTX 1070 |
| --- | --- | --- |
| Max Boost Clock | 1898MHz | 1898MHz |
| Battlefield 1 | 1826MHz | 1797MHz |
| Ashes: Escalation | 1838MHz | 1796MHz |
| DOOM | 1856MHz | 1780MHz |
| Ghost Recon Wildlands | 1840MHz | 1807MHz |
| Dawn of War III | 1848MHz | 1807MHz |
| Deus Ex: Mankind Divided | 1860MHz | 1803MHz |
| Grand Theft Auto V | 1865MHz | 1839MHz |
| F1 2016 | 1840MHz | 1825MHz |
| Total War: Warhammer | 1832MHz | 1785MHz |

The end result is that the GTX 1070 Ti is amusingly able to reverse the GeForce Founders Edition trend of decreased clocks with higher-performing models. That is, as we reviewed them, the GTX 1080 Ti maintained lower clockspeeds than the GTX 1080, which in turn had lower average clockspeeds than the vapor-chamberless GTX 1070, which had lower clockspeeds than the GTX 1060. Typically, that was the case due to extra hardware units, and thus extra power consumption and heat. But as we see in the GTX 1070 Ti Founders Edition, despite the extra SMs and such, the vapor chamber and higher TDP work well in allowing the GTX 1070 Ti to boost high. In fact, it clocks higher than the GTX 1070 FE across the board, though in relative terms the clockspeeds only come out to about 2% faster on average. In relation to the 1683MHz boost specification, the GTX 1070 Ti on average clocks nearly 10% above that in these games, though that is something we've already seen with previous Pascal cards. And we can also start to see the line of thinking that leads to framing the GTX 1070 Ti as an "overclocking monster."
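For readers who want to check the "about 2%" and "nearly 10%" figures, the sketch below recomputes them from the per-game average clockspeeds listed in the table. It assumes a simple unweighted mean across the nine games, which may not be exactly how the review's averages were derived; the names `clocks`, `BOOST_SPEC_MHZ`, and `pct_gain` are purely illustrative.

```python
from statistics import mean

# Per-game average clockspeeds in MHz, copied from the table:
# game -> (GTX 1070 Ti, GTX 1070)
clocks = {
    "Battlefield 1": (1826, 1797),
    "Ashes: Escalation": (1838, 1796),
    "DOOM": (1856, 1780),
    "Ghost Recon Wildlands": (1840, 1807),
    "Dawn of War III": (1848, 1807),
    "Deus Ex: Mankind Divided": (1860, 1803),
    "Grand Theft Auto V": (1865, 1839),
    "F1 2016": (1840, 1825),
    "Total War: Warhammer": (1832, 1785),
}

# Boost specification quoted in the text for the GTX 1070 Ti
BOOST_SPEC_MHZ = 1683


def pct_gain(new: float, base: float) -> float:
    """Return how far `new` sits above `base`, as a percentage."""
    return (new / base - 1) * 100


# Assumption: unweighted mean over the games listed above
ti_avg = mean(ti for ti, _ in clocks.values())
fe_avg = mean(fe for _, fe in clocks.values())

print(f"GTX 1070 Ti average: {ti_avg:.0f}MHz")
print(f"GTX 1070 average:    {fe_avg:.0f}MHz")
print(f"1070 Ti vs 1070:     +{pct_gain(ti_avg, fe_avg):.1f}%")
print(f"1070 Ti vs spec:     +{pct_gain(ti_avg, BOOST_SPEC_MHZ):.1f}%")
```

Under that assumption, the GTX 1070 Ti averages 1845MHz, which works out to a bit over 2% above the GTX 1070 FE and a little under 10% above the 1683MHz boost specification.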

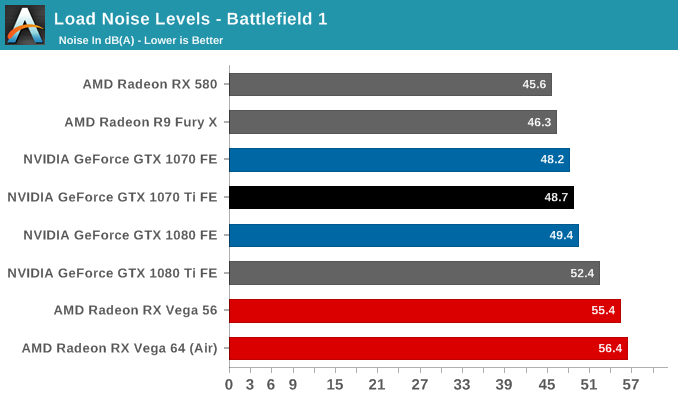

As those higher clockspeeds bear out in power, heat, and noise, there are no surprises here with the GTX 1070 Ti Founders Edition. We've already seen several variations in the aforementioned Founders Edition cards. Here, the blower and vapor chamber are more than adequate for what the card can put out, in turn lessening the work (and noise) the fan needs to do.

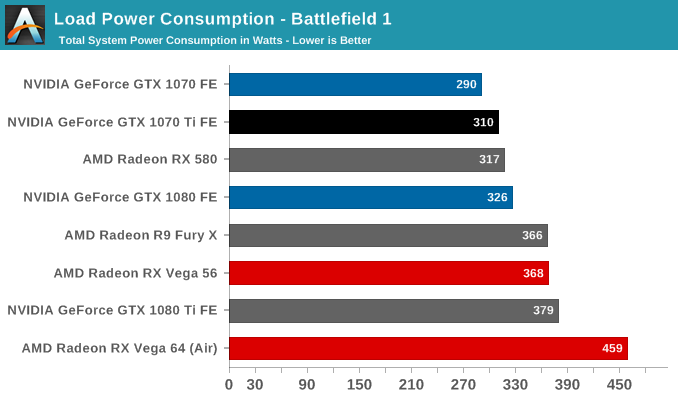

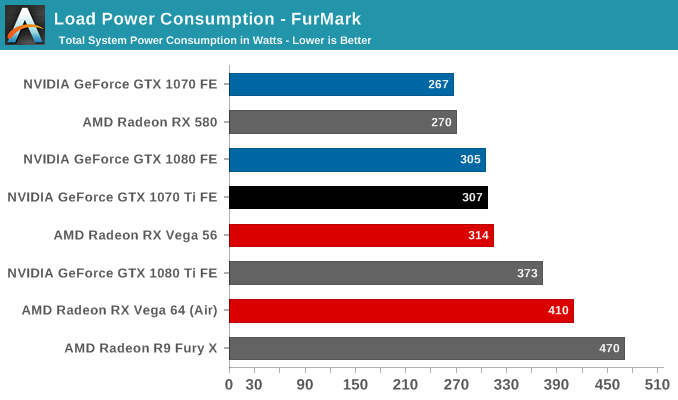

Given the higher 180W TDP, the card can also be closer to GTX 1080 levels of power consumption if need be, though with measurements at the wall, the accuracy of quantification is less than ideal. But in any case, the GTX 1070 Ti has no real necessity to be a power-sipper at this performance range, for which the crown has already gone to the GTX 1070. On top of that, drops in power efficiency will likely not be noticeable when compared to its RX Vega competition, or even just against RX Vega 56, whose Battlefield 1 power consumption at the wall is closer to the GTX 1080 Ti FE than the GTX 1080, let alone the GTX 1070 Ti FE.

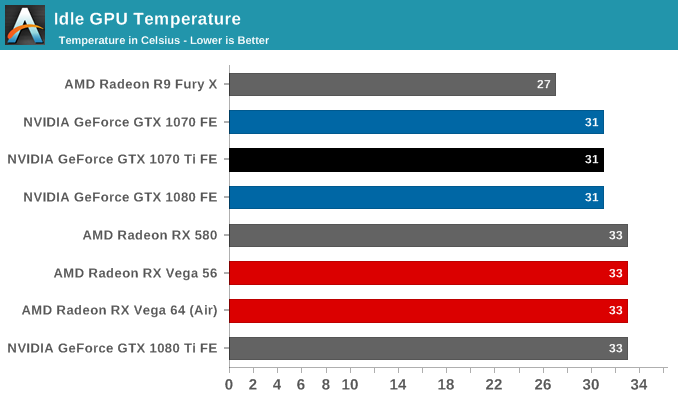

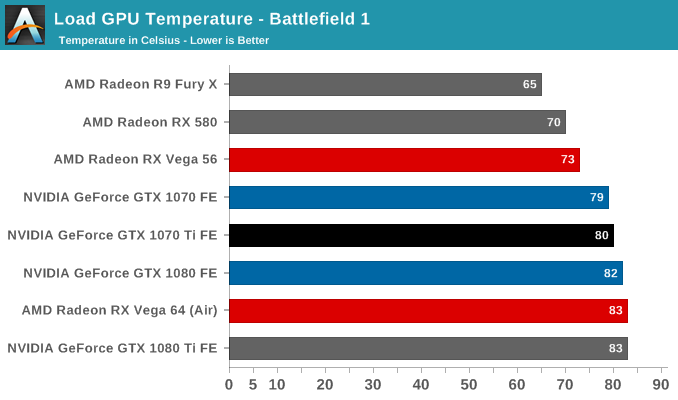

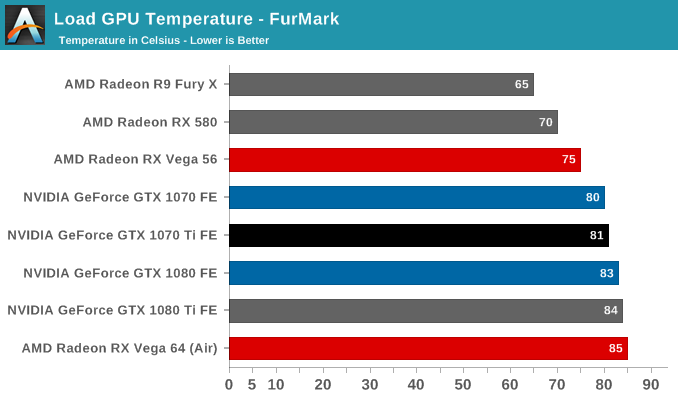

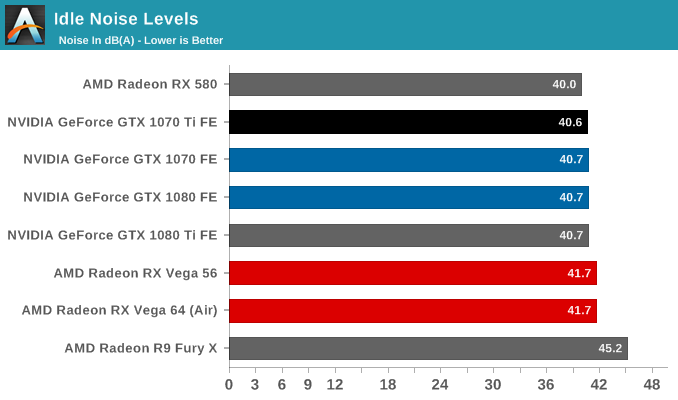

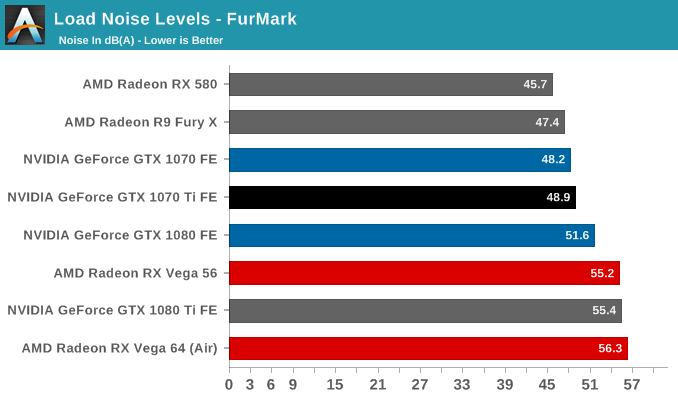

As far as heat and noise go, the GTX 1070 Ti FE has the same 83C throttle point as the GTX 1080 and 1070 FEs and will approach it under load, though with the GTX 1080's vapor chamber cooler it is kept a little cooler. This carries over to fan speeds and noise as well, particularly in the eternal power virus that is FurMark. So for both temperature and noise, the GTX 1070 Ti FE sits right in between the GTX 1080 and 1070 FEs.

78 Comments


Bp_968 - Tuesday, December 26, 2017 - link

I know this is an old article but I had to comment on the desktop space being "stagnant". That's mostly true for general consumers and corporate buyers but absolutely not true for med/higher end systems targeted at gamers (and miners). Those two markets are pushing massive demand for high performance desktop CPUs and GPUs (which you can see in AMD and NV's pricing and availability on their GPUs). The year over year performance improvement in CPUs is pretty boring right now but GPUs are still pretty exciting and still seem to have some big leaps left in them. I'm interested in seeing if Intel is going to be able to bring out a capable 3rd option in the discrete GPU space in the next few years. Their track record isn't good so far but having a 3rd player in the GPU/Compute space could really shake things up.

I'm with you on the Ryzen APUs. If AMD can manage to make an APU with 1050/1060 level performance that fits in a thin and light notebook that could be a real game changer for many of us. I've mostly given up on gaming laptops because they end up being more of a portable then a mobile system and for most things I do on a laptop I'd just prefer the really thin and light setup. If I can manage to get both thin and light *and* decent gaming performance (even if you need to be plugged in to maintain battery life when gaming) then that would be killer.

The only problem I see is the push for 4k monitors on these little laptops. No APU is going to be able to push 4k anytime soon, but I've never tried gaming at 1080p on a 4k panel and since its still a square pixel at 1080p, it might look fine, in which case it would be a non-issue.

Hxx - Thursday, November 2, 2017 - link

That just makes no sense and heres why. First off, If you're paying over MSRP then you are not paying Intel or Amd or Nvidia or whatever. You are paying that distributor or the retailer or whoever gets those cards from the manufacturer. Second, if the manufacturer quotes an MSRP then this price already covers the costs and whatever the manufacturer wants to charge you for.

It has nothing to do with whether or not you can afford it. Thats irrelevant. And if you're wondering WHY the vendor charges over MSRP is because 1) they can, 2) they know the demand is high so they know there are suckers like you who are willing to pay.

"Bullwinkle J Moose" - Thursday, November 2, 2017 - link

Higher prices would destroy AMD

To demand a higher price, they would need to compete with NVidia with cards that ran cooler/quieter, used less power and outperformed NVidia cards for the same price

That's just not happening anytime soon

Hixbot - Thursday, November 2, 2017 - link

The MSRP seems a bit high. The MSRP is a bit irrelevant as miners will push up the price even further.

For me, it's a still a good purchase because of the blower cooler which I need for my SFF PC, all other Founder Edition cards are unavailable.

Wwhat - Sunday, November 12, 2017 - link

I think any device relying on Nvidia or AMD drivers/software should reflect that, and they should not dare to sell anything over $500.

Samus - Thursday, November 2, 2017 - link

I’m glad I’m not the only one who finds it ridiculous that the mainstream cards are now $100-$150 more than they used to be...

These price brackets have just gotten ridiculous. I’d like to go back 15-20 years to when the most expensive cards were $200 (TNT2 Ultra :)

Hxx - Thursday, November 2, 2017 - link

It wasnt like this a year ago. This year we just have a couple phenomenons overlapping and the result is this...a market where the demand is high and supply is low. Maybe next year things will change but this year is not consistent with the past couple years where a videocard would drop significantly in price 6 months after release.

BrokenCrayons - Thursday, November 2, 2017 - link

I remember splurging on an original TNT with 16MB of VRAM. It was a Diamond Viper V550, I think...but paying $130 or so for it back then felt like an awful lot. It was passively cooled by a tiny little heatsink, but was among the highest performing video cards at the time. Heh, I did want to shove a pair of Voodoo2s in the system behind it for Glide titles. Anyhow, I think economic inflation can account for some of the cost increase in higher end video cards, but certainly not all of it.

webdoctors - Thursday, November 2, 2017 - link

The TNT2 was awesome and the best bang per buck at the time. However, the average price of gas (including state taxes) was $1.14 in 1999.

https://www.statista.com/statistics/204740/retail-...

Now its $2.14. The Feds have been printing money so fast its essentially become water and diluted. That's why gas and video cards are going up (its true inflation, not the one fed by propaganda outlets). Unless you switch to using the gold standard where it was $290 in 1999 and now its ~$1500.

https://goldprice.org/gold-price-usa.html

So really if you wanted to buy the TNT2 today, instead of $200 it would be $400+, in short get the gtx1060 6gb and be happy.

RiZad - Monday, November 6, 2017 - link

counting for inflation they are ~1% more expensive. based on the tnt2 ultra that launched at $299 not $199