Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST - Posted in

- CPUs

- AMD

- Intel

- Xeon

- Enterprise

- Skylake

- Zen

- Naples

- Skylake-SP

- EPYC

Intel's New On-Chip Topology: A Mesh

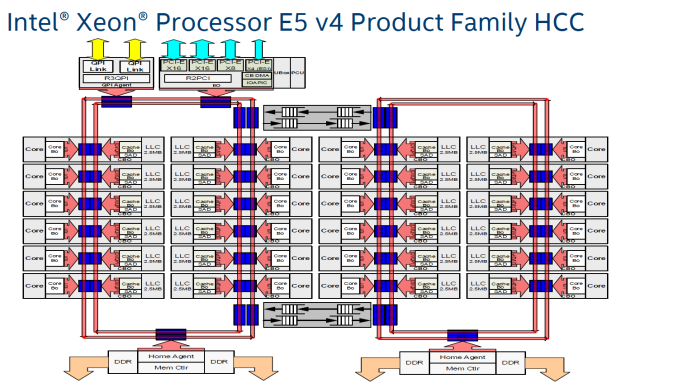

Since the introduction of the "Nehalem" CPU architecture – and the Xeon 5500 that started almost a decade-long reign for Intel in the datacenter – Intel's engineers have relied upon a low latency, high bandwidth ring to connect their cores with their caches, memory controllers, and I/O controllers.

A notable adjustment to Intel's ring topology came with the Ivy Bridge-EP (Xeon E5-2600 v2) family of CPUs. The top models were the first with three columns of cores connected by a dual ring bus, which used both outer and inner rings. The rings moved data in opposite directions (clockwise and counter-clockwise) to minimize latency, allowing data to take the shortest path to its destination. As data is brought onto the ring infrastructure, it must be scheduled so that it does not collide with data already on the ring.

The ring topology had a lot of advantages. It ran very fast, at up to 3 GHz. As a result, L3-cache latency was pretty low: if a core is lucky enough to find the data in its own cache slice, only one extra cycle is needed (on top of the normal L1-L2-L3 latency). Fetching a cacheline from another slice can cost up to 12 cycles, with an average cost of 6 cycles.
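The shortest-path behaviour of a bidirectional ring can be illustrated with a small toy model. This is a sketch under simplifying assumptions: the 12-stop ring, the function names, and the hop-only cost are hypothetical, and it ignores Intel's actual per-hop cycle costs and slot scheduling. It also shows why the average distance on such a ring is only a bit over half the worst case, the same general shape as the "up to 12 cycles, 6 on average" figures above.

```python
from __future__ import annotations


def ring_route(src: int, dst: int, n: int) -> tuple[str, int]:
    """Pick the shorter direction on a bidirectional ring of n stops.

    Returns ("cw", hops) or ("ccw", hops); ties go clockwise.
    """
    cw = (dst - src) % n   # hops if travelling clockwise
    ccw = (src - dst) % n  # hops if travelling counter-clockwise
    return ("cw", cw) if cw <= ccw else ("ccw", ccw)


def ring_stats(n: int) -> tuple[int, float]:
    """Worst-case and mean hop count over all ordered pairs of distinct stops."""
    hops = [ring_route(s, d, n)[1] for s in range(n) for d in range(n) if s != d]
    return max(hops), sum(hops) / len(hops)


if __name__ == "__main__":
    print(ring_route(1, 10, 12))  # ('ccw', 3): the other direction would take 9 hops
    worst, mean = ring_stats(12)
    print(f"12-stop ring: worst {worst} hops, mean {mean:.2f} hops")  # worst 6, mean 3.27
```

Choosing the direction per transfer is what keeps the worst case at half the ring rather than the full circumference.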

However, the ring model started to show its limits on the high core count versions of the Xeon E5 v3, which had no fewer than four columns of cores and LLC slices, making scheduling very complicated: Intel had to segregate the dual ring buses and integrate buffered switches. Keeping cache coherency performant also became increasingly complex: some applications gained quite a bit of performance by choosing the right snoop mode (or, conversely, lost a lot of performance if they didn't pick the right one). For example, our OpenFOAM benchmark performance improved by almost 20% by choosing "Home Snoop" mode, while many easy-to-scale, compute-intensive applications preferred "Cluster On Die" snooping mode.

In other words, 22 cores (24 on the E7), several PCIe controllers, and several memory controllers were close to the limit of what a dual ring could support. To support an even larger number of cores than the Xeon v4 family, Intel would have had to add a third ring, and connecting three rings with six columns of cores each would ultimately be overly complex.

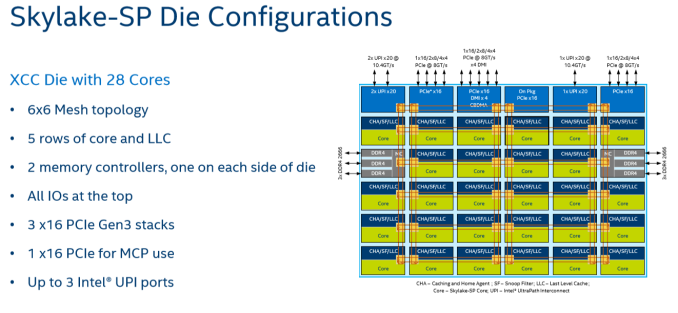

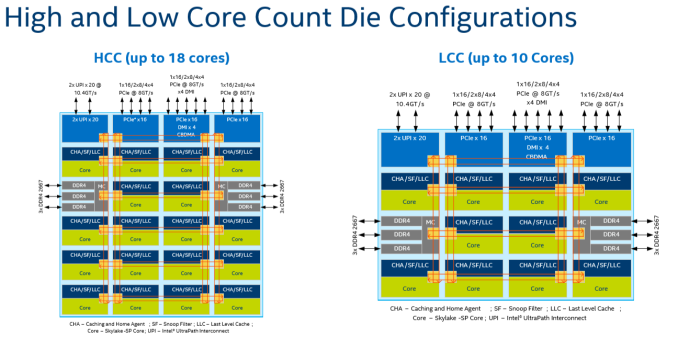

Given that, it shouldn't come as a surprise that Intel's engineers decided to use a different topology for Skylake-SP to connect up to 28 cores with the "uncore." Intel's new solution? A mesh architecture.

Under Intel's new topology, each node (a caching/home agent, a core, and a chunk of LLC) is interconnected via a mesh. Conceptually it is very similar to the mesh found on Xeon Phi, but not quite the same. In the long run the mesh is far more scalable than Intel's previous ring topology, allowing Intel to connect many more nodes in the future.
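The scalability argument can be made concrete by comparing worst-case hop counts (the network diameter). On a single bidirectional ring the diameter grows linearly with the number of stops; on a roughly square 2D mesh it grows with the square root. The sketch below is deliberately simplified: it assumes one flat ring rather than Intel's dual or segregated rings, and it counts hops, not cycles.

```python
import math


def ring_diameter(nodes: int) -> int:
    """Worst-case hops on a single bidirectional ring: halfway around."""
    return nodes // 2


def mesh_diameter(nodes: int) -> int:
    """Worst-case hops on the most nearly square rows x cols grid holding `nodes`."""
    cols = math.ceil(math.sqrt(nodes))
    rows = math.ceil(nodes / cols)
    return (rows - 1) + (cols - 1)  # corner to opposite corner


if __name__ == "__main__":
    for n in (16, 28, 64, 100):
        print(f"{n:>3} nodes: ring {ring_diameter(n):>2} hops, mesh {mesh_diameter(n):>2} hops")
```

With 28 nodes the toy ring needs up to 14 hops versus 9 for a 5 x 6 mesh, and the gap keeps widening as the node count grows.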

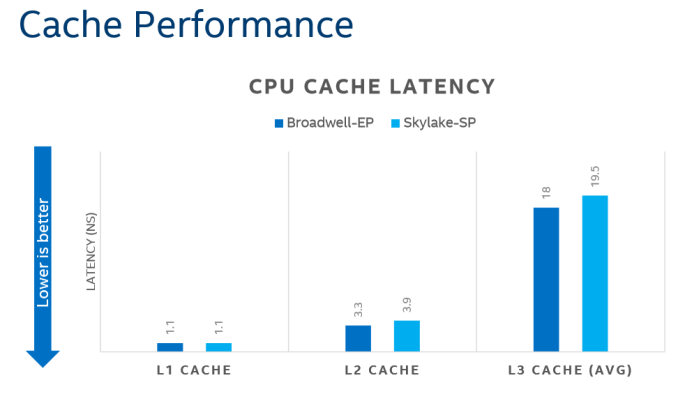

How does it compare to the ring architecture? The ring could run at up to 3 GHz, while the current mesh and L3-cache run at between 1.8 GHz and 2.4 GHz. On top of that, the mesh inside the top Skylake-SP SKUs has to support more cores, which further increases latency. Still, according to Intel, the average latency to the L3-cache is only 10% higher, and power usage is lower.
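The clock-speed point is simple arithmetic: at a clock of f GHz each cycle lasts 1/f nanoseconds, so the same cycle count costs more wall-clock time on a slower uncore. As an illustration only, the sketch reuses the ring's 6-cycle average quoted above as the input at each clock; it is not a measured mesh latency.

```python
def cycles_to_ns(cycles: float, clock_ghz: float) -> float:
    """Convert a latency in clock cycles to nanoseconds (1 cycle = 1/f ns at f GHz)."""
    return cycles / clock_ghz


if __name__ == "__main__":
    for label, ghz in (("ring, 3.0 GHz", 3.0), ("mesh, 2.4 GHz", 2.4), ("mesh, 1.8 GHz", 1.8)):
        # 6 cycles is used purely as an example input
        print(f"{label}: 6 cycles = {cycles_to_ns(6, ghz):.2f} ns")
```

Six cycles take 2.00 ns at 3.0 GHz but 2.50 ns at 2.4 GHz and about 3.33 ns at 1.8 GHz, so a mesh at a lower clock has to spend fewer cycles per access just to match the ring in nanoseconds.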

A core that accesses a nearby L3-cache slice (such as one directly above or below it) incurs an additional latency of 1 cycle per hop. An access to a cache slice that is 2 hops away vertically needs 2 cycles, and one that is 2 hops away horizontally needs 3 cycles. A core at the bottom that needs to access a cache slice at the top needs only 4 cycles. Horizontally, you get a latency of 9 cycles at most. So despite the fact that this mesh connects 6 more cores than Broadwell-EP, it delivers an average latency in the same ballpark as (or even slightly better than) the former's dual ring architecture with 22 cores (6 cycles average).

Meanwhile the worst-case scenario, getting data from the top-right node to the bottom-left node, should take around 13 cycles. And before you get too concerned about that number, keep in mind that it compares very favorably with any off-die communication between the different dies in (AMD's) Multi Chip Module (MCM), with Skylake-SP's latency being around one-tenth of EPYC's. It is crystal clear that there will be some situations where Intel's server chip scales better than AMD's solution.
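The per-hop figures above fit a simple additive model: about 1 cycle per vertical hop and about 1.5 cycles per horizontal hop (2 horizontal hops = 3 cycles). The sketch assumes that model and that the vertical and horizontal legs of a route simply add up. The grid extents (4 vertical hops, 6 horizontal hops corner to corner) are hypothetical, chosen only so the model reproduces the 4-cycle and 9-cycle maxima quoted above; they are not a description of the real Skylake-SP floorplan.

```python
from __future__ import annotations

VERTICAL_CYCLES_PER_HOP = 1.0    # "1 cycle per hop" vertically
HORIZONTAL_CYCLES_PER_HOP = 1.5  # 2 horizontal hops = 3 cycles

# Hypothetical grid extents, picked to match the quoted maxima (not the die layout).
MAX_ROW, MAX_COL = 4, 6


def mesh_extra_latency(src: tuple[int, int], dst: tuple[int, int]) -> float:
    """Extra cycles for a core at src=(row, col) to reach the LLC slice at dst.

    Row 0 is the top of the grid. Assumes the vertical and horizontal legs add linearly.
    """
    vertical_hops = abs(src[0] - dst[0])
    horizontal_hops = abs(src[1] - dst[1])
    return (vertical_hops * VERTICAL_CYCLES_PER_HOP
            + horizontal_hops * HORIZONTAL_CYCLES_PER_HOP)


if __name__ == "__main__":
    print(mesh_extra_latency((0, 0), (2, 0)))              # 2 hops vertically -> 2.0
    print(mesh_extra_latency((0, 0), (0, 2)))              # 2 hops horizontally -> 3.0
    print(mesh_extra_latency((MAX_ROW, 0), (0, 0)))        # bottom to top -> 4.0
    print(mesh_extra_latency((0, 0), (0, MAX_COL)))        # full width -> 9.0
    print(mesh_extra_latency((0, MAX_COL), (MAX_ROW, 0)))  # top-right to bottom-left -> 13.0
```

Under these assumptions the worst case lands at 4 + 9 = 13 cycles, in line with the roughly 13 cycles quoted above.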

There are other advantages that help Intel's mesh scale: for example, caching and home agents are now distributed, with each core getting one. This reduces both snoop traffic and snoop latency. The number of snoop modes is also reduced: you no longer need to choose between home snoop and early snoop. A "cluster-on-die" mode is still supported, now called sub-NUMA Cluster or SNC. With SNC you can divide the huge Intel server chips into two NUMA domains to lower LLC latency (at the potential cost of a lower hit rate) and limit snoop broadcasts to one SNC domain.
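Why SNC lowers LLC latency can be seen with the same kind of toy model: if a core only uses the slices in its own half of the mesh, the average distance to those slices shrinks. This sketch assumes the 1 and 1.5 cycles-per-hop costs implied by the figures above, a hypothetical 5 x 6 grid, and a split down the middle into left and right halves (an assumption about how the domains are drawn); it does not model the potentially lower hit rate mentioned above.

```python
from __future__ import annotations

from itertools import product

V_COST, H_COST = 1.0, 1.5  # assumed extra cycles per vertical / horizontal hop
ROWS, COLS = 5, 6          # hypothetical grid, not the real die layout


def hop_cost(a: tuple[int, int], b: tuple[int, int]) -> float:
    """Extra cycles between two mesh stops under the additive per-hop model."""
    return abs(a[0] - b[0]) * V_COST + abs(a[1] - b[1]) * H_COST


def avg_cost(nodes: list[tuple[int, int]]) -> float:
    """Mean extra latency from each node to every other node in `nodes`."""
    pairs = [(a, b) for a in nodes for b in nodes if a != b]
    return sum(hop_cost(a, b) for a, b in pairs) / len(pairs)


grid = list(product(range(ROWS), range(COLS)))
left_domain = [(r, c) for r, c in grid if c < COLS // 2]  # one of two hypothetical SNC halves

if __name__ == "__main__":
    print(f"whole die:      {avg_cost(grid):.2f} cycles")         # 4.67
    print(f"one SNC domain: {avg_cost(left_domain):.2f} cycles")  # 3.14
```

In this toy grid the average drops from about 4.67 to about 3.14 extra cycles; the right half gives the same result by symmetry.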

219 Comments


deltaFx2 - Thursday, July 13, 2017

"Can you mention one innovation from AMD that changed the world?" : None. But the same applies to Intel too, save, I suppose, the founders' (Moore and Noyce) contributions to IC design back when they were at Fairchild/Shockley. That's not Intel's contribution. Computer Architecture/HPC? That's IBM. They invented the field along with others like CDC. Intel is an innovator in process technology, specifically manufacturing. Or used to be... others are catching up. That 3-yr lead that Intel loves to talk about is all but gone. So with that out of the way... AMD's contributions to x86 technology: x86-64, HyperTransport, integrated memory controller, multicore, just to name a few. Intel copied all of them after being absolutely hammered by Opteron. Nehalem system architecture was a copy-paste of Opteron. It is to AMD's discredit that they ceded so much ground on the CPU microarchitecture since then with badly executed Bulldozer, but it was AMD that brought high-performance features to x86 server. Intel would've just loved to keep x86 on client and Itanium on server (remember that innovative atrocity?). Then there's a bunch on the GPU side (which Intel can't get right for love or money), but that came from an acquisition, so I won't count those.

"AMD exists because they are always inferior". Remember K8? It absolutely hammered intel until 2007. Remember Intel's shenanigans bribing the likes of Dell to not carry K8? Getting fined in the EU for antitrust behaviors and settling with AMD in 2010? Not much of a memory card on you, is there?

AMD gaining even 5-10% means two things for Intel: lower margins on all but the top end (Platinum) and a loss in market share. That's plain bad for the stock.

"Intel is a data center giant have head start have the resources...". Yes, they are giants in datacenter compute. 99% market share. Only way to go from there is down. Also, those acquisitions you're talking about? Only Altera applies to the datacenter. Also, remember McAfee for an eye-watering $7.8 bn? How's that working out for them?

Shankar1962 - Wednesday, July 12, 2017

Nvidia, who have been ahead of Intel in AI, should be the more serious threat. How much market share Intel loses depends on how they compete against Nvidia.

AMD will probably gain 5% by selling products at cheap prices.

Intel controls 98/99% share, so it's inevitable that it will lose a few % as more players see the money potential, but unless Intel loses to Nvidia, it is an uphill battle for Qualcomm and ARM.

HanSolo71 - Wednesday, July 12, 2017

Could you guys create a benchmark for Virtual Desktop Solutions? These AMD chips sound awesome for something like my Horizon View environment, where I have hundreds of 2-core, 4GB machines.

Threska - Saturday, July 22, 2017

For VDI, wouldn't either an APU setup or CPU+GPU be better?

msroadkill612 - Wednesday, July 12, 2017

Kudos to the authors. I imagine it's gratifying to have stirred such healthy & voluminous debate :)

milkod2001 - Thursday, July 13, 2017

Are you guys still updating BENCH results? I cannot find the benchmark results for RYZEN CPUs when I want to compare them to others.

Ian Cutress - Friday, July 14, 2017

They've been there since the launch.

AMD (Zen) Ryzen 7 1800X:

http://www.anandtech.com/bench/product/1853

KKolev - Thursday, July 13, 2017

I wonder if AMD's EPYC CPUs can be overclocked. If so, the AMD EPYC 7351P would be very interesting indeed.

uklio - Thursday, July 13, 2017

How could you not do Cinebench results?! We need an answer!

JohanAnandtech - Thursday, July 13, 2017

I only do server benchmarks, Ian does workstation. Ian helped with the introduction, he will later conduct the workstation benchmarks.