Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST- Posted in

- CPUs

- AMD

- Intel

- Xeon

- Enterprise

- Skylake

- Zen

- Naples

- Skylake-SP

- EPYC

Introducing Skylake-SP: The Xeon Scalable Processor Family

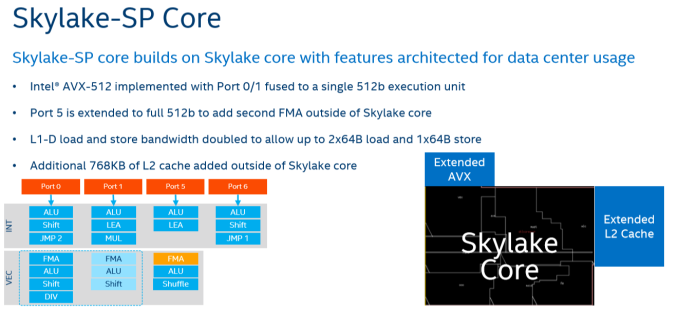

The biggest news hitting the streets today comes from the Intel camp, where the company is launching their Skylake-SP based Xeon Scalable Processor family. As you have read in Ian's Skylake-X review, the new Skylake-SP core has been rather significantly altered and improved compared to it's little brother, the original Skylake-S. Three improvements are the most striking: Intel added 768 KB of per-core L2-cache, changed the way the L3-cache works while significantly shrinking its size, and added a second full-blown 512 bit AVX-512 unit.

On the defensive and not afraid to speak their mind about the competition, Intel likes to emphasize that AMD's Zen core has only two 128-bit FMACs, while Intel's Skylake-SP has two 256-bit FMACs and one 512-bit FMAC. The latter is only useable with AVX-512. On paper at least, it would look like AMD is at a massive disadvantage, as each 256-bit AVX 2.0 instruction can process twice as much data compared to AMD's 128-bit units. Once you use AVX-512 bit, Intel can potentially offer 32 Double Precision floating operations, or 4 times AMD's peak.

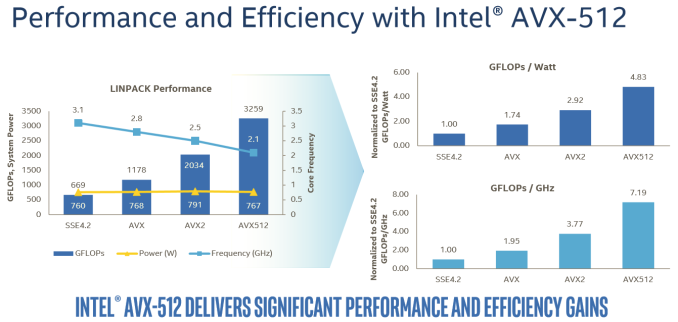

The reality, on the other hand, is that the complexity and novelty of the new AVX-512 ISA means that it will take a long time before most software will adopt it. The best results will be achieved on expensive HPC software. In that case, the vendor (like Ansys) will ask Intel engineers to do the heavy lifting: the software will get good AVX-512 support by the expensive process of manual optimization. Meanwhile, any software that heavily relies on Intel's well-optimized math kernel libraries should also see significant gains, as can be seen in the Linpack benchmark.

In this case, Intel is reporting 60% better performance with AVX-512 versus 256-bit AVX2.

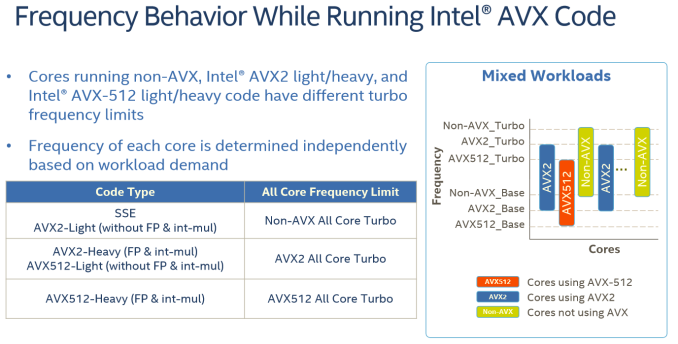

For the rest of us mere mortals, it will take a while before compilers will be capable of producing AVX-512 code that is actually faster than the current AVX binaries. And when they do, the result will be probably be limited, as compilers still have trouble vectorizing code from scratch. Meanwhile it is important to note that even in the best-case scenario, some of the performance advantage will be negated by the significantly lower clock speeds (base and turbo) that Intel's AVX-512 units run at due to the sheer power demands of pushing so many FLOPS.

For example, the Xeon 8176 in this test can boost to 2.8 GHz when all cores are active. With AVX 2.0 this is reduced to 2.4 GHz (-14%), with AVX-512, the clock tumbles down to 1.9 GHz (another 20% lower). Assuming you can fill the full width of the AVX unit, each step still sees a significant performance improvement, but AVX2 to AVX-512 won't offer a full 2x performance improvement even with ideal code.

Lastly, about half of the major floating point intensive applications can be accelerated by GPUs. And many FP applications are (somewhat) limited by memory bandwidth. While those will still benefit from better AVX code, they will show diminishing returns as you move from 256-bit AVX to 512-bit AVX. So most FP applications will not achieve the kinds of gains we saw in the well-optimized Linpack binaries.

219 Comments

View All Comments

TheOriginalTyan - Tuesday, July 11, 2017 - link

Another nicely written article. This is going to be a very interesting next couple of months.coder543 - Tuesday, July 11, 2017 - link

I'm curious about the database benchmarks. It sounds like the database is tiny enough to fit into L3? That seems like a... poor benchmark. Real world databases are gigabytes _at best_, and AMD's higher DRAM bandwidth would likely play to their favor in that scenario. It would be interesting to see different sizes of transactional databases tested, as well as some NoSQL databases.psychobriggsy - Tuesday, July 11, 2017 - link

I wrote stuff about the active part of a larger database, but someone's put a terrible spam blocker on the comments system.Regardless, if you're buying 64C systems to run a DB on, you likely will have a dataset larger than L3, likely using a lot of the actual RAM in the system.

roybotnik - Wednesday, July 12, 2017 - link

Yea... we use about 120GB of RAM on the production DB that runs our primary user-facing app. The benchmark here is useless.haplo602 - Thursday, July 13, 2017 - link

I do hope they elaborate on the DB benchmarks a bit more or do a separate article on it. Since this is a CPU article, I can see the point of using a small DB to fit into the cache, however that is useless as an actual DB test. It's more an int/IO test.I'd love to see a larger DB tested that can fit into the DRAM but is larger than available caches (32GB maybe ?).

ddriver - Tuesday, July 11, 2017 - link

We don't care about real world workloads here. We care about making intel look good. Well... at this point it is pretty much damage control. So let's lie to people that intel is at least better in one thing.Let me guess, the databse size was carefully chosen to NOT fit in a ryzen module's cache, but small enough to fit in intel's monolithic die cache?

Brought to you by the self proclaimed "Most Trusted in Tech Since 1997" LOL

Ian Cutress - Tuesday, July 11, 2017 - link

I'm getting tweets saying this is a severely pro AMD piece. You are saying it's anti-AMD. ¯\_(ツ)_/¯ddriver - Tuesday, July 11, 2017 - link

Well, it is hard to please intel fanboys regardless of how much bias you give intel, considering the numbers.I did not see you deny my guess on the database size, so presumably it is correct then?

ddriver - Tuesday, July 11, 2017 - link

In the multicore 464.h264ref test we have 2670 vs 2680 for the xeon and epyc respectively. Considering that the epyc score is mathematically higher, howdoes it yield a negative zero?Granted, the difference is a mere 0.3% advantage for epyc, but it is still a positive number.

Headley - Friday, July 14, 2017 - link

I thought the exact same thing