Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

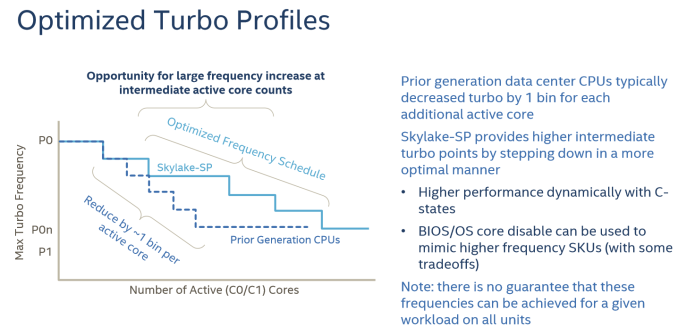

Intel's Optimized Turbo Profiles

Also new to Skylake-SP, Intel has further enhanced turbo boosting.

There are also some security and virtualization enhancements (MBE, PPK, MPX), but these are beyond the scope of this article as we don't test them.

Summing It All Up: How Skylake-SP and Zen Compare

The table below shows you the differences in a nutshell.

| Feature | AMD EPYC 7000 | Intel Skylake-SP | Intel Broadwell-EP |
|---|---|---|---|
| Package & Dies | Four dies in one MCM | Monolithic | Monolithic |
| Die size | 4x 195 mm² | 677 mm² | 456 mm² |
| On-Chip Topology | Infinity Fabric (1-Hop Max) | Mesh | Dual Ring |
| Socket configuration | 1-2S | 1-8S ("Platinum") | 1-2S |
| Interconnect (max.); Bandwidth (*) (max.) | 4x16 (64) PCIe lanes; 4x 37.9 GB/s | 3x UPI 20 lanes; 3x 41.6 GB/s | 2x QPI 20 lanes; 2x 38.4 GB/s |
| TDP | 120-180W | 70-205W | 55-145W |
| Cores | 8-32 | 4-28 | 4-22 |
| LLC (max.) | 64 MB (8x 8 MB) | 38.5 MB | 55 MB |
| Max. Memory | 2 TB | 1.5 TB | 1.5 TB |
| Memory subsystem; fastest supported DRAM | 8 channels DDR4-2666 | 6 channels DDR4-2666 | 4 channels DDR4-2400 |
| PCIe per CPU in a 2P | 64 PCIe (available) | 48 PCIe 3.0 | 40 PCIe 3.0 |

(*) total bandwidth (bidirectional)

At a high level, I would argue that Intel has the most advanced multi-core topology, as they're capable of integrating up to 28 cores in a mesh. The mesh topology will allow Intel to add more cores in future generations while scaling consistently in most applications. The last level cache has a decent latency and can accommodate applications with a massive memory footprint. The latency difference between accessing a local L3-cache chunk and one further away is negligible on average, allowing the L3-cache to be a central storage for fast data synchronization between the L2-caches. However, the highest performing Xeons are huge, and thus expensive to manufacture.
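To illustrate why a very large monolithic die tends to be costly, here is a minimal sketch using the textbook Poisson yield model, Y = exp(-D × A). The die areas come from the table above. The defect density is a purely hypothetical placeholder, not a figure from Intel, AMD, or their foundries, and the model ignores packaging cost and the salvaging of partially defective dies.

```python
import math

# ASSUMPTION: hypothetical defect density, chosen only for illustration.
ASSUMED_DEFECTS_PER_CM2 = 0.1


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies expected to be defect-free under the Poisson model."""
    area_cm2 = die_area_mm2 / 100.0  # 1 cm^2 = 100 mm^2
    return math.exp(-defects_per_cm2 * area_cm2)


def silicon_per_good_product(die_area_mm2: float, dies_per_package: int,
                             defects_per_cm2: float) -> float:
    """Average wafer area (mm^2) consumed per working package."""
    y = poisson_yield(die_area_mm2, defects_per_cm2)
    return dies_per_package * die_area_mm2 / y


if __name__ == "__main__":
    # Die sizes taken from the comparison table.
    for name, area, count in [
        ("Skylake-SP (monolithic, 677 mm^2)", 677.0, 1),
        ("EPYC 7000 (4x 195 mm^2 MCM)", 195.0, 4),
    ]:
        y = poisson_yield(area, ASSUMED_DEFECTS_PER_CM2)
        used = silicon_per_good_product(area, count, ASSUMED_DEFECTS_PER_CM2)
        print(f"{name}: per-die yield {y:.1%}, ~{used:.0f} mm^2 of silicon per good package")
```

Under any positive defect density the smaller die yields better, so the four-die package can consume less wafer area per working product even though its total die area (780 mm²) is larger.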

AMD's MCM approach is much cheaper to manufacture. Peak memory bandwidth and capacity are quite a bit higher with 4 dies and 2 memory channels per die. However, there is no central last level cache that can perform low latency data coordination between the L2-caches of the different cores (except inside one CCX). The eight 8 MB L3-caches act as relatively low latency spill-over caches for the 32 L2-caches on one chip.
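The memory bandwidth gap can be checked with back-of-the-envelope arithmetic. A DDR4 channel is 64 bits (8 bytes) wide, so theoretical peak bandwidth per socket is channels × transfer rate × 8 bytes. The sketch below uses the channel counts and DRAM speeds from the table. The function name is illustrative, and the results are theoretical peaks, not measured throughput.

```python
BYTES_PER_TRANSFER = 8  # one DDR4 channel moves 64 bits per transfer


def peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int) -> float:
    """Theoretical peak DRAM bandwidth per socket in GB/s (10^9 bytes/s)."""
    return channels * mega_transfers_per_s * BYTES_PER_TRANSFER / 1000.0


if __name__ == "__main__":
    # (channels, nominal MT/s) per socket, as listed in the comparison table.
    platforms = {
        "AMD EPYC 7000": (8, 2666),
        "Intel Skylake-SP": (6, 2666),
        "Intel Broadwell-EP": (4, 2400),
    }
    for name, (channels, rate) in platforms.items():
        print(f"{name}: {peak_bandwidth_gbs(channels, rate):.1f} GB/s peak")
```

With the same DDR4-2666 speed, eight channels give EPYC one third more theoretical bandwidth per socket than Skylake-SP's six.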

219 Comments


TheOriginalTyan - Tuesday, July 11, 2017 - link

Another nicely written article. This is going to be a very interesting next couple of months.

coder543 - Tuesday, July 11, 2017 - link

I'm curious about the database benchmarks. It sounds like the database is tiny enough to fit into L3? That seems like a... poor benchmark. Real world databases are gigabytes _at best_, and AMD's higher DRAM bandwidth would likely play to their favor in that scenario. It would be interesting to see different sizes of transactional databases tested, as well as some NoSQL databases.

psychobriggsy - Tuesday, July 11, 2017 - link

I wrote stuff about the active part of a larger database, but someone's put a terrible spam blocker on the comments system.

Regardless, if you're buying 64C systems to run a DB on, you likely will have a dataset larger than L3, likely using a lot of the actual RAM in the system.

roybotnik - Wednesday, July 12, 2017 - link

Yea... we use about 120GB of RAM on the production DB that runs our primary user-facing app. The benchmark here is useless.

haplo602 - Thursday, July 13, 2017 - link

I do hope they elaborate on the DB benchmarks a bit more or do a separate article on it. Since this is a CPU article, I can see the point of using a small DB to fit into the cache, however that is useless as an actual DB test. It's more an int/IO test.

I'd love to see a larger DB tested that can fit into the DRAM but is larger than available caches (32GB maybe?).

ddriver - Tuesday, July 11, 2017 - link

We don't care about real world workloads here. We care about making intel look good. Well... at this point it is pretty much damage control. So let's lie to people that intel is at least better in one thing.

Let me guess, the database size was carefully chosen to NOT fit in a ryzen module's cache, but small enough to fit in intel's monolithic die cache?

Brought to you by the self proclaimed "Most Trusted in Tech Since 1997" LOL

Ian Cutress - Tuesday, July 11, 2017 - link

I'm getting tweets saying this is a severely pro AMD piece. You are saying it's anti-AMD. ¯\_(ツ)_/¯

ddriver - Tuesday, July 11, 2017 - link

Well, it is hard to please intel fanboys regardless of how much bias you give intel, considering the numbers.

I did not see you deny my guess on the database size, so presumably it is correct then?

ddriver - Tuesday, July 11, 2017 - link

In the multicore 464.h264ref test we have 2670 vs 2680 for the xeon and epyc respectively. Considering that the epyc score is mathematically higher, how does it yield a negative zero?

Granted, the difference is a mere 0.3% advantage for epyc, but it is still a positive number.

Headley - Friday, July 14, 2017 - link

I thought the exact same thing