The Ampere Altra Review: 2x 80 Cores Arm Server Performance Monster

by Andrei Frumusanu on December 18, 2020 6:00 AM EST - Posted in: Servers, Neoverse N1, Ampere, Altra

Test Bed and Setup - Compiler Options

For the rest of our performance testing, we’re disclosing the details of the various test setups:

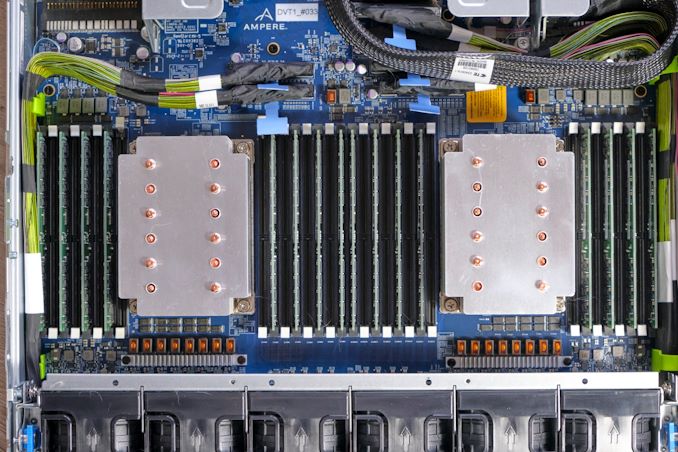

Ampere "Mount Jade" - Dual Altra Q80-33

Obviously, for the Ampere Altra system we’re using the provided Mount Jade server as configured by Ampere.

The system features 2 Altra Q80-33 processors within the Mount Jade DVT motherboard from Ampere.

In terms of memory, we’re using the bundled 16 DIMMs of 32GB of Samsung DDR4-3200 for a total of 512GB, 256GB per socket.

| Component | Details |
| --- | --- |
| CPU | 2x Ampere Altra Q80-33 (3.3 GHz, 80c, 32 MB L3, 250W) |
| RAM | 512 GB (16x32 GB) Samsung DDR4-3200 |
| Internal Disks | Samsung MZ-QLB960NE 960GB, Samsung MZ-1LB960NE 960GB |
| Motherboard | Mount Jade DVT Reference Motherboard |
| PSU | 2000W (94%) |

The system came preinstalled with CentOS 8, and we kept using that OS. Note that the server is Arm SBSA compatible, so you can run any kind of Linux distribution on it.

Ampere makes special note of Oracle’s active support of their variant of Oracle Linux for Altra, which makes sense given that Oracle a few months ago announced adoption of Altra systems for their own cloud-based offerings.

The only other note to make about the system is that the OS is running with 64KB pages rather than the usual 4KB pages. This can be seen either as a testing discrepancy or as an advantage on the part of the Arm system, given that the next page size step for x86 systems is 2MB, which isn’t feasible for general use-case testing and is something deployments would have to explicitly decide to enable.
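To make the page-size difference concrete, here is a minimal Python sketch that reports the page size of the running kernel and counts how many pages are needed to map a given working set at each of the three sizes mentioned above. The 1 GiB working set and the helper name `pages_needed` are illustrative assumptions, not figures or tooling from our testing.

```python
import os


def pages_needed(working_set_bytes: int, page_size_bytes: int) -> int:
    """Number of pages required to map a working set (ceiling division)."""
    return -(-working_set_bytes // page_size_bytes)


def kernel_page_size() -> int:
    """Page size in bytes as reported by the running kernel."""
    return os.sysconf("SC_PAGE_SIZE")


if __name__ == "__main__":
    print(f"kernel page size: {kernel_page_size() // 1024} KB")

    working_set = 1 << 30  # hypothetical 1 GiB working set
    for label, size in (("4KB", 4 << 10), ("64KB", 64 << 10), ("2MB", 2 << 20)):
        print(f"{label:>4} pages: {pages_needed(working_set, size):>7} to map 1 GiB")
```

Fewer pages for the same working set means fewer translations for the TLB to hold, which is why the larger page size can work in the Arm system's favour.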

The system has all relevant security mitigations activated, including SSBS (Speculative Store Bypass Safe) against Spectre variants.

AMD - Dual EPYC 7742

For our AMD system, we unfortunately hit some issues with our Daytona reference server motherboard and moved over to a test-bench setup on a SuperMicro H11DSI0.

We’re also equipping the system with 256GB of 8-channel DDR4-3200 memory per socket, one DIMM per channel, matching the Altra system.

| Component | Details |
| --- | --- |
| CPU | 2x AMD EPYC 7742 (2.25-3.4 GHz, 64c, 256 MB L3, 225W) |
| RAM | 512 GB (16x32 GB) Micron DDR4-3200 |
| Internal Disks | OCZ Vector 512GB |
| Motherboard | SuperMicro H11DSI0 |
| PSU | EVGA 1600 T2 (1600W) |

As an operating system we’re using Ubuntu 20.10 with no further optimisations. In terms of BIOS settings we’re using complete defaults, including retaining the default 225W TDP of the EPYC 7742s, as well as leaving further CPU configurables on auto, except for the NPS settings, where we explicitly state the configuration in the results.

The system has all relevant security mitigations activated against speculative store bypass and Spectre variants.

Intel - Dual Xeon Platinum 8280

For the Intel system we’re also using a test-bench setup, with the same SSD and OS image as the AMD system. We didn’t have enough RAM to run both systems concurrently.

Because the Xeons only have 6-channel memory, their maximum capacity is limited to 384GB of the same Micron memory, running at a default 2933MHz to remain in-spec with the processor’s capabilities.
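The capacity and bandwidth implications of the channel counts can be worked through in a short Python sketch. It assumes one 32GB DIMM per channel, a standard 64-bit (8-byte) DDR4 channel, and eight channels per socket on the Altra; the function names are illustrative, and the bandwidth figures are theoretical peaks, not measured results.

```python
def capacity_gb(sockets: int, channels: int, dimm_gb: int = 32) -> int:
    """Total capacity with one DIMM per channel (assumed population)."""
    return sockets * channels * dimm_gb


def peak_bandwidth_gbs(channels: int, mega_transfers: int, bus_bytes: int = 8) -> float:
    """Theoretical per-socket peak in GB/s: channels x MT/s x bytes per transfer."""
    return channels * mega_transfers * bus_bytes / 1000


# (channels per socket, memory speed in MT/s) for each dual-socket system
systems = {
    "Altra Q80-33": (8, 3200),
    "EPYC 7742": (8, 3200),
    "Xeon Platinum 8280": (6, 2933),
}

if __name__ == "__main__":
    for name, (channels, speed) in systems.items():
        print(
            f"{name}: {capacity_gb(2, channels)} GB total, "
            f"{peak_bandwidth_gbs(channels, speed):.1f} GB/s peak per socket"
        )
```

Under these assumptions the six-channel Xeon configuration comes out at 384GB, consistent with the figure above, while both eight-channel systems reach 512GB.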

| Component | Details |
| --- | --- |
| CPU | 2x Intel Xeon Platinum 8280 (2.7-4.0 GHz, 28c, 38.5MB L3, 205W) |
| RAM | 384 GB (12x32 GB) Micron DDR4-3200 (Running at 2933MHz) |
| Internal Disks | OCZ Vector 512GB |
| Motherboard | ASRock EP2C621D12 WS |
| PSU | EVGA 1600 T2 (1600W) |

The Xeon system was similarly run on BIOS defaults on an ASRock EP2C621D12 WS with the latest firmware available.

The system has all relevant security mitigations activated against the various vulnerabilities.
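For readers who want to check the mitigation state on their own machines, the Linux kernel exposes it through sysfs. The following is a minimal Python sketch, assuming a kernel recent enough to provide `/sys/devices/system/cpu/vulnerabilities`; the helper name `read_mitigations` is our own, and this is not the procedure used to configure the test systems.

```python
from pathlib import Path

# Standard sysfs location on Linux; absent on other OSes and on very old kernels.
VULN_DIR = Path("/sys/devices/system/cpu/vulnerabilities")


def read_mitigations(vuln_dir: Path = VULN_DIR) -> dict:
    """Map each vulnerability name to the kernel's reported status string."""
    if not vuln_dir.is_dir():
        return {}
    status = {}
    for entry in sorted(vuln_dir.iterdir()):
        try:
            status[entry.name] = entry.read_text().strip()
        except OSError:
            # Skip entries that cannot be read instead of failing outright.
            continue
    return status


if __name__ == "__main__":
    for name, state in read_mitigations().items():
        print(f"{name}: {state}")
```

Each entry is a one-line status string, so the output is easy to compare across systems.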

Compiler Setup

For compiled tests, we’re using the release version of GCC 10.2. The toolchain was compiled from scratch on the x86 systems as well as on the Altra system. We’re using shared binaries with the system’s libc libraries.

148 Comments


realbabilu - Friday, December 18, 2020

well it support fortran also using Arm Fortran Compiler, unlike m1.

realbabilu - Friday, December 18, 2020

my bad. Numerical Algorithms Group (Nag) has fortran for m1. lets battle begin X86 vs arm

GruenSein - Friday, December 18, 2020

The userbase for fortran on M1 is probably super small anyway. Although.. I can see the HPC cluster entirely made up of Macbook Airs before my eye. Just like the PS3-cluster the air force used to have ;)

davidorti - Friday, December 18, 2020

Wouldn't it be way cheaper a cluster of minis?

Flunk - Friday, December 18, 2020

No, the hardware would be cheaper but the maintenance would be much more time-intensive. That's why companies that need a lot of processing hardware buy enterprise level hardware. The cost of maintaining the system quickly eclipses the hardware costs. And if you're using a computer to make money, quite often the hardware cost is only a small amount of your costs.

FunBunny2 - Friday, December 18, 2020

I dunno about the "quite often the hardware cost is only a small amount of your costs." part. as modern production methods have been ever more automated, (I'm talkin to you, bitcoin mining), there's almost no other cost. now, some may argue, in the extreme case of mining for instance, that power is the largest component; but isn't that 'hardware' cost? it certainly isn't labor or interest or land or even CxOs' cut. fewer and fewer automation efforts are conducted in assembler or even naked C or java or FORTRAN, but in frameworks, often with bespoke syntax and with headcounts way lower than their native languages. so, yeah, now into the foreseeable future, hardware is the biggest byte.

at_clucks - Friday, December 18, 2020

The point was a cluster of Minis would probably be cheaper than a cluster of Airs because why pay for screen, battery, keyboard and all that.

Spunjji - Monday, December 21, 2020

True, but I did enjoy the holistic response. Just think of the potential: batteries are a built-in UPS, and you don't need to mess about with any sort of KVM arrangement - if a node drops out, you can go right to it and poke it to find out what's up!

ProDigit - Saturday, December 19, 2020

I guess the results showing lower TDP despite 100% load, means that the cores are sometimes idling for a part of their clock frequency. It means the cpu is lacking buffers, and isn't fully optimized.

mode_13h - Sunday, December 20, 2020

Buffers and even cache can't completely avoid memory bottlenecks. Also, you can run a core 100% on code with very little parallelism and not draw much power. Code with lots of ILP and especially vector arithmetic burns a lot more power, which is why AVX2 and especially AVX-512 trigger significant clock-throttling on Intel.