Intel’s Tiger Lake 11th Gen Core i7-1185G7 Review and Deep Dive: Baskin’ for the Exotic

by Dr. Ian Cutress & Andrei Frumusanu on September 17, 2020 9:35 AM EST

Posted in: CPUs, Intel, 10nm, Tiger Lake, Xe-LP, Willow Cove, SuperFin, 11th Gen, i7-1185G7, Tiger King

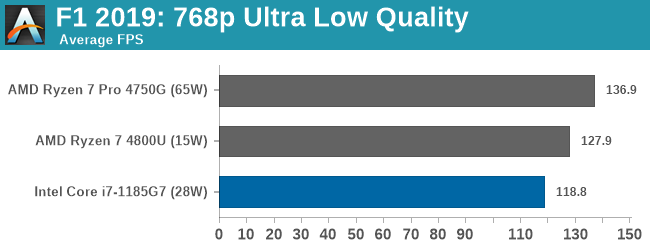

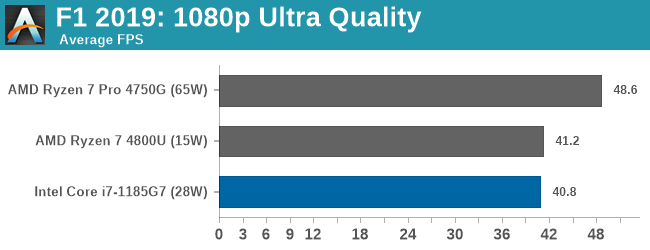

Xe-LP GPU Performance: F1 2019

The F1 racing games from Codemasters have been popular benchmarks in the tech community, mostly for their ease of use and because they seem to take advantage of any area of a machine that might be better than another. The 2019 edition of the game features all 21 circuits on the calendar, and includes a range of retro models and DLC focusing on the careers of Alain Prost and Ayrton Senna. Built on the EGO Engine 3.0, the game has drawn the same criticism as most annual sports games: it does not offer enough season-to-season graphical fidelity updates to make investing in the latest title worth it. However, the 2019 edition revamps the Career mode, with features such as in-season driver swaps coming into the mix. The quality of the graphics this time around is also superb, even at 4K low or 1080p Ultra.

To be honest, F1 benchmarking has been up and down from year to year. Since at least 2014, the benchmark has revolved around a ‘test file’, which allows you to set what track you want, which driver to control, what weather you want, and which cars are in the field. In previous years I’ve always enjoyed putting the benchmark in the wet at Spa-Francorchamps, starting the fastest car at the back with a field of 19 Vitantonio Liuzzis on a 2-lap race and watching sparks fly. In some years, the test file hasn’t worked properly, and the track could not be changed.

For our test, we put Alex Albon in the Red Bull in position #20, for a dry two-lap race around Austin.

In this case, at 1080p Ultra, AMD and Intel (28 W) are matched. Unfortunately, looking through the data, the 15 W test run crashed, and we only noticed after we had returned the system.

253 Comments


tipoo - Thursday, September 17, 2020 - link

“Baskin for the exotic” I see what you did there...

ingwe - Thursday, September 17, 2020 - link

I didn't get it until I read your comment.

Luminar - Thursday, September 17, 2020 - link

RIP AMD

AMDSuperFan - Thursday, September 17, 2020 - link

"Against the x86 competition, Tiger Lake leaves AMD’s Zen2-based Renoir in the dust when it comes to single-threaded performance." - But I am hoping Big Navi can compete well against this Intel chip.

tipoo - Thursday, September 17, 2020 - link

What does Big Navi have to do with a laptop CPU?

AMDSuperFan - Thursday, September 17, 2020 - link

You care about games don't you? This Intel Tiger won't have an answer for Big Navi. We can look forward to that showing who is the boss.

blppt - Thursday, September 17, 2020 - link

Based on preliminary data, they'll both be about 2 years behind Nvidia, what with Big Navi only matching a 2080ti, and not available for another month at the earliest.

hecksagon - Friday, September 18, 2020 - link

Crazy how you can make that prediction, the only preliminary data that is out is a photograph of the card. Are you a wizard?

blppt - Friday, September 18, 2020 - link

Incorrect. https://wccftech.com/amd-radeon-navi-gpu-specs-per...

HarryVoyager - Friday, September 18, 2020 - link

I'm not really seeing where you are getting that from. We know that RDNA2 can hit 2.23 GHz from the PS5 implementation, and we have solid rumors that the top-end one will be an 80 CU chip, rather than a 40 CU chip. That implies on the order of a 230% improvement over the 5700XT, if there are no other performance improvements. That alone puts it in the 30-40% improvement range over the 2080 Ti. Given we've already seen at least a few AMD benchmarks of unidentified cards showing a 30-40% improvement over 2080 Ti performance, that sort of lift does seem likely.

If I had to guess, that RDNA2 that recently showed up with near 2080 Ti performance is probably a 6700 competitor to the 3070, not the top-end card. Those do have to be developed and tested too, after all.