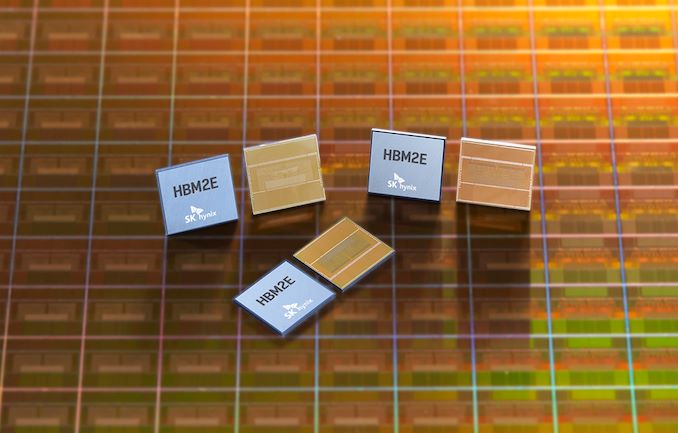

SK Hynix: HBM2E Memory Now in Mass Production

by Ryan Smith on July 2, 2020 10:00 AM EST

Just shy of a year ago, SK Hynix threw their hat into the ring, as it were, by becoming the second company to announce memory based on the HBM2E standard. Now the company has announced that their improved high-speed, high-density memory has gone into mass production, offering transfer rates of up to 3.6 Gbps/pin and capacities of up to 16GB per stack.

As a quick refresher, HBM2E is a small update to the HBM2 standard to improve its performance, serving as a mid-generational kicker of sorts to allow for higher clockspeeds, higher densities (up to 24GB with 12 layers), and the underlying changes that are required to make those happen. Samsung was the first memory vendor to ship HBM2E with their 16GB/stack Flashbolt memory, which runs at up to 3.2 Gbps in-spec (or 4.2 Gbps out-of-spec). This in turn has led to Samsung becoming the principal memory partner for NVIDIA’s recently-launched A100 accelerator, which launched with Samsung’s Flashbolt memory.

Today’s announcement by SK Hynix means that the rest of the HBM2E ecosystem is taking shape, and that chipmakers will soon have access to a second supplier for the speedy memory. As per SK Hynix’s initial announcement last year, their new HBM2E memory comes in 8-Hi, 16GB stacks, which is twice the capacity of their earlier HBM2 memory. Meanwhile, the memory is able to clock at up to 3.6 Gbps/pin, which is actually faster than the “just” 3.2 Gbps/pin that the official HBM2E spec tops out at. So like Samsung’s Flashbolt memory, it would seem that the 3.6 Gbps data rate is essentially an optional out-of-spec mode for chipmakers who have HBM2E memory controllers that can keep up with the memory.

At those top speeds, this gives a single 1024-pin stack a total of 460GB/sec of memory bandwidth, which rivals (or exceeds) most video cards today. And for more advanced devices which employ multiple stacks (e.g. server GPUs), this means a 6-stack configuration could reach as high as 2.76TB/sec of memory bandwidth, a massive amount by any measure.
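As a rough check on those figures, the sketch below assumes peak bandwidth is simply the per-pin data rate multiplied by the 1024-pin interface width, divided by 8 bits per byte, with decimal units (1 TB = 1000 GB). The function name `stack_bandwidth_gb_s` is purely illustrative and does not come from SK Hynix or the HBM2E spec.

```python
def stack_bandwidth_gb_s(pin_rate_gbps: float, bus_width_pins: int = 1024) -> float:
    """Theoretical peak bandwidth of one HBM stack, in GB/s.

    Assumes every pin transfers at the full data rate at the same time,
    so this is an upper bound rather than a measured figure.
    """
    bits_per_byte = 8
    return pin_rate_gbps * bus_width_pins / bits_per_byte


# 3.6 Gbps/pin is SK Hynix's quoted top speed; 3.2 Gbps/pin is the in-spec rate.
per_stack = stack_bandwidth_gb_s(3.6)
in_spec = stack_bandwidth_gb_s(3.2)

# Six stacks, converted from GB/s to TB/s using decimal units.
six_stacks_tb_s = per_stack * 6 / 1000

print(f"{per_stack:.1f} GB/s per stack at 3.6 Gbps/pin")
print(f"{in_spec:.1f} GB/s per stack at 3.2 Gbps/pin")
print(f"{six_stacks_tb_s:.2f} TB/s across six stacks")
```

At 3.6 Gbps/pin this works out to 460.8 GB/s per stack, which the article rounds to 460GB/sec, and roughly 2.76 TB/s for six stacks.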

Finally, for the moment SK Hynix isn’t announcing any customers, but the company expects the new memory to be used on “next-generation AI (Artificial Intelligence) systems including Deep Learning Accelerator and High-Performance Computing.” An eventual second-source for NVIDIA’s A100 would be among the most immediate use cases for the new memory, though NVIDIA is far from the only vendor to use HBM2. If anything, SK Hynix is typically very close to AMD, who is due to launch some new server GPUs over the next year for use in supercomputers and other HPC systems. So one way or another, the era of HBM2E is quickly ramping up, as more and more high-end processors are set to be introduced using the faster memory.

Source: SK Hynix

37 Comments


TheJian - Thursday, July 2, 2020 - link

I really hope this doesn't end up on consumer cards unless it can be produced EASILY (meaning won't be in such a shortage it kills multiple flagships for AMD...LOL), and CHEAPER than GDDR6 (or whatever). Win the price war or feck off UNLESS you can PROVE it makes my games faster at 30fps or better. Meaning, no point in showing me it winning at 32K (yeah that's about how dumb this crap gets) when it's running .1fps doing it. "It's 10 times faster than GDDR75, which only gets .01 fps, so it sucks, get HBMblahblahBS now!".

Wake me when it wins something and does it cheaper, and is EASY to produce. Not sure why AMD ever got on this dumb bandwagon to destroyed flagships (2 so far? 3 soon?). There are very few situations that can exploit this memory, so don't waste your effort designing for it unless you are IN THAT USE CASE.

Let's hope AMD stops making mistakes that kill NET INCOME, and overall raises prices to finally get some REAL NET INCOME. Quit the discounts. Charge like you are winning when you ARE.

brucethemoose - Thursday, July 2, 2020 - link

I didn't know HBM was part of GPU politics...But now that the compute lineup is splitting from the graphics lineup, I suspect you won't have to worry about that.

FreckledTrout - Thursday, July 2, 2020 - link

AMD was looking 5-10 years out :) The entire point of HBM was to stack it on die. The holy grail was for APU's. Lots of marketing discussing this a few years ago. I suspect the end game to kill Nvidia in the dedicated GPU segment is an APU that can have enough CPU for anything the average person might do so say 8-cores, a GPU that can play 1080p flawlessly and is ok at 4K, and has stacked HBM memory all on die. Oh it's coming and once AMD is on TSMC's 3nm in a couple years densities will be such that an 8-16 core chiplet will be tiny so there is plenty of space for a decently high end GPU on die.

Deicidium369 - Sunday, July 5, 2020 - link

Man I grow some amazing cannabis - but whatever you are smoking has mine beat.

AMD will NEVER kill Nvidia. Won't happen. Ever.

dotjaz - Sunday, July 5, 2020 - link

Your weed must be trash. Nvidia's dGPU business is dying, that's a fact, as a matter of fact, the entire dGPU segment is dying.

HBM+iGPU will kill any remaining dGPU on laptops soon enough. That's not up for debate, just a fact.

Desktop PC is dying fast, I mean really fast, it's shrinking every year. PC gaming is shrinking even faster. When AMD command both consoles there's no escaping from the fact that next year, iGPU+HBM can match PS5/XBSX performance in 2 years, rendering dGPU below $400 useless. How long do you think Nvidia can remain profitable without any iGPU or console?

FreckledTrout - Monday, July 6, 2020 - link

Yeah people have blinders on if they don't see what Intel and AMD are doing with APU's.

Tams80 - Tuesday, July 7, 2020 - link

Nvidia, to their credit, are also aware of this, and it isn't as if they aren't diversifying. They are and are doing so very successfully in most cases. Plus, they do still have their ARM developments to fall back on if they have to.

AMD appear to have set themselves up nicely.

As for Intel, well they are a slow, likely quite corrupt, behemoth that are turning in the right direction. And they do have the advantage of having their own fabs (although for the last few years that hasn't been so great, but they are an asset).

FreckledTrout - Tuesday, July 7, 2020 - link

I agree with you Tams80. Nvidia are doing a great job moving into AI and the data center. Just people arguing AMD and Intel won't make such powerful APU's that all but eliminate the very high end GPU's don't see what is going on. We will easily have enough CPU cores and GPU power in a single APU once manufacturing is on TSMC's 3nm or comparable gate all around process. When this happens only x86 APU makers will be relevant which leaves only Intel and AMD standing. That is unless Nvidia does something crazy like buy VIA which is the only other x86 license holder (well Zhaoxin as well but they are under VIA).

RSAUser - Wednesday, July 15, 2020 - link

dGPU is not going to die any time soon, and Nvidia is diversifying because they make a lot of money doing so, while it's a good thing to do in business.

As long as dGPU keeps getting better, Nvidia is going to be fine, because people will move up to higher resolutions, and the industry will take advantage of the fact that GPU are getting stronger, so you'll need a stronger GPU.

FreckledTrout - Monday, July 6, 2020 - link

Intel and AMD will push Nvidia out of the dedicated GPU market in under a decade. At this point it's not if, it's when. Nvidia will move into the data center for AI etc.