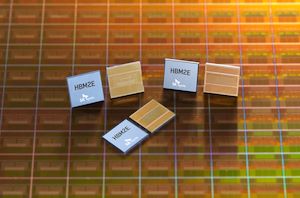

HBM2E

Intel this morning is kicking off the second day of their Vision 2024 conference, the company’s annual closed-door business and customer-focused get-together. While Vision is not typically a hotbed for new silicon announcements from Intel – that’s more of an Innovation thing in the fall – attendees of this year’s show are not coming away empty-handed. With a heavy focus on AI going on across the industry, Intel is using this year’s event to formally introduce the Gaudi 3 accelerator, the next generation of Gaudi high-performance AI accelerators from Intel’s Habana Labs subsidiary. The latest iteration of Gaudi will be launching in the third quarter of 2024, and Intel is already shipping samples to customers now. The hardware itself is something of a mixed bag...

Memory Makers on Track to Double HBM Output in 2023

TrendForce projects a remarkable 105% increase in annual bit shipments of high-bandwidth memory (HBM) this year. This boost comes in response to soaring demands from AI and high-performance computing...

by Anton Shilov on 8/9/2023

As The Demand for HBM Explodes, SK Hynix is Expected to Benefit

The demand for high bandwidth memory is set to explode in the coming quarters and years due to the broader adoption of artificial intelligence in general and generative AI...

by Anton Shilov on 4/18/2023

Intel Showcases Sapphire Rapids Plus HBM Xeon Performance at ISC 2022

Alongside today’s disclosure of the Rialto Bridge accelerator, Intel is also using this week’s ISC event to deliver a brief update on Sapphire Rapids, the company’s next-generation Xeon CPU...

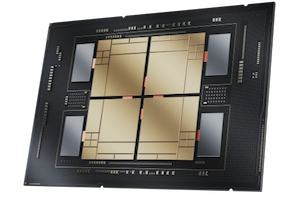

by Ryan Smith on 5/31/2022

Intel: Sapphire Rapids With 64 GB of HBM2e, Ponte Vecchio with 408 MB L2 Cache

This week we have the annual Supercomputing event where all the major High Performance Computing players are putting their cards on the table when it comes to hardware, installations...

by Dr. Ian Cutress on 11/15/2021

NVIDIA Unveils PCIe version of 80GB A100 Accelerator: Pushing PCIe to 300 Watts

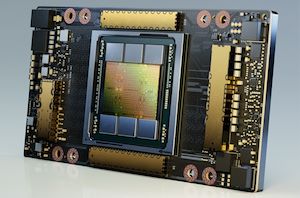

As part of today’s burst of ISC 2021 trade show announcements, NVIDIA this morning is announcing that they’re bringing the 80GB version of their A100 accelerator to the PCIe...

by Ryan Smith on 6/28/2021

NVIDIA Announces A100 80GB: Ampere Gets HBM2E Memory Upgrade

Kicking off a very virtual version of the SC20 supercomputing show, NVIDIA this morning is announcing a new version of their flagship A100 accelerator. Barely launched 6 months ago...

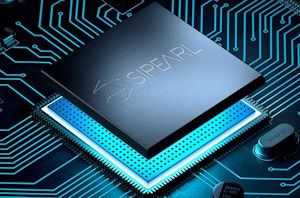

by Ryan Smith on 11/16/2020

SiPearl Lets Rhea Design Leak: 72x Zeus Cores, 4x HBM2E, 4-6 DDR5

In what seems to be a major blunder by the SiPearl PR team, a recent visit by a local French politician resulted in the public Twitter posting in what...

by Andrei Frumusanu on 9/8/2020

SK Hynix: HBM2E Memory Now in Mass Production

Just shy of a year ago, SK Hynix threw their hat into the ring, as it were, by becoming the second company to announce memory based on the HBM2E...

by Ryan Smith on 7/2/2020

Micron to Launch HBM2 DRAM This Year: Finally

Bundled in their latest earnings call, Micron has revealed that later this year the company will finally introduce its first HBM DRAM for bandwidth-hungry applications. The move will enable...

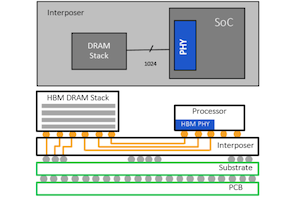

by Anton Shilov on 3/27/2020

Rambus Develops HBM2E Controller & PHY: 3.2 Gbps, 1024-Bit Bus

The latest enhancements to the HBM2 standard will clearly be appreciated by developers of memory bandwidth-hungry ASICs; however, in order to add support for HBM2E to their designs, they...

by Anton Shilov on 3/6/2020

JEDEC Updates HBM2 Memory Standard To 3.2 Gbps; Samsung's Flashbolt Memory Nears Production

After a series of piecemeal announcements from different hardware vendors over the past year, the future of High Bandwidth Memory 2 (HBM2) is finally coming into focus. Continuing the...

by Ryan Smith on 2/3/2020

GlobalFoundries and SiFive to Design HBM2E Implementation on 12LP/12LP+

GlobalFoundries and SiFive announced on Tuesday that they will be co-developing an implementation of HBM2E memory for GloFo's 12LP and 12LP+ FinFET process technologies. The IP package will enable...

by Anton Shilov on 11/5/2019

Samsung Develops 12-Layer 3D TSV DRAM: Up to 24 GB HBM2

Samsung on Monday said that it had developed the industry’s first 12-layer 3D packaging for DRAM products. The technology uses through silicon vias (TSVs) to create high-capacity HBM memory...

by Anton Shilov on 10/7/2019

SK Hynix Announces 3.6 Gbps HBM2E Memory For 2020: 1.8 TB/sec For Next-Gen Accelerators

SK Hynix this morning has thrown their hat into the ring as the second company to announce memory based on the HBM2E standard. While the company isn’t using any...

by Ryan Smith on 8/12/2019

Samsung HBM2E ‘Flashbolt’ Memory for GPUs: 16 GB Per Stack, 3.2 Gbps

Samsung has introduced the industry’s first memory that corresponds to the HBM2E specification. The company’s new Flashbolt memory stacks increase performance by 33% and offer double per-die as well...

by Anton Shilov on 3/20/2019
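Several of the headlines on this page quote HBM2E per-pin data rates, stack capacities, and aggregate bandwidth. The relationships between those numbers are plain arithmetic. Peak bandwidth per stack is the per-pin rate times the interface width, divided by 8 bits per byte. Stack capacity is the number of DRAM dies times the density of each die. The Python sketch below works through these calculations. It relies on a few illustrative assumptions that do not come from the articles themselves:

- a 1024-bit interface per stack, the bus width in the Rambus headline;
- a four-stack accelerator for SK Hynix's 1.8 TB/sec figure;
- 16 Gb DRAM dies for the capacity examples;
- a 2.4 Gbps HBM2 baseline for Samsung's 33% claim.

```python
"""Back-of-the-envelope HBM arithmetic (illustrative sketch, not vendor data)."""

# Assumption: each HBM2/HBM2E stack exposes a 1024-bit interface.
HBM_BUS_WIDTH_BITS = 1024


def stack_bandwidth_gbs(gbps_per_pin: float, bus_width_bits: int = HBM_BUS_WIDTH_BITS) -> float:
    """Peak bandwidth of one stack in GB/s: pins x Gbit/s per pin / 8 bits per byte."""
    return gbps_per_pin * bus_width_bits / 8


def total_bandwidth_gbs(gbps_per_pin: float, stacks: int) -> float:
    """Aggregate peak bandwidth of several identical stacks in GB/s."""
    return stack_bandwidth_gbs(gbps_per_pin) * stacks


def stack_capacity_gb(dies: int, gbit_per_die: int = 16) -> float:
    """Stack capacity in GB; the default 16 Gb die density is an assumption."""
    return dies * gbit_per_die / 8


if __name__ == "__main__":
    # 3.2 Gbps per pin, as in the JEDEC update and the Rambus PHY headline.
    print(f"One 3.2 Gbps stack: {stack_bandwidth_gbs(3.2):.1f} GB/s")  # 409.6 GB/s
    # SK Hynix's 3.6 Gbps parts; the four-stack configuration is assumed.
    print(f"Four 3.6 Gbps stacks: {total_bandwidth_gbs(3.6, 4):.1f} GB/s")  # 1843.2 GB/s
    # Flashbolt's 16 GB per stack (assumed 8 dies) and Samsung's 12-layer stack.
    print(f"8-high stack: {stack_capacity_gb(8):.0f} GB")  # 16 GB
    print(f"12-high stack: {stack_capacity_gb(12):.0f} GB")  # 24 GB
    # Per-pin speed-up of 3.2 Gbps over the assumed 2.4 Gbps HBM2 baseline.
    print(f"3.2 vs 2.4 Gbps: {3.2 / 2.4 - 1:.0%} faster")  # 33% faster
```

Under these assumptions, four stacks at 3.6 Gbps come to roughly 1.84 TB/s, which is consistent with the 1.8 TB/sec headline. A rate of 3.2 Gbps is one third faster than 2.4 Gbps, which would account for the 33% figure if 2.4 Gbps was the baseline Samsung used.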