Rebranded Ethernet Technology Consortium Unveils 800 Gigabit Ethernet

by Gavin Bonshor on April 9, 2020 11:00 AM EST- Posted in

- Networking

- Cisco

- Ethernet

- 800 GbE

- 400 GbE

- 800GBase-T

With an increasing demand for networking speed and throughput performance within the datacenter and high performance computing clusters, the newly rebranded Ethernet Technology Consortium has announced a new 800 Gigabit Ethernet technology. Based upon many of the existing technologies that power contemporary 400 Gigabit Ethernet, the 800GBASE-R standard is looking to double performance once again, to feed ever-hungrier datacenters.

The recently-finalized standard comes from the Ethernet Technology Consortium, the non-IEEE, tech industry-backed consortium formerly known as the 25 Gigabit Ethernet Consortium. The group was originally created to develop 25, 50, and 100 Gigabit Ethernet technology, and while IEEE Ethernet standards have since surpassed what the consortium achieved, the consortium has stayed together to push even faster networking speeds, changing its name to keep up with the times. Some of the biggest contributors and supporters of the ETC include Broadcom, Cisco, Google, and Microsoft, with more than 40 companies listed as integrators of its work.

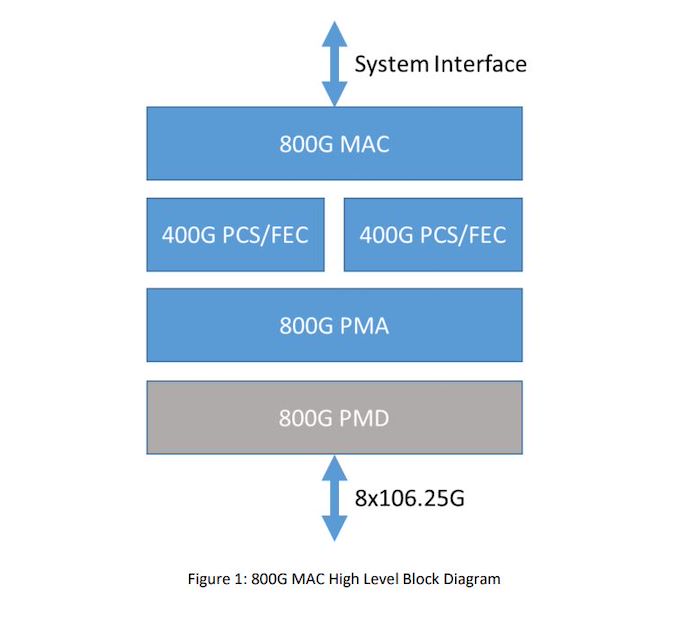

800 Gigabit Ethernet Block Diagram

As for their new 800 Gigabit Ethernet standard, at a high level 800GbE can be thought of as essentially a wider version of 400GbE. The standard is primarily based around using existing 106.25G lanes, which were pioneered for 400GbE, but doubling the number of total lanes from 4 to 8. And while this is a conceptually simple change, there is a significant amount of work involved in bonding together additional lanes in this fashion, which is what the new 800GbE standard has to sort out.

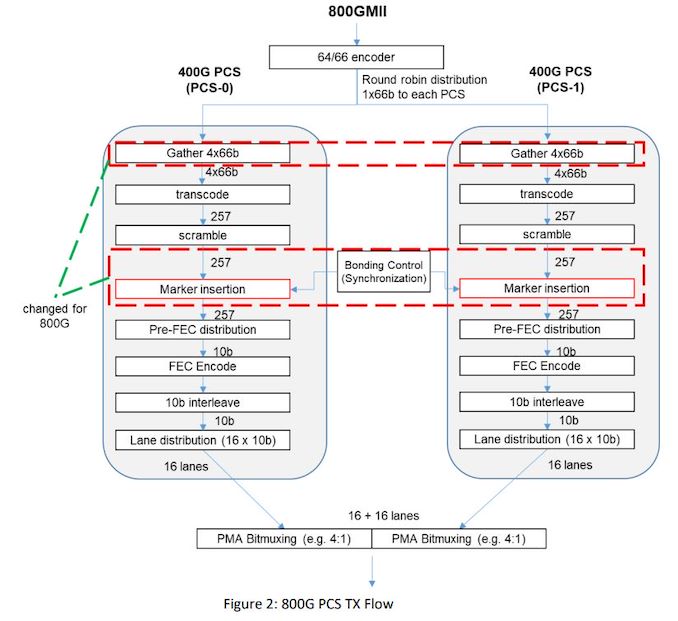

Diving in, the new 800GBASE-R specification defines a new Media Access Control (MAC) and a Physical Coding Sublayer (PCS), which in turn is built on top of two 400 GbE 2xClause PCS's to create a single MAC which operates at a combined 800 Gb/s. Each 400 GbE PCS uses 4 x 106.25 Gb/s lanes, so pairing two of them brings the total to the eight lanes used by the new 800 GbE standard. And while the focus is on 106.25G lanes, it's not a hard requirement; the ETC states that this architecture could also allow for larger groupings of slower lanes, such as 16x53.125G, if manufacturers decided to pursue the matter.
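
To make the lane arithmetic concrete, here is a minimal Python sketch that multiplies out the lane counts and per-lane rates quoted above. The names `LANE_OPTIONS` and `aggregate_rate` are illustrative only and do not come from the ETC specification.

```python
from fractions import Fraction

# Lane configurations quoted in the article: (lane count, per-lane rate in Gb/s).
# Fractions keep the arithmetic exact instead of relying on float rounding.
LANE_OPTIONS = {
    "400GbE, 4 lanes": (4, Fraction("106.25")),
    "800GbE, 8 lanes": (8, Fraction("106.25")),
    "800GbE, 16 slower lanes": (16, Fraction("53.125")),
}


def aggregate_rate(lanes, per_lane_gbps):
    """Raw aggregate signaling rate in Gb/s for a group of identical lanes."""
    return lanes * per_lane_gbps


if __name__ == "__main__":
    for name, (lanes, rate) in LANE_OPTIONS.items():
        total = aggregate_rate(lanes, rate)
        print(f"{name}: {lanes} x {float(rate)} Gb/s = {float(total)} Gb/s raw")
```

Both 800GbE groupings come out to the same raw 850 Gb/s, which is why the slower 16-lane option is interchangeable on paper. The raw figure sits above the nominal 800 Gb/s because part of each lane's signaling rate goes to encoding overhead rather than payload.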

Focusing on the MAC itself, the ETC claims that 800 Gb Ethernet will inherit all of the previous attributes of the 400 GbE standard, with full-duplex support between two terminals and a minimum interpacket gap of 8 bit times. The above diagram depicts each 400 GbE with 16 x 10 b lanes, with each 400 GbE data stream transcoding and scrambling packet data separately, and a bonding control that synchronizes and muxes both PCS's together.

All told, the 800GbE standard is the latest step for an industry as a whole that is moving to Terabit (and beyond) Ethernet. And while those future standards will ultimately require faster SerDes to drive the required individual lane speeds, for now 800GBASE-R can deliver 800GbE on current generation hardware. All of which should be a boon for the standard's intended hyperscaler and HPC operator customers, who are eager to get more bandwidth between systems.

The Ethernet Technology Consortium outlines the full specifications of 800 GbE on its website in a PDF. There's no information on when we might see 800GbE in products, but as it's largely based on existing technology, it should be a relatively short wait by datacenter networking standards. Datacenter operators will probably have to pay for the luxury, though; with even a Cisco Nexus 400 GbE 16-port switch costing upwards of $11,000, we don't expect 800GbE to come cheap.
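
For a rough sense of scale, the quoted switch price can be broken down per port and per unit of bandwidth. This is a back-of-the-envelope sketch using only the figures above; the $11,000 price is a lower bound ("upwards of"), and the function names are hypothetical.

```python
# Figures quoted in the article for a Cisco Nexus 400 GbE switch.
SWITCH_PRICE_USD = 11_000   # "upwards of", so treat as a lower bound
PORTS = 16
PORT_SPEED_GBPS = 400


def cost_per_port(price_usd, ports):
    """Switch price divided evenly across its ports."""
    return price_usd / ports


def cost_per_gbps(price_usd, ports, port_speed_gbps):
    """Switch price divided by total nominal port bandwidth."""
    return price_usd / (ports * port_speed_gbps)


if __name__ == "__main__":
    print(f"Per port:  ${cost_per_port(SWITCH_PRICE_USD, PORTS):,.2f}")
    print(f"Per Gb/s:  ${cost_per_gbps(SWITCH_PRICE_USD, PORTS, PORT_SPEED_GBPS):.2f}")
```

This counts only the switch itself; transceivers, cabling, and the devices at either end of each link are not included.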

Related Reading

- Sonnet Unveils Solo5G: A USB-C to 5 GbE Network Adapter

- Intel Launches Atom P5900: A 10nm Atom for Radio Access Networks

- D-Link Announces Nuclias Remote Management Solutions for SMB Networks

- TP-Link Updates Deco Mesh Networking Family with Wi-Fi 6

Source: Ethernet Technology Consortium

QSFP-DD Image Courtesy Optomind

75 Comments

imaheadcase - Thursday, April 9, 2020

"Though datacenter operators will probably have to pay for the luxury; with even a Cisco Nexus 400 GbE 16-port switch costing upwards of $11,000, we don't expect 800GbE to come cheap."

Good thing you posted the press release here then..

p1esk - Thursday, April 9, 2020

$11k for 16 port 400GbE switch actually sounds pretty cheap. Imagine it connects a 16 server rack, where each server has 8 V100 cards. That's about $100k per server.

Samus - Friday, April 10, 2020

I was thinking the same thing. Structurally this is probably the same investment cost as current 400Gbps deployment. The only difference is component costs.

mrvco - Friday, April 10, 2020

At $11k the cost per port may appear expensive initially, but considering the capacity it sounds surprisingly reasonable. Of course I expect that the real cost will be in the 16x upstream and downstream devices (and/or 400G transceivers) that can support and fully utilize such a switch.

close - Friday, April 10, 2020

Oh don't worry, plenty of people on AT are itching to tell everyone how much they "need" 1TbE and how the market already begs for it because the current speeds are just not enough for whatever invented case they think everyone needs.

TheinsanegamerN - Friday, April 10, 2020

If everyone thought the way you do we would still be on fast ethernet and 16 bit ISA slots.

close - Saturday, April 11, 2020

Wanting more is one thing. Pretending that everyone "needs" some technology that brings 0 benefit to 99.9% of consumers out there is quite another. I may want a 128K 16000Hz monitor and we may very well have them some day. But expecting we should have them today "in the name of evolution" is about as stupid as your comment.

The evolution from 16bit ISA slots brought tangible benefits for end users, solved actual problems. Don't let that throw a spanner in your reasoning, run to Wikipedia and search for some more stuff that existed before your parents knew their names and you may just find one to make your point... eventually.

Having an SSD 10 times faster than old HDDs makes a difference. Your games, apps, OS load instantly, you can do more stuff at the same time, etc. Faster internet? Hell yeah. Faster home LAN? In a regular home most connections these days are WiFi (phones, tablets, laptops), not even close to Gigabit (real life). Very few people have more than 4 wired connections in the house, those coming from the router (and even those may be something like a Hue bridge). But there's always going to be someone to whine around here (or anywhere) about how 10G should be everywhere because reasons. Completely ignoring that most people do not want wires and none of the things they do daily (and I mean NONE) benefit from that speed. Facebook? Twitter? Youtube? Netflix? Yeah, I'm sure everyone has a home lab with 1000 VMs that somehow have to be migrated every 4s, they transfer PB of data every day and 10G makes a difference, etc.

I on the other hand could actually use this 100TBps

So go peddle your platitudes in reply to someone else's comment, maybe put one more LED on your PC so it runs faster. Maybe you need at least 10000 cores in your PC because you don't want to sound backward or against progress and have to stand behind that comment.

sa666666 - Sunday, April 12, 2020

Wow, triggered much?

mode_13h - Monday, April 13, 2020

Either that or @close is seriously confused.

wolfesteinabhi - Monday, April 13, 2020

these switches/network speeds are primarily targeted towards the datacenter and HPC crowd ... and yes, a lot of them do crave higher and higher bandwidth ... because the multimedia and other such data needs are growing quite exponentially ... and it's hard to serve those to millions of people (or other devices) simultaneously.