AnandTech Year In Review 2019: Flagship Mobile

by Andrei Frumusanu on December 26, 2019 8:00 AM EST

SoC Improvements: Apple vs Qualcomm vs Samsung vs HiSilicon

The system-on-chip is a device’s single most important component. The SoC powers every feature of a phone, determines the performance of the device, and is also the second-biggest factor in a device’s battery life (behind the display). At the high end, the key SoCs this year were HiSilicon’s Kirin 980, Qualcomm’s Snapdragon 855, Samsung LSI’s Exynos 9820 and Apple’s A13.

The Kirin 980 was the first of the bunch to be released, having actually been announced and used in devices late in 2018. The chip was a big step up in execution from HiSilicon, and was an excellent product that powered most of Huawei and Honor’s devices for 2019. Whilst it still lagged a bit behind in GPU performance, the chip was a well-rounded product that offered top-of-the-line CPU performance for the Android devices it powered.

HiSilicon’s follow-up, the Kirin 990, released with the Mate 30 just a few months ago, unfortunately didn’t bring improvements as large as its predecessor’s. Whilst it’s still a good, well-rounded SoC, this time around the company didn’t manage to integrate Arm’s newest IP, as its design and product cycle didn’t line up well enough with the releases of the new Cortex-A77 CPU and Mali-G77 GPU. We’ll have to see how things pan out in 2020, but I don’t expect Huawei devices to be as competitive in this regard as they were in 2019.

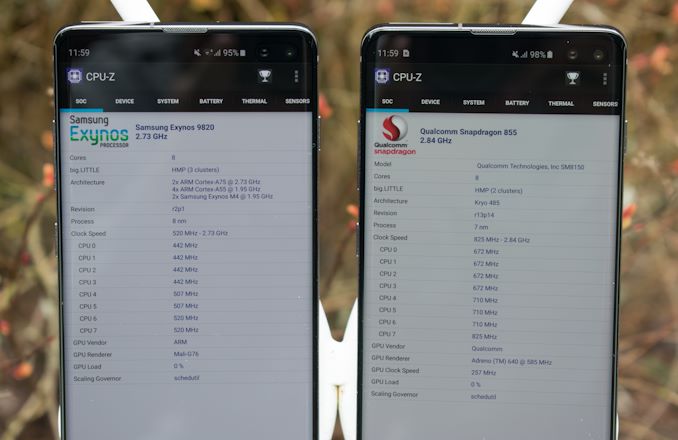

The Galaxy S10 in March also introduced two new-generation SoCs: the Snapdragon 855 and the Exynos 9820. The Snapdragon 855 was amongst Qualcomm’s best-executed designs, and went on to power essentially every other flagship device in 2019.

Samsung’s Exynos 9820, whilst not quite as performant or as efficient as the Snapdragon 855, was a big improvement over the disappointing Exynos 9810 of 2018, and served as a solid alternative in the Galaxy S10. S.LSI released a 7nm shrink of the Exynos 9820, the Exynos 9825, in the Galaxy Note10 series: functionally it’s the same chip as its predecessor, offering the same performance with only minor differences in efficiency, while its Qualcomm counterpart remained the same Snapdragon 855. Unfortunately, it’s simply no better than the Qualcomm alternative, and thus S.LSI has never managed to get a design win from a vendor besides Samsung’s own mobile division.

Apple’s A13 this year brought big improvements in performance, although they aren’t exactly visible at first glance in benchmarks. On the CPU side, the new Lightning cores were relatively straightforward performance upgrades, but they came at a cost in power consumption, which keeps creeping up with every new A-series SoC. Power efficiency nevertheless remains excellent, and I think that’s mostly due to the new Thunder efficiency cores, which saw a very large microarchitectural upgrade in performance and efficiency. Apple’s yearly upgrades to its efficiency cores put the aging Cortex-A55s to shame in terms of performance and power, and the Android SoC vendors are in dire need of an IP upgrade from Arm.

GFXBench Aztec Normal Offscreen Power Efficiency (System Active Power)

| Device (SoC) | Mfc. Process | FPS | Avg. Power (W) | Perf/W Efficiency |
|---|---|---|---|---|
| iPhone 11 Pro (A13) Warm | N7P | 73.27 | 4.07 | 18.00 fps/W |
| iPhone 11 Pro (A13) Cold / Peak | N7P | 91.62 | 6.08 | 15.06 fps/W |
| iPhone XS (A12) Warm | N7 | 55.70 | 3.88 | 14.35 fps/W |
| iPhone XS (A12) Cold / Peak | N7 | 76.00 | 5.59 | 13.59 fps/W |
| QRD865 (Snapdragon 865) | N7P | 53.65 | 4.65 | 11.53 fps/W |
| Mate 30 Pro (Kirin 990 4G) | N7 | 41.68 | 4.01 | 10.39 fps/W |
| Galaxy S10+ (Snapdragon 855) | N7 | 40.63 | 4.14 | 9.81 fps/W |
| Galaxy S10+ (Exynos 9820) | 8LPP | 40.18 | 4.62 | 8.69 fps/W |

On the GPU side of things, Apple has also been hitting it out of the park: the last two GPU generations have brought tremendous efficiency upgrades, which also allow for larger performance gains. I really had not expected Apple to make such large strides with the A13’s GPU this year, and the efficiency improvements genuinely surprised me. The gap to Qualcomm’s Adreno architecture is now so big that even the newest Snapdragon 865’s peak performance can’t match Apple’s sustained performance figures. Apple no longer leads just in CPU; it now also leads massively in GPU.
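The Perf/W column is simply average FPS divided by average system active power, i.e. frames rendered per joule. As a minimal sketch (the function and variable names are hypothetical, not part of GFXBench or any AnandTech tooling), the Python below recomputes that column from the FPS and power figures in the table and quantifies the A13 "Warm" versus Snapdragon 865 gap. Printed values may differ from the table by 0.01, since the published column appears to be truncated rather than rounded (or derived from unrounded inputs).

```python
# Figures copied from the GFXBench Aztec Normal Offscreen table:
# (label, average FPS, average system active power in watts).
RESULTS = [
    ("iPhone 11 Pro (A13) Warm", 73.27, 4.07),
    ("iPhone 11 Pro (A13) Cold / Peak", 91.62, 6.08),
    ("iPhone XS (A12) Warm", 55.70, 3.88),
    ("iPhone XS (A12) Cold / Peak", 76.00, 5.59),
    ("QRD865 (Snapdragon 865)", 53.65, 4.65),
    ("Mate 30 Pro (Kirin 990 4G)", 41.68, 4.01),
    ("Galaxy S10+ (Snapdragon 855)", 40.63, 4.14),
    ("Galaxy S10+ (Exynos 9820)", 40.18, 4.62),
]


def fps_per_watt(fps: float, watts: float) -> float:
    """Efficiency as FPS / W, which is equivalent to frames per joule."""
    return fps / watts


def efficiency_table(results):
    """Return (label, fps/W) pairs sorted from most to least efficient."""
    rows = [(label, fps_per_watt(fps, watts)) for label, fps, watts in results]
    return sorted(rows, key=lambda row: row[1], reverse=True)


if __name__ == "__main__":
    for label, eff in efficiency_table(RESULTS):
        print(f"{label:34s} {eff:6.2f} fps/W")

    # Compare the A13's throttled ("Warm") result with the Snapdragon 865.
    _, a13_fps, a13_w = RESULTS[0]
    _, sd865_fps, sd865_w = RESULTS[4]
    fps_ratio = a13_fps / sd865_fps
    eff_ratio = fps_per_watt(a13_fps, a13_w) / fps_per_watt(sd865_fps, sd865_w)
    print(f"A13 Warm vs SD865: {fps_ratio:.2f}x FPS, {eff_ratio:.2f}x fps/W")
```

With these figures, the A13 in its "Warm" state delivers roughly 1.37x the frame rate of the Snapdragon 865 reference device at about 1.56x its efficiency.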

For 2020, I’m not really expecting the competitive situation at the high end to change much. The Snapdragon 865 and Exynos 990 will likely be excellent products, but largely won’t be able to catch up to the A13. I think the biggest surprise in 2020 will be MediaTek’s new Dimensity 1000 SoC: MediaTek’s return to the high end comes with what seems to be an unusually well-rounded and solid approach. On paper, the chip should perform better than HiSilicon’s Kirin 990 and be a very viable alternative to the Snapdragon 865, so I’m predicting a lot more MediaTek devices and design wins than we’ve been used to recently.

The OnePlus 7 Pro Brings 90Hz To The Masses, Starts a Trend

The OnePlus 7 Pro was one of the most important phones of 2019 because it was the first device to bring the high-refresh-rate experience to the masses. The phone’s 90Hz display has been a resounding success for OnePlus, to the point that the company said it’s been the key reason consumers chose a OnePlus device this year. The refreshed OnePlus 7T also brought the 90Hz display to the lower-priced flagship variant.

Google’s Pixel 4 series was the first mainstream follow-up from another vendor; however, Google’s implementation seemed largely less well thought-out than OnePlus’, and was handicapped by lacklustre battery life, particularly on the smaller Pixel 4.

Gaming phones such as the ROG Phone II even come with 120Hz displays, and the gigantic batteries that accompany such phones mean there are no drawbacks to the user experience.

I’m expecting high refresh rate displays to be a key feature in 2020 flagships, with the usual big-name vendors also adopting them.
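The cost of a higher refresh rate can be expressed as a frame-time budget: at 60Hz a new frame is due roughly every 16.7 ms, at 90Hz every 11.1 ms and at 120Hz every 8.3 ms, so the SoC may have to render up to 1.5x or 2x as many frames per second. That extra rendering work is one plausible reason high-refresh displays weigh on battery life, though display power itself also matters and the balance varies by device. A minimal Python sketch of the arithmetic; the names are illustrative and it does not model any vendor's actual refresh-rate switching.

```python
# Frame-time budget for common phone refresh rates (illustrative only).
REFRESH_RATES_HZ = (60, 90, 120)


def frame_time_ms(refresh_hz: float) -> float:
    """Interval between consecutive display refreshes, in milliseconds."""
    return 1000.0 / refresh_hz


if __name__ == "__main__":
    for hz in REFRESH_RATES_HZ:
        # To show a new frame on every refresh, rendering has to fit in this window.
        print(f"{hz:3d} Hz -> {frame_time_ms(hz):5.2f} ms per frame, "
              f"{hz / 60:.1f}x the frames of a 60 Hz panel")
```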

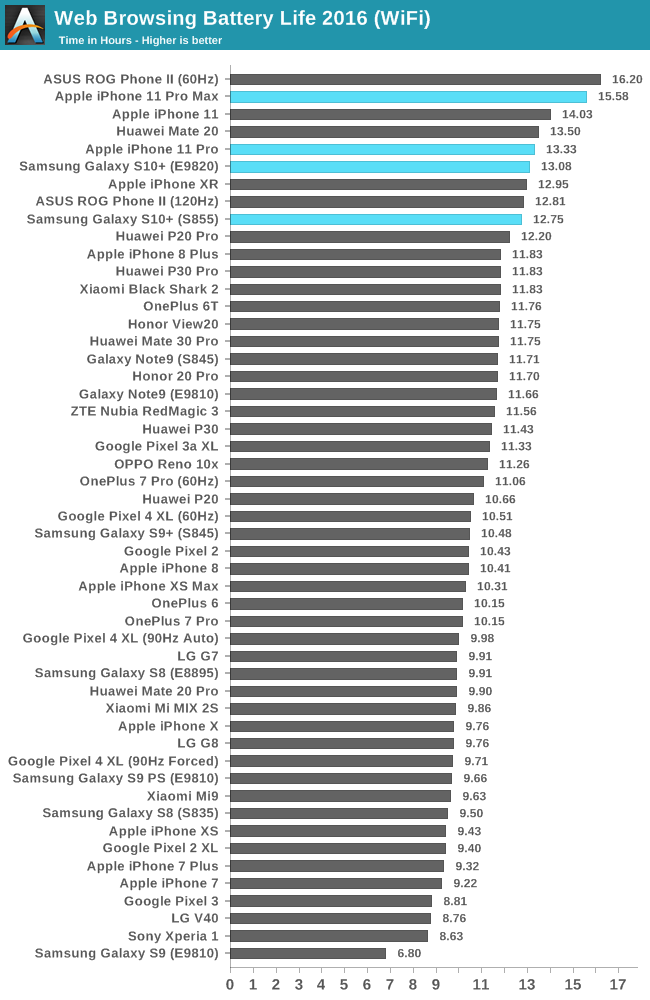

Battery & Display Improvements: Samsung and Apple’s Rise To Longevity

Another key improvement that I will remember from 2019 is the battery life gains made by Samsung and Apple. For several years, the devices with the longest battery life had been Huawei’s larger Mate-series phones, with big battery capacities and efficient LCD displays, or mid-range phones that come with less power-hungry SoCs and displays.

I think the Galaxy S10 series (particularly the S10+) was a game-changer in that it was the first flagship lineup that combined a top-of-the-line display and SoC yet still managed to top the battery charts. Apple’s iPhone 11 Pro series follows the Galaxy S10 and Note10 series in these characteristics: the exemplary battery life of these phones comes from a combination of larger-than-usual battery capacities and the newest OLED display technology. The S10, Note10 and iPhone 11 Pro, along with the OnePlus 7T, are the only devices in 2019 to use the new emitter technology that brings brighter and more efficient displays, and the advantage is very much noticeable in each of these devices’ battery life.

We expect the technology to trickle down to other vendors in 2020, with Samsung and Apple to continue introducing newer and even more efficient generations – a good tie-in with the expected higher refresh-rate displays next year.

Bendy Phones: The Galaxy Fold & Mate X

While certainly not relevant to the average consumer, 2019 will be remembered as the first year that foldable phones became a reality. The Galaxy Fold and the Huawei Mate X are the first serious big-name implementations of the new flexible screen technology. It’s expensive, impractical, gimmicky and has a ton of drawbacks, yet it’s also one of the most exciting things we’ve seen in years as it represents the first step in a new device form-factor category.

The new Motorola Razr seems like a much better thought-out implementation, and it points in what seems to be the most sensible direction for foldable phones. We’ll undoubtedly see a lot more innovation from vendors in 2020 when it comes to flexible-display devices.

5G – A Reality … In Some Countries

There’s no doubt that 5G has been an incessant talking point throughout 2019, and we even saw the first 5G devices reach the market. For anybody who cares about the longevity of their phone, the first-generation 5G phones based on Qualcomm’s X50 modem are largely to be ignored, as the modem does not support future 5G standalone networks. Second-generation devices based on the X55 will certainly be much more future-proof and perform much better.

We’ve seen the first 5G networks go live this year, but the deployment types differ greatly depending on your country and carrier. In the US, Verizon most famously launched first, introducing its “ultra-wideband” service in select city blocks of select cities. This is the mmWave side of 5G, which does bring the much-hyped multi-gigabit speeds. The reality is that such deployments are going to be extremely limited in coverage and usage; it’ll take many years for such networks to become ubiquitous, and even then they’ll be mostly concentrated around high-density locations such as event centres or stadiums (which is essentially what mmWave deployments are really intended for).

The rest of the world (and other US carriers) is focusing on bringing 5G to sub-6GHz spectrum. In a lot of countries, such as South Korea, we’ve already seen very widespread infrastructure deployments, with coverage quickly catching up to 4G LTE. The speed increases here aren’t as substantial as with mmWave, but the improvements are more realistic, consistent and practical, and this is what 5G will be about for the vast majority of users.

2019 will be remembered as the first year of 5G, but that’s about it. I expect 5G deployment to be more of a slow burn over the coming years as network coverage and capacity continue to improve.

54 Comments


Tigran - Thursday, December 26, 2019

Why is sustained performance called "Warm" while peak performance is "Cold"? Isn't it vice versa? And what about the Mate 30 Pro & Galaxy S10+: are those peak performance figures in the table? If yes, can we see the sustained ones too, please?

zanon - Thursday, December 26, 2019

It's describing the thermal condition, because the primary limiter for phone form factors with modern SoCs is passive heat dissipation and thermal throttling. When the phone is cold there is a bit of headroom to burst into until it warms up, and once it's warm, whatever the SoC can run at consistently from there on out is the sustained performance. That's one reason the same SoC can exhibit higher performance in a tablet (or a phone with a different form factor) with more thermal dissipation.

Tigran - Thursday, December 26, 2019

I see, so it's the initial thermal condition, not the final one. Thanks!

Andrei Frumusanu - Thursday, December 26, 2019

The A12 and A13 have absurd peak power consumption of over 6W, far above the ~4.5W peak that Android SoCs generally exhibit, but Apple throttles to the "Warm" state within 2-3 minutes. That still isn't the full sustained performance figure, which is lower still. So I chose to add a second data point for the Apple SoCs, as it gives a better point of comparison with the Android SoCs. Because the Android SoCs don't exhibit that abnormal thermal behaviour at peak, there's no need for a second data point.

Tigran - Thursday, December 26, 2019

I see, thanks. Personally, I would appreciate full sustained performance AND avg. power figures for both Apple's & Android SoCs.

Andrei Frumusanu - Thursday, December 26, 2019

This article isn't the piece to go over that in detail; you can read about it in the full reviews.

https://www.anandtech.com/show/14892/the-apple-iph...

https://www.anandtech.com/show/15207/the-snapdrago...

Tigran - Thursday, December 26, 2019

Yes, I'm aware of those figures, but the table shows the Android SoCs' PEAK performance with avg. power, not full sustained. E.g. I know the Mate 30 Pro's full sustained performance (14 FPS in GFXBench Aztec High), but not the corresponding avg. power.

Alistair - Friday, December 27, 2019

Average isn't a useful figure as it just depends on how long you run the test. If you run the test a long time you get the "warm" figure; if you run it a short time you get the "cold" figure. So those are the two important numbers, not the average.

GC2:CS - Thursday, December 26, 2019

Wait. Single core CPU takes 6W. Neural engine takes like 4. GPU can take up to 6. I thought A10 was power hungry, but how can those things work at such high power levels without blowing the (i)phone up?

What about the A12X ? Is that a 20W part ?

Andrei Frumusanu - Thursday, December 26, 2019

The blocks never all work at peak performance at the same time, so the total never adds up to the theoretical 15-20W.