AMD Joins CXL Consortium: Playing in All The Interconnects

by Anton Shilov on July 19, 2019 5:00 PM EST - Posted in

- Interconnect

- AMD

- CXL

- PCIe 5.0

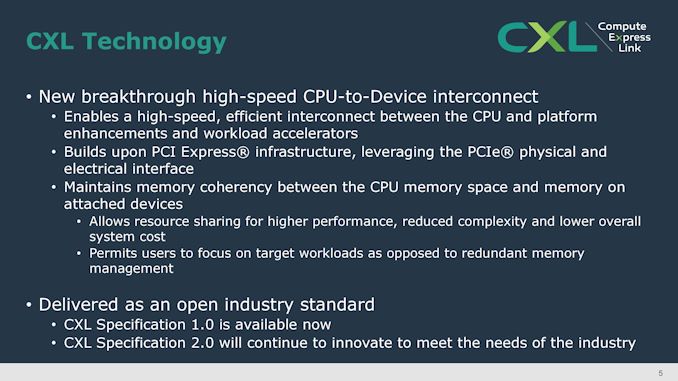

AMD's CTO, Mark Papermaster, has stated in a blog post that AMD has joined the Compute Express Link (CXL) Consortium. The group is led by nine industry giants, including Intel, Alibaba, Google, and Microsoft, but has over 35 members. The CXL 1.0 technology uses the PCIe 5.0 physical infrastructure to enable a coherent, low-latency interconnect protocol that allows CPU and non-CPU resources to be shared efficiently without complex memory management. The announcement indicates that AMD now supports all of the current and upcoming non-proprietary high-speed interconnect protocols, including CCIX, Gen-Z, and OpenCAPI.

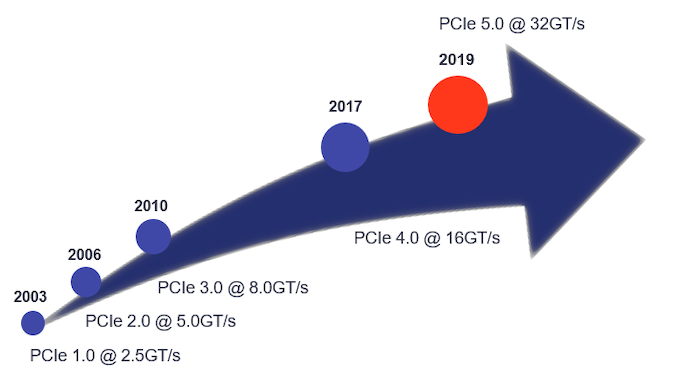

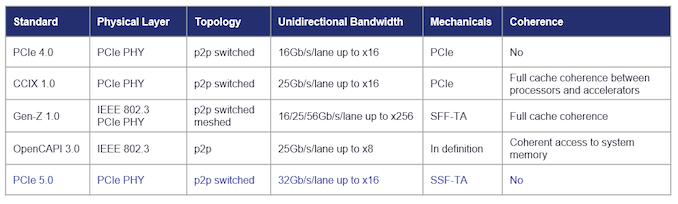

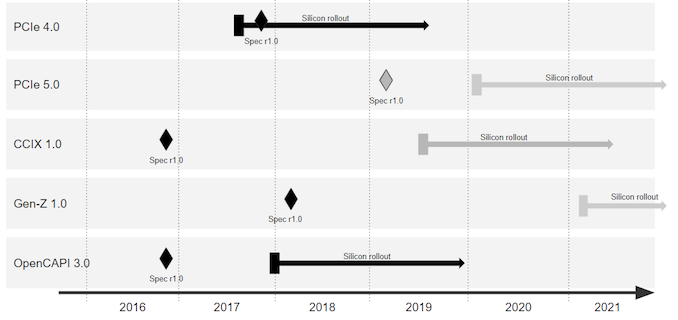

PCIe has enabled a tremendous increase in bandwidth, from 2.5 GT/s per lane in 2003 to 32 GT/s per lane in 2019, and is set to remain a ubiquitous physical interface for upcoming SoCs. Over the past few years it has become clear that efficient coherent interconnects between CPUs and other devices require specific low-latency protocols, so a variety of proprietary and open-standard technologies built upon the PCIe PHY were developed, including CXL, CCIX, Gen-Z, Infinity Fabric, NVLink, CAPI, and others. In 2016, IBM (with a group of supporters) went as far as developing the OpenCAPI interface, which relies on a new physical layer and a new protocol (but this is a completely different story).
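The 2.5 GT/s to 32 GT/s progression is easier to read once line-code overhead is taken into account, since GT/s counts raw transfers rather than payload. The sketch below is a rough calculator that assumes the standard PCIe encodings (8b/10b for 1.x and 2.x, 128b/130b from 3.0 onward) and deliberately ignores packet and flow-control overhead, so its results are payload ceilings rather than measured throughput. The names `PCIE_GENERATIONS` and `lane_bandwidth_gbps` are illustrative only.

```python
from fractions import Fraction

# Raw per-lane signalling rate (GT/s) and line-code efficiency per PCIe generation.
# Assumption: only physical-layer encoding overhead is modelled; TLP/DLLP headers
# and flow-control traffic are ignored.
PCIE_GENERATIONS = {
    1: (2.5, Fraction(8, 10)),     # 8b/10b
    2: (5.0, Fraction(8, 10)),     # 8b/10b
    3: (8.0, Fraction(128, 130)),  # 128b/130b
    4: (16.0, Fraction(128, 130)),
    5: (32.0, Fraction(128, 130)),
}


def lane_bandwidth_gbps(gen: int) -> float:
    """Usable GB/s for one lane in one direction, after encoding overhead."""
    rate_gt_s, efficiency = PCIE_GENERATIONS[gen]
    # Each transfer moves one bit per lane; divide by 8 to convert to bytes.
    return rate_gt_s * float(efficiency) / 8


if __name__ == "__main__":
    for gen in sorted(PCIE_GENERATIONS):
        rate, _ = PCIE_GENERATIONS[gen]
        print(f"PCIe {gen}.0: {rate:>4} GT/s -> {lane_bandwidth_gbps(gen):.3f} GB/s per lane")
```

Going from PCIe 2.0 to 3.0 nearly doubles per-lane throughput even though the signalling rate rises only from 5 to 8 GT/s, because encoding overhead drops from 20% to roughly 1.5%.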

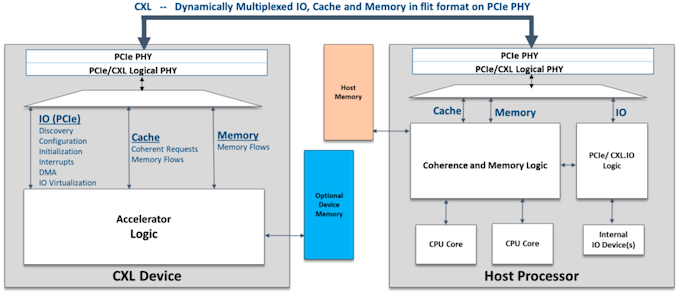

Each of the protocols that rely on PCIe has its own peculiarities and numerous supporters. The CXL 1.0 specification, introduced earlier this year, was primarily designed to enable heterogeneous processing (using accelerators) and memory systems (think memory expansion devices). The low-latency CXL runs on the PCIe 5.0 PHY stack at 32 GT/s and natively supports x16, x8, and x4 link widths. In degraded mode it also supports 16.0 GT/s and 8.0 GT/s data rates, as well as x2 and x1 links. In the case of a PCIe 5.0 x16 slot, CXL 1.0 devices will enjoy 64 GB/s of bandwidth in each direction. It is also noteworthy that CXL 1.0 contains three protocols: the mandatory CXL.io, as well as CXL.cache for cache coherency and CXL.mem for memory coherency, which are needed to effectively manage latencies.
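The 64 GB/s figure follows from 32 GT/s × 16 lanes ÷ 8 bits per byte; subtracting 128b/130b encoding brings it to roughly 63 GB/s. The sketch below enumerates the native and degraded link modes listed above and computes per-direction bandwidth for each. It assumes that any listed rate can pair with any listed width, and it does not model CXL protocol framing; the constant and function names are hypothetical, not taken from the CXL specification.

```python
from fractions import Fraction
from itertools import product

# Link parameters as described for CXL 1.0 running over the PCIe 5.0 PHY.
NATIVE_RATE_GT_S = 32.0
DEGRADED_RATES_GT_S = (16.0, 8.0)
NATIVE_WIDTHS = (16, 8, 4)
DEGRADED_WIDTHS = (2, 1)
ENCODING = Fraction(128, 130)  # 128b/130b line code used at 8 GT/s and above


def link_bandwidth_gbps(rate_gt_s: float, lanes: int, encoded: bool = True) -> float:
    """Per-direction GB/s; encoded=False returns the raw headline figure."""
    raw = rate_gt_s * lanes / 8  # one bit per lane per transfer, 8 bits per byte
    return raw * float(ENCODING) if encoded else raw


def link_modes():
    """Yield (rate, lanes, is_native) for every rate/width combination (assumed valid)."""
    rates = (NATIVE_RATE_GT_S, *DEGRADED_RATES_GT_S)
    widths = NATIVE_WIDTHS + DEGRADED_WIDTHS
    for rate, lanes in product(rates, widths):
        native = rate == NATIVE_RATE_GT_S and lanes in NATIVE_WIDTHS
        yield rate, lanes, native


if __name__ == "__main__":
    print(f"x16 @ 32 GT/s raw: {link_bandwidth_gbps(32.0, 16, encoded=False):.1f} GB/s")
    print(f"x16 @ 32 GT/s after 128b/130b: {link_bandwidth_gbps(32.0, 16):.1f} GB/s")
    for rate, lanes, native in link_modes():
        mode = "native" if native else "degraded"
        print(f"x{lanes:<2} @ {rate:>4} GT/s ({mode}): {link_bandwidth_gbps(rate, lanes):6.2f} GB/s")
```

Real CXL throughput will sit below these ceilings because of protocol framing, which this sketch leaves out on purpose.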

In the coming years, computers in general, and machines used for AI and ML processing in particular, will require a diverse combination of accelerators featuring scalar, vector, matrix, and spatial architectures. For efficient operation, some of these accelerators will need low-latency cache coherency and memory semantics between them and the processors, but since no ubiquitous protocol supports this functionality, there will be a fight between some of the standards that do not complement each other.

The biggest advantage of CXL is not only that it is already supported by over 30 companies, but that its founding members include such heavyweights as Alibaba, DellEMC, Facebook, Google, HPE, Huawei, Intel, and Microsoft. All of these companies build their own hardware architectures, and their support for CXL means that they plan to use the technology. Since AMD clearly does not want to be left behind by the industry, it is natural for the company to join the CXL party.

Since CXL relies on the PCIe 5.0 physical infrastructure, companies can use the same physical interconnects and only need to develop the required transmission logic. At this point AMD is not committing to enabling CXL on future products, but it is throwing its hat into the ring to discuss how the protocol develops, should it appear in a future AMD product.

Related Reading:

- Compute Express Link (CXL): From Nine Members to Thirty Three

- CXL Specification 1.0 Released: New Industry High-Speed Interconnect From Intel

- Gen-Z Interconnect Core Specification 1.0 Published

- Hot Chips: Intel EMIB and 14nm Stratix 10 FPGA Live Blog (8:45am PT, 3:45pm UTC)

Sources: AMD, CXL Consortium, PLDA

43 Comments


extide - Friday, July 19, 2019

Honestly, it's smart and good for everyone if Intel & AMD both support a common coherent protocol. This could be very good.

HStewart - Saturday, July 20, 2019

It's always good when companies work together on common protocols. USB4 is a good example of that. But I hope this stuff can bring some order to this PCIe mess, with AMD doing 4.0 and Intel doing 5.0. The bad thing is that there are going to be products out that don't work with other products. Yes, 4.0 and 5.0 are backwards compatible, but as far as I understand, 4.0 or 5.0 does not run on 3.0.

Korguz - Saturday, July 20, 2019

Sorry HStewart, PCIe 4 and 5 remain backwards compatible with PCIe 3.

HStewart - Sunday, July 21, 2019

So will a PCIe 4 or PCIe 5 card work in a PCIe 3 slot? I know a PCIe 3 card should work in a PCIe 4 or PCIe 5 slot; it would be very stupid if that did not work.

Korguz - Sunday, July 21, 2019

Yep. It would just run at the card's PCIe speed or the slot's, whichever is older. For example, plug a PCIe 5 card into a PCIe 3 slot and the card runs at PCIe 3.

eldakka - Monday, July 22, 2019

A PCIe5 x16 card will work in a PCIe1 x1 slot, just at PCIe1 x1 speeds; if it doesn't, then either the PCIe5 card or the PCIe1 slot is not to specification. It may not be useful to run a PCIe5 x16 card in a PCIe1 x1 slot, but it should work.

A PCIe1 x1 card will work in a PCIe5 x16 slot.

One of the features of PCIe is that devices perform an auto-negotiation with the slot they are put into to determine what specifications they support, and the devices (the slot and the card) will operate at the lowest common denominator of capabilities.

What it comes down to is that you are more likely to have issues with the appropriate drivers for the platform you are running on than with PCIe slot incompatibility. For example, drivers written for an O/S running on a PCIe5 platform (Windows 10, recent versions of Linux, AIX, macOS and so on) probably won't work on the O/S likely to be running on a PCIe1 platform, e.g. Windows NT4 or Linux 1.x kernels.

However, there should be no PCIe3/4/5 driver compatibility issues, since all three will be 'current' standards at the same time, with PCIe3 still in production when PCIe5 is available. The same set of O/Ses, at least as far as major versions are concerned, is therefore likely to be current across all three PCIe generations, so drivers for the cards will likely be available for any typical O/S running on those platforms (excluding custom supercomputers, lab devices and so on).
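The "lowest common denominator" negotiation described in the comment above can be sketched as taking the minimum generation and the minimum electrical width of the two ends. This is a deliberately simplified model under stated assumptions: real PCIe link training (the LTSSM) also performs equalization and may settle on a lower speed or width when signal integrity is poor, none of which is modelled. `Port` and `negotiate` are hypothetical names for illustration.

```python
from dataclasses import dataclass

# Per-lane signalling rate (GT/s) for each PCIe generation.
RATE_GT_S = {1: 2.5, 2: 5.0, 3: 8.0, 4: 16.0, 5: 32.0}


@dataclass(frozen=True)
class Port:
    """Hypothetical description of one end of a link (a card or a slot)."""
    max_gen: int  # highest PCIe generation supported
    lanes: int    # electrically wired lanes


def negotiate(card: Port, slot: Port) -> tuple[int, int]:
    """Return (generation, lanes) both ends support: the lowest common denominator.

    Simplification: ignores the downtraining that real link training can apply.
    """
    return min(card.max_gen, slot.max_gen), min(card.lanes, slot.lanes)


if __name__ == "__main__":
    gen, lanes = negotiate(Port(max_gen=5, lanes=16), Port(max_gen=1, lanes=1))
    print(f"PCIe {gen}.0 x{lanes} at {RATE_GT_S[gen]} GT/s per lane")
```

Under this model, the PCIe5 x16 card in a PCIe1 x1 slot from the thread comes out as PCIe 1.0 x1, matching the behaviour described.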

phoenix_rizzen - Wednesday, July 24, 2019

No, a PCIe 5 x16 card will not work in a PCIe 1 x1 slot.

An x16 card will fit into an x16 slot.

An x16 card will NOT fit into an x8 slot.

An x16 card will NOT fit into an x4 slot.

An x16 card will NOT fit into an x1 slot.

An x8 card will fit into an x16 slot.

An x8 card will fit into an x8 slot.

An x8 card will NOT fit into an x4 slot.

An x8 card will NOT fit into an x1 slot.

An x4 card will fit into an x16 slot.

An x4 card will fit into an x8 slot.

An x4 card will fit into an x4 slot.

An x4 card will NOT fit into an x1 slot.

An x2 card will fit into an x16 slot.

An x2 card will fit into an x8 slot.

An x2 card will fit into an x4 slot.

An x2 card will NOT fit into an x1 slot.

An x1 card will fit into an x16 slot.

An x1 card will fit into an x8 slot.

An x1 card will fit into an x4 slot.

An x1 card will fit into an x1 slot.

Notice the pattern? The slot needs to be the same size or larger than the connector.

(Talking about the physical connector for the card. The electrical connection for the card adds another wrinkle, but you can generally ignore that, e.g. x16 physical but only x8 electrical. If the connector fits in the slot, the card will work.)

asgallant - Wednesday, July 24, 2019

Sorry, but you're just wrong about that. Any PCIe card of any generation can fit into any x1 slot of any generation. x2, x4, and x8 slots won't fit larger cards unless they are open-ended. In consumer-grade hardware, x4 slots are rare (and usually open-ended when they show up in modern hardware), and x8 slots are basically non-existent*.

*PCIe slots wired for x4 and x8 connections often use x16 physical slots anyway.

eldakka - Wednesday, July 24, 2019

"x2, x4, and x8 slots won't fit larger cards unless they are open-ended."

That is purely a manufacturer choosing to make the piece of plastic that makes the receptacle a solid-bordered rectangle piece of plastic instead of a piece of plastic with the end cut out to fit a longer card. This has nothing to do with the PCIe spec. It is solely how the manufacturer built the support and retaining mechanism around the connector. This can be remedied by grabbing your favourite cutting tool and cutting out that piece of plastic, so the card's connector can sink into the slot with the extra length of the connector protruding out the end.

As per the spec, an x16 card has to work in an x1 slot. Of course, there has to be physical room for the card to be jammed in. I mean, when putting an x1 slot in, the manufacturer could have envisioned that only tiny 5cm cards would ever be put in, so they could have put a power supply or other components 5cm beyond the edge of the slot so you can't fit a 10cm card in, even though that 10cm-long card might be an x1 card as well.

Those are all constraints of the shape of the case, motherboard, and so on. They have nothing to do with the electrical and signalling capabilities of the slot itself and/or those of the type of card (x1, xX, PCIe1, PCIe5).
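The physical-fit rules debated in this thread (a closed-ended slot must be at least as wide as the card's edge connector, while an open-ended slot lets a longer connector overhang its end) reduce to a one-line check. The sketch below is a hypothetical model under that assumption only; it says nothing about whether the card then works electrically, and it ignores clearance from nearby components, which eldakka points out above is a case and board constraint. `Slot` and `card_fits` are illustrative names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slot:
    """Hypothetical model of a slot's plastic receptacle."""
    physical_width: int       # connector length: 1, 4, 8 or 16 lanes' worth
    open_ended: bool = False  # True if the end of the receptacle is cut away


def card_fits(card_physical_width: int, slot: Slot) -> bool:
    """True if the card's edge connector can physically be inserted into the slot.

    A closed slot must be at least as wide as the connector; an open-ended slot
    accepts a longer connector overhanging its end. Component clearance is ignored.
    """
    return slot.open_ended or card_physical_width <= slot.physical_width


if __name__ == "__main__":
    print(card_fits(16, Slot(8)))                   # closed x8 slot, x16 card
    print(card_fits(16, Slot(1, open_ended=True)))  # open-ended x1 slot, x16 card
```

Whether a card that fits then links up at a given speed and width is the separate negotiation question discussed earlier in the thread.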

phoenix_rizzen - Thursday, July 25, 2019

"That is purely a manufacturer choosing to make the piece of plastic that makes the receptacle a solid-bordered rectangle piece of plastic instead of a piece of plastic with the end cut out to fit a longer card."

IOW, an x16 card won't fit into an x8 slot.

An x16 card will work with an x8 connector (after all, increasing the number of lanes just extends the length of the connector), but I have yet to see an open-ended PCIe slot on any motherboard (desktop or server; Supermicro, Tyan, Gigabyte, Asus, Asrock, or MSI). Maybe they exist, maybe they're part of the PCIe spec, but they aren't commonplace by any definition of the word.

Thus, an x16 card won't fit into any existing x8 slots.

"In consumer-grade hardware, x4 slots are rare (and usually open-ended when they show up in modern hardware), and x8 slots are basically non-existent*."

There are lots of x4 and x8 slots on better-than-garbage motherboards, and they are very commonplace on server motherboards (which is what I use more often than not). NICs, HBAs, RAID controllers, etc. are generally x8 cards. We look for motherboards that have lots of x8 slots to fit these into. EPYC makes for wonderful storage and VM hosting servers as there's plenty of PCIe lanes to directly connect to storage. :) Lots of x8 cards can go into EPYC motherboards.