Intel Unveils Meteor Lake Architecture: Intel 4 Heralds the Disaggregated Future of Mobile CPUs

by Gavin Bonshor on September 19, 2023 11:35 AM EST

SoC Tile, Part 2: NPU Adds a Physical AI Engine

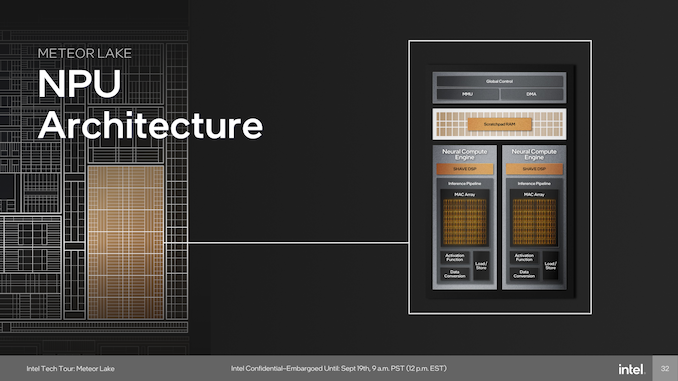

The last major block on the SoC tile is a full-featured Neural Processing Unit (NPU), a first for Intel's client-focused processors. The NPU brings AI acceleration directly onto the chip and is compatible with standardized programming interfaces such as OpenVINO. Architecturally, the NPU is a multi-engine design comprising two neural compute engines that can either collaborate on a single task or operate independently. This flexibility matters for a diverse mix of workloads, and it could also benefit future workloads that are still being developed or have not yet been optimized for AI acceleration. Two primary components of these neural compute engines stand out: the Inference Pipeline and the SHAVE DSP.
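To illustrate why the two operating modes matter, here is a toy timing model in Python. It assumes, purely for illustration, that each engine completes a fixed number of abstract work units per millisecond and that a collaborative split divides one task evenly with no synchronization overhead. The rate, task names, and sizes are hypothetical; Intel has not described its NPU scheduling at this level of detail.

```python
from dataclasses import dataclass

@dataclass
class Task:
    name: str
    work_units: int  # abstract amount of work (hypothetical unit)

ENGINE_RATE = 100  # hypothetical: work units one engine completes per ms

def collaborative_time_ms(task: Task, engines: int = 2) -> float:
    """Both engines split one task evenly; it ends when both shares finish."""
    per_engine = task.work_units / engines
    return per_engine / ENGINE_RATE

def independent_time_ms(a: Task, b: Task) -> float:
    """Each engine runs its own task; both are done when the slower one finishes."""
    return max(a.work_units, b.work_units) / ENGINE_RATE

big = Task("image-segmentation", 1000)
small = Task("noise-suppression", 200)

# One large task: splitting it across both engines halves its latency.
print(collaborative_time_ms(big))                          # 5.0
# Two unrelated tasks side by side finish in max() rather than sum() time.
print(independent_time_ms(big, small))                     # 10.0
print((big.work_units + small.work_units) / ENGINE_RATE)   # 12.0 if serialized on one engine
```

Collaboration helps latency-bound single tasks, while independent operation helps when several smaller AI workloads run at once.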

The Inference Pipeline is primarily responsible for neural network execution. It minimizes data movement and relies on fixed-function hardware for the most computationally demanding operations. The pipeline comprises a sizable array of Multiply-Accumulate (MAC) units, an activation function block, and a data conversion block. In essence, the Inference Pipeline is a dedicated block optimized for ultra-dense matrix math.
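Conceptually, the three blocks of the Inference Pipeline line up with a familiar quantized-inference sequence: multiply-accumulate into a wide accumulator, apply an activation, then convert back to a narrow data type. The sketch below assumes INT8-range inputs, INT32-style accumulation, ReLU, and a simple scale-and-clamp requantization; Intel has not said these are the exact formats or functions the hardware uses, and plain Python loops stand in for work the MAC array does in parallel.

```python
def mac_matmul(a, b):
    """MAC array stand-in: each output is a sum of products in a wide accumulator."""
    rows, inner, cols = len(a), len(b), len(b[0])
    assert all(len(row) == inner for row in a), "inner dimensions must match"
    out = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = 0
            for k in range(inner):
                acc += a[i][k] * b[k][j]  # one multiply-accumulate operation
            out[i][j] = acc
    return out

def relu(x):
    """Activation function block (ReLU assumed for illustration)."""
    return [[max(0, v) for v in row] for row in x]

def requantize(x, scale, lo=-128, hi=127):
    """Data conversion block: rescale the wide accumulator and clamp to INT8 range."""
    return [[min(hi, max(lo, round(v * scale))) for v in row] for row in x]

def inference_layer(activations, weights, scale):
    return requantize(relu(mac_matmul(activations, weights)), scale)

acts = [[10, -20, 30], [2, 4, -2]]      # INT8-range inputs
weights = [[1, 2], [3, -4], [5, 6]]     # INT8-range weights
print(mac_matmul(acts, weights))                   # [[100, 280], [4, -24]]
print(inference_layer(acts, weights, scale=0.5))   # [[50, 127], [2, 0]]
```

Note how the negative accumulator value is zeroed by the activation and the 280 overflows INT8 after scaling, so the conversion block clamps it to 127.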

The SHAVE DSP, or Streaming Hybrid Architecture Vector Engine, is a vector DSP designed specifically for AI applications and workloads. It can be pipelined together with the Inference Pipeline and the Direct Memory Access (DMA) engine, allowing all three to work in parallel and improving the NPU's overall performance. The DMA engine, for its part, efficiently manages data movement to keep the other units fed.
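The benefit of pipelining the DMA engine, Inference Pipeline, and SHAVE DSP can be shown with a simple cycle-count model: while one tile of data is being computed, the next is already being fetched and the previous one is being post-processed. The stage latencies below are invented for illustration, and the model ignores stalls, buffer limits, and memory contention.

```python
# Hypothetical per-tile stage latencies in cycles (illustrative only).
STAGES = {"dma_load": 4, "inference": 10, "shave_post": 3}

def sequential_cycles(num_tiles, stages=STAGES):
    """Each tile passes through every stage before the next tile starts."""
    return num_tiles * sum(stages.values())

def pipelined_schedule(num_tiles, stages=STAGES):
    """A tile enters a stage once it has left the previous stage AND that stage
    has finished the previous tile. Returns finish times as [tile][stage]."""
    names = list(stages)
    finish = [[0] * len(names) for _ in range(num_tiles)]
    for t in range(num_tiles):
        for s, name in enumerate(names):
            ready = finish[t][s - 1] if s > 0 else 0   # data from the previous stage
            free = finish[t - 1][s] if t > 0 else 0    # stage busy with the previous tile
            finish[t][s] = max(ready, free) + stages[name]
    return finish

def pipelined_cycles(num_tiles, stages=STAGES):
    return pipelined_schedule(num_tiles, stages)[-1][-1]

tiles = 8
print(sequential_cycles(tiles))   # 136
print(pipelined_cycles(tiles))    # 87
```

Once the pipeline fills, a new tile completes roughly every 10 cycles (the slowest stage) instead of every 17, which is why overlapping the three units pays off.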

For device management, the NPU is designed to be fully compatible with Microsoft's new compute driver model, known as MCDM (Microsoft Compute Driver Model). This isn't merely a checkbox feature; it's an optimized implementation with a strong emphasis on security. The NPU's Memory Management Unit (MMU) complements this by offering multi-context isolation and by facilitating rapid, power-efficient transitions between different power states and workloads.
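Multi-context isolation means each context gets its own address translation, so one application's workload cannot reach memory mapped for another. The toy MMU below keeps a separate page table per context, keyed by virtual page number. The class name, page size, and fault behavior are assumptions made for illustration, not a description of Intel's MMU or of the MCDM interface.

```python
PAGE_SIZE = 4096  # assumed page size for the example

class IsolationFault(Exception):
    """Raised when a context touches an address it has no mapping for."""

class ToyNpuMmu:  # hypothetical model, not a real driver interface
    def __init__(self):
        self.page_tables = {}  # context id -> {virtual page number: physical page number}

    def map(self, ctx, vpn, ppn):
        self.page_tables.setdefault(ctx, {})[vpn] = ppn

    def translate(self, ctx, vaddr):
        vpn, offset = divmod(vaddr, PAGE_SIZE)
        table = self.page_tables.get(ctx, {})
        if vpn not in table:
            raise IsolationFault(f"context {ctx!r}: no mapping for {hex(vaddr)}")
        return table[vpn] * PAGE_SIZE + offset

mmu = ToyNpuMmu()
mmu.map("app_a", vpn=0, ppn=7)
mmu.map("app_b", vpn=0, ppn=9)   # same virtual page, different physical page

print(hex(mmu.translate("app_a", 0x10)))  # 0x7010
print(hex(mmu.translate("app_b", 0x10)))  # 0x9010
try:
    mmu.translate("app_a", PAGE_SIZE)     # virtual page 1 is not mapped for app_a
except IsolationFault as err:
    print("fault:", err)
```

Both contexts use virtual page 0, yet each lands on its own physical page, so neither can observe the other's data through that address.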

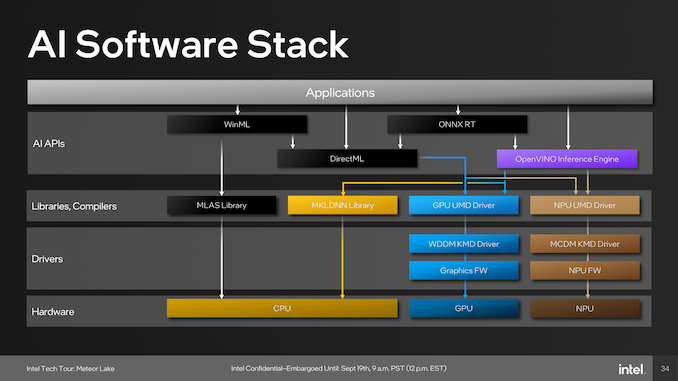

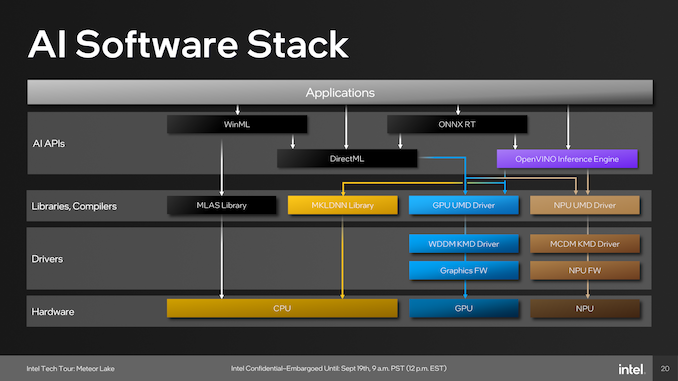

As part of building an ecosystem that can capitalize on the NPU, Intel has been courting developers with a number of tools. One of these is the open-source OpenVINO toolkit, which supports models from various frameworks such as TensorFlow, PyTorch, and Caffe. Supported APIs include Windows Machine Learning (WinML), including its DirectML component, as well as the ONNX Runtime accelerator and OpenVINO itself.

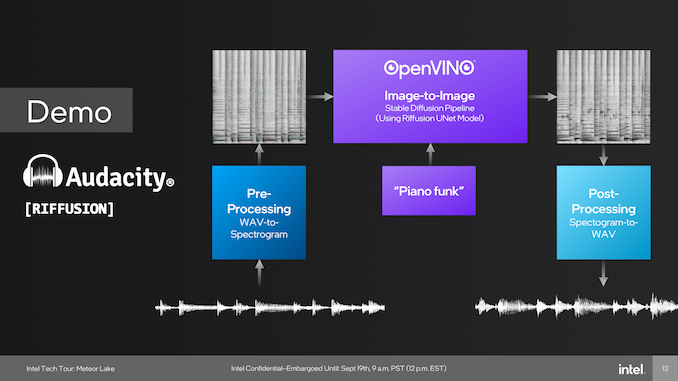

One example of the NPU's capabilities came in a live demo using Audacity during Intel's Tech Tour in Penang, Malaysia. In it, Intel Fellow Tom Petersen used Audacity to showcase a new plugin called Riffusion. He fed a funky audio track with vocals into Audacity, which separated it into two tracks: vocals and music. With the tracks separated using the plugin, Petersen was then able to change the style of the music track to a dance track.

The Riffusion plugin for Audacity builds on Stable Diffusion, an open-source AI model that traditionally generates images from text. Riffusion goes one step further by generating images of spectrograms, which can then be converted into audio. We touch on Riffusion and Stable Diffusion because this demo was Intel's primary showcase of the NPU during its Tech Tour 2023 in Penang, Malaysia.
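The spectrogram-to-audio step can be sketched with an oscillator bank: each frequency row of the image drives a sine oscillator whose amplitude follows that row's brightness from column to column. This is a deliberately simple stand-in; phase-reconstruction methods such as Griffin-Lim are the more usual approach for this kind of conversion, and the sample rate, hop size, bin frequencies, and linear pixel-to-amplitude mapping here are assumptions, not Riffusion's actual parameters.

```python
import math

SAMPLE_RATE = 8000   # assumed output sample rate (Hz)
HOP = 200            # assumed samples per spectrogram column (25 ms at 8 kHz)

def pixel_to_amplitude(pixel):
    """Assumed linear mapping from an 8-bit pixel (0-255) to amplitude (0.0-1.0)."""
    return pixel / 255.0

def spectrogram_to_audio(image, bin_freqs):
    """image[b][t] is the brightness of frequency bin b in time column t.
    Each bin drives one sine oscillator; its phase carries over between
    columns so the waveform of a steady tone stays continuous."""
    num_bins, num_frames = len(image), len(image[0])
    phases = [0.0] * num_bins
    audio = []
    for t in range(num_frames):
        for _ in range(HOP):
            sample = 0.0
            for b in range(num_bins):
                sample += pixel_to_amplitude(image[b][t]) * math.sin(phases[b])
                phases[b] += 2 * math.pi * bin_freqs[b] / SAMPLE_RATE
            audio.append(sample / num_bins)  # normalize so output stays within [-1, 1]
    return audio

# Two bins (440 Hz and 880 Hz); the 880 Hz bin fades in over four columns.
image = [
    [255, 255, 255, 255],   # 440 Hz row
    [0, 85, 170, 255],      # 880 Hz row
]
audio = spectrogram_to_audio(image, bin_freqs=[440.0, 880.0])
print(len(audio))   # 800 samples = 4 columns * 200
```

Amplitudes jump at column boundaries here, which can produce audible clicks; a practical converter would interpolate amplitudes or use windowed overlap-add.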

Although the demo did draw on resources from both the compute and graphics tiles, everything was brought together by the NPU, which processed multiple elements to spit out an EDM-flavored track featuring the same vocals. WinML offers an example of how applications pool the various tiles together. Part of Microsoft's operating systems since Windows 10, WinML typically runs workloads on the CPU through the MLAS library, while workloads going through DirectML make use of both the CPU and GPU.
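One way to picture a workload using both the CPU and GPU is priority-ordered partitioning, similar in spirit to how ONNX Runtime lets an application list execution providers in order of preference: each operator goes to the first device in the list that can run it. The sketch below models only that assignment logic. The device capability sets and the `CustomPostProcess` operator are invented for illustration, and the API-to-device table is a simplification of the paragraph above, not a real Windows or ONNX Runtime interface.

```python
# Hypothetical capability sets: which operators each device can run in this toy model.
CAPABILITIES = {
    "gpu": {"Conv", "MatMul", "Relu"},
    "cpu": {"Conv", "MatMul", "Relu", "CustomPostProcess"},  # CPU runs everything here
}

# Simplified routing per the paragraph above: WinML on the CPU (via MLAS),
# DirectML on the GPU with the CPU picking up what the GPU cannot run.
API_DEVICE_ORDER = {
    "WinML": ["cpu"],
    "DirectML": ["gpu", "cpu"],
}

def partition(ops, api):
    """Assign each operator to the first device in the API's priority list that supports it."""
    order = API_DEVICE_ORDER[api]
    plan = []
    for op in ops:
        device = next((d for d in order if op in CAPABILITIES[d]), None)
        if device is None:
            raise RuntimeError(f"{op} is not supported by any device for {api}")
        plan.append((op, device))
    return plan

graph = ["Conv", "Relu", "MatMul", "CustomPostProcess"]
print(partition(graph, "WinML"))     # every operator on 'cpu'
print(partition(graph, "DirectML"))  # Conv/Relu/MatMul on 'gpu', CustomPostProcess on 'cpu'
```

The ordered list keeps the policy simple: the preferred device takes whatever it can handle, and anything left over falls through to the next one.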

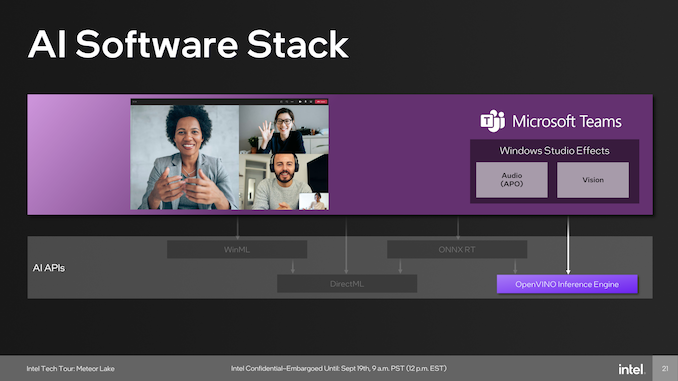

Other developers include Microsoft, which uses the NPU in tandem with the OpenVINO inference engine to provide cool features like speech-to-text meeting transcripts, audio improvements such as background-noise suppression, and even background enhancement and focusing capabilities. Another big gun using AI through the NPU is Adobe, which offers a host of features to users of its creative applications. These include generative AI capabilities and photo manipulation techniques in Photoshop, such as hair refinement, element editing, and neural filters; there's a lot going on.

107 Comments


tipoo - Tuesday, September 19, 2023

Is anyone at AT planning on deep diving the A17 Pro?

Ryan Smith - Tuesday, September 19, 2023

At the moment, no. I do not have a mobile editor to work on such projects.

FWhitTrampoline - Tuesday, September 19, 2023

Oh no, that's bad news, as Apple appears to have gone even wider with the decode resources on the A17 P-cores than it did with the A14's Firestorm cores, if Apple's promotional material is correct! Maybe Chips and Cheese will look at the A17's P-core design, with some micro-benchmarks as well.

tipoo - Tuesday, September 19, 2023

Yeah, that's too bad. It looks like the E-cores got a bigger bump than the P-cores, but they didn't really advertise it, given how strangely they mentioned it.

FWhitTrampoline - Wednesday, September 20, 2023

The slide from Intel on its Crestmont E-core design (block diagram) does not look that much different from Gracemont's block diagram, and the Redwood Cove core design (block diagram) still appears to use a 6-wide instruction decoder, similar to Golden Cove. But more info is needed concerning micro-op issue rates and other parts of Redwood Cove's core design.

GeoffreyA - Thursday, September 21, 2023

It's hard to see the instruction decoders being increased all that much, because of their power burden in x86.

ikjadoon - Tuesday, September 19, 2023

> As expected, Meteor Lake brings generational IPC gains through the new Redwood Cove cores.

Redwood Cove does not have any IPC gain, I believe. Is there a citation or slide regarding this?

This will now be the third Intel CPU generation with 0% to 1% IPC gains in its P-cores.

Gavin Bonshor - Tuesday, September 19, 2023

Intel confirmed to me that Redwood Cove would have IPC gains over Raptor Cove. When I get back (been sat at a PC a lot the last few days), I'll grab it for you.

kwohlt - Tuesday, September 19, 2023

There are so many changes in MTL that it would make sense to just save a new P-core uArch for the next gen, especially when clock speed/watt is going up a decent amount, so it's not like perf/watt is stagnating.

GeoffreyA - Thursday, September 21, 2023

I think they've been following the old tick-tock system, Sunny and Golden Cove being the tocks, and Willow and Raptor the ticks. So, it's possible that Redwood would bring some proper changes.