Intel Rocket Lake (14nm) Review: Core i9-11900K, Core i7-11700K, and Core i5-11600K

by Dr. Ian Cutress on March 30, 2021 10:03 AM EST- Posted in

- CPUs

- Intel

- LGA1200

- 11th Gen

- Rocket Lake

- Z590

- B560

- Core i9-11900K

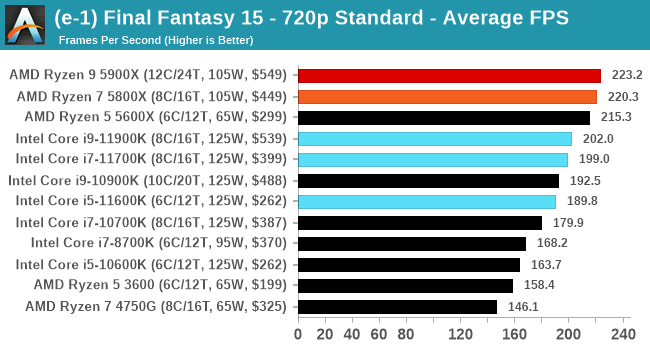

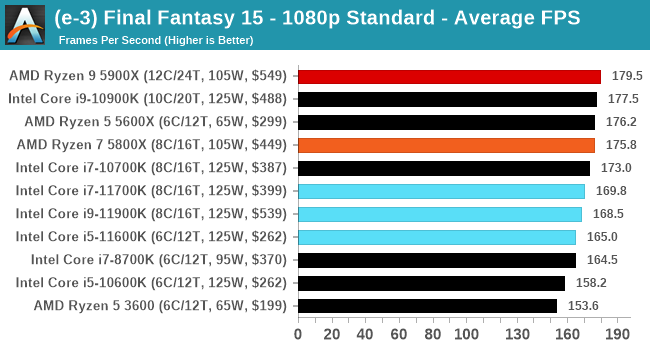

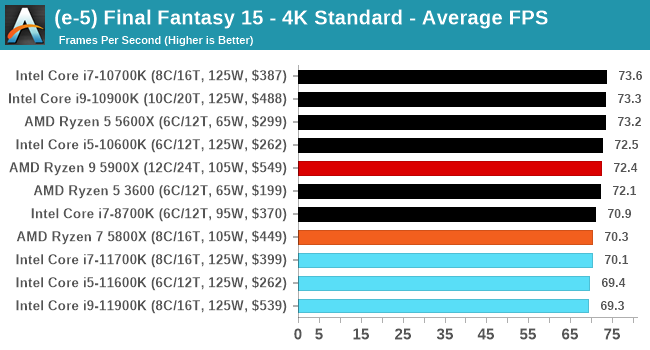

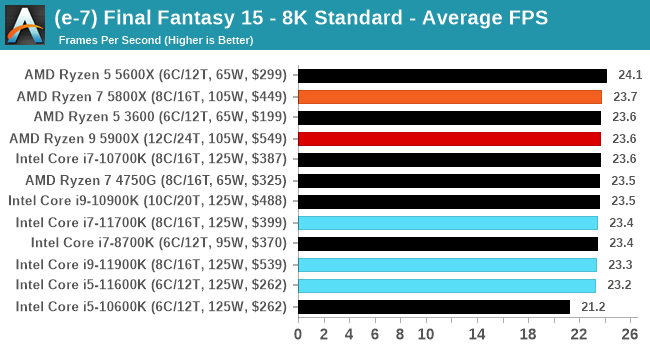

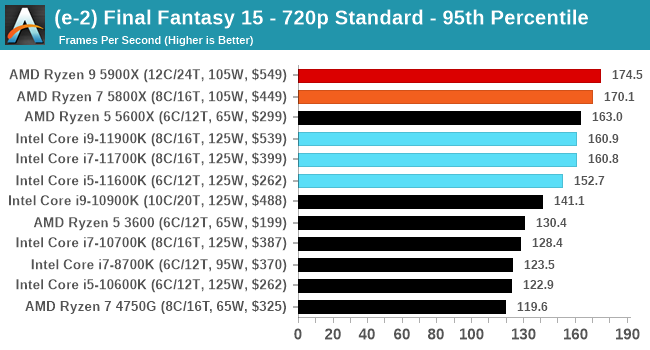

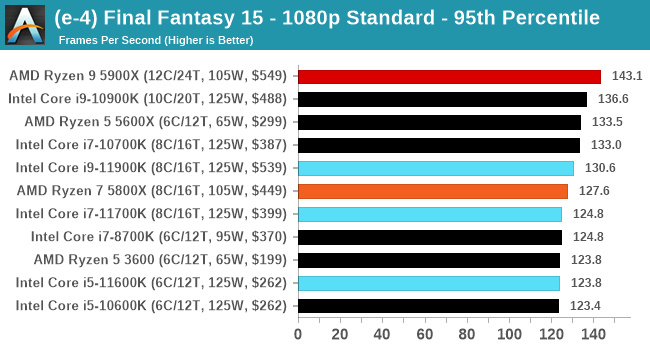

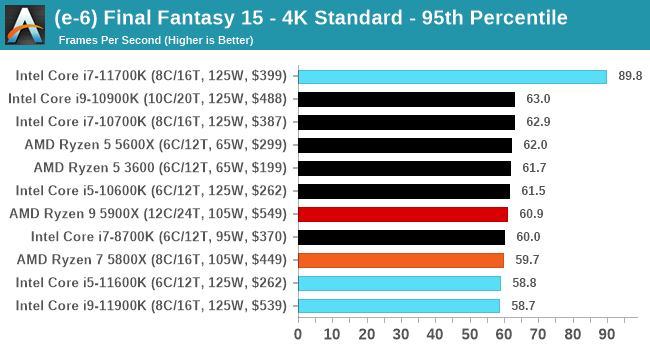

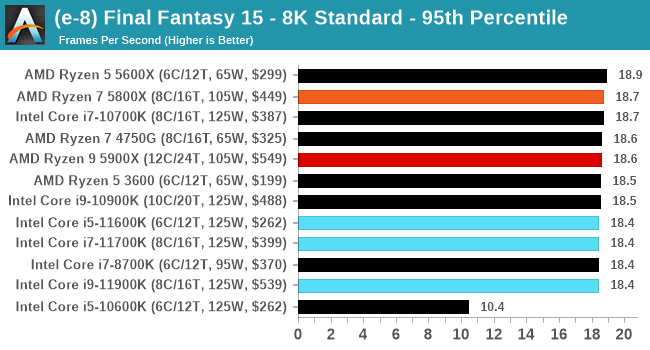

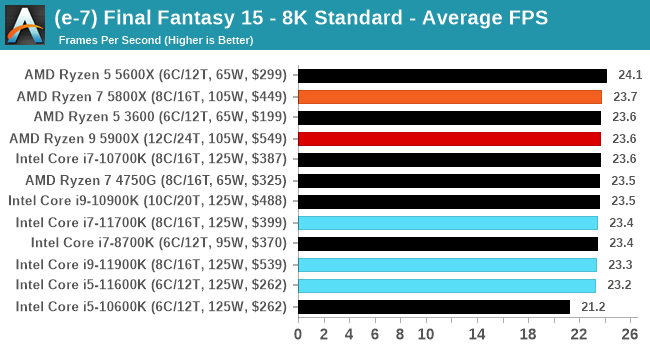

Gaming Tests: Final Fantasy XV

When it arrived on PC, Final Fantasy XV: Windows Edition was given a graphical overhaul as part of its port from console. As a fantasy RPG with a long history, it puts the fruits of Square Enix’s successful partnership with NVIDIA on display. The game uses the in-house Luminous Engine and, as with other Final Fantasy games, pushes the imagination of what we can do with the hardware underneath us. To that end, FFXV was one of the first games to promote the use of ‘video game landscape photography’, due in part to the extensive detail even at long range, but also to the integration of NVIDIA’s Ansel software, which allowed super-resolution imagery and post-processing effects to be applied.

In preparation for the launch of the game, Square Enix opted to release a standalone benchmark. Using the Final Fantasy XV standalone benchmark gives us a lengthy standardized sequence to record, although it should be noted that its heavy use of NVIDIA technology means that the Maximum setting has problems - it renders items off screen. To get around this, we use the standard preset which does not have these issues. We use the following settings:

- 720p Standard, 1080p Standard, 4K Standard, 8K Standard

For automation, the title accepts command line inputs for both resolution and settings, and then auto-quits when finished. As with the other benchmarks, we keep starting runs until 10 minutes have passed for each resolution/setting combination, and then take averages. Realistically, because of the length of this test, this equates to two runs per setting.
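The run-until-the-time-budget-is-spent approach described above can be sketched roughly as follows. This is a minimal illustration and not the actual test harness: the names `run_until_budget`, `summarize`, and `percentile` are hypothetical, `run_once` stands in for whatever launches the benchmark and returns per-frame times in milliseconds, and reporting the 95th percentile as the 95th-percentile frame time converted to FPS is an assumption about how that figure is derived.

```python
import time
from statistics import mean
from typing import Callable, Sequence, Tuple


def percentile(values: Sequence[float], pct: float) -> float:
    """Linearly interpolated percentile (pct in 0..100) of a non-empty sequence."""
    ordered = sorted(values)
    if len(ordered) == 1:
        return ordered[0]
    rank = (pct / 100.0) * (len(ordered) - 1)
    lo = int(rank)
    hi = min(lo + 1, len(ordered) - 1)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (rank - lo)


def summarize(frame_times_ms: Sequence[float]) -> Tuple[float, float]:
    """Return (average FPS, 95th-percentile FPS) for one run.

    Assumption: the percentile is taken over frame times, so the
    resulting FPS figure reflects the slower frames of the run.
    """
    avg_fps = 1000.0 / mean(frame_times_ms)
    p95_fps = 1000.0 / percentile(frame_times_ms, 95)
    return avg_fps, p95_fps


def run_until_budget(
    run_once: Callable[[], Sequence[float]],
    budget_s: float = 600.0,
    clock: Callable[[], float] = time.monotonic,
) -> Tuple[float, float, int]:
    """Start new runs until budget_s seconds have passed, then average them.

    run_once is a hypothetical callable that executes one full benchmark
    pass and returns its frame times in milliseconds.
    """
    start = clock()
    runs = []
    while True:
        runs.append(summarize(run_once()))
        # A run that is already in progress is allowed to finish; we only
        # decide whether to start another one.
        if clock() - start >= budget_s:
            break
    avg_fps = mean(r[0] for r in runs)
    p95_fps = mean(r[1] for r in runs)
    return avg_fps, p95_fps, len(runs)
```

With a benchmark pass that takes more than five but less than ten minutes, this loop starts exactly two runs inside a 10-minute budget, which matches the "two runs per setting" noted above.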

| AnandTech | Low Resolution Low Quality | Medium Resolution Low Quality | High Resolution Low Quality | Medium Resolution Max Quality |
| --- | --- | --- | --- | --- |
| Average FPS |  |  |  |  |
| 95th Percentile |  |  |  |  |

All of our benchmark results can also be found in our benchmark engine, Bench.

279 Comments


GeoffreyA - Tuesday, March 30, 2021 - link

It could be due to x264 limiting the number of threads, because when vertical resolution divided by threads drops below a certain threshold (I think round about 30 or 40), quality begins to suffer.

GeoffreyA - Wednesday, March 31, 2021 - link

I tested this now on FFmpeg but it should be the same on Handbrake because the x264/5 libraries are doing the actual encoding.

I only have a 4C/4T CPU but used the "-threads" switch to request more. On x264, regardless of resolution, once more than 16 threads are asked for, it logs a warning that it's not recommended but goes ahead and uses the requested count, up to 128. I assume that running at default settings, like AT is probably doing with Handbrake, will let x264 cut off at 16 by itself. If someone could confirm this with a 32-thread CPU, that would be nice. As for x265, I gave it a try as well and the encoder refuses to go on if more than 16 threads are requested, saying the range must be between 0 and X265_MAX_FRAME_THREADS.

In short, I reckon both these codecs are cutting off at 16 threads on default settings. If Ian or someone else could test how much extra is gained by manually putting in the count on a 32T CPU, that would be interesting.

scott_htpc - Tuesday, March 30, 2021 - link

Splat. Backporting doesn't really work & dead-end platform.

What I'd really like to read is a detailed narrative of Intel's blunders over the last 5-10 years. To me, it probably makes a case study in failed leadership & hubris, but I would really like to read an authoritative, detailed account. I'm curious why the risks of their decisions were not enough to dissuade them to take a better path forward.

Prosthetic Head - Tuesday, March 30, 2021 - link

Yes, some sort of post mortem on Intel development over the last few years would be interesting. Once they abandoned the Pentium 4 madness, they did a good job with Core, Core2 and then the early stages of the 'i' series. Because AMD were by that point down their own dead end, they had essentially no competition for about a decade. The tempting easy explanation is that as a de facto monopoly for desktop and laptop CPUs, they only innovated enough to keep the upgrade cycle ticking over, then when AMD made a rapid comeback they got caught with their pants down and some genuine technical difficulties in fab tech.... But the reality could be a lot more complex and interesting than that.

Hifihedgehog - Tuesday, March 30, 2021 - link

> But the reality could be a lot more complex and interesting than that.

The reality is Conroe was a once-in-a-lifetime IPC improvement, literally 90% better (or nearly double the performance!) clock-for-clock than the ill-fated Pentium 4 (see here: https://www.reddit.com/r/intel/comments/m7ocxj/pen...). They are not going to get that again unless Gelsinger clones himself across Intel's entire leadership team. Now, they may get something Zen-like in the ~50% range, but nothing Conroe-like unless ALL the stars align after a decade of complacency.

Hifihedgehog - Tuesday, March 30, 2021 - link

https://www.reddit.com/r/intel/comments/m7ocxj/pen...

29a - Tuesday, March 30, 2021 - link

Keep in mind that P4 was a piece of shit built for marketing high clock speeds and was easily beaten by Athlon 64 running 1 GHz slower, so getting that much IPC wasn't as hard as usual.

GeoffreyA - Wednesday, March 31, 2021 - link

"Keep in mind that P4 was a piece of"

Not to defend the P4, but Northwood wasn't half bad in the Athlon XP's time, beating it quite a lot. It was Prescott that mucked it all up.

TheinsanegamerN - Wednesday, March 31, 2021 - link

TBF, the only reason it wasn't half bad is AMD's willingness to just abandon XP. I mean, only 2.23 GHz? 3 GHz OCs were not hard to do with their mobile lineup, and those obliterated anything Intel would have until Conroe. If they had released 2.4, 2.6, and 2.8 GHz Athlon XPs, Intel would have been losing every benchmark against them.

GeoffreyA - Friday, April 2, 2021 - link

Oh yes, the XP had the higher IPC and would have given Intel a sound drubbing if its clocks were only higher. Thankfully, the Athlon 64 came and turned the tables round. I remember in those days my heart was set on the 3200+ Barton but I ended up with a K8 budget system of sorts.