Apple Announces 5nm A14 SoC - Meagre Upgrades, Or Just Less Power Hungry?

by Andrei Frumusanu on September 15, 2020 4:30 PM EST

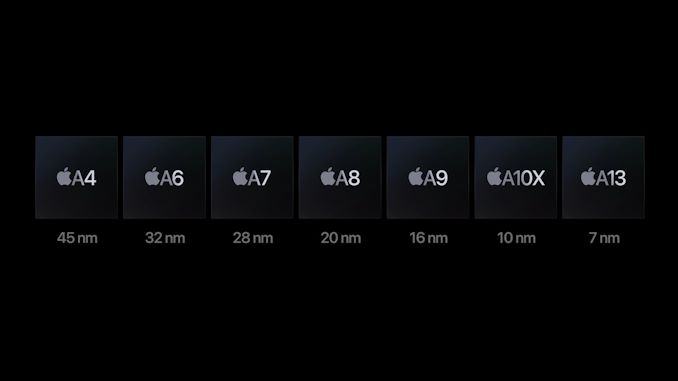

Amongst the new iPad and Watch devices released today, Apple made news in releasing the new A14 SoC. Apple’s newest generation silicon design is noteworthy in that it is the industry’s first commercial chip to be manufactured on a 5nm process node, making it the first of a new generation of designs that are expected to significantly push the envelope in the semiconductor space.

Apple’s event disclosures this year were a bit confusing as the company was comparing the new A14 metrics against the A12, given that’s what the previous generation iPad Air had been using until now – we’ll need to add some proper context behind the figures to extrapolate what this means.

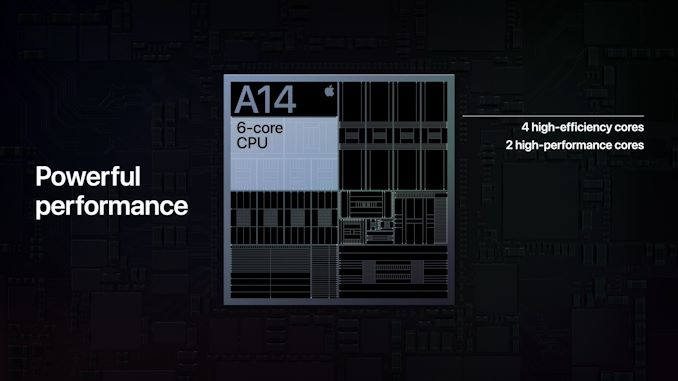

On the CPU side of things, Apple is using new generation large performance cores as well as new small power efficient cores, but remains in a 2+4 configuration. Apple here claims a 40% performance boost on the part of the CPUs, although the company doesn’t specify exactly what this metric refers to – is it single-threaded performance? Is it multi-threaded performance? Is it for the large or the small cores?

What we do know though is that it’s in reference to the A12 chipset, and the A13 had already claimed a 20% boost over that generation. Simple arithmetic thus dictates that the A14 would be roughly 17% faster than the A13 (1.40 / 1.20 ≈ 1.167) if Apple’s performance metric measurements are consistent between generations.

On the GPU side, we see a similar calculation, as Apple claims a 30% performance boost compared to the A12 generation thanks to the new 4-core GPU in the A14. Normalising this against the A13, whose GPU was likewise claimed to be 20% faster than the A12’s, this would mean only an 8.3% performance boost, which is actually quite meagre.
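
The normalisation behind these CPU and GPU figures can be sketched in a few lines. This is only an illustration: it assumes Apple's metric is consistent between generations and that the A13 was claimed to be 20% faster than the A12 for both CPU and GPU; the function name `relative_uplift` is made up for this example.

```python
def relative_uplift(new_vs_base: float, old_vs_base: float) -> float:
    """Uplift of the new chip over the old one, given each chip's
    claimed uplift over a common baseline.

    All values are fractions, so 0.40 means 40%.
    """
    return (1.0 + new_vs_base) / (1.0 + old_vs_base) - 1.0


# Claimed uplifts over the A12 baseline.
# The 20% A13 figure is assumed to apply to both CPU and GPU.
cpu = relative_uplift(0.40, 0.20)  # A14 CPU vs A13 CPU
gpu = relative_uplift(0.30, 0.20)  # A14 GPU vs A13 GPU

print(f"CPU: A14 vs A13 = {cpu:.1%}")
print(f"GPU: A14 vs A13 = {gpu:.1%}")
```

Under those assumptions the calculation gives about 16.7% for the CPU and 8.3% for the GPU. The claims compound multiplicatively, so the ratios are divided rather than the percentages subtracted.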

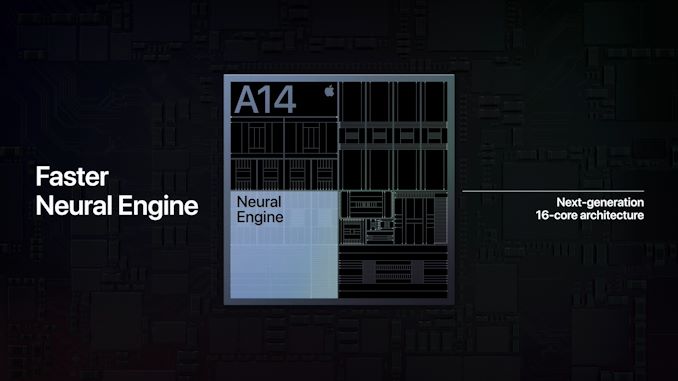

In other areas, Apple is boasting more significant performance jumps such as the new 16-core neural engine which now sports up to 11TOPs inferencing throughput, which is over double the 5TOPs of the A12 and 83% more than the estimated 6TOPs of the A13 neural engine.

Apple does advertise a new image signal processor amongst the new features of the SoC, but otherwise the performance metrics (aside from the neural engine) seem rather conservative given that the new chip boasts 11.8 billion transistors, a roughly 39% generational increase over the A13’s 8.5bn figure.
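
The neural engine and transistor-count comparisons are plain percentage increases, which can be checked with a short sketch. The inputs are the figures quoted above (the A13's 6TOPs being an estimate); the helper name `percent_increase` is hypothetical.

```python
def percent_increase(new: float, old: float) -> float:
    """Percentage by which `new` exceeds `old`."""
    return (new / old - 1.0) * 100.0


# Neural engine throughput in TOPs (the A13 value is an estimate).
npu_vs_a12 = percent_increase(11, 5)
npu_vs_a13 = percent_increase(11, 6)

# Transistor counts in billions, A14 vs A13.
transistors = percent_increase(11.8, 8.5)

print(f"Neural engine vs A12: +{npu_vs_a12:.1f}%")
print(f"Neural engine vs A13: +{npu_vs_a13:.1f}%")
print(f"Transistors vs A13:   +{transistors:.1f}%")
```

This gives roughly +120% and +83.3% for the neural engine and about +38.8% for the transistor count, a far larger jump than the normalised CPU and GPU claims.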

The one theory I have is that Apple might have finally pulled back on the excessive peak power draw at the maximum performance states of the CPUs and GPUs. Peak performance thus wouldn’t have seen such a large jump this generation, in favour of more sustainable thermal figures.

Apple’s A12 and A13 chips were large performance upgrades on both the CPU and GPU side; however, one criticism I had made of the company’s designs is that they both increased the power draw beyond what is usually sustainable in a mobile thermal envelope. This meant that while the designs had amazing peak performance figures, the chips were unable to sustain them for prolonged periods beyond 2-3 minutes. Even so, the devices throttled to performance levels that were still ahead of the competition, leaving Apple in a leadership position in terms of efficiency.

What speaks against such a theory is that Apple made no mention at all of concrete power or power efficiency improvements this generation, which is rather unusual given that they’ve traditionally always remarked on this aspect of the new A-series designs.

We’ll just have to wait and see if this is indicative of the actual products not having improved in this regard, or if it’s just an omission and side-effect of the new, more streamlined presentation style of the event.

Whatever the performance and efficiency figures are, what Apple can boast about is having the industry’s first ever 5nm silicon design. The new TSMC-fabricated A14 thus represents the cutting-edge of semiconductor technology today, and Apple made sure to mention this during the presentation.

Related Reading:

- The Apple iPhone 11, 11 Pro & 11 Pro Max Review: Performance, Battery, & Camera Elevated

- The Samsung Galaxy S20+, S20 Ultra Exynos & Snapdragon Review: Megalomania Devices

- TSMC Expects 5nm to be 11% of 2020 Wafer Production (sub 16nm)

- ‘Better Yield on 5nm than 7nm’: TSMC Update on Defect Rates for N5

127 Comments

ANORTECH - Thursday, September 17, 2020 - link

Why are not scaled better?

jjjag - Tuesday, September 15, 2020 - link

You are assuming that all of the current CPU/GPU capacity in something like an A12 is already being used. That is not true. Your phone/ipad is not using 6 cores to do anything. Running ML-type tasks on a CPU/GPU is less efficient than having a dedicated/specialized processor for this.

I mean the fact that you assume, by default, that you know better than Apple, Samsung, Huawei, and Qualcomm tells it all right there

ikjadoon - Tuesday, September 15, 2020 - link

Sigh, a troll comment masquerading as a correction. Faster & larger CPUs/GPUs are not for performance alone, but for more efficient processing (i.e., the race to idle) and longer software update cycles.

Not only that, but larger CPU cores & GPU cores can run at lower frequencies (see the mess of A12's DVFS curve), further increasing efficiency. Again, see A13 for a perfect problem.

GPU cores are also purely scalable: there's hardly an issue of "unused cores" during GPU events.

Likewise, because iOS devices are updated for nearly half-a-decade while iOS increases in complexity, a significant reserve of CPU / GPU power is much more important.

The fact that fixed-function hardware simply exists is not an argument for its large die reservation, relative to high-end iOS / iPadOS consumer experiences.

There's plenty of AI/ML hype and little real-world delivery besides a few niche applications.

Relying on corporations is a pitiful argument: I'm sure users appreciated that 8K30p ISP from Qualcomm. "How relevant! This is what was missing." /s

dotjaz - Tuesday, September 15, 2020 - link

You are the troll. You assume you know better than ALL of them, that type of superiority is just delusional.

On top of that, you have the audacity to lecture others.

dotjaz - Tuesday, September 15, 2020 - link

Relying on good competition is excellent argument. That's the core of capitalism, stupid.

You listed more than 3 companies. If forgoing ML gives a competition advantage, wouldn't one of them, especially MTK and Samsung have already done it? Samsung obviously know they are behind, that's why they finally got rid of SARC and signed on RDNA. Yet ML is still there.

How stupid are you to think you are so much better?

Spunjji - Wednesday, September 16, 2020 - link

How stupid are *you* to think that's exactly how capitalism works in practice?

Forgoing ML wouldn't really give a competitive advantage because all of these companies have dedicated vast quantities of marketing to how important ML is for their products. Consumers aren't machines with access to perfect information - most of them don't even read these releases, let alone take any particular note of what's in their phone. The salesperson tells them "new, shiny, faster, better" and they buy.

The difference in die size between an SoC with ML and one without wouldn't make enough of a difference to the BoM to give a significant price advantage, and investing it into other components wouldn't give enough of a performance advantage to change that, either - whereas saying "now your phone has a brain in it!" definitely will.

Backing up an appeal to authority with some wishful thinking about the nature of capitalism and a spot of tone policing really is Ben Shapiro level of crappy argumentation.

Spunjji - Wednesday, September 16, 2020 - link

I'm in agreement with you about the other comments, but if I could offer a counterpoint to your thoughts on AI/ML - you yourself noted how iOS devices are updated for half a decade. With the way things have been proceeding, it seems likely that within that time frame we *will* have more uses for the "Neural engine" - and, as others have noted, it will probably perform those tasks more efficiently than an up-rated CPU and GPU would. It's pretty much a classic chicken/egg scenario.

Archer_Legend - Thursday, September 17, 2020 - link

The gpu core scalability argument is quite flawed, the scalability of the cores depends on the gpu arch, see vega which after 56 cu will not scale well to 64 and then after that not at all

nico_mach - Thursday, September 17, 2020 - link

Siri, searching and photo categorization aren't 'niche' applications, they are primary use cases for most people. Every voice system needs special hardware to efficiently triage voice recognition samples.

Spunjji - Wednesday, September 16, 2020 - link

"I mean the fact that you assume, by default, that you know better than Apple, Samsung, Huawei, and Qualcomm tells it all right there"

So they're all right to take away the 3.5mm jack and create phones with curved screens that can't fit a screen protector? Glad to hear that, I thought they were all just copying flashy trends that don't add anything to the user experience...

In seriousness, I'm mocking you because that comment is a naked appeal to authority. It's perfectly possible that they're dedicating silicon area to things that can't be used very well yet - there are, after all, a lot of phones out there with 8 or 10 decidedly mediocre CPU cores. Lots of companies got on board with VR and 3D displays and those aren't anywhere to be seen now.