Intel’s Tiger Lake 11th Gen Core i7-1185G7 Review and Deep Dive: Baskin’ for the Exotic

by Dr. Ian Cutress & Andrei Frumusanu on September 17, 2020 9:35 AM EST

Posted in: CPUs, Intel, 10nm, Tiger Lake, Xe-LP, Willow Cove, SuperFin, 11th Gen, i7-1185G7, Tiger King

Comparing Power Consumption: TGL to TGL

On the first page of this review, I noted that our Tiger Lake Reference Design offered three different power modes, so that Intel’s customers could get an idea of the performance to expect if they built for the different sustained TDP options. The three modes offered to us were:

- 15 W TDP (Base 1.8 GHz), no Adaptix

- 28 W TDP (Base 3.0 GHz), no Adaptix

- 28 W TDP (Base 3.0 GHz), Adaptix Enabled

Intel’s Adaptix is a suite of technologies that includes Dynamic Tuning 2.0, which implements DVFS feedback loops on top of supposedly AI-trained algorithms to help the system deliver power to the parts of the processor that need it most, such as CPU, GPU, interconnect, or accelerators. In reality, what we mostly see is that it reduces frequency in line with memory access stalls, keeping utilization high but reducing power, prolonging turbo modes.

Compute Workload

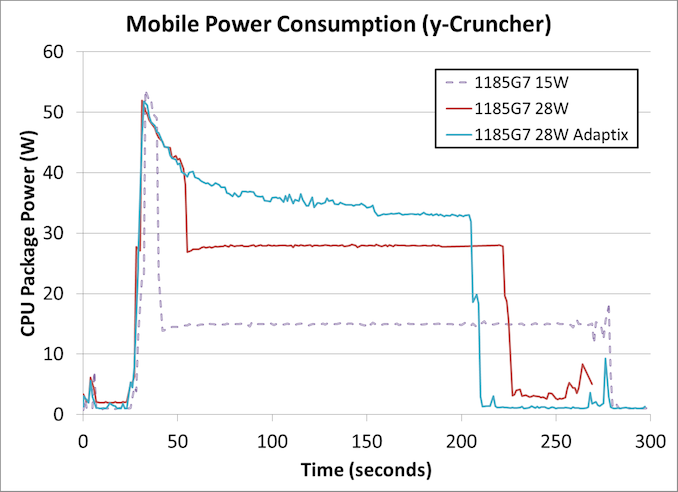

When we put these three modes onto a workload with a mix of heavy AVX-512 compute and memory accesses, we see the behavior shown in the graph above.

Note that due to time constraints this is the only test we ran with Adaptix enabled.

This is a fixed workload to calculate 2.5 billion digits of Pi, which takes around 170-250 seconds, and uses both AVX-512 and 11.2 GB of DRAM to execute. We can already draw conclusions.

In all three power modes, the turbo mode power limit (PL2) is approximately the same at around 52 watts. As the system continues in turbo mode, power consumption falls until the power budget is used up, and the 28 W mode has just over double the power budget of the 15 W mode.
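As a rough illustration of why a higher sustained limit buys a longer turbo window, here is a minimal sketch of the commonly described PL1/PL2/Tau scheme, in which turbo is allowed while a running average of package power stays below the sustained limit. The function name `turbo_seconds`, the 28-second time constant, and the 2 W starting power are all assumptions for illustration, not values measured on this system, and the real firmware behaves differently: the measured curves decline gradually rather than dropping in a single step.

```python
def turbo_seconds(pl1_w, pl2_w, tau_s=28.0, idle_w=2.0, dt_s=0.1, max_s=600.0):
    """Time until a running average of power, drawn at PL2, reaches PL1.

    Simplified model (an assumption, not Intel's actual firmware logic):
    the package draws pl2_w the whole time, and an exponentially weighted
    moving average with time constant tau_s tracks that draw.
    """
    avg_w = idle_w  # assumed starting average after a period of idle
    t = 0.0
    while t < max_s:
        # Move the running average a small step toward the current draw.
        avg_w += (pl2_w - avg_w) * (dt_s / tau_s)
        t += dt_s
        if avg_w >= pl1_w:
            return t  # budget exhausted: the sustained limit now applies
    return max_s


# PL2 of 52 W is from the text; tau_s and idle_w are placeholder assumptions.
t15 = turbo_seconds(pl1_w=15.0, pl2_w=52.0)
t28 = turbo_seconds(pl1_w=28.0, pl2_w=52.0)
print(f"15 W mode: ~{t15:.1f} s of turbo in this model")
print(f"28 W mode: ~{t28:.1f} s of turbo in this model")
print(f"ratio: {t28 / t15:.2f}x")
```

In this model the ratio between the two turbo windows does not depend on the chosen time constant, only on the power limits and the assumed starting power, and it comes out at a little over double.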

Adaptix clearly does best here, and although it initially follows the same downward trend as the regular 28 W mode, it levels out without hitting much of a ‘base’ frequency at all. At around the 150-second mark on the graph (120 seconds into the test), there is a sizeable drop followed by a flat line, which probably indicates a thermally derived sustained power mode at 33 watts.

The overall time to complete this test was:

- Core i7-1185G7 at 15 W: 243 seconds

- Core i7-1185G7 at 28 W: 191 seconds

- Core i7-1185G7 at 28 W Adaptix: 174 seconds

In this case, moving from 15 W to 28 W gives a 27% speed-up, while 28 W with Adaptix gives a total 40% speed-up over 15 W.
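For readers who want to check the arithmetic, the speed-up figures follow directly from the completion times listed above. This is a small sketch; the helper name `speedup_percent` is ours and purely illustrative.

```python
# Completion times in seconds, taken from the list above.
times_s = {"15 W": 243, "28 W": 191, "28 W Adaptix": 174}


def speedup_percent(baseline_s, faster_s):
    """Speed-up of a run relative to the baseline run, as a percentage."""
    return (baseline_s / faster_s - 1.0) * 100.0


baseline = times_s["15 W"]
speedups = {mode: speedup_percent(baseline, t) for mode, t in times_s.items()}

for mode, pct in speedups.items():
    print(f"{mode}: {pct:.1f}% faster than the 15 W mode")
```

Speed-up here is defined on the rate of work (baseline time divided by new time), which is why 243 seconds versus 191 seconds counts as 27% faster rather than 21% less time.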

However, this extra speed comes at the cost of total energy consumed. With most processors, the peak efficiency point is when the system is at idle, and while these processors do have a good range of high efficiency, requesting peak frequencies puts us in a worst-case scenario. Because this benchmark measures power over time, we can integrate to get the total energy consumed over the benchmark:

- Core i7-1185G7 at 15 W: 4082 joules

- Core i7-1185G7 at 28 W: 6158 joules

- Core i7-1185G7 at 28 W Adaptix: 6718 joules

This means that the extra 27% performance costs an extra 51% energy. For Adaptix, the 40% extra performance costs 65% more energy. This is the trade-off with faster processors, and it is why battery management in mobile systems is so important: if a task is lower priority and can run in the background, that is the best way to conserve battery power. This means things like email retrieval, server synchronization, or thumbnail generation. However, because users demand that the start menu pop up IMMEDIATELY, user-experience events are always run at maximum performance, after which the system quickly returns to idle.
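The integration step can be sketched as follows. The trapezoidal helper and the three-point sample trace are illustrative assumptions (we are not reproducing the raw power log here); only the joule totals in the second half come from the lists above.

```python
def integrate_energy_j(times_s, power_w):
    """Trapezoidal integration of power samples (watts) over time (seconds)."""
    total_j = 0.0
    for i in range(1, len(times_s)):
        dt = times_s[i] - times_s[i - 1]
        total_j += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return total_j


# Hypothetical trace, NOT measured data: 50 W falling to 30 W, then flat.
demo_j = integrate_energy_j([0, 10, 20], [50, 30, 30])
print(f"demo trace: {demo_j:.0f} J")

# Totals reported above for the Pi workload.
energy_j = {"15 W": 4082, "28 W": 6158, "28 W Adaptix": 6718}

# Extra energy of each mode relative to the 15 W run, as a percentage.
extra_energy_pct = {
    mode: (e / energy_j["15 W"] - 1.0) * 100.0 for mode, e in energy_j.items()
}
for mode, pct in extra_energy_pct.items():
    print(f"{mode}: {pct:.1f}% more energy than the 15 W mode")
```

Because the workload is fixed, total joules is the fair comparison: a faster run draws more power but for less time, and the integral captures both effects.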

Professional ISV Workload

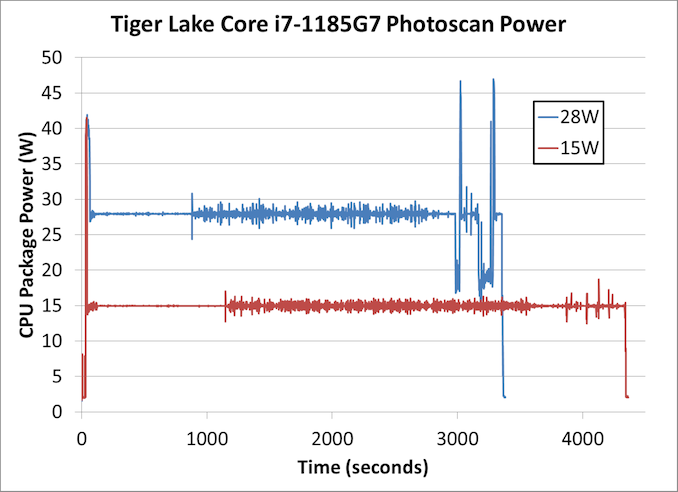

In our second test, we put our power monitoring tools on Agisoft’s Photoscan. This is somewhat of a compute test, split into four algorithms, although some sections are more scalable than others. Normally in this test we would see some sections rely on single-threaded performance, while other sections use AVX2.

This is a longer test, and so the immediate turbo is less of a leading factor across the whole benchmark. For the first section the system seems content to sit at the respective TDPs, but the second section shows a more variable up and down as power budget is momentarily gained and then used up immediately.

Doing the same maths as before:

- At 15 W, the benchmark took 4311 seconds and consumed 64854 joules

- At 28 W, the benchmark took 3330 seconds and consumed 92508 joules

For a benchmark that takes about an hour, a performance uplift of roughly 30% is quite considerable; however, it comes at the expense of 43% more energy. This is a better ratio than the first compute workload, but it still shows that 28 W is further from Tiger Lake’s ideal efficiency point.
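As a sanity check on these figures, dividing total energy by run time gives the average power over each run, which should sit near the configured TDP if the system really does spend most of the benchmark at its sustained limit. This is a small sketch using only the numbers listed above; the variable names are ours.

```python
# Run time and total energy for the Photoscan runs, from the list above.
runs = {
    "15 W": {"seconds": 4311, "joules": 64854},
    "28 W": {"seconds": 3330, "joules": 92508},
}

# Average power over the whole run: joules divided by seconds gives watts.
avg_power_w = {mode: r["joules"] / r["seconds"] for mode, r in runs.items()}

# Performance uplift (fixed workload, so the ratio of run times) and the
# extra energy spent to get it, both as percentages relative to 15 W.
uplift_pct = (runs["15 W"]["seconds"] / runs["28 W"]["seconds"] - 1.0) * 100.0
extra_energy_pct = (runs["28 W"]["joules"] / runs["15 W"]["joules"] - 1.0) * 100.0

for mode, watts in avg_power_w.items():
    print(f"{mode} mode: average power {watts:.1f} W")
print(f"uplift: {uplift_pct:.1f}%, extra energy: {extra_energy_pct:.1f}%")
```

The averages land within a fraction of a watt of 15 W and 28 W, which fits a long benchmark where the initial turbo burst is a small part of the total.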

Note that the power-over-time graph we get for Agisoft on a mobile processor looks very different to that of a desktop processor, as a mobile processor core can go above the TDP budget with fewer threads.

This leads to the dichotomy of mobile use cases with respect to the marketing that goes on for these products - as part of the Tiger Lake launch, Intel was promoting its use for streaming, professional workflows such as Adobe, video editing and content creation, and AI acceleration. All of these are high-performance workloads, compared to web browsing or basic office work. Partly because Tiger Lake is built on the latest process technology, as well as offering Intel’s best performing CPU and GPU cores, the product is going to be pitched in the premium device market for the professionals and prosumers that can take advantage.

253 Comments

blppt - Friday, September 18, 2020 - link

Yeah, we can extrapolate such things if power consumption and heat dissipation are of no relevance to AMD. You're leaving out other factors that go into building a top line GPU.

AnarchoPrimitiv - Saturday, September 26, 2020 - link

Power? It will certainly be better than Ampere which is awful at efficiency... Are you forgetting that RDNA2 will be on an improved 7nm node, meaning a better 7nm node that RDNA2?

Spunjji - Friday, September 18, 2020 - link

Big Navi probably won't clock that high for TDP reasons, but the people who are buying that it's only going to have 2080Ti performance are in for a rude surprise. It should compete solidly with the 3080, and I'm betting at a lower TDP. We'll see.

blppt - Saturday, September 19, 2020 - link

Its been AMD's modus operandi for a long time now. Introduce new card, and either because of inferior tech (occasionally) or drivers (mostly), it usually ends up matching Nvidia's last gen flagship. Although also at a lower price.

Considering the leaked benches we've already seen, Big Navi appears to be more of the same. Around 2080Ti performance, probably at a much lower price, though.

Spunjji - Saturday, September 19, 2020 - link

@blppt - not sure if you're shilling or credulous, but there's no indication that those leaked benchmarks are "Big Navi". Based on the probable specs vs. the known performance of the 3080, it's extremely unlikely that it will significantly underperform the 3080. It's entirely possible that it will perform similarly at lower power levels. They're also specifically holding back the launch to work on software.

In other words: assuming AMD will keep doing the same thing over and over when they already stopped doing that (see: RDNA, Zen 2, Renoir) is not a solid bet.

But none of this is relevant here. It's amazing how far shills will go to poison the well in off-topic posts.

blppt - Sunday, September 20, 2020 - link

Considering that the 2080ti itself doesn't "significantly underperform the 3080", Big Navi being in line with the 2080ti doesn't qualify it as getting pummeled by the 3080.

blppt - Sunday, September 20, 2020 - link

Oh, and BTW, I am not a shill for Nvidia. I've owned many AMD cards and cpus over the years, and they have been this way for a while. I keep wishing they'll release a true high end card, but they always end up matching Nvidia's previous gen flagship.

Witness the disappointing 5700XT in my machine at the moment. Due to AMD's lesser driver team, it often is less consistent in games then my now ancient 1080ti. Even in its ideal situation with well optimized drivers in a game that favors AMD cards, it just barely outperforms that old 1080ti. Most of the time its around 1080 performance.

Actually, YOU are the shill for AMD if you keep denying this is the way they have been for a while.

"In other words: assuming AMD will keep doing the same thing over and over when they already stopped doing that (see: RDNA, Zen 2, Renoir) is not a solid bet."

Except---they STILL don't hit the top of the charts in games on their CPUs. Zen/Zen 2 is a massive improvement, and dominates Intel in anything highly multi-core optimized, but that almost always never applies to games.

So, going to a Zen comparison for what you think Big Navi will do is not a particularly good analogy.

Spunjji - Sunday, September 20, 2020 - link

@blppt - "I'm not the shill, you're the shill, I totally own this product, let me whine about how disappointing it is though, even though performance characteristics were clear from the leaks and it still outperformed them. I bought it to replace a far more expensive card that it doesn't outperform". Okay buddy, sure. Whatever you say. 🙄

I didn't say it would take the performance lead. Going for a Zen comparison is exactly what I meant and I stand by it. We will see, until benchmarks come out it's all just talk anyway - just some of it's more obvious nonsense than the rest...

blppt - Sunday, September 20, 2020 - link

@Spunji

That was the dumbest counter argument I've ever heard.

First off, I didn't buy it to 'replace' anything. The 1080ti is in one of my other boxes. Where did you get 'replace' from? The 5700XT was to complete an all-AMD rig consisting of a 3900X and an AMD video card.

Secondly, the 1080ti is now almost 4 freaking years old. You bet your rear end I'd expect it to outperform a top end card from almost 4 years ago, when it is currently STILL the best gpu AMD offers.

And finally, I have over 20 years experience with both AMD cpus and gpus in various builds of mine, so don't give me that "bought one AMD product and decided they stink" B.S.

I've been on both sides of the aisle. Don't try and tell me i'm a shill for Nvidia. I've spent way too much time and money around AMD systems for that to be true.

AnarchoPrimitiv - Saturday, September 26, 2020 - link

You're a liar, I'm so sick of Nvidia fans lying about owning AMD cards