The Intel Lakefield Deep Dive: Everything To Know About the First x86 Hybrid CPU

by Dr. Ian Cutress on July 2, 2020 9:00 AM EST

Hybrid CPUs: Sunny Cove and Tremont

Now that we’ve gone over the concept of the heterogeneous core design, it’s time to dig into each of the cores separately and some of the tradeoffs that Intel has had to make in order to get this to work.

Big Sunny Cove

As mentioned previously, the big core in Lakefield is known as Sunny Cove, and it is the same core found in Intel’s Ice Lake mobile processors today. It is officially Intel’s second 10nm-class core (the first one being the DOA Cannon Lake / Palm Cove), but the first one in mass production.

We have covered the Sunny Cove core microarchitecture in great detail, and you can read about it here:

Examining Intel's Ice Lake Processors: Taking a Bite of the Sunny Cove Microarchitecture

The quick recap is as follows.

Very similar to a Skylake design, except with:

- Better prefetchers and branch predictors

- +50% L1 Data Cache

- +100% L1 Store Bandwidth

- +100% L2 Cache w/improved L2 TLB

- +50% Micro-op Cache

- +25% uops/cycle into reorder buffer

- +57% reorder buffer size

- +25% execution ports

- AVX-512 with VNNI

The side effect of increasing the L1 Data cache size was an increase in latency, with the L1-D moving to 5 cycles rather than 4. Normally that would sound like an automatic 25% slowdown; however, the increased L1 size, L1 bandwidth, and L2 cache all help deliver an overall improvement.

Intel claimed that Sunny Cove should perform ~18% better clock-for-clock compared to a Skylake core design. In our initial review of Ice Lake, we compared the i7-1065G7 processor (Ice Lake) to the Core i9-9900K processor (Coffee Lake, a Skylake derivative), and saw a 19% increase in performance per clock, essentially matching Intel’s advertised numbers.

(However, it should be noted that we didn’t see much of an improvement at the chip and product level, because Ice Lake ran at a lower frequency, which offset the per-clock gain.)
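To put rough numbers on that caveat, here is a minimal sketch. It assumes single-thread performance scales simply as performance-per-clock multiplied by frequency, which is a simplification, and the function name and the 0.80x clock ratio are illustrative assumptions rather than measured values.

```python
def relative_performance(ipc_gain: float, freq_ratio: float) -> float:
    """Performance of a new chip relative to an old one (1.0 means equal).

    ipc_gain   -- fractional per-clock uplift, e.g. 0.19 for +19%
    freq_ratio -- new frequency divided by old frequency
    """
    return (1.0 + ipc_gain) * freq_ratio


# The +19% per-clock uplift quoted in the text.
IPC_GAIN = 0.19

# Frequency ratio at which the per-clock gain is exactly cancelled out.
break_even_ratio = 1.0 / (1.0 + IPC_GAIN)

if __name__ == "__main__":
    print(f"break-even frequency ratio: {break_even_ratio:.2f}")
    # Hypothetical case: the new chip runs at 80% of the old chip's clock.
    print(f"relative performance at 0.80x clock: "
          f"{relative_performance(IPC_GAIN, 0.80):.2f}")
```

Under this simplified model, a core with a 19% per-clock advantage has to sustain roughly 84% of the older chip’s frequency just to break even.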

Small Tremont Atom

Arguably the Tremont core is the more interesting of the two in the Lakefield design. Lakefield will be the first consumer product built with a Tremont core inside, and as a result we have not had a chance to test it yet. But we have gone over the microarchitecture extensively in a previous article.

Intel's new Atom Microarchitecture: The Tremont Core in Lakefield

Tremont is more exciting because updates to Intel’s Atom line of processor cores happen at a much slower pace. Traditionally Atom has been a core that focuses on the low cost part of the market, so there isn’t that much of a need to keep it right at the bleeding edge, as it commands lower margins for the company. It still plays a vital role, but for context, here are the years in which new Atom designs have come to market:

- 2008: Bonnell

- 2011: Saltwell

- 2013: Silvermont

- 2015: Airmont

- 2016: Goldmont

- 2017: Goldmont Plus

- 2020: Tremont

Tremont is the first new Atom microarchitecture design for three years, and technically only the third Atom design to be an out-of-order design. However, Tremont makes a big jump over Goldmont Plus, with a lot of under-the-hood changes:

- Can be in a 1-core, 2-core, or 4-core cluster

- +33% L1-Data Cache over Goldmont+, no performance penalty

- Configurable L2 cache per cluster, from 1.5 MB to 4.5 MB

- +100% L2 TLB (1024-entry, up from 512)

- New 2x3-wide decoder, rather than single 3-wide decoder

- +119% re-order buffer (208, up from 95)

- 8 execution ports, 7 reservation stations

- 3 ALUs, 2 AGUs

- Dual 128-bit AES units

- New Instructions*

What made the most noise is the new dual 3-wide decoder. On Intel’s primary Core line, we haven’t seen much change in the decoder in recent generations: it still uses a 5-wide decoder, split between 1 complex decoder and 4 simple decoders, backed with a micro-op cache. Tremont’s new dual 3-wide decoder can manage dual data streams in order to keep the buffers further down the core fed. Intel stated that for the design targets of Tremont, this was more area and power efficient than a 6-wide decoder, or having a large micro-op cache in the processor design (Atom cores have not had micro-op caches to date). Intel states that the decoder design helps shape the back-end of the core and the balance of resources.

Also worthy of note in Tremont is the L1-Data cache. Intel moved up from a 24 KiB design to a 32 KiB design, an increase of 33%. This is mostly due to using the latest manufacturing node. However, an increase in cache size is typically accompanied by an increase in latency, as we saw on Sunny Cove, which moved from a 4-cycle to a 5-cycle design. In Tremont’s case, the L1-Data cache stays at 3-cycle for an 8-way 32 KiB design. Even Skylake’s L1-D cache, at an 8-way 32 KiB design, is a 4-cycle, which means that Tremont’s L1-D is tuned to surpass even Skylake here.
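The percentages quoted for the two L1-Data caches are easy to check. The short sketch below only restates the figures from the text; the helper name `percent_change` is an illustrative choice.

```python
def percent_change(old: float, new: float) -> float:
    """Percentage change going from old to new (positive means an increase)."""
    return (new - old) / old * 100.0


# Tremont L1-D capacity: 24 KiB (Goldmont Plus) to 32 KiB.
tremont_l1d_growth = percent_change(24, 32)

# Sunny Cove L1-D load latency: 4 cycles (Skylake) to 5 cycles.
sunny_cove_latency_growth = percent_change(4, 5)

# Tremont L1-D load latency: stays at 3 cycles.
tremont_latency_growth = percent_change(3, 3)

if __name__ == "__main__":
    print(f"Tremont L1-D size:       {tremont_l1d_growth:+.0f}%")
    print(f"Sunny Cove L1-D latency: {sunny_cove_latency_growth:+.0f}%")
    print(f"Tremont L1-D latency:    {tremont_latency_growth:+.0f}%")
```

The point of the comparison is that Tremont gets its capacity increase without the added latency that Sunny Cove paid for its own.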

The final point, Tremont’s new instructions, requires a section all on its own, specifically because none of the new instructions are supported in Lakefield.

What’s Missing in Lakefield

One of the biggest issues with a heterogeneous processor design is software. Even if we go beyond the issues that come with scheduling a workload on such a device, the problem is that most programs are designed to work on whatever microarchitecture they were written for. Generic programs are meant to work everywhere, while big publishers will write custom code paths for specific optimizations, for example using AVX-512 code if AVX-512 is detected.

The hair-pulling moment occurs when a processor has two different types of CPU core involved, and there is the potential for each of them to support different instructions or commands. Typically the scheduler makes no guarantee that software will run on any given core, so for example if you had some code written for AVX-512, it would happily run on an AVX-512 enabled core, but cause a critical fault on a core that doesn’t have AVX-512. The core won’t even know it’s an AVX-512 instruction until it comes time to decode it, and will simply throw an error when that happens. Not only this, but the scheduler has the right to move a thread when it needs to. If it moves a thread in the middle of an instruction stream, that can cause errors too. The processor could also move a thread to prevent thermal hotspots occurring, which will then cause a fault.

There could be a situation where the programmer can flag that their code has specific instructions. In a program with unique instructions, there’s very often a check that tries to detect support, in order to say to itself something like ‘AVX512 will work here!’. However, all modern software assumes a homogeneous processor – that all cores will support all of the same instructions.
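As a rough illustration of what a per-core check could look like, here is a minimal sketch for Linux. It parses text in the `/proc/cpuinfo` format, finds which logical CPUs advertise a given flag, and optionally pins the current process to them with `os.sched_setaffinity`. The sample text describes a hypothetical two-CPU machine with mismatched flags, purely for illustration, and the helper names are assumptions rather than any standard API.

```python
import os

# Hypothetical cpuinfo excerpt for a machine whose two CPUs differ in features.
SAMPLE_CPUINFO = """\
processor\t: 0
flags\t\t: fpu sse4_2 avx2 avx512f

processor\t: 1
flags\t\t: fpu sse4_2
"""


def parse_cpu_flags(cpuinfo_text: str) -> dict:
    """Map each logical CPU number to the set of feature flags it reports."""
    flags_by_cpu = {}
    current = None
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if not sep:
            continue  # blank separator line between CPU entries
        key = key.strip()
        if key == "processor":
            current = int(value)
            flags_by_cpu[current] = set()
        elif key == "flags" and current is not None:
            flags_by_cpu[current] = set(value.split())
    return flags_by_cpu


def cpus_supporting(flags_by_cpu: dict, feature: str) -> set:
    """Return the logical CPUs that advertise the given feature flag."""
    return {cpu for cpu, flags in flags_by_cpu.items() if feature in flags}


def pin_to_cpus(cpus: set) -> None:
    """Restrict the current process to the given CPUs (Linux only)."""
    if cpus and hasattr(os, "sched_setaffinity"):
        os.sched_setaffinity(0, cpus)


if __name__ == "__main__":
    flags = parse_cpu_flags(SAMPLE_CPUINFO)
    print("CPUs safe for AVX-512 code:", cpus_supporting(flags, "avx512f"))
```

On a real system the text would come from reading `/proc/cpuinfo`, and the result of `cpus_supporting` would be passed to `pin_to_cpus` before running the specialized code path. Software written this way stops assuming that one check on one core speaks for the whole chip.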

It becomes something of a chicken-and-egg problem.

The only way out of this is that both core types in a hybrid CPU have to support exactly the same instructions. This means that we end up with the worst of both worlds: only instructions supported by both can be enabled. This is the lowest common denominator of the two, and means that in Lakefield we lose support for AVX-512 on Sunny Cove, but also things like GFNI, ENCLV, and CLDEMOTE in Tremont (Tremont is actually rather progressive in its instruction support).
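In set terms, the lowest common denominator is simply an intersection. The sketch below uses deliberately tiny, illustrative flag subsets (one shared flag plus one unique flag per core type), not complete feature lists, and the function names are assumptions made for the example.

```python
# Illustrative subsets only: each core type supports far more than this.
SUNNY_COVE = {"sse4_2", "avx512f"}
TREMONT = {"sse4_2", "cldemote"}


def common_features(*core_types: set) -> set:
    """Features every core type supports, i.e. what a hybrid chip can expose."""
    return set.intersection(*core_types)


def dropped_features(*core_types: set) -> set:
    """Features at least one core type supports but the hybrid chip must disable."""
    return set.union(*core_types) - common_features(*core_types)


if __name__ == "__main__":
    print("exposed: ", sorted(common_features(SUNNY_COVE, TREMONT)))
    print("disabled:", sorted(dropped_features(SUNNY_COVE, TREMONT)))
```

Anything unique to one core type lands in the disabled set, which is why both AVX-512 and Tremont’s newer instructions go missing.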

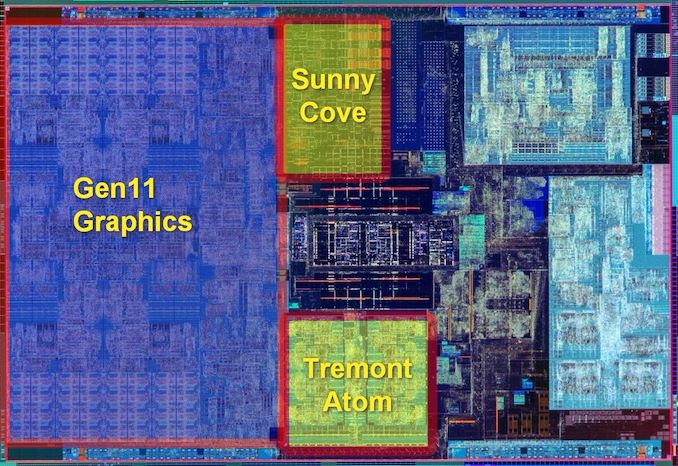

Knowing that Lakefield was going to have to take the lowest common denominator from the two core designs, Intel probably should have physically removed the very bulky AVX-512 unit from the Sunny Cove core. Looking at the die shot, it's still there. There was some question going into the recent disclosures as to whether it would still be there, but Intel has stated on the record repeatedly that they removed it. The die shot of the compute silicon shows that not to be the case.

For x86 programmers doing instruction detection by code name or core family, this might have to change. In the smartphone world, where 4+4 processor designs are somewhat the norm, this lowest common denominator approach has essentially been universally adopted. There was a slight issue with a Samsung processor that had a non-unified cache setup, which ended up being rectified in firmware. But both sets of cores had to rely on lowest common denominator instructions.

221 Comments


ichaya - Sunday, July 12, 2020 - link

You've claimed ARM64 has a code density advantage without any evidence for a few posts now. Being byte-aligned has advantages too, which are clear in the paper with the real world program! You're welcome to provide more real world evidence!

We're changing the goal posts now with new numbers, you can't estimate IPC based on one specific INTrate2006 test, and assume it's similar across other workloads as well. If we just stick to INTrate2006, IPC seems within 5% where Graviton 2 has twice the cache of AMD Epyc 7742.

Comparing a top-line power number like you were doing is irrelevant when features like AVX can easily blow past any power envelope you might have, and one chip lacks the feature.

Wilco1 - Sunday, July 12, 2020 - link

No, I am stating that AArch64 has better code density as a fact. Maybe 5 years ago you could argue about it as AArch64 was still relatively new, but today that's not even disputable. So check it out if you'd like to see it for yourself.

I used the overall intrate result to get an accurate IPC comparison. If you do the math correctly you'll see that Graviton 2 has 12% higher IPC than EPYC 7742.

At the end of the day what matters is performance, perf/W and cost. Whether you have AVX or not is not relevant in this comparison - EPYC 7742 uses the same amount of power whether it executes AVX code or not.

ichaya - Tuesday, July 14, 2020 - link

This is not the first time I've seen someone look at single thread performance and disregard everything else. All Graviton 2 and A13 single thread gains can be attributed to large (100~200% more) shared L2/L3 caches, and when compared with x86, 5% or even 75% IPC gains turn out to be ~10% less real world performance or ~10% more with marginal power use difference on 7nm. AMD has everything from a 15W to 280W chip.

For multi-threaded, the Graviton 2 looks better, but the 64 vcpu EPYC 2 c5a.16xlarge (144MB L2+L3) AWS instance costs the same as the 64 core Graviton 2 m6g.16xlarge (96MB L2+L3) instance and delivers equivalent performance on real world tasks while having 1/2 the real cores, 1/2 the system RAM and 50% more L2+L3.

perf/W/$ is important, and since ARM has always been on the lower end of W and $, it can be hard to see past it. If you can compare cache sizes, power and real world performance, the only thing revolutionary is the fact that Amazon, Apple and the ARM ecosystem have come this far in a few years. The overall features (AVX2+SMT among others) and openness still leaves a lot to be desired.

Wilco1 - Wednesday, July 15, 2020 - link

Single threaded performance is important in showing that x86 does no longer have the big advantage it once used to have. Overall throughput is well correlated with single thread performance, you can see that clearly in the results we discussed. Do you believe 64 Graviton 1 cores would do equally well against 7742 if they had the same huge caches?

I haven't seen serious benchmarks on c5a, do you have a link? With 32 cores at 3.3GHz it should burn well over 200W, not an improvement...

It's not that revolutionary if you followed the rapid increase of single thread performance over the last 5 years. Smartphones paid for the progress in microarchitecture and process technology that enabled competitive Arm servers (it helped AMD surpass Intel as well). I don't believe SMT or AVX are useful - 128 cores in Altra Max will beat 64 cores with SMT+AVX on performance and area at similar power.

As for AVX, this article discusses how Intel's latest CPU disables AVX... Linus had some interesting comments recently about the fragmentation of the many AVX variants. Then there are all the unresolved clocking and power issues. It's a mess.

ichaya - Thursday, July 16, 2020 - link

If there was a significant power difference between m6g.16xlarge and c5a.16xlarge, they would be priced differently. 128GB of RAM can't be more than ~15W.

Single thread performance can help multi-thread performance up to a point, but SMT, non-boost clocks, and biasing towards TLP more than ILP (like an in-order GPU) can hurt single thread performance at the expense of more multi-threaded throughput.

AVX-512 is a mess, but AVX2 is worth having in most contexts now. Maybe some AVX512 instructions worth having will make it into a AVX2.1 which can completely supersede AVX2. For the price of Lakefield, there are certainly more attractive options, though compatibility, packaging and performance can trump battery life.

Wilco1 - Thursday, July 16, 2020 - link

Well there is a much better comparison, c6g.16xlarge has 128GB and is 12% cheaper than c5a.16xlarge. More than enough to pay for the electricity cost of the 280W TDP of c5a.

Yes you can optimize for multithreaded throughput but SMT remains questionable, especially for large core counts. Why add SMT when you could just add some more cores?

Indeed AVX512 is worse, and could be removed without anyone missing it. Lakefield battery life comparisons are in, the Atom curse has struck yet again...

ichaya - Thursday, July 16, 2020 - link

12% is probably more the amount of subsidies these instances are getting. Amazon has a very very long history of putting any profit margins back into growth. Either that, or 128GB of RAM is 100W+!

SMT is perhaps the lowest level at which TLP can be extracted, recent multi-core Atoms don't have it, but for server/workstation tasks like compilation, DB engine or even general multi-tasking, it's well worth it.

Wilco1 - Friday, July 17, 2020 - link

Graviton 2 is less than a third of the silicon area of EPYC so cheaper to make. 128GB server DRAM costs over $1000, which is why the 256GB/512GB versions are more expensive. The power cost of extra DRAM is a tiny fraction of that.

There are tasks where SMT helps but equally there are also tasks where it is slower. So it looks great on marketing slides where you just show the best cases, but overall it is a small gain.

ichaya - Saturday, July 18, 2020 - link

I wouldn't call a 64 vcpu (180W) system beating or equaling a 64 core (110W) system in web serving/DB and code compilation a small gain. The tasks where SMT hurts is basically single threaded JS, which is just such a shame. Shame! I don't think POWER, SPARC and others have been wrong in having added SMT years ago.

For code compilation and DB the differences are 50%-100%+ making perf/W/$ very competitive.

https://www.phoronix.com/scan.php?page=article&...

This article also seems to mention SMT might make an appearance in the next Neoverse N* chips: https://www.nextplatform.com/2019/02/28/arm-sharpe...

Wilco1 - Sunday, July 19, 2020 - link

The Phoronix link has various benchmarks that aren't even running identical code between different ISAs (eg. Linux kernel compile). So it's not anywhere near a fair CPU comparison like SPEC. And this: https://openbenchmarking.org/result/1907314-AS-RYZ... shows SMT gives almost no gain on multithreaded benchmarks once you stop cherry picking the good results and ignore the bad ones...

Even if we just consider the benchmarks with the largest SMT speedup, Coremark and 7-zip have good SMT gains of 41% and 32%, but m6g *still* outperforms c5a by 5% and 24%.

So the best SMT gain combined with a 32% frequency advantage and 4 times the L3 cache is still not enough to provide equal per-thread performance!