The Intel Lakefield Deep Dive: Everything To Know About the First x86 Hybrid CPU

by Dr. Ian Cutress on July 2, 2020 9:00 AM EST

Thermal Management on Stacked Silicon

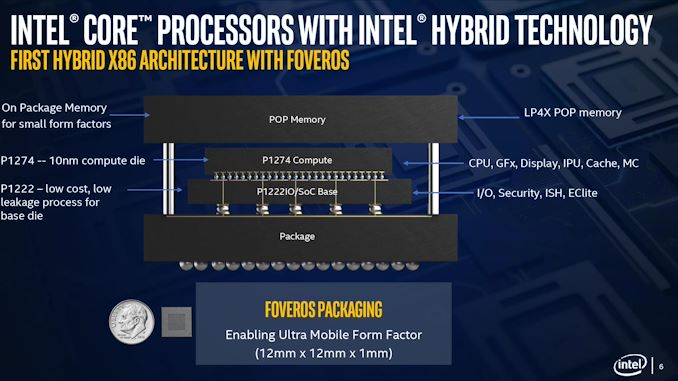

With a standard processor design, there is a single piece of silicon doing all the work and generating the heat. It is bonded to the package (which doesn’t do any work), and then, depending on the implementation, there is some adhesive to either a cooler, or to a heatspreader and then a cooler. When moving to a stacked chiplet design, it gets a bit more complicated.

Having two bits of silicon that ‘do work’, even if one is the heavy compute die and the other is an active interposer taking care of USB and audio and things, does mean that there’s a thermal gradient between the silicon, and depending on the bonding, potential for thermal hotspots and build-up. Lakefield makes it even more complex, by having an additional DRAM package placed on top but not directly bonded.

We can take each of these issues independently. For the case of die-on-die interaction, there is a lot of research going into this area. Discussions and development about fluidic channels between two hot silicon dies have been going on for a decade or longer in academia, and Intel has mentioned it a number of times, especially as a potential solution for its new die-to-die stacking technology.

The key here is hot dies, with thermal hotspots. As with a standard silicon design, ideally it is best to keep two high-powered areas separate, as it gives a number of benefits with power delivery, cooling, and signal integrity. With a stacked die, it is best to not have hotspots directly on top of each other, for similar reasons. While Intel is using its leading edge 10+ process node for the compute die, the base die is using 22FFL, Intel’s low-power 22nm-class FinFET process, which draws on its 14nm work. Not only that, but the base die is only dealing with IO, such as USB and PCIe 3.0, which has essentially fixed bandwidth and energy costs. What we have here is a high-powered die on top of a low-powered die, and as such thermal issues between the two silicon dies, especially in a low TDP device like Lakefield (7W TDP), are not an issue.

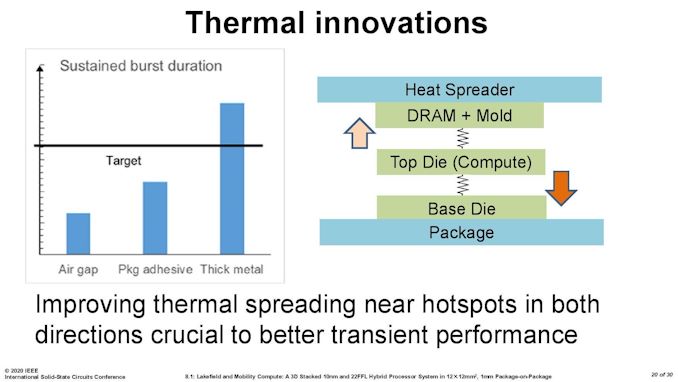

What is an issue is how the compute die gets rid of the heat. On the bottom it can shed heat by conduction, since it is bonded to more silicon, but the top is ultimately blocked by the DRAM. As you can see in the image above, there’s a big air gap between the two.

As part of the Lakefield design, Intel had to make a number of design changes in order to make the thermals work. A lot of work can be done with the silicon design itself, such as positioning hotspots in the right areas, using suitable thicknesses of metal in various layers, and rearranging the floorplan to reduce localized power density. Ultimately, increasing both the thermal mass and the potential dissipation become high priorities.

Lakefield CPUs have a sustained power limit of 7 watts, as defined in the specifications. Intel also has another limit, known as the turbo power limit. At Intel’s Architecture Day, the company stated that the turbo power limit was 27 watts; however, in the recent product briefing we were told it is set at 9.5 W. Historically, Intel lets its OEM partners (Samsung, Lenovo, Microsoft) choose their own values for these limits based on how well the design implements its cooling: passive versus active, heatsink mass, and so on. Intel also has a third factor, turbo time, which is essentially a measure of how long the turbo power can be sustained.

When we initially asked Intel for this value, they refused to tell us, stating that it is proprietary information. When I asked again after a group call on the product, I got the same answer, despite informing the Lakefield team that Intel has historically given this information out. Later on, I found out through my European peers that in a separate briefing Intel gave the value as 28 seconds, and Intel emailed me the same figure several hours afterwards. This value can also be set by OEMs.
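As a rough illustration of how these three numbers interact, the sketch below assumes the commonly described simplification in which the package tracks an exponentially weighted moving average of power with the turbo time as its time constant, and turbo ends once that average reaches the sustained limit. Intel has not confirmed that Lakefield behaves this way, and the function name, parameters, and the 5 W "warm" starting point are hypothetical; only the 7 W, 9.5 W, and 28 second figures come from the text above.

```python
import math


def turbo_duration_s(pl1_w, pl2_w, tau_s, start_avg_w=0.0):
    """Seconds a constant load at pl2_w can run before an exponentially
    weighted average of power (time constant tau_s) rises from
    start_avg_w to pl1_w.

    ASSUMPTION: this EWMA model is a simplification for illustration,
    not Intel's documented algorithm for Lakefield.
    """
    if not (start_avg_w < pl1_w < pl2_w):
        raise ValueError("expected start_avg_w < pl1_w < pl2_w")
    # avg(t) = pl2 - (pl2 - start) * exp(-t / tau); solve avg(t) = pl1 for t.
    return tau_s * math.log((pl2_w - start_avg_w) / (pl2_w - pl1_w))


# Figures quoted in the article: 7 W sustained, 9.5 W turbo, 28 s turbo time.
from_idle = turbo_duration_s(7.0, 9.5, 28.0)
# Hypothetical case: the chip was already averaging 5 W before the burst.
warm = turbo_duration_s(7.0, 9.5, 28.0, start_avg_w=5.0)

print(f"turbo from idle: {from_idle:.1f} s")
print(f"turbo from a 5 W average: {warm:.1f} s")
```

Under this model the turbo window depends on recent history as much as on the headline turbo time, which is one reason the OEM's choice of values matters.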

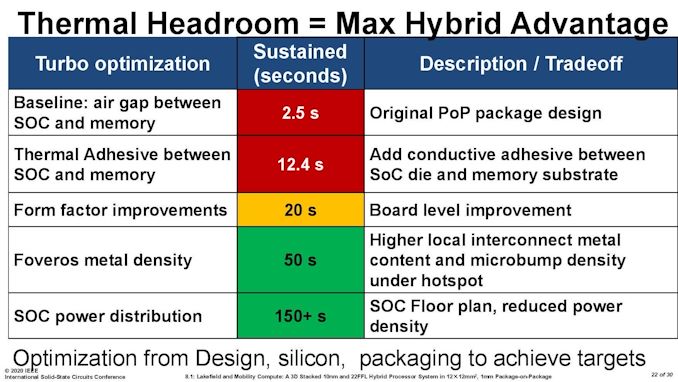

I subsequently found one of Intel’s ISSCC slides.

This slide shows that a basic implementation would only allow turbo power to be sustained for 2.5 seconds. Adding an adhesive between the top die and the DRAM moves that up to 12.4 seconds, and improving the system cooling takes it to 20 seconds. The rest of the improvements work below the compute die: a sizeable improvement comes from increasing the die-to-die metal density, followed by an optimized power floor plan, which in total gives sustained power support for 150+ seconds.
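To put those steps in proportion, here is a small sketch that turns the quoted durations into gains over the baseline and over the previous step. The step labels are paraphrased from the paragraph above, the last two improvements are grouped because the text only gives their combined result, and "150+" is treated as a lower bound of 150 seconds.

```python
# Durations in seconds as quoted from the slide; 150.0 stands in for "150+".
steps = [
    ("basic implementation", 2.5),
    ("adhesive between top die and DRAM", 12.4),
    ("improved system cooling", 20.0),
    ("metal density + optimized power floor plan", 150.0),
]


def gains(rows):
    """Return (label, seconds, gain_vs_baseline, gain_vs_previous) tuples."""
    baseline = rows[0][1]
    previous = baseline
    out = []
    for label, seconds in rows:
        out.append((label, seconds, seconds / baseline, seconds / previous))
        previous = seconds
    return out


table = gains(steps)
for label, seconds, vs_base, vs_prev in table:
    print(f"{label:45s} {seconds:6.1f} s  x{vs_base:5.1f} total  x{vs_prev:4.1f} step")
```

Read this way, the changes below the compute die account for the largest single jump, at least 7.5x over the cooled configuration.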

221 Comments

serendip - Thursday, July 2, 2020 - link

The ARM MacOS devices could be mobile powerhouses at (gasp!) equal price points as Windows devices running either ARM or x86. Imagine a $1000 Macbook A13 or A14 with double the performance than a Surface Pro X or Galaxy Book S costing the same.

lmcd - Friday, July 3, 2020 - link

Considering the hurdles just to use any form of open source software with the platform, they're not equal.

JayNor - Thursday, July 2, 2020 - link

Intel already makes LTE modems. By chiplet, I am referring to the Foveros 3D stackable chiplets in this case ... Intel also makes emib stitched chiplet form features for their FPGAs. So, not just a marketing term. These have to implement certain bus interfaces or TSV placement requirements to work with the FPGA or Foveros manufacturing.

henryiv - Thursday, July 2, 2020 - link

What a shame to disable AVX-512. The circuitry is probably left there to support SSE, which is still a common denominator with Tremont cores. Also, 5 cores not running together is a huge huge bummer.

This first generation is an experimental product and is to be avoided. In the next generation, the Tremont successor will probably get at least 256-bit AVX support, which will be finally possible to use across 5 cores. Transition to 7nm should also give the elbow room needed to run all 5 cores at the same time at full throttle within the limited 7w power budget.

jeremyshaw - Thursday, July 2, 2020 - link

By the usual Intel Atom timeline, it will be 2023 before a Tremont successor comes out. By then, will anyone even care about Intel releases anymore?

lmcd - Thursday, July 2, 2020 - link

Bad joke, right? Intel is signaling that Atom is moving to the center of their business model. Previous times Intel prioritized Atom, Atom got every-other-year updates. I'd expect a 2022 core or sooner, if you assume Tremont was "ready" in 2019 but had no products (given 10nm delays and priority to Ice Lake).

serendip - Friday, July 3, 2020 - link

Moving Atom to the center of their business model? Atom is still being treated as an also-ran.

Intel now has to contend with a resurgent AMD gobbling up x86 market share in multiple segments and ARM encroaching on consumer and server segments. Putting Atom as a priority product would be suicidal.

Lucky Stripes 99 - Saturday, July 4, 2020 - link

Not really. The ARM big/little design has been fairly successful. Most likely is that you'll see the walls between Atom and Core break down a bit in order to keep code optimizations happy on either core type.

Deicidium369 - Saturday, July 4, 2020 - link

revenues show they are gobbling up nothing.

Namisecond - Saturday, July 11, 2020 - link

There is a limit to the amount of market share AMD can gobble up and they are currently at or near their limit. AMD is production limited and always will be.