AMD Rome Second Generation EPYC Review: 2x 64-core Benchmarked

by Johan De Gelas on August 7, 2019 7:00 PM EST

Java Performance

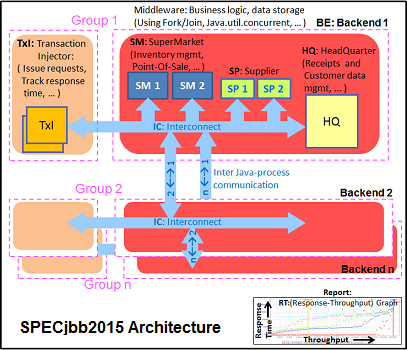

The SPECjbb 2015 benchmark has 'a usage model based on a world-wide supermarket company with an IT infrastructure that handles a mix of point-of-sale requests, online purchases, and data-mining operations'. It uses the latest Java 7 features and makes use of XML, compressed communication, and messaging with security.

We test SPECjbb with four groups of transaction injectors and backends. We use the "Multi JVM" test because it is more realistic: running multiple VMs on one server is very common practice.

The Java version was OpenJDK 1.8.0_222. We used the older JDK 8 because the more recent JDK 11 has removed some deprecated Java EE modules that SPECjbb 1.01 needs. We applied relatively basic tuning to mimic real-world use, while aiming to fit everything inside a server with 128 GB of RAM:

We tested with huge pages on and off.
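To illustrate the JDK 11 problem mentioned above, the sketch below probes whether a Java EE class is still on the class path. It uses `javax.xml.bind.JAXBContext` (JAXB) as a representative example of the Java EE modules that JDK 11 removed (JEP 320); whether SPECjbb depends on this particular class is an assumption, not something we verified. The class name `JavaEeProbe` is hypothetical.

```java
// Minimal sketch: checks whether a class can be loaded on the current runtime.
// Assumption: JAXB stands in for the Java EE modules removed in JDK 11 (JEP 320).
class JavaEeProbe {
    // Returns true if the named class can be found, without initializing it.
    static boolean isAvailable(String className) {
        try {
            Class.forName(className, false, JavaEeProbe.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        String jaxb = "javax.xml.bind.JAXBContext";
        System.out.println("java.version = " + System.getProperty("java.version"));
        // Expected to be found on JDK 8; on JDK 11+ it is missing unless a
        // standalone JAXB jar has been added to the class path.
        System.out.println(jaxb + " available: " + isAvailable(jaxb));
    }
}
```

Running the same probe under both JDKs is a quick way to see why a benchmark harness that loads these classes starts on JDK 8 but fails on JDK 11.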

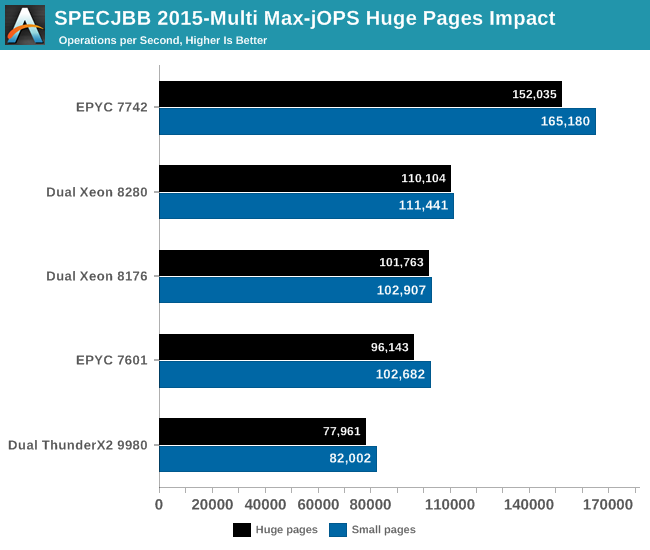

The graph below shows the maximum throughput numbers for our Multi-JVM SPECjbb test. Since the test is almost identical to the one we used in our ThunderX2 review (JDK 8, 1.8.0_166), we also include Cavium's server CPU.

Ultimately, we publish these numbers with a caveat: you should not compare them with the officially published SPECjbb2015 results, because we run our test slightly differently from the official run specifications. We believe our numbers make as much sense (and maybe more), as most professional users will not chase the last drop of performance. Ultra-optimized settings can cause unrepeatable, inconsistent errors that are hard to debug; at best, they result in subpar performance outside the benchmark, because they are so specific to SPECjbb. It is simply not worth it: in the real, non-HPC world, a professional will stick with basic and reliable optimizations. In the HPC world, you simply rerun your job in case of an error. In the rest of the enterprise world, you have just made a lot of users very unhappy and created a lot of work for (hopefully) well-paid employees.

The EPYC 7742 performance is excellent, outperforming the best available Intel Xeon by 48%.
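The percentages in this section are plain throughput ratios: a 48% lead means the EPYC delivers 1.48 times the Xeon's throughput. A minimal sketch of that arithmetic follows; the class name is hypothetical and the scores in `main` are made-up placeholders, not our measured results.

```java
// Minimal sketch of the "X% faster" arithmetic used when comparing throughput.
class RelativeSpeedup {
    // Percentage by which `candidate` outperforms `baseline`:
    // (candidate / baseline - 1) * 100.
    static double percentFaster(double candidate, double baseline) {
        if (baseline <= 0) {
            throw new IllegalArgumentException("baseline must be positive");
        }
        return (candidate / baseline - 1.0) * 100.0;
    }

    public static void main(String[] args) {
        // Hypothetical placeholder scores, NOT measured results.
        double epyc = 148.0;
        double xeon = 100.0;
        System.out.printf("EPYC is %.1f%% faster%n", percentFaster(epyc, xeon));
    }
}
```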

Notice that the EPYC CPU performs better with small pages (4 KB) than with large ones (2 MB). AMD's TLBs for small pages are massive, and as a result, page table walks (PTWs) are rare even with small pages. If the number of PTWs is already very low, you cannot gain much from increasing the page size.
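The argument above rests on TLB reach: the amount of memory a TLB can map without a page table walk, which is simply the number of entries times the page size. The sketch below assumes a 2048-entry TLB purely for illustration (real TLBs are split by level and page size; consult AMD's documentation for actual Zen 2 figures), and the class name is hypothetical.

```java
// Minimal sketch of TLB reach = entries * page size.
// Assumption: a single 2048-entry TLB, used only as an illustrative size.
class TlbReach {
    static final long KIB = 1024L;
    static final long MIB = 1024L * KIB;

    // Memory covered by the TLB without needing a page table walk.
    static long reachBytes(long entries, long pageSizeBytes) {
        return entries * pageSizeBytes;
    }

    public static void main(String[] args) {
        long entries = 2048; // assumed, illustrative entry count
        System.out.println("4 KiB pages: " + reachBytes(entries, 4 * KIB) / MIB + " MiB");
        System.out.println("2 MiB pages: " + reachBytes(entries, 2 * MIB) / MIB + " MiB");
    }
}
```

Under this assumption, 2 MB pages extend reach from 8 MiB to 4 GiB, but that only pays off if the working set regularly overflows the small-page reach; when walks are already rare, the gain is small.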

What about Cavium? Well, the 32-core ThunderX2 was built on a 16 nm process. So do not discount it: Cavium has a unique opportunity as it moves the ThunderX3 to TSMC's 7 nm FinFET process too.

To be fair to AMD, we could push performance even higher by using numactl to bind each JVM to certain CPUs. However, you rarely want to do that: you happily trade that extra performance for the flexibility of starting new JVMs when you need them and letting the server deal with it. That is why you buy servers with massive core counts. We live in the world of microservices and Docker containers, not in the early years of the 21st century.
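For readers who do want to pin JVMs, `numactl` is the usual Linux tool: its `--cpunodebind` and `--membind` options restrict a process to the CPUs and the memory of the given NUMA nodes. The sketch below only builds one such command line per node; the node count, heap size, `app.jar` name and class name are hypothetical placeholders, and this is not the script we or AMD used.

```java
import java.util.ArrayList;
import java.util.List;

// Minimal sketch: one numactl-prefixed JVM launch command per NUMA node.
class NumaLaunchPlan {
    static List<String> commandForNode(int node, String heap, String jar) {
        List<String> cmd = new ArrayList<>();
        cmd.add("numactl");
        cmd.add("--cpunodebind=" + node); // run only on this node's CPUs
        cmd.add("--membind=" + node);     // allocate memory only from this node
        cmd.add("java");
        cmd.add("-Xms" + heap);           // fixed heap size (placeholder value)
        cmd.add("-Xmx" + heap);
        cmd.add("-jar");
        cmd.add(jar);
        return cmd;
    }

    public static void main(String[] args) {
        int nodes = 2; // hypothetical: one NUMA node per socket
        for (int node = 0; node < nodes; node++) {
            System.out.println(String.join(" ", commandForNode(node, "24g", "app.jar")));
        }
    }
}
```

Passing each list to `new ProcessBuilder(cmd).start()` would actually launch the JVMs; printing them first makes the binding plan easy to review.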

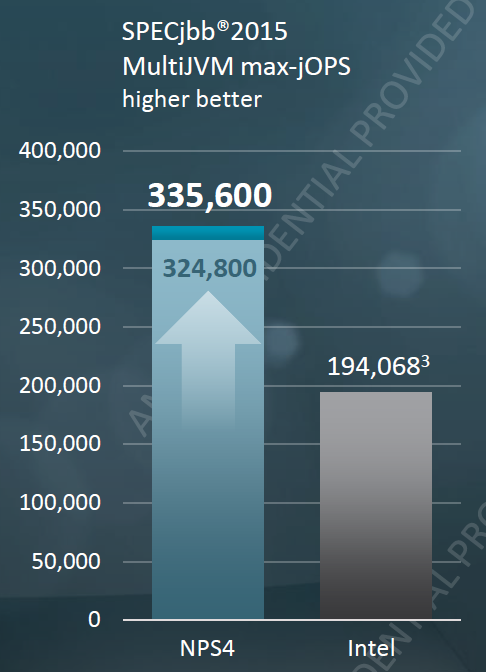

OK, what if you do that anyway? AMD offered some numbers, comparing them to the officially published SPECjbb numbers of the Lenovo ThinkSystem SR650 (dual Intel Xeon Platinum 8280).

AMD achieves 335,600 by using four JVMs per node, binding them to "virtual NUMA nodes".

Just like Intel, AMD uses the Oracle JDK, but there is more to these record-breaking numbers. Here are a few tricks that only benchmarkers can use to boost SPECjbb:

- Disabling P-states and setting the OS to maximum performance (instead of balanced)

- Disabling memory protection (patrol scrub)

- Using older garbage collectors because they happen to perform better in SPECjbb

- Non-default kernel settings

- Aggressive Java optimizations

- Disabling JVM statistics and monitoring

- ...
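Because the collector choice is one of those benchmark-specific knobs, it is worth checking which collector a JVM is actually running before comparing numbers. A minimal sketch using the standard `java.lang.management` API follows (the class name is hypothetical); the names it reports depend on the JDK and flags, for example JDK 8 defaults to the Parallel collector on server-class machines, while JDK 9 and later default to G1.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.util.ArrayList;
import java.util.List;

// Minimal sketch: report which garbage collectors this JVM is using.
class GcInspector {
    // Names such as "PS Scavenge" or "G1 Young Generation", depending on JDK and flags.
    static List<String> collectorNames() {
        List<String> names = new ArrayList<>();
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            names.add(gc.getName());
        }
        return names;
    }

    public static void main(String[] args) {
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            System.out.printf("%s: %d collections, %d ms total%n",
                    gc.getName(), gc.getCollectionCount(), gc.getCollectionTime());
        }
    }
}
```

Running it once with `-XX:+UseParallelGC` and once with `-XX:+UseG1GC` shows the reported collector names change accordingly.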

In summary, we don't think it is wise to mimic these settings, but let us say that AMD's new EPYC 7742 is anywhere between 48% and 72% faster. Either way, that is significant!

180 Comments


krumme - Thursday, August 8, 2019

Because he is fed by another hand. Enjoy the objectivity by Johan, as it is very rare these days. It's not easy for AT to post this stuff. So kudos to them.

hoohoo - Thursday, August 8, 2019

Nice review, but tbh I think you should run the AMD system as such, not limit its RAM to what the Intel system maxes out at. I would not buy a system and configure it to the limits of the competition: I would configure it to its actual limits.

yankeeDDL - Thursday, August 8, 2019

Wow. "Blasted" is the only word that comes to mind. Good job AMD.

eastcoast_pete - Thursday, August 8, 2019

Thanks Johan and Ian! Impressive results, glad to see that AMD is once again making Intel sweat, all of which can only be good for us. Question: A bit out of left field, but why does AMD put the 7 nm dies in these close pairs, as opposed to leaving a little more space between them? Wouldn't thermals be better if each chip gets a little more "reserved" lid space? Just curious. Thanks!

sharath.naik - Thursday, August 8, 2019

Now that we are finally entering the era where a single server (yes, backup is in addition) is enough for most smaller organizations, there is one thing that is needed: built-in OS limits/zones that can restrict CPUs and memory, instead of using VMs. This would save a lot of the resources wasted on booting up an entire OS for individual applications. Linux has the ability to target specific CPUs, but I'm not sure Windows has it. But there is a need for a standardized way to limit resources by process and by user.

mdriftmeyer - Thursday, August 8, 2019

Agreed.

quorm - Thursday, August 8, 2019

Is it possible you haven't heard of docker?

abufrejoval - Sunday, August 11, 2019

or OpenVZ/Virtuozzo or quite simply cgroups. Can even nest them, including with VMs.

DillholeMcRib - Thursday, August 8, 2019

destruction … Intel sat on their proverbial hands too long. It's over.

crotach - Friday, August 9, 2019

Bye Intel!!