Examining Intel's Ice Lake Processors: Taking a Bite of the Sunny Cove Microarchitecture

by Dr. Ian Cutress on July 30, 2019 9:30 AM EST

Posted in: CPUs, Intel, 10nm, Microarchitecture, Ice Lake, Project Athena, Sunny Cove, Gen11

Gen11 Graphics: Competing for 1080p Gaming

Intel's new message is that it wants to deliver deep gaming experiences, and its nod to the future is specifically about what it plans to do with its graphics technology. Until the company is ready with its Xe designs for 2020 and beyond, it wants to start leading the way with better integrated designs. That starts with Ice Lake, where the most powerful version will offer over 1 TF of compute performance, support for higher-resolution HEVC, better display pipes, an enhanced rasterizer, and support for Adaptive Sync.

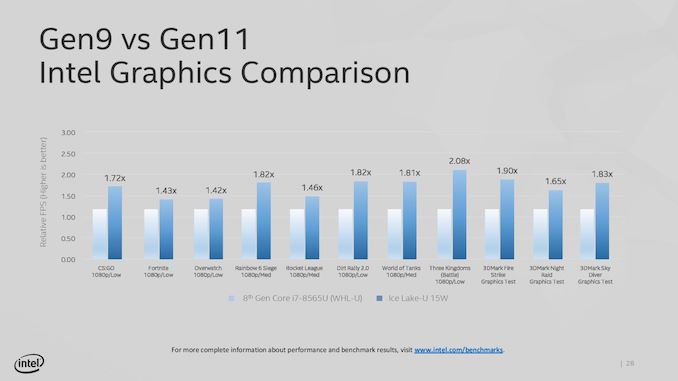

The key words in that last sentence are ‘the most powerful version’. Because Intel hasn’t really spoken about its product stack yet, the company has been leading with its most powerful Iris Plus designs, which we assume means the 28W parts: its high-end performance products, in the best designs, with the fastest memory. Compared to the standard Gen9 implementation of 24 execution units at 1150 MHz turbo, the best Ice Lake Gen11 design will deliver 64 execution units at up to 1100 MHz, good for 1.15 TF of FP32 performance, or 2.30 TF of FP16 performance. Intel promises up to 1.8x better frame rates in games with the best Ice Lake compared to an average 8th Gen Core (Kaby Lake) Gen9 implementation. Intel doesn’t compare the results to a hypothetical Cannon Lake Gen10 implementation.
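As a rough sanity check on those throughput figures, the peak numbers can be estimated from EU count and clock speed. The sketch below assumes each EU can issue 16 FP32 FLOPs per clock (two SIMD4 units, each performing a fused multiply-add) and that FP16 runs at twice that rate; neither figure is stated in the text above, so treat them as assumptions. The function and variable names are purely illustrative.

```python
# Assumption (not from the article): 16 FP32 FLOPs per EU per clock,
# i.e. two SIMD4 FPUs each doing a fused multiply-add (2 ops) per lane.
FP32_FLOPS_PER_EU_PER_CLOCK = 16


def peak_tflops(eus, clock_mhz, flops_per_eu_per_clock=FP32_FLOPS_PER_EU_PER_CLOCK):
    """Theoretical peak throughput in TFLOPS for a given EU count and clock."""
    return eus * flops_per_eu_per_clock * clock_mhz * 1e6 / 1e12


gen9_fp32 = peak_tflops(24, 1150)    # standard Gen9: 24 EUs at 1150 MHz
gen11_fp32 = peak_tflops(64, 1100)   # top Gen11: 64 EUs at 1100 MHz
# Assumption: FP16 executes at double the FP32 rate.
gen11_fp16 = peak_tflops(64, 1100, 2 * FP32_FLOPS_PER_EU_PER_CLOCK)

print(f"Gen9  FP32: {gen9_fp32:.2f} TF")
print(f"Gen11 FP32: {gen11_fp32:.2f} TF")
print(f"Gen11 FP16: {gen11_fp16:.2f} TF")
print(f"Raw compute ratio: {gen11_fp32 / gen9_fp32:.2f}x")
```

Under these assumptions the top Gen11 part lands at roughly 1.13 TF FP32, in the same ballpark as the quoted 1.15 TF (the small gap may come down to rounding or a slightly different clock). The raw compute ratio works out to about 2.55x, noticeably higher than the promised 1.8x in frame rates, which is what one would expect if games do not scale linearly with shader throughput.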

Intel hasn’t stated how many graphics configurations it will offer, but there would appear to be several, given the information that has already leaked. The high-end design with 64 execution units will be called Iris Plus, and there will be a ‘UHD’ version for mid-range and low-end parts, although Intel has not stated how many execution units these parts will have. We suspect that standard dividers will be in play, with 24/32/48 EU designs possible as different parts of the GPU are fused off. There may be some potential for increased frequency in these designs, reducing latency, but ultimately they will offer lower performance than the top design.

It should be noted that Intel is promoting the top model as being suitable for 1080p low-to-mid gaming, which would imply that models with fewer execution units may struggle to hit those highs. Until Intel gives us a full and proper product list, it is hard to tell.

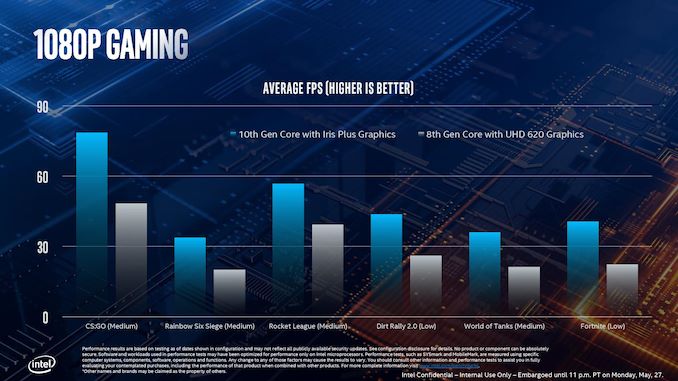

This slide, for example, shows where Intel expects its highest Ice Lake implementation to perform compared to the standard 8th Gen solution. As part of Computex, Intel also showed off some different data:

This graph shows relative FPS, rather than actual FPS, so it’s hard to see if certain games are just hitting 30 FPS in the highest mode. The results here are a function of both the increased EU count and the increased memory bandwidth.

Features for All

There are a number of features that all of the Gen11 graphics implementations will get, regardless of the number of execution units.

For its fixed function units, Gen11 supports two HEVC 10-bit encode pipelines, which can handle either two 4K60 4:4:4 streams simultaneously or one 8K30 4:2:2 stream using both pipelines at once. On display pipes, Gen11 has access to three 4K pipes split between DP1.4 HBR3 and HDMI 2.0b. There is also support for 2x 5K60 or 1x 4K120 with a 10-bit color depth.
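The pairing of two 4K60 streams against one 8K30 stream makes sense when looking at raw pixel throughput. The following is a quick sketch, assuming "4K" means 3840x2160 and "8K" means 7680x4320 (the resolutions are not spelled out above), and counting 3 samples per pixel for 4:4:4 and 2 samples per pixel for 4:2:2. The helper name is illustrative only.

```python
def pixel_rate(width, height, fps):
    """Pixels per second for a video stream."""
    return width * height * fps


# Assumption: 4K = 3840x2160 (UHD), 8K = 7680x4320.
two_4k60 = 2 * pixel_rate(3840, 2160, 60)   # two simultaneous 4K60 streams
one_8k30 = pixel_rate(7680, 4320, 30)       # one 8K30 stream on both pipelines

# Samples per pixel: 4:4:4 keeps full chroma (3), 4:2:2 halves chroma horizontally (2).
two_4k60_samples = two_4k60 * 3
one_8k30_samples = one_8k30 * 2

print(f"2x 4K60: {two_4k60 / 1e6:.1f} Mpixels/s, {two_4k60_samples / 1e9:.2f} Gsamples/s")
print(f"1x 8K30: {one_8k30 / 1e6:.1f} Mpixels/s, {one_8k30_samples / 1e9:.2f} Gsamples/s")
```

With those assumptions, both modes come out to the same pixel rate, which is consistent with a single 8K30 stream needing both pipelines at once. The 4:2:2 subsampling means the 8K30 case actually carries fewer samples in total.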

The rasterizer gets an upgrade, and will now do 16 pixels per clock or 32 bilinear filtered texels per clock. Intel also gives some insight into the cache arrangements, with the execution units having their own 3 MiB of L3 cache and 0.5 MiB of shared local memory.

Intel recommends that to get the best out of the graphics, it should be paired with LPDDR4X-3733 memory in order to extract a healthy 50-60 GB/s bandwidth, and we should expect a number of Project Athena approved designs to do just that. However, at the lower end of Ice Lake devices, we might see single channel DDR4 designs take over due to costs, which might limit performance. As always for integrated graphics, memory bandwidth is often a major bottleneck in performance. Back when Intel had eDRAM enabled Crystalwell designs, those chips were good for 50 GB/s bidirectional bandwidth, and we are almost at that stage with DRAM bandwidth now. It should be noted that there are tradeoffs with memory support: LPDDR4/X supports 4x 32b channels up to 32 GB with super low power consumption modes, but users who want more capacity will have to look to DDR4-3200 with 2x 64b channels up to 64 GB, and lose some performance and power savings.
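The bandwidth figures follow from the transfer rate and total bus width. Here is a minimal sketch of the arithmetic for the configurations mentioned above; the single-channel case assumes DDR4-3200, which is a guess for illustration since no speed is given for low-end designs. The function name is hypothetical.

```python
def peak_bandwidth_gbs(transfer_rate_mts, channels, channel_width_bits):
    """Theoretical peak DRAM bandwidth in GB/s (decimal gigabytes)."""
    bytes_per_transfer = channels * channel_width_bits / 8
    return transfer_rate_mts * 1e6 * bytes_per_transfer / 1e9


lpddr4x = peak_bandwidth_gbs(3733, channels=4, channel_width_bits=32)
ddr4_dual = peak_bandwidth_gbs(3200, channels=2, channel_width_bits=64)
# Assumption: a single-channel low-end design also running at DDR4-3200.
ddr4_single = peak_bandwidth_gbs(3200, channels=1, channel_width_bits=64)

print(f"LPDDR4X-3733, 4x 32b: {lpddr4x:.1f} GB/s")
print(f"DDR4-3200,    2x 64b: {ddr4_dual:.1f} GB/s")
print(f"DDR4-3200,    1x 64b: {ddr4_single:.1f} GB/s")
```

These are theoretical peaks, so sustained bandwidth will be lower. The point is that a single channel halves the available bandwidth, which is why such designs would be expected to hold the integrated graphics back.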

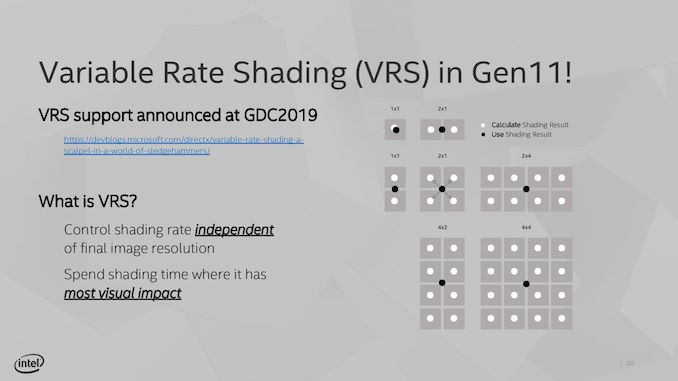

Variable Rate Shading

A feature being implemented in Gen11 is Variable Rate Shading. VRS is a game-dependent technology that allows the GPU to adjust the shading performance of the scene render based on what areas are important. All games currently do shading on a per-pixel basis, meaning that each pixel has a full calculation and that data is transferred to the final image. With VRS, shading is calculated over several pixels at once – essentially doing pixel shading in a coarser, lower-resolution manner – to save post-processing time by using averaged data.

The idea is that using this method can reduce some of the load on the execution units, ultimately increasing the frame rate. The size of that combination of pixels can be adjusted on a per-frame basis as well, allowing the game to take advantage of processing budget where it exists, or pull back at points where performance is needed. Ultimately Intel believes that any image quality loss is not noticeable, especially given the performance uplift it expects the feature to provide. Intel states that this technology is useful for areas such as lighting adjustments, partially obscured objects (by fog/clouds), and areas that undergo blur, or foveated rendering – basically any area where clarity isn’t explicitly required to begin with.
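To get a feel for how coarser shading reduces the load, the sketch below counts pixel shader invocations for a 1080p frame when parts of it are shaded in larger blocks. The 1x1, 2x2 and 4x4 block sizes and the 50/30/20 split of the frame are made-up values for illustration, not figures from Intel, and the function is a simplified model rather than how any real driver or API accounts for this.

```python
def shader_invocations(width, height, regions):
    """Count pixel shader invocations for a frame.

    regions: list of (percent_of_frame, block_width, block_height), where one
    shading result is shared across each block_width x block_height block.
    """
    total_pixels = width * height
    return sum(
        total_pixels * percent / (100 * block_w * block_h)
        for percent, block_w, block_h in regions
    )


# Hypothetical split: half the frame at full rate, the rest shaded coarsely.
regions = [
    (50, 1, 1),   # important areas: one invocation per pixel
    (30, 2, 2),   # e.g. blurred or fogged areas: one invocation per 2x2 block
    (20, 4, 4),   # e.g. periphery: one invocation per 4x4 block
]

full = shader_invocations(1920, 1080, [(100, 1, 1)])
coarse = shader_invocations(1920, 1080, regions)
saved = 1 - coarse / full

print(f"Full-rate invocations: {full:.0f}")
print(f"VRS invocations:       {coarse:.0f}")
print(f"Pixel shading saved:   {saved:.1%}")
```

Pixel shading is only one part of the frame time, so a saving of this size in invocations would translate into a smaller frame rate gain in practice.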

The only issue here though is an ecosystem one – it requires game developer support. Intel is already working with Epic to add it to the Unreal Engine, and Intel has worked with developers to enable support in titles such as Civilization 6. The difference in performance, according to Intel, can be up to a 30% FPS increase in a best-case scenario. NVIDIA already supports VRS through dedicated hardware, whereas AMD’s current solutions are best described as a more limited shader-based approximation.

107 Comments


The_Assimilator - Wednesday, July 31, 2019 - link

Getting Thunderbolt on-die is huge for adoption. While I doubt many laptop manufacturers will enable more than a single TB port, desktop is an entirely different kettle of fish.

umano - Wednesday, July 31, 2019 - link

I am afraid but I cannot consider 4 cores cpu as premium

Khenglish - Wednesday, July 31, 2019 - link

This honestly is looking like the worst architecture refresh since Prescott. IPC increases are getting almost completely washed out by loss in frequency. I wonder if this would have happened if Ice Lake came out on 14nm. Is the clock loss from uArch changes, process change, or a mix of both?

Performance of an individual transistor has been decreasing since 45nm, but overall circuit performance kept improving due to interconnect capacitance decreasing at a faster rate at every node change. It looks like at Intel 10nm, and TSMC 7nm that this is no longer true, with transistor performance dropping off a cliff faster than interconnect capacitance reduction. 5nm and 3nm should be possible, but will anyone want to use them?

Sivar - Wednesday, July 31, 2019 - link

"...with a turbo frequency up to 4.1 GHz"

This is the highest number I have come across for the new 10th generation processors, and according to SemiAccurate (which is accurate more often than not), this is likely not an error.

If this value is close to desktop CPU limitations, the low clock speed all but erases the 18% IPC advantage -- an estimate likely based on a first-gen Skylake.

Granted, the wattage values are low, so higher-wattage units should run at least a bit faster.

Farfolomew - Wednesday, July 31, 2019 - link

I’m a bit confused by the naming scheme. Ian, you say: “The only way to distinguish between the two is that Ice Lake has a G in the SKU and Comet Lake has a U”

But that’s not what’s posted in several places throughout the article. The ICL processors are named Core iX-nnnnGn where CML are Core iX-nnnnnU. Comet lake is using 5 digits and Ice Lake only 4 (1000 vs 10000 series).

Is this a typo or will ICL be 1000-series Core chips?

name99 - Wednesday, July 31, 2019 - link

Regarding AI on the desktop. The place where desktop AI will shine is NLP. NLP has lagged behind vision for a while, but has acquired new potency with The Transformer. It will take time for this to be productized, but we should ultimately see vastly superior translation (text and speech), spelling and grammar correction, decent sentiment analysis while typing, even better search.

Of course this requires productization. Google’s agenda is to do this in the cloud. MS’ agenda I have no idea (they still have sub-optimal desktop search). So probably Apple will be first to turn this into mainstream products.

Relevant to this article is that I don’t know the extent to which instructions and micro-architectures optimized for CNNs are still great for The Transformer (and the even newer and rather superior Transformer-XL published just a few months ago). This may all be a long time happening on the desktop if INTC optimized too much purely for vision, and it takes another of their 7 years to turnaround and update direction...

croc - Thursday, August 1, 2019 - link

It seems that Ice Lake / Sunny Cove will have hardware fixes for Spectre and Meltdown. I would like to see some more information on this, such as how much speed gain, whether the patch is predictive (so as to block ALL such OOE / BP exploits) etc.

MDD1963 - Thursday, August 1, 2019 - link

A month or so ago, we heard a few rumors that the CPUs were ahead ~18% in IPC (I see that number again in this article), but are down ~20+% in clock speed.... ; it would be nice to see at least one or two performance metrics/comparisons on a shipped product. :)

isthisavailable - Thursday, August 1, 2019 - link

Unlike Ryzen mobile, intel’s “upto” 64 EUs part will probably only ship in like 2 laptops. Therefore amd has more designs in my book. I don’t understand people who buy expensive 4K laptops with intel integrated gfx which can’t even render windows 10 ui smoothly.

Looking forward to Zen2 + navi based 7nm APU.

Bulat Ziganshin - Thursday, August 1, 2019 - link

> it can be very effective: a dual core system with AVX-512 outscored a 16-core system running AVX2 (AVX-256).

it's obviously wrong - since ice lake has only one avx-512 block but two avx2 blocks, it's not much faster in avx-512 mode compared to avx2 mode

the only mention of HEDT cpus at the page linked is "At a score of 4519, it beats a full 18-core Core i9-7980XE processor running in non-AVX mode". Since AVX-512 can process 16 32-bit numbers in a single operation, no wonder that a single avx-512 core matches 16 scalar cores