Intel's Xeon Cascade Lake vs. NVIDIA Turing: An Analysis in AI

by Johan De Gelas on July 29, 2019 8:30 AM EST

Inference: ResNet-50

After training your model on training data, the real test awaits. Your AI model should now be able to apply what it has learned to new real-world data. That process is called inference. Inference requires no backpropagation, as the model is already trained and its weights have already been determined. Inference can also make use of lower numerical precision, and it has been shown that even 8-bit integers sometimes deliver acceptable accuracy.
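To make the 8-bit point concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. The scheme is a common textbook one and is assumed purely for illustration; it is not necessarily what Intel's or NVIDIA's toolchains do, and the weight values are hypothetical.

```python
# Symmetric per-tensor INT8 quantization: a common textbook scheme,
# assumed here for illustration only.

def quantize(values):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(v / scale) for v in values], scale

def dequantize(q_values, scale):
    """Recover approximate floats from the quantized integers."""
    return [q * scale for q in q_values]

# Hypothetical weights, chosen only for illustration.
weights = [0.82, -0.41, 0.07, -1.27, 0.55]
q_weights, scale = quantize(weights)
restored = dequantize(q_weights, scale)

# Rounding to the nearest step bounds the error by half a step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(q_weights)
print(f"scale={scale:.4f} max_error={max_error:.6f}")
```

The round-trip error per value is at most half a quantization step, which is why INT8 can be acceptable when the values in a tensor span a modest range.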

From a high-level workflow perspective, a working AI model is basically controlled by a service that, in turn, is called from another software service. So the model should respond very quickly, but the total latency of the application is determined by all of the services involved. To cut a long story short: if inference performance is high enough, the perceived latency might shift to another software component. As a result, Intel's task is to make sure that Xeons can offer high enough inference performance.
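A rough way to picture this shift is to add up a latency budget. The sketch below assumes a simple sequential pipeline, and every stage name and millisecond figure in it is hypothetical rather than measured.

```python
# Hypothetical per-request latencies in milliseconds; none of these
# figures come from the article's measurements.
PIPELINE_MS = {
    "request parsing": 2.0,
    "database lookup": 12.0,
    "image preprocessing": 6.0,
    "response formatting": 1.5,
}

def latency_report(inference_ms):
    """Return total latency and the fraction spent in model inference."""
    total = sum(PIPELINE_MS.values()) + inference_ms
    return total, inference_ms / total

for inference_ms in (40.0, 10.0, 2.5):
    total, share = latency_report(inference_ms)
    print(f"inference {inference_ms:5.1f} ms -> total {total:5.1f} ms, "
          f"inference share {share:.0%}")
```

With these made-up numbers, making inference 16 times faster (40 ms down to 2.5 ms) only cuts the total from 61.5 ms to 24 ms, roughly 2.6 times, because the other services now dominate.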

Intel has a special "recipe" for reaching top inference performance on Cascade Lake, courtesy of its DL Boost technology. DL Boost includes the Vector Neural Network Instructions (VNNI), which allow the use of INT8 operations instead of FP32. Integer operations are intrinsically faster, and by using only 8 bits, you get a theoretical peak that is four times higher.
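One way to see where the factor of four comes from is to count how many elements fit in a 512-bit vector register. The sketch below also emulates, in plain Python, one 32-bit lane of a VNNI-style step that sums four 8-bit products into a 32-bit accumulator. It ignores overflow behavior, the function names are hypothetical, and it is a conceptual model rather than Intel's implementation.

```python
VECTOR_BITS = 512  # width of one AVX-512 register

def lanes(element_bits):
    """Number of elements of the given width that fit in one register."""
    return VECTOR_BITS // element_bits

def dot4_accumulate(acc, a_u8, b_s8):
    """Emulate one 32-bit lane of a VNNI-style step: four unsigned 8-bit
    by signed 8-bit products are summed into a 32-bit accumulator.
    Overflow handling is deliberately left out of this sketch."""
    assert len(a_u8) == len(b_s8) == 4
    assert all(0 <= a <= 255 for a in a_u8)
    assert all(-128 <= b <= 127 for b in b_s8)
    return acc + sum(a * b for a, b in zip(a_u8, b_s8))

fp32_lanes = lanes(32)
int8_lanes = lanes(8)
print(fp32_lanes, int8_lanes, int8_lanes // fp32_lanes)

# Hypothetical activation and weight values for a single lane.
print(dot4_accumulate(10, [1, 2, 3, 4], [5, -6, 7, -8]))
```

The lane count is a theoretical ceiling only; real throughput also depends on memory bandwidth, clock speeds under vector load, and how well the software keeps the units fed.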

Complicating matters, we were experimenting with inference when our Cascade Lake server crashed. For what it is worth, we never reached more than 2000 images per second. But since we could not experiment any further, we gave Intel the benefit of the doubt and used their numbers.

Meanwhile, Intel's publication of its Xeon Platinum 9282 results caused quite a stir, as Intel claimed that the latest Xeons outperformed NVIDIA's flagship accelerator (Tesla V100) by a small margin: 7844 vs 7636 images per second, a difference of roughly 3%. NVIDIA reacted immediately by emphasizing performance/watt/dollar and got a lot of coverage in the press. However, the most important point in our humble opinion is that the Tesla V100 results are not comparable, as those 7600 images per second were obtained in mixed precision (FP32/FP16) and not INT8.

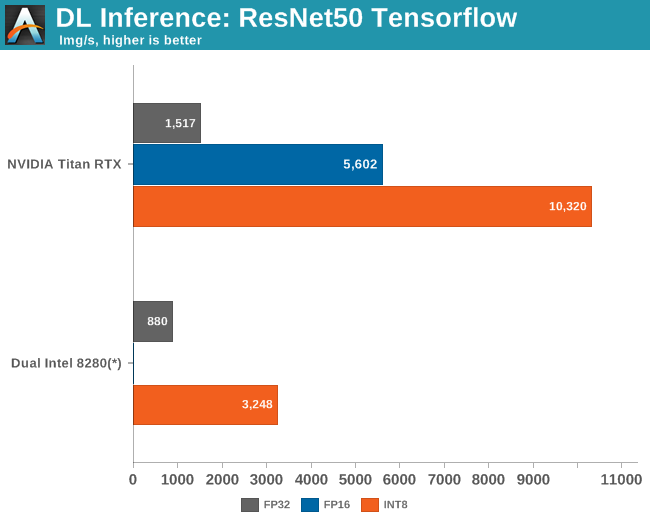

Once we enable INT8, the $2500 Titan RTX is no less than 3 times faster than a pair of $10k Xeon 8280s.
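Combining the speed and price figures gives a rough throughput-per-dollar comparison. The sketch below uses the article's round numbers and assumes that "$10k" is the price of each Xeon, so $20k for the pair. It also ignores the host system a GPU needs, as well as power and cooling costs.

```python
# Prices and the 3x ratio are the article's round numbers. Reading "$10k"
# as the price of each Xeon (so $20k for the pair) is an assumption.
TITAN_RTX_PRICE = 2_500
XEON_PAIR_PRICE = 2 * 10_000
TITAN_SPEEDUP = 3.0  # Titan RTX throughput relative to the Xeon pair

def throughput_per_dollar_ratio(speedup, gpu_price, cpu_price):
    """How many times more throughput per dollar the GPU delivers."""
    return speedup * cpu_price / gpu_price

ratio = throughput_per_dollar_ratio(
    TITAN_SPEEDUP, TITAN_RTX_PRICE, XEON_PAIR_PRICE
)
print(f"{ratio:.0f}x throughput per dollar")
```

Under those assumptions the gap in component cost per image is far larger than the raw 3x speed difference suggests.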

Intel cannot win this fight, not by a long shot. Still, Intel's efforts and NVIDIA's poking in response show how important it is for Intel to improve both inference and training performance, and to convince people to invest in high-end Xeons instead of a low-end Xeon with a Tesla V100. In some cases, being 3 times slower than NVIDIA's offering might be good enough, as the inference software component is just one part of the software stack.

In fact, to really analyze all of the angles of the situation, we should also measure the latency of a full-blown AI application instead of just measuring inference throughput. But it will take us some more time to get that one right.

56 Comments

C-4 - Monday, July 29, 2019 - link

It's interesting that optimizations did so much for the Intel processors (but relatively less for the AMD ones). Who made these optimizations? How much time was devoted to doing this? How close are the algorithms to being "fully optimized" for the AMD and nVidia chips?

quorm - Monday, July 29, 2019 - link

I believe these optimizations largely take advantage of AVX512, and are therefore intel specific, as amd processors do not incorporate this feature.

RSAUser - Monday, July 29, 2019 - link

As quorm said, I'd assume it's due to AVX512 optimizations, the next generation of AMD Epyc CPU's should support it, and I am hoping closer to 3GHz clock speeds on the 64 core chips, since it seems the new ceiling is around the 4GHz mark for 16 all-core.

It will be an interesting Q3/Q4 for Intel in the server market this year.

SarahKerrigan - Monday, July 29, 2019 - link

Next generation? You mean Rome? Zen2 doesn't have any AVX512.

HStewart - Tuesday, July 30, 2019 - link

I believe AMD AVX 2 is dual-128 bit instead of 256bit - so AVX 512 would probably be quad 128bit.

jospoortvliet - Tuesday, July 30, 2019 - link

That’s not really how it works, in the sense that you explicitly need to support the new instructions... and amd doesn’t (plan to, as far as we know).

Qasar - Tuesday, July 30, 2019 - link

from wikipedia :" AVX2 is now fully supported, with an increase in execution unit width from 128-bit to 256-bit. "

" AMD has increased the execution unit width from 128-bit to 256-bit, allowing for single-cycle AVX2 calculations, rather than cracking the calculation into two instructions and two cycles."

which is from here : https://www.anandtech.com/show/14525/amd-zen-2-mic...

looks like AVX2 is single 256 bit :-)

name99 - Monday, July 29, 2019 - link

Regarding the limits of large batches: while this is true in principle, the maximum size of those batches can be very large, is hard to predict (at least right now) and there is on-going work to increase the sizes. This link describes some of the issues and what’s known: http://ai.googleblog.com/2019/03/measuring-limits-...

I think Intel would be foolish to pin many hopes on the assumption that batch scaling will soon end the superior performance of GPUs and even more specialized hardware...

brunohassuna - Monday, July 29, 2019 - link

Some information about energy consumption would be very useful in comparisons like that

ozzuneoj86 - Monday, July 29, 2019 - link

My first thought when clicking this article was how much more visibly-complex CPUs have gotten in the past ~35 years.

Compare the bottom of that Xeon to the bottom of a CLCC package 286:

https://en.wikipedia.org/wiki/Intel_80286#/media/F...

And that doesn't even touch the difference internally... 134,000 transistors to 8 million and from 16Mhz to 4,000Mhz. The mind boggles.