Intel’s Keynote at CES 2019: 10nm, Ice Lake, Lakefield, Snow Ridge, Cascade Lake

by Ian Cutress on January 7, 2019 7:45 PM EST

Stage 2: Datacenter

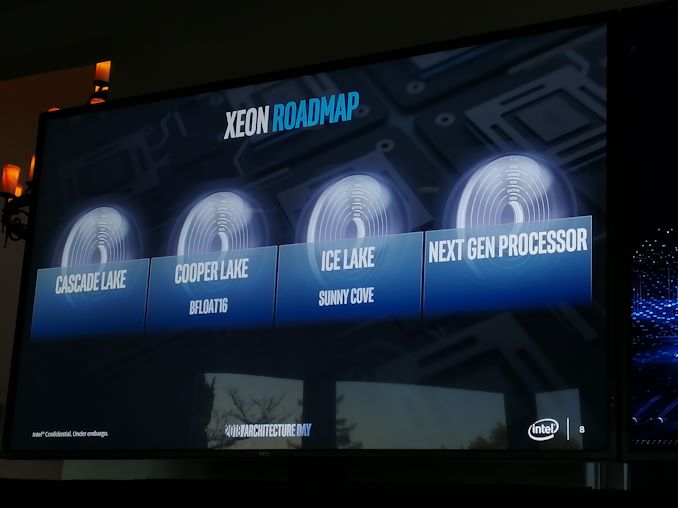

Intel’s Datacenter Group SVP, Navin Shenoy, also took to the stage at CES in order to discuss some new products in Intel’s portfolio, as well as to deliver updates on ones that were disclosed last year. Back in 2018, Intel held its Datacenter Summit in August, where it lifted the lid on Cascade Lake, Cooper Lake, and 10nm Ice Lake. Along with this, we saw new instruction support for AI and security as the top two areas of discussion.

Cascade Lake: Get Yours Today

Intel’s first generation of Xeon Scalable processors, Skylake-SP, was launched over 18 months ago. We’ve been hearing about the update to that family, Cascade Lake-SP, for a while now, along with its brother Cascade Lake-AP and how it will tackle the market. The announcement today from Intel is that the company is now shipping Cascade Lake for revenue.

This means, to be crystal clear, that select customers are now purchasing production-quality processors. What this doesn’t mean is retail availability. These select customers are part of Intel’s early sampling program, and have likely been working with engineering samples for several months. These customers are likely the big cloud providers, the AWS / Google / Azure / Baidus of the world.

It’s worth pointing out that at its Datacenter Summit, Intel said that half of all the Xeons it sold were ‘custom’ processor configurations that were not sold through its distributors – these parts are often described as ‘off roadmap’. When Intel says Cascade Lake-SP is shipping for revenue to select customers, it is likely that those customers are purchasing these off-roadmap processors. They might be running at a higher TDP than Intel expects for the commercial parts, or have different core/cache/frequency/memory configurations as and when they are needed.

One of the big draws for Cascade Lake is Intel’s Optane DC Persistent Memory support, which will enable several terabytes of memory per socket, but also the hardware security patches for Spectre v2. Businesses who want to be sure their hardware is patched can guarantee security if it's in the hardware, rather than relying on a firmware/software stack. So this might be part of why Intel’s demand for 14nm CPUs is at an all-time high and outstripping supply – if a company wants to be 100% sure it is protected, they need the hardware with baked-in security.

The full retail launch of Cascade Lake is expected in 2019. Based on what we saw at Supercomputing in November, in a rolling slide deck at the booth of one of Intel’s OEM partners, that time frame looks to be somewhere from March to May.

Nervana for Inference: NNP-I coming in 2019

To date, when Intel has discussed the Nervana family of processors, we have only known about them in the context of large-scale neural network acceleration. The idea is that these big pieces of silicon are designed to accelerate the types of compute commonly found in neural network training, at performance and power efficiency levels above and beyond what CPUs and GPUs can do. Intel has disclosed that it has been working on that family of parts, NNP-L, for a while now, and we are still waiting on a formal launch. But in the meantime, Intel is announcing today that it is working on a part that's optimized for inference as well.

There are two parts to implementing machine learning with neural networks: making the network learn (training), and then using the trained network on new information to do its job (inference). The algorithms are often designed such that the more you can train a network, the more accurate it is and sometimes the less computationally intensive it is to apply it to an external problem. The more resources you put into training, the better. But the gap in compute between training and inference is several orders of magnitude: you need a big processor for training, but don’t need a big processor for inference. This is where Intel’s announcement comes in.
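To make the training/inference distinction concrete, here is a minimal Python sketch. It is a toy and not anything Intel has shown: the single-weight model, the sample data, and the names `train`, `infer`, and `multiply_adds` are all assumptions made up for illustration. Training loops over the data many times and adjusts the weight at each step, while inference is a single multiply with the finished weight.

```python
# Toy illustration only (hypothetical model and data, not a real workload):
# fit y = w * x by gradient descent, then use the fitted weight once.

def train(samples, epochs=200, lr=0.01):
    """Training: many passes over the data, nudging the weight each step."""
    w = 0.0
    multiply_adds = 0  # rough tally of arithmetic work, for comparison only
    for _ in range(epochs):
        for x, y in samples:
            error = w * x - y      # forward pass on one sample
            w -= lr * error * x    # gradient step for 0.5 * error**2
            multiply_adds += 3     # coarse estimate per sample
    return w, multiply_adds


def infer(w, x):
    """Inference: one forward pass with the already-trained weight."""
    return w * x


samples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # points on y = 2x
w, training_work = train(samples)
prediction = infer(w, 4.0)

print(f"learned weight: {w:.4f}")
print(f"rough training operations: {training_work}")
print(f"inference for x=4: {prediction:.4f} (one multiply)")
```

Even in this tiny case the training loop does well over a thousand arithmetic steps to produce a weight that inference then uses in a single operation. Real networks widen that gap enormously, which is the argument for separate training and inference silicon.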

The NNP-I is set to be a smaller version of the NNP-L and built specifically for inference, with Intel stating that it will be coming in 2019. Exact details are not being disclosed at this time, so we don't have any information on the interface (likely PCIe), power consumption, die size, architecture, etc. However, we can draw some parallels from Intel’s competition. NVIDIA has big Tesla V100 GPUs with HBM2 for training that can draw 300-350W each, with up to eight of them in a system at once. For inference, however, it has the Tesla P4, which is a small chip below 75W, and we’ve seen systems designed to hold 20 of NVIDIA's various inference processors at once. It is likely that this new NNP-I design is along the same lines.

Snow Ridge on 10nm: An SoC for Networking and 5G (Next-Gen Xeon-D?)

The Data Center Group will be making two specific announcements around 10nm. The first is disclosing the Snow Ridge family of processors, focused on networking and specifically targeting the wide array of 5G deployments coming up over the next decade. The purpose of Snow Ridge is to enable wireless access base stations and deployments, as well as functions required at the edge of the network, such as compute, virtualization, and potentially things like artificial intelligence.

Intel gave no other details. However, thinking back, I realise that we’ve heard this before from Intel. They already have processors on their roadmap focused specifically on networking, with 40 GbE support and features like QuickAssist Technology to accelerate networking cryptography: the Xeon-D line of processors. This makes me believe that Snow Ridge will be the name for the next generation of Xeon D, either the Xeon D-2500 or Xeon D-3100, depending on the power envelope Intel is going for.

Given this assumption, and the fact that Intel has said that this is a 10nm processor, I suspect we’re looking at a multi-core Sunny Cove enterprise design with integrated networking MACs and support for lots of storage and lots of ECC memory. There’s an outside chance that it might support Optane, allowing for bigger memory deployments, although I wouldn’t put money on it at this stage.

Ice Lake Xeon Scalable on 10nm

To finish up Intel’s announcements, Navin also talked about Ice Lake Xeon Scalable. At Intel’s Architecture Day, a processor was shown that was described as Ice Lake Xeon, so this is just Intel repeating the fact that they now have working silicon in the labs. There is still no word as to how Intel is progressing here, with question marks over the yields of the smaller dies, let alone the larger Xeon ones. Working silicon in this case is just a functional test to make sure it works – what comes now is the tuning for frequency, power, performance, and optimizing the silicon layout to get all three. I’m hoping that Intel keeps us apprised of its progress here.

What Happened at CES 2018, and why CES 2019 is Different

A memory that will stick in my mind is Intel’s CES 2018 announcements. At the heart of the show, we wanted to know about the state of Intel’s 10nm process, and details were not readily available. 10nm wasn’t mentioned in the keynote, and when I tried to ask then-CEO Brian Krzanich about it, another Intel employee hastily cut in to the conversation saying that nothing more would be said. In the end we got a single sentence from Gregory Bryant at an early morning presentation the day after the keynote, and that sentence was only after 10 minutes of saying how well Intel was executing. That single sentence was to say that Intel was shipping 10nm parts in 2017, although so far only two consumer products (in limited quantities, and region specific) have ever been seen.

This year, coming off the back of its Architecture Day last month, Intel is showing that it is becoming more open to discussing future products and roadmaps. A lot of us in the press and analyst community are actively encouraging this trend to continue, and the contrast between CES 2018 and CES 2019 is clear to see. Companies tend to hide or obfuscate details when product execution isn’t going to plan; now that Intel is starting to open up with details, the outlook is clearly returning to one with more optimism.

60 Comments


DanNeely - Tuesday, January 8, 2019 - link

I doubt it'd be the Automotive segment. They're not highly volume or idle power constrained; and already have a crapload of arm chips for all sorts of embedded control systems.

jjj - Tuesday, January 8, 2019 - link

They are very power constrained, far more than laptop even with EVs because charging is slow, the infrastructure limited and batteries are costly. And cars are about 80 million units per year so as high vols as laptops but much higher ASPs as the product needs to be a lot more reliable. Plus, the PC has no future, sales will be 0 in less than 10 years.

FunBunny2 - Tuesday, January 8, 2019 - link

years ago, the CEO of Intel (don't recall which one) said, "I'd rather have my chip in every Ford than every PC".

"Even basic vehicles have at least 30 of these microprocessor-controlled devices, known as electronic control units, and some luxury cars have as many as 100."

NYT: https://www.nytimes.com/2010/02/05/technology/05el... and that was 8 or 9 years ago.

Icehawk - Tuesday, January 8, 2019 - link

Uh huh, see ten years ago they said desktops were dead... and they aren’t. It will be a lot longer before they disappear

stephenbrooks - Tuesday, January 8, 2019 - link

Also the definition of "PC" keeps changing in these claims. Do they mean desktop, or laptop? What about a ChromeBook or MacBook - is that a PC? You can put linux on a ChromeBook now, so it's evolving towards being a general purpose PC rather than away. TVs and consoles are also gradually turning into PCs.

FunBunny2 - Tuesday, January 8, 2019 - link

it's surely arguable that most users of 'desktop' computers can do wordprocessing, email, and spreadsheets on a 1995 cpu. it would appear that only high-stakes gamers and the occasional data scientist really need Intel/AMD latest silicon.

HStewart - Tuesday, January 8, 2019 - link

I think you are thinking of ARM based CPU's - where multiple big cores maybe be required - for decades we have single core x86 base CPU and having addition smaller core for idle tasks would be benefit. I would believe that a single large core combine with 4 atom cores would be just as good a 2 large cores. Remember these are Sunny Core cores which are significantly faster than current cores.

FunBunny2 - Tuesday, January 8, 2019 - link

"I would believe that a single large core combine with 4 atom cores would be just as good a 2 large cores."

it remains true that 99.44% of applications are single-threaded, and embarrassingly parallel problems are as rare as hen's teeth. remember lo those few years ago when it was asserted that clock speed was as fast as possible and needed? all we'd need was more threads/cores. hasn't worked out that way. having lots o cores to run lots o apps at the 'same time' is a totally different problem, and GPU seems to have solved much of that.

HStewart - Tuesday, January 8, 2019 - link

I believe we are in alignment - but it will be interesting to see how Foveres lines up - I know we say my Samsung Galaxy S3 - 4 large core and 4 small cores are part of designed - but an ARM core is much less powerful core than a Core based x86 core.

As developer for 30 years, most of application depend on primary thread especially with user interface - and I can see how GPU could be more beneficial in have more units. But threads usually except for heavy compute situation are primary in idle state in most applications. For example check network connection for update status - or monitor a file - or post a message or something - typically a big while loop while thread is active - do something and go to sleep and repeat.

Even in heavy compute system, it would be better designed to break the task into chunks and let the thread - more yield of task, A lot of depends on OS - is very likely in same application - that it is all running on same core - it very possible that it requires additional logic to run it on additional core. I could see games using threads for AI and update character / bot movement on the playing field. How effective directly depends on how the OS is written and how the game is written. In addition it depends on how the CPU is designed - it possibly using more cores on system may actually slow things down for multiple reasons - system may run them at lower speed - plus what it takes to maintain the threads. But if believe it better to have less higher speed cores than more less powerful cores. But I also believe it much better if you need multiple cores - that it better to spread them across multiple CPU's. For example a quad cpu 8 core system would beat a single cpu 32 core system or a dual 16 core system. Because the cores can be run at higher speed.

The big question are we solving the problem of technology by throwing more cores into the picture, or should the architexture be change to make existing cores more efficient - this does not just mean clock speed - this is why the Sunny Cove changes in Ice Lake - look so exciting - it stead of patching the problem with more cores and higher frequency - revolutionize the design of system to handle the problem better.

Well like anything else on internet - this is my opinion - but part of those 30 years include 7 years of OS development and schooling include some Microprocessor design even though that was in 80's but I try to keep up with latest and that is why I come to forums like this.

MobiusPizza - Tuesday, January 8, 2019 - link

"Intel also mentioned software support, such as the new VNNI instructions, openVINO toolkit support, Cryptographic ISA instructions, and support for Overworld."

What is Overworld? The slide says OverWolf but even so what is that?