The NVIDIA Titan V Deep Learning Deep Dive: It's All About The Tensor Cores

by Nate Oh on July 3, 2018 10:15 AM EST

DAWNBench: Image Classification (CIFAR10)

In terms of real-world applicable performance, deep learning training is better described with time-to-accuracy and cost metrics. For DAWNBench, those measurements were the stipulations for each of the three subtests. For image classification with CIFAR10, these were:

- Training Time: Train an image classification model for the CIFAR10 dataset. Report the time needed to train a model with test set accuracy of at least 94%

- Cost: On public cloud infrastructure, compute the total time needed to reach a test set accuracy of 94% or greater, as outlined above. Multiply the time taken (in hours) by the cost of the instance per hour, to obtain the total cost of training the model
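As a rough illustration of how these two metrics relate, the following is a minimal sketch and not DAWNBench's own harness. The `train_one_epoch` and `evaluate` callables are hypothetical stand-ins for a real training loop and test-set evaluation, and the sketch assumes evaluation time counts toward the measured total, which may differ from how submissions were actually timed.

```python
import time
from typing import Callable


def time_to_accuracy(
    train_one_epoch: Callable[[], None],
    evaluate: Callable[[], float],
    target: float = 0.94,
    max_epochs: int = 100,
) -> float:
    """Return wall-clock seconds until test accuracy first reaches `target`.

    Both callables are hypothetical placeholders supplied by the caller.
    """
    start = time.perf_counter()
    for _ in range(max_epochs):
        train_one_epoch()
        # Stop the clock the first time the threshold is met.
        if evaluate() >= target:
            return time.perf_counter() - start
    raise RuntimeError(f"did not reach {target:.0%} within {max_epochs} epochs")


def training_cost(seconds: float, price_per_hour: float) -> float:
    """Cost metric: training time in hours multiplied by the hourly instance price."""
    return seconds / 3600.0 * price_per_hour
```

With this shape, a run that reaches the threshold in half an hour on an instance billed at a hypothetical $3.00 per hour would cost `training_cost(1800, 3.00)`, or $1.50.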

Here, we take two of the top-5 fastest CIFAR10 training implementations, both in PyTorch, and run them on our devices. The first, based on ResNet34, was created to run on an NVIDIA GeForce GTX 1080 Ti, while the second, based on ResNet18, was created to run on a single Tesla V100 (AWS p3.2xlarge). Because these are recent top entries to DAWNBench, we can consider them to be reasonably modern, while understanding that CIFAR10 is not a hugely intensive dataset.

For our purposes, the tiny images of CIFAR10 work fine. Training on a single node with a dataset like ImageNet, on non-professional hardware that could be as old as Kepler, may result in unacceptably long training times with convergence unlikely. That would be a useful result in its own right, but only once we have a fully detailed machine learning GPU benchmark suite.

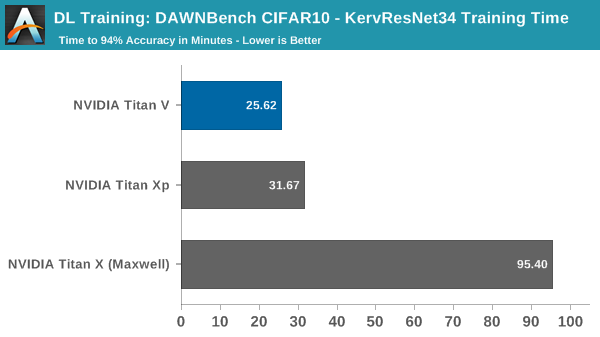

The first implementation was designed to train on a single GTX 1080 Ti, and the original submission noted that it took 35:37, or 35.6 minutes, to train to 94%.

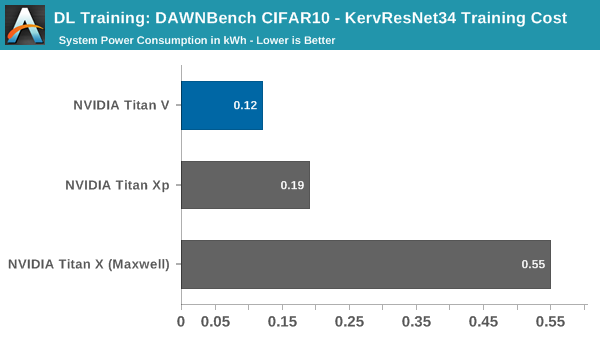

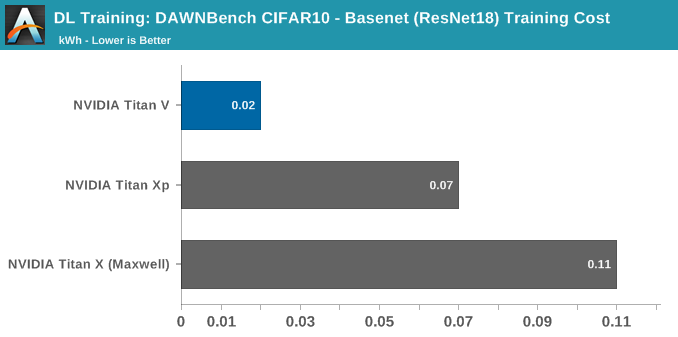

Even though we are training this on-premises, the cost metric is still useful for comparing graphics cards, though in this case we are talking about electricity differences of a few cents.
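To see why on-premises differences come down to cents, here is a back-of-the-envelope sketch. The 400W system draw and the $0.12 per kWh electricity rate are purely hypothetical values chosen for illustration, not measurements from this review; only the 35.6 minute run length comes from the original submission.

```python
def electricity_cost(avg_watts: float, minutes: float, price_per_kwh: float) -> float:
    """Energy cost of a run: kilowatts x hours x price per kilowatt-hour."""
    kilowatt_hours = (avg_watts / 1000.0) * (minutes / 60.0)
    return kilowatt_hours * price_per_kwh


# Hypothetical inputs: 400 W average system draw, $0.12 per kWh.
cost = electricity_cost(avg_watts=400.0, minutes=35.6, price_per_kwh=0.12)
print(f"${cost:.3f}")
```

Under those assumptions the whole run costs a little under 3 cents, so even a large relative difference between cards amounts to only a cent or two.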

Using the Titan V in this circumstance does not take advantage of tensor cores, only its general improvements over Pascal. In this unoptimized setting, it runs around 20% faster than the Titan Xp. Even so, peak system power consumption drops by around 80W, though how much of that is due to the graphics card is unclear because of how the model deals with CPU usage and data pre-processing.

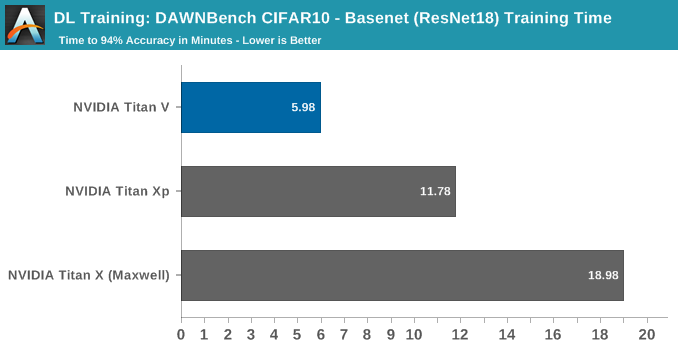

For the second implementation, the original submission reported the V100 training to 94% in 5:41, or 5.7 minutes.
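For reference, both reported submission times convert from minutes:seconds to decimal minutes in the same way. The helper below is only an illustrative sketch, and `mmss_to_minutes` is a made-up name.

```python
def mmss_to_minutes(stamp: str) -> float:
    """Convert an 'MM:SS' string into decimal minutes."""
    minutes, seconds = stamp.split(":")
    return int(minutes) + int(seconds) / 60.0


print(round(mmss_to_minutes("35:37"), 1))  # 35.6 (first submission, GTX 1080 Ti)
print(round(mmss_to_minutes("5:41"), 1))   # 5.7 (second submission, V100)
```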

Without delving into and accurately analyzing the model code, it's not clear whether it takes advantage of tensor cores or not, since the model is plenty fast on Maxwell, let alone Volta.

65 Comments

Ryan Smith - Tuesday, July 3, 2018

To clarify: SXM3 is the name of the socket used for the mezzanine form factor cards for servers. All Titan Vs are PCIe.

Drumsticks - Tuesday, July 3, 2018

Nice review. Will anandtech be putting forth an effort to cover the ML hardware space in the future? AMD and Intel both seem to have plans here.

The V100 and Titan V should have well over 100TF according to Nvidia in training and inference, if I remember correctly, but nothing I saw here got close in actuality. Were these benches not designed to hit those numbers, or are those numbers just too optimistic in most scenarios to occur?

Ryan Smith - Tuesday, July 3, 2018

"The V100 and Titan V should have well over 100TF according to Nvidia in training and inference"

The Titan V only has 75% of the memory bandwidth of the V100. So it's really hard to hit 100TF. Even in our Titan V preview where we ran a pure CUDA-based GEMM benchmark, we only hit 97 TFLOPS. Meanwhile real-world use cases are going to be lower still, as you can only achieve those kinds of high numbers in pure tensor core compute workloads.

https://www.anandtech.com/show/12170/nvidia-titan-...

Nate Oh - Tuesday, July 3, 2018

To add on to Ryan's comment, 100+ TF is best-case (i.e. synthetic) performance based on peak FMA ops on individual matrix elements, which only comes about when everything perfectly qualifies for tensor core acceleration, no memory bottleneck by reusing tons of register data, etc.

remedo - Tuesday, July 3, 2018

Nate, I hope you could have included more TensorFlow/Keras specific benchmarks, given that the majority of deep learning researchers/developers are now using TensorFlow. Just compare the GitHub stats of TensorFlow vs. other frameworks. Therefore, I feel that this article missed some critical benchmarks in that regard. Still, this is a fascinating article, and thank you for your work. I understand that Anandtech is still new to deep learning benchmarks compared to your decades of experience in CPU/Gaming benchmark. If possible, please do a future update!

Nate Oh - Tuesday, July 3, 2018

Several TensorFlow benchmarks did not make the cut for today :) We were very much interested in using it, because amongst other things it offers global environmental variables to govern tensor core math, and integrates somewhat directly with TensorRT. However, we've been having issues finding and using one that does all the things we need it to do (and also offers different results than just pure throughput), and I've gone so far as trying to rebuild various models/implementations directly in Python (obviously to no avail, as I am ultimately not an ML developer).

According to people smarter than me (i.e. Chintala, and I'm sure many others), if it's only utilizing standard cuDNN operations then frameworks should perform about the same; if there are significant differences, a la the inaugural version of Deep Learning Frameworks Comparison, it is because it is poorly optimized for TensorFlow or whatever given framework. From a purely GPU performance perspective, usage of different frameworks often comes down to framework-specific optimization, and not all reference implementations or benchmark suite tests do what we need it to do out-of-the-box (not to mention third-party implementations). Analyzing the level of TF optimization is developer-level work, and that's beyond the scope of the article. But once benchmark suites hit their stride, that will resolve that issue for us.

For Keras, I wasn't able to find anything that was reasonably usable by a non-developer, though I could've easily missed something (I'm aware of how it relates to TF, Theano, MXNet, etc). I'm sure that if we replaced PyTorch with Tensorflow implementations, we would get questions on 'Where's PyTorch?' :)

Not to say your point isn't valid, it is :) We're going to keep on looking into it, rest assured.

SirPerro - Thursday, July 5, 2018

Keras has some nice examples in its github repo to be run with the tensorflow backend but for the sake of benchmarking it does not offer anything that's not covered by the pure tensorflow examples, I guess.

BurntMyBacon - Tuesday, July 3, 2018

I believe the GTX Titan with memory clock 6Gbps and memory bus width of 384 bits should have a memory bandwidth of 288GB/sec rather than the listed 228GB/sec. Putting that aside, this is a nice review.

Nate Oh - Tuesday, July 3, 2018

Thanks, fixed

Jon Tseng - Tuesday, July 3, 2018

Don't be silly. All we care about is whether it can run Crysis at 8K.