The AnandTech Coffee Lake Review: Initial Numbers on the Core i7-8700K and Core i5-8400

by Ian Cutress on October 5, 2017 9:00 AM EST

Silicon and Process Nodes: 14++

Although Intel was somewhat reserved in our pre-briefing, initially blanket-labeling the process node for these chips as ‘14nm’, we can confirm that Intel’s newest ‘14++’ manufacturing process is being used for these 8th Generation processors. This is Intel’s third crack at a 14nm process, following Broadwell through Skylake (14) and Kaby Lake (14+), and now Coffee Lake (14++).

With the 8th Generation of processors, Intel is moving away from having the generation correlate to both the process node and microarchitecture. As Intel’s plans to shrink its process nodes have become elongated, Intel has decided that it will use multiple process nodes and microarchitectures across a single generation of products to ensure that every update cycle has a process node and microarchitecture that Intel feels best suits that market. A lot of this is down to product maturity, yields, and progress on the manufacturing side.

Intel's Core Architecture Cadence (8/20)

| Core Generation | Microarchitecture | Process Node | Release Year |
|---|---|---|---|
| 2nd | Sandy Bridge | 32nm | 2011 |
| 3rd | Ivy Bridge | 22nm | 2012 |
| 4th | Haswell | 22nm | 2013 |
| 5th | Broadwell | 14nm | 2014 |
| 6th | Skylake | 14nm | 2015 |
| 7th | Kaby Lake | 14nm+ | 2016 |
| 8th | Kaby Lake Refresh | 14nm+ | 2017 |
| 8th | Coffee Lake | 14nm++ | 2017 |
| 8th | Cannon Lake | 10nm | 2018? |
| 9th | Ice Lake? ... | 10nm+ | 2018? |
| Unknown | Cascade Lake (Server) | ? | ? |

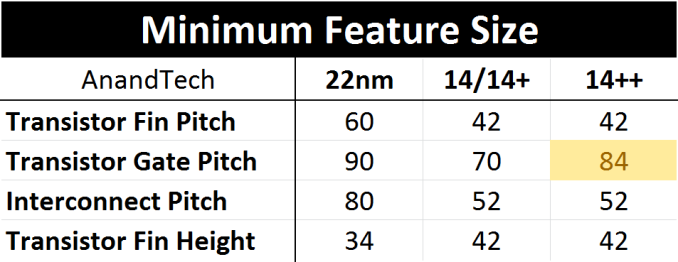

Kaby Lake was advertised as using a 14+ node with slightly relaxed manufacturing parameters and a new FinFET profile. This was to allow for higher frequencies and better overclocking, although nothing was fundamentally changed in the core manufacturing parameters. With Coffee Lake at least, the minimum gate pitch has increased from 70nm to 84nm, with all other features being equal.

Increased gate pitch moves transistors further apart, forcing a lower current density. This allows for higher leakage transistors, meaning higher peak power and higher frequency at the expense of die area and idle power.

Normally Intel aims to improve its process every generation, but this seems like a step ‘back’ in some of the metrics in order to gain performance. The truth of the matter is that back in 2015, we were expecting Intel to be selling 10nm processors en masse by now. As delays have crept into that timeline, the 14++ node is holding over until 10nm is on track. Intel has already stated that 10+ is likely to be the first node on the desktop, which given the track record on 14+ and 14++ might be a relaxed version of 10 in order to hit performance/power/yield targets, with some minor updates. Conceptually, Intel seems to be drifting towards separate low-power and high-performance process nodes, with the former coming first.

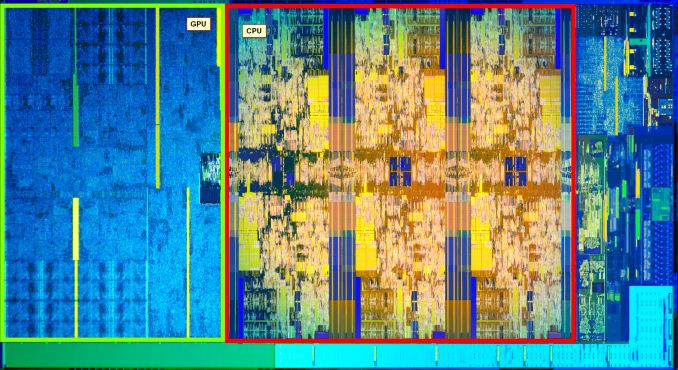

Of course, changing the gate pitch is expected to increase the die area. With thanks to HKEPC (via Videocardz), we can already see a six-core i7-8700K silicon die compared to a quad-core i7-7700K.

The die area of the Coffee Lake 6+2 design (six cores and GT2 graphics) sits at ~151 mm2, compared to ~125 mm2 for the Kaby Lake 4+2 processor: a 26mm2 increase. This increase is mainly due to the two extra cores, although there is also a minor adjustment in the integrated graphics to support HDCP 2.2, not to mention any unpublished changes Intel has made to its designs between Kaby Lake and Coffee Lake.

The following calculations are built on assumptions and contain a margin of error

With the silicon floor plan, we can calculate that the CPU cores (plus cache) account for 47.3% of the die, or 71.35 mm2. Dividing by six gives a value of 11.9 mm2 per core, which means that it takes 23.8 mm2 of die area for two cores. Out of the 26mm2 increase then, 91.5% of it is for the CPU area, and the rest is likely accounted for by the change in the gate pitch across the whole processor.

The Coffee Lake 4+2 die would then be expected to be around ~127 mm2, making a 2mm2 increase over the equivalent Kaby Lake 4+2, although this is well within the margin of error for measuring these processors. We are expecting to see some overclockers delid the quad-core processors soon after launch.

In previous Intel silicon designs, when Intel was ramping up its integrated graphics, we were surpassing 50% of the die area being dedicated to graphics. In this 6+2 design, the GPU area accounts for only 30.2% of the floor plan as provided, which is 45.6 mm2 of the full die.
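The die-area arithmetic above can be reproduced in a few lines. This is only a sketch using the approximate figures quoted in this article; the constant and function names are illustrative, and the results inherit the same margin of error as the floor-plan estimate.

```python
# Approximate figures quoted in the article (all carry a margin of error).
COFFEE_LAKE_6_2_MM2 = 151.0  # six cores + GT2 graphics
KABY_LAKE_4_2_MM2 = 125.0    # four cores + GT2 graphics
CPU_AREA_MM2 = 71.35         # cores plus cache, estimated from the floor plan
GPU_SHARE = 0.302            # GPU fraction of the floor plan


def estimate_die_breakdown():
    """Return the derived die-area estimates as a dictionary."""
    per_core = CPU_AREA_MM2 / 6                       # area of one core + cache slice
    two_cores = 2 * per_core                          # area added by the two extra cores
    increase = COFFEE_LAKE_6_2_MM2 - KABY_LAKE_4_2_MM2
    return {
        "per_core_mm2": per_core,
        "two_cores_mm2": two_cores,
        "die_increase_mm2": increase,
        "cpu_share_of_increase": two_cores / increase,
        # Hypothetical quad-core Coffee Lake die: remove two cores from the 6+2 die.
        "coffee_lake_4_2_mm2": COFFEE_LAKE_6_2_MM2 - two_cores,
        "gpu_mm2": GPU_SHARE * COFFEE_LAKE_6_2_MM2,
    }


if __name__ == "__main__":
    for name, value in estimate_die_breakdown().items():
        print(f"{name}: {value:.2f}")
```

Rounded, this gives 11.9 mm2 per core, 23.8 mm2 for two cores, 91.5% of the 26mm2 increase, a ~127 mm2 quad-core die and 45.6 mm2 of GPU area, matching the numbers in the text.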

Memory Support on Coffee Lake

With a new processor generation comes an update to memory support. There is always a small amount of confusion here about what Intel calls ‘official memory support’ and what the processors can actually run. Intel’s official memory support is typically a guarantee, saying that in all circumstances, with all processors, this memory speed should work. However, motherboard manufacturers might offer speeds over 50% higher in their specification sheets, which Intel technically counts as an overclock.

This is usually seen as Intel being conservative and leaving its processors plenty of headroom in order to avoid RMAs and maintain stability. In most cases this is a good thing: there are only a few niche scenarios where super high-speed memory equates to tangible performance gains*, but they do exist.

*Based on previous experience, but pending a memory scaling review

For our testing at least, our philosophy is that we test at the CPU manufacturers’ recommended setting. If there is a performance gain to be had from slightly faster memory, then it pays dividends for the manufacturer to set that as the limit for official memory support. This way, there is no argument on what the rated performance of the processor is.

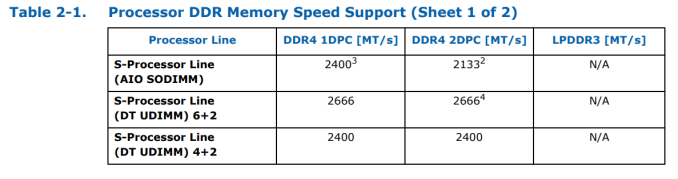

For the new generation, Intel is supporting DDR4-2666 for the six-core parts and DDR4-2400 for the quad-core parts, in both 1DPC (one DIMM per channel) and 2DPC modes. This should make it relatively simple, compared to AMD’s memory support differing on DPC and type of memory.

It stays simple until we talk about AIO designs using these processors, which typically require SODIMM memory. For these parts, both quad-core and hex-core, Intel is supporting DDR4-2400 at 1DPC and DDR4-2133 at 2DPC. LPDDR3 support is dropped entirely. The reason for supporting a reduced memory frequency in an AIO environment with SODIMMs is that these motherboards typically run their traces chained between the memory slots, rather than in a T-Topology, which helps with timing synchronization. Intel has made the T-Topology part of the specification for desktop motherboards, but not for AIO or integrated ones, which explains the difference in DRAM speed support.
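As a compact summary, the official support matrix described above can be written as a lookup table. This is a minimal sketch: the key layout and the names `OFFICIAL_DDR4_SPEED`, `official_speed` and `is_overclock` are illustrative assumptions, not an Intel-defined format, and the speeds are simply those stated in the text.

```python
# Officially supported DDR4 speeds in MT/s, as described in the article.
# Key: (form_factor, core_count, dimms_per_channel). Layout is an assumption.
OFFICIAL_DDR4_SPEED = {
    ("desktop", 6, 1): 2666,
    ("desktop", 6, 2): 2666,
    ("desktop", 4, 1): 2400,
    ("desktop", 4, 2): 2400,
    ("aio", 6, 1): 2400,   # SODIMM-based designs
    ("aio", 6, 2): 2133,
    ("aio", 4, 1): 2400,
    ("aio", 4, 2): 2133,
}


def official_speed(form_factor: str, cores: int, dpc: int) -> int:
    """Look up the officially supported DDR4 speed for a configuration."""
    return OFFICIAL_DDR4_SPEED[(form_factor, cores, dpc)]


def is_overclock(form_factor: str, cores: int, dpc: int, configured: int) -> bool:
    """True if the configured speed exceeds official support for that setup."""
    return configured > official_speed(form_factor, cores, dpc)


if __name__ == "__main__":
    print(official_speed("desktop", 6, 2))       # six-core desktop, two DIMMs per channel
    print(is_overclock("desktop", 4, 1, 3200))   # faster than the quad-core rating
```

Anything above the looked-up value falls into what Intel technically counts as an overclock, even if a motherboard's specification sheet lists it.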

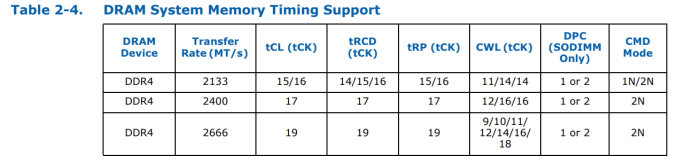

These supported frequencies follow JEDEC official sub-timings. Experienced system builders will be used to DDR4-2133 at a CAS Latency of 15, but as we increase the speed of the modules, the latency in clock cycles increases to compensate.

Intel’s official sub-timing support at DDR4-2666 is 19-19-19. Outside of enterprise modules, that memory does not really exist, because memory manufacturers seem able to mint DDR4-2666 16-17-17 modules fairly easily, and these processors are typically fine with those sub-timings. CPU manufacturers typically only state ‘supported frequency at JEDEC sub-timings’ and do not go into sub-timing discussions, because most users care more about the memory frequency. If time permits, it would be interesting to see just how much of a performance deficit the official JEDEC sub-timings impose compared to the memory that is actually on sale.
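To put the sub-timings on a common footing, CAS latency in cycles can be converted to nanoseconds. The sketch below uses the standard conversion (DDR memory transfers twice per clock, so one clock lasts 2000 / data rate nanoseconds) and the nominal data rates; the function name is illustrative.

```python
def cas_latency_ns(data_rate_mts: int, cas_cycles: int) -> float:
    """Convert a CAS latency in clock cycles to nanoseconds.

    DDR transfers data twice per clock, so the clock period in ns is
    2000 / data_rate (with the data rate in MT/s).
    """
    return cas_cycles * 2000.0 / data_rate_mts


if __name__ == "__main__":
    configs = [
        ("DDR4-2133 CL15 (JEDEC)", 2133, 15),
        ("DDR4-2666 CL19 (JEDEC)", 2666, 19),
        ("DDR4-2666 CL16 (retail)", 2666, 16),
    ]
    for label, rate, cl in configs:
        print(f"{label}: {cas_latency_ns(rate, cl):.2f} ns")
```

Both JEDEC settings work out to roughly 14 ns, while the DDR4-2666 CL16 modules come in at about 12 ns, which is why the JEDEC cycle count has to rise with frequency and why retail kits with tighter timings have a lower absolute latency.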

222 Comments


mkaibear - Saturday, October 7, 2017

Well, I'd broadly agree with that!

There are latency issues with that kind of approach but I'm sure they'd be solvable. It'll be interesting to see what happens with Intel's Mesh when it inevitably trickles down to the lower end / AMD's Infinity Fabric when they launch their APUs.

mapesdhs - Tuesday, October 10, 2017

Such an idea is kinda similar to SGI's shared memory designs. Problem is, scalable systems are expensive, and these days the issue of compatibility is so strong, making anything new and unique is very difficult, companies just don't want to try out anything different. SGI got burned with this re their VW line of PCs.

boeush - Saturday, October 7, 2017

I think it's a **VERY** safe bet that most systems selling with an i7 8700/k will also include some sort of a discrete GPU. It's almost unimaginable that anyone would buy/build a system with such a CPU but no better GPU than integrated graphics.

Which makes the iGPU a total waste of space and a piece of useless silicon that consumers are needlessly paying for (because every extra square inch of die area costs $$$).

For high-end CPUs like the i7s, it would make much more sense to ditch the iGPU and instead spend that extra silicon to add an extra couple of cores, and a ton more cache. Then it would be a far better CPU for the same price.

So I'm totally with the OP on this one.

mkaibear - Sunday, October 8, 2017

You need a better imagination!

Of the many hundreds of computers I've bought or been responsible for speccing for corporate and educational entities, about half have been "performance" oriented (I'd always spec a decent i5 or i7 if there's a chance that someone might be doing something CPU limited - hardware is cheap but people are expensive...) Of those maybe 10% had a discrete GPU (the ones for games developers and the occasional higher-up's PC). All the rest didn't.

From chatting to my fellow managers at other institutions this is basically true across the board. They're avidly waiting for the Ryzen APUs to be announced because it will allow them to actually have competition in the areas they need it!

boeush - Sunday, October 8, 2017

It's not surprising to see business customers largely not caring about graphics performance - or about the hit to CPU performance that results from splitting the TDP budget with the iGPU...

In my experience, business IT people tend to be either penny-wise and pound-foolish, or obsessed with minimizing their departmental TCO while utterly ignoring company performance as a whole. If you could get a much better-performing CPU for the same money, and spend an extra $40 for a discrete GPU that matches or exceeds the iGPU's capabilities - would you care? Probably not. Then again, that's why you'd stick with an i5 - or a lower-grade i7. Save a hundred bucks on hardware per person per year; lose a few thousand over the same period in wasted time and decreased productivity... I've seen this sort of penny-pinching miscalculation too many times to count. (But yeah, it's much easier to quantify the tangible costs of hardware, than to assess/project the intangibles of sub-par performance...)

But when it comes specifically to the high-end i7 range - these are CPUs targeted specifically at consumers, not businesses. Penny-pinching IT will go for i5s or lower-grade i7s; large-company IT will go for Xeons and skip the Core line altogether.

Consumer builds with high-end i7s will always go with a discrete GPU (and often more than one at a time.)

mkaibear - Monday, October 9, 2017

That's just not true dude. There are a bunch of use cases which spec high end CPUs but don't need anything more than integrated graphics. In my last-but-one place, for example, they were using a ridiculous Excel spreadsheet to handle the manufacturing and shipping orders which would bring anything less than an i7 with 16GB of RAM to its knees. Didn't need anything better than integrated graphics but the CPU requirements were ridiculous.

Similarly in a previous job the developers had ludicrous i7 machines with chunks of RAM but only using integrated graphics.

Yes, some IT managers are penny wise and pound foolish, but the decent ones who know what they're doing spend the money on the right CPU for the job - and as I say a serious number of use cases don't need a discrete GPU.

...besides it's irrelevant because the integrated GPU has zero impact on performance for modern Intel chips, as I said the limit is thermal not package size.

If Intel whack an extra 2 cores on and clock them at the same rate their power budget is going up by 33% minimum - so in exchange for dropping the integrated GPU you get a chip which can no longer be cooled by a standard air cooler and has to have something special on there, adding cost and complexity.

Sticking with integrated GPUs is a no-brainer for Intel. It preserves their market share in that environment and has zero impact for the consumer, even gaming consumers.

boeush - Monday, October 9, 2017

Adding 2 cores to a 6-core CPU drives the power budget up by 33% if and **ONLY IF** all cores are actually getting fully utilized. If that is the case, then the extra performance from those extra 2 cores would be indeed actually needed! (At least on those occasions, and would be, therefore, sorely missed in a 6-core chip.) Otherwise, any extra cores would be mostly idle, not significantly impacting power utilization, cooling requirements, or maximum single-thread performance.

Equally important to the number of cores is the amount of cache. Cache takes up a lot of space, doesn't generate all that much heat (compared to the actual CPU pipeline components), but can boost performance hugely, especially on some tasks that are memory-constrained. Having more L1/L2/L3 cache would provide a much better bang for the buck when you need the CPU grunt (and therefore a high-end i7), than the waste of an iGPU (eating up ~50% of die area) ever could.

Again, when you're already spending top dollar on an i7 8700/k (presumably because you actually need high CPU performance), it makes little sense that you go, "well, I'd rather have **LOWER** CPU performance, than be forced to spend an extra $40 on a discrete GPU (that I could then reuse on subsequent system builds/upgrades for many years to come)"...

mkaibear - Tuesday, October 10, 2017

Again, that's not true. Adding 2 cores to a 6 core CPU means that unless you find some way to prevent your OS from scheduling threads on it then all those cores are going to end up used somewhat - which means that you have to plan for your worst case TDP not your best case TDP - which means you have to engineer a cooling solution which will work for the full 8 core CPU, increasing costs to the integrator and the end user. Why do you think Intel's worked so hard to keep the 6-core CPU within a few watts of the old 4-core CPU?

In contrast an iGPU can be switched on or off and remain that way, the OS isn't going to assign cores to it and result in it suddenly dissipating more power.

And again you're focussing on the extremely limited gamer side of things - in the real world you don't "reuse the graphics card for many years to come", you buy a machine which does what you need it to and what you project you'll need it to, then replace it at the end of whatever period you're amortising the purchase over. Adding a $40 GPU and paying the additional electricity costs to run that GPU over time means your TCO is significantly increased for zero benefits, except in a very small number of edge cases in which case you're probably better off just getting a HEDT system anyway.

The argument about cache might be a better one to go down, but the amount of cache in desktop systems doesn't have as big an impact on normal workflow tasks as you might expect - otherwise we'd see greater segmentation in the marketplace anyway.

In short, Intel introducing desktop processors without iGPUs makes no sense for them at all. It would benefit a small number of enthusiasts at a cost of winding up a large number of system integrators and OEMs, to say nothing of a huge stack of IT Managers across the industry who would suddenly have to start fitting and supporting discrete GPUs across their normal desktop systems. Just not a good idea, economically, statistically or in terms of customer service.

boeush - Tuesday, October 10, 2017

The TDP argument as you are trying to formulate it is just silly. Either the iGPU is going to be in fact used on a particular build, or it's going to be disabled in favor of headless operation or a discrete GPU. If the iGPU is disabled, then it is the very definition of all-around WASTE - a waste of performance potential for the money, conversely/accordingly a waste of money, and a waste in terms of manufacturing/materials efficiency. On the other hand, if the iGPU is enabled, it is actually more power-dense than the CPU cores - meaning you'll have to budget even more heavily for its heat and power dissipation, than you'd have for any extra CPU cores. So in either case, your argument makes no sense.

Remember, we are talking about the high end of the Core line. If your build is power-constrained, then it is not high-performance and you have no business using a high-end i7 in it. Stick to i5/i3, or the mobile variants, in that case. Otherwise, all these CPUs come with a TDP. Whether the TDP is shared with an iGPU or wholly allocated to CPU is irrelevant: you still have to budget/design for the respective stated TDP.

As far as "real-world", I've seen everything from companies throwing away perfectly good hardware after a year of use, to people scavenging parts from old boxes to jury-rig a new one in a pinch.

And again, large companies with big IT organizations will tend to forego the Core line altogether, since the Xeons provide better TCO economy due to their exclusive RAS features. The top-end i7 really is not a standard 'business' CPU, and Intel really is making a mistake pushing it with the iGPU in tow. That's where they've left themselves wide-open to attack from AMD, and AMD has attacked them precisely along those lines (among others.)

Lastly, don't confuse Intel's near-monopolistic market segmentation engineering with actual consumer demand distribution. Just because Intel has chosen to push an all-iGPU lineup at any price bracket short of exorbitant (i.e. barring the so-called "enthusiast" SKUs), doesn't mean the market isn't clamoring for a more rational and effective alternative.

mkaibear - Wednesday, October 11, 2017

Sheesh. Where to start?

1) Yes, you're right, if the iGPU isn't being used then it will be disabled, and therefore you don't need to cool it. Conversely, if you have additional cores then your OS *will* use them, and therefore you *do* need to cool them.

iGPU doesn't draw very much power at all. HD2000 drew 3W. The iGPU in the 7700K apparently draws 6W so I assume the 8700K with a virtually identical iGPU draws just as much (figures available via your friendly neighbourhood google). Claiming the iGPU has a higher power budget than the CPU cores is frankly ridiculous. (in fact it also draws less than .2W when it's shut down which means that having it in there is far outweighed by the additional thermal sink available, but anyway)

2) Large companies with big IT organisations don't actually forego the Core line altogether and go with Xeons. They could if they wanted to, but in general they still use off-the-shelf Dells and HPs for everything except extremely bespoke setups - because, as I previously mentioned, "hardware is cheap, people are expensive" - getting an IT department to build and maintain bespoke computers is hilariously expensive. No-one is arguing that for an enthusiast building their own computer the option of the extra cores would be nice, but my point all along has been that Intel isn't going to risk sacrificing their huge market share in the biggest market to gain a slice of a much smaller market. That would be extremely bad business.

3) The market isn't "clamoring for a more rational and effective alternative" because if it was then Ryzen would have flown off the shelves much faster than it did.

Bottom line: business IT wants simple solutions, the fewer parts the better. iGPUs on everything fulfil far more needs than dGPUs for some and iGPUs for others. iGPUs make designing systems easier, they make swapouts easier, they make maintenance easier, they reduce TCO, they reduce RMAs and they just make IT staff's lives easier. I've run IT for a university, a school and a manufacturing company, and for each of them the number of computers which needed a fast CPU outweighed the number of computers which needed a dGPU by a factor of at least 10:1 - and the university I worked for had a world-leading art/media/design dept and a computer game design course which all had dGPUs. The average big business has even less use for dGPUs than the places I've worked.

If you want to keep trying to argue this then can you please answer one simple question: why do you think it makes sense for Intel to prioritise a very small area in which they don't have much market share over a very large area in which they do? That seems the opposite of what a successful business should do.