Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST. Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

AMD's EPYC Server CPU

If you have read Ian's articles about Zen and EPYC in detail, you can skip this page. For those of you who need a refresher, let us quickly review what AMD is offering.

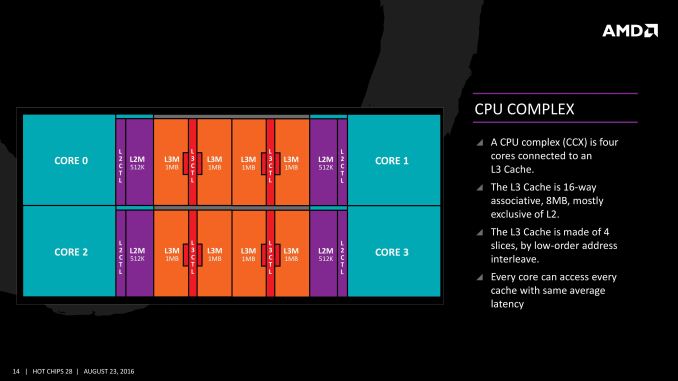

The basic building block of EPYC and Ryzen is the CPU Complex (CCX), which consists of four vastly improved "Zen" cores connected to an L3 cache. In a full configuration, each core technically has its own 2 MB of L3, but access to the other 6 MB is rather speedy. Within a CCX we measured 13 ns to access the first 2 MB, and 15 to 19 ns for the rest of the 8 MB L3 cache, a difference that's hardly noticeable in the grand scheme of things. The L3 cache acts as a mostly exclusive victim cache.
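To make "victim cache" concrete, here is a toy Python model. It is an illustrative simplification, not AMD's actual policy: the L3 here is fully exclusive (the real one is only mostly exclusive), it is filled only with lines evicted from the L2, and a line that hits in the L3 moves back up into the L2 and is dropped from the L3. The capacities (counted in cache lines) and the LRU replacement policy are assumptions chosen for readability.

```python
from collections import OrderedDict


class VictimCacheModel:
    """Toy two-level hierarchy: a private L2 backed by an exclusive victim L3.

    Both levels use LRU replacement (an assumption); in each OrderedDict the
    first entry is the least recently used line."""

    def __init__(self, l2_lines: int, l3_lines: int) -> None:
        self.l2 = OrderedDict()
        self.l3 = OrderedDict()
        self.l2_lines = l2_lines
        self.l3_lines = l3_lines

    def _insert_l2(self, addr: int) -> None:
        self.l2[addr] = None
        if len(self.l2) > self.l2_lines:
            # The LRU line leaving the L2 becomes a "victim" and goes to the L3.
            victim, _ = self.l2.popitem(last=False)
            self.l3[victim] = None
            if len(self.l3) > self.l3_lines:
                self.l3.popitem(last=False)  # oldest victim leaves the hierarchy

    def access(self, addr: int) -> str:
        if addr in self.l2:
            self.l2.move_to_end(addr)  # refresh LRU position
            return "L2 hit"
        if addr in self.l3:
            # Exclusive behaviour: the line moves up and is removed from the L3.
            del self.l3[addr]
            self._insert_l2(addr)
            return "L3 hit"
        # A fill from memory goes straight into the L2; the L3 only receives evictions.
        self._insert_l2(addr)
        return "miss"
```

With a two-line L2, touching lines 1, 2 and 3 pushes line 1 into the L3; touching line 1 again is an L3 hit that moves it back to the L2 and evicts line 2 into the L3, so no line ever lives in both levels at once.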

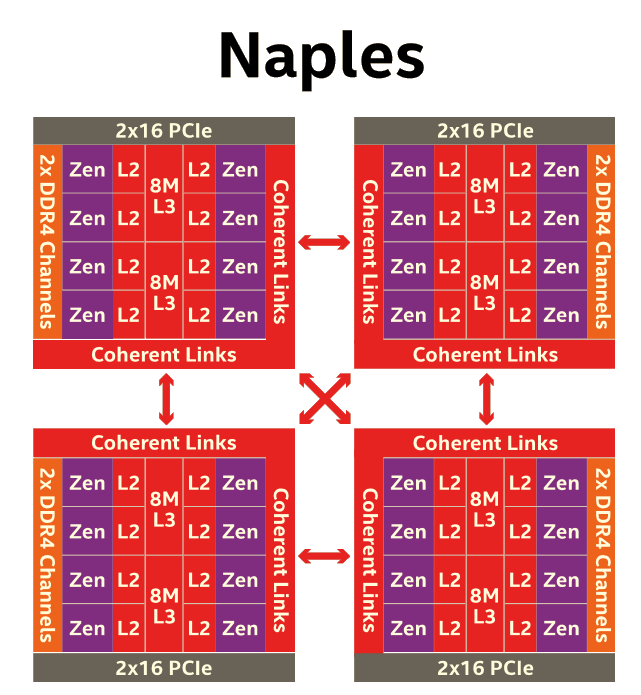

Two CCXes make up one Zeppelin die. A custom fabric, AMD's Infinity Fabric, ties together the two CCXes, their two 8 MB L3 caches, two DDR4 channels, and the integrated PCIe lanes. That topology is not without drawbacks, though: it means that there are two separate 8 MB L3 caches instead of one single 16 MB LLC. This has all kinds of consequences. For example, the prefetchers of each core make sure that data from the L3 is brought into the L1 when it is needed. Meanwhile, each CCX has its own separate and dedicated SRAM snoop directory (not inside the L3, so no capacity hit) that keeps track of 7 possible states. In other words, the local L3 cache communicates very quickly with everything inside the same CCX, but every data exchange between two CCXes comes with a tangible latency penalty.
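Because only cores in the same CCX share an L3, it can help to know which cores those are before placing threads that exchange a lot of data. The sketch below uses the counts from the text (four cores per CCX, two CCXes per die), but the numbering scheme is a hypothetical assumption: it treats physical cores as numbered contiguously, CCX by CCX, which real operating systems do not guarantee, especially with SMT enabled.

```python
from typing import NamedTuple

CORES_PER_CCX = 4  # from the text: four Zen cores per CCX
CCX_PER_DIE = 2    # from the text: two CCXes per Zeppelin die


class CoreLocation(NamedTuple):
    die: int
    ccx: int  # CCX index within its die


def locate(core: int) -> CoreLocation:
    """Map a physical core id to its die and CCX.

    Hypothetical numbering: cores are assumed to be numbered contiguously,
    CCX by CCX, die by die."""
    global_ccx = core // CORES_PER_CCX
    return CoreLocation(die=global_ccx // CCX_PER_DIE, ccx=global_ccx % CCX_PER_DIE)


def shares_l3(a: int, b: int) -> bool:
    """Two cores share an 8 MB L3 slice only if they sit in the same CCX."""
    return locate(a) == locate(b)
```

Under this assumed numbering, cores 3 and 4 are adjacent by id but sit in different CCXes, so two chatty threads pinned to them would pay the cross-CCX penalty. On Linux, `os.sched_setaffinity` could be used to keep such threads inside one CCX once the real topology has been read from the system.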

Moving further up the chain, the complete EPYC chip is a Multi-Chip Module (MCM) containing 4 Zeppelin dies.

AMD made sure that each die is only one hop away from every other die, keeping the off-die latency as low as reasonably possible.

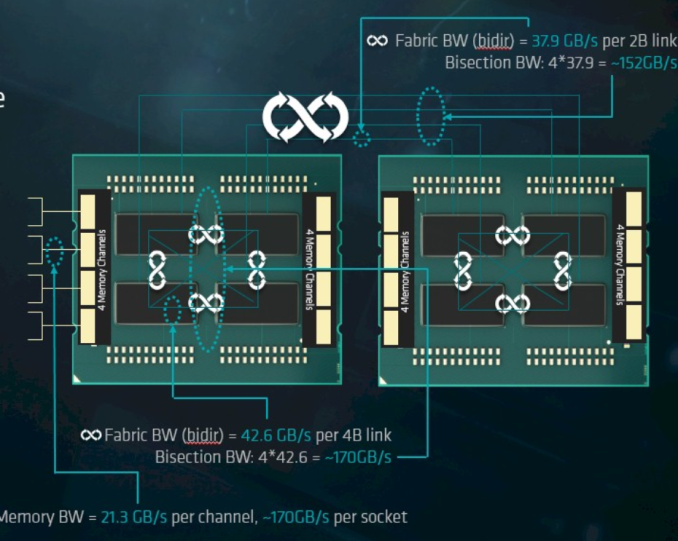

Meanwhile, scaling things up to their logical conclusion, we arrive at 2P configurations. A dual-socket EPYC setup is in fact a "virtual octal socket" NUMA system: two sockets with four dies each give eight NUMA nodes.
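One way to picture the "virtual octal socket" is as a graph of eight dies and to count fabric hops between them with a breadth-first search. This is a sketch under stated assumptions: the four dies in a package are fully connected (one hop apart, as described above), there are four socket-to-socket links (as described in the next paragraph), and, as a further hypothetical assumption, each of those links joins die i of one socket to die i of the other.

```python
from __future__ import annotations

from collections import deque
from itertools import combinations

DIES_PER_SOCKET = 4
SOCKETS = 2


def build_2p_die_graph() -> dict[int, set[int]]:
    """Adjacency sets for the 8 dies (NUMA nodes) of a hypothetical 2P EPYC.

    Node id = socket * DIES_PER_SOCKET + die. Assumes a full mesh inside each
    package and one cross-socket link per die pair (die i <-> die i)."""
    graph = {n: set() for n in range(SOCKETS * DIES_PER_SOCKET)}
    for socket in range(SOCKETS):
        base = socket * DIES_PER_SOCKET
        for a, b in combinations(range(base, base + DIES_PER_SOCKET), 2):
            graph[a].add(b)
            graph[b].add(a)
    for die in range(DIES_PER_SOCKET):
        a, b = die, DIES_PER_SOCKET + die
        graph[a].add(b)
        graph[b].add(a)
    return graph


def hops_from(graph: dict[int, set[int]], start: int) -> dict[int, int]:
    """Breadth-first search: minimum number of fabric hops to every node."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist
```

Under these assumptions, die 0 reaches the other dies in its package and its counterpart in the other socket in one hop, and the remaining three remote dies in two hops, so a 2P system sees more than one "remote" distance.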

AMD gave this "virtual octal socket" topology ample bandwidth to communicate. The two physical sockets are connected by four bidirectional interconnects, each consisting of 16 PCIe lanes. Each of these links runs at roughly 38 GB/s (19 GB/s in each direction).
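Summing the four links, using the approximate per-link figures above, gives the total socket-to-socket bandwidth:

```latex
\begin{aligned}
B_{\text{per direction}} &= 4 \times 19\ \text{GB/s} = 76\ \text{GB/s} \\
B_{\text{aggregate}}     &= 4 \times 38\ \text{GB/s} = 152\ \text{GB/s}
\end{aligned}
```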

So basically, AMD's topology is ideal for applications with many independently working threads, such as small VMs, HPC applications, and so on. It is less suited to applications that require a lot of data synchronization, such as transactional databases. In the latter case, the extra latency of exchanging data between dies, and even between CCXes, is going to have an impact relative to a traditional monolithic design.

219 Comments

tamalero - Tuesday, July 11, 2017

How is that different if AMD ran stuff that is extremely optimized for them?

Friendly0Fire - Tuesday, July 11, 2017

That's kinda the point? You want to benchmark the CPUs in optimal scenarios, since that's what you'd be looking at in practice. If one CPU's weakness is eliminated by using a more recent/tweaked compiler, then it's not a weakness.

coder543 - Tuesday, July 11, 2017

Rather, you want to test under practical scenarios. Very few people are going to be running 17.04 on production-grade servers; they will run an LTS release, which in this case is 16.04.

It would be good to have benchmarks from 17.04 as another point of comparison, but given how many things they didn't have time to do just using 16.04, I can understand why they didn't use 17.04.

Santoval - Wednesday, July 12, 2017

A compromise can be found by upgrading Ubuntu 16.04's outdated kernel. Ubuntu LTS releases include support for rolling HWE stacks, which are simple meta packages for installing newer kernels compiled, modified, tested and packaged by the Ubuntu Kernel Team and installed directly from the official Ubuntu repositories (not via a Launchpad PPA). With HWE, 16.04 LTS can install kernels up to the one shipped with 18.04 LTS.

I also use 16.04 LTS + HWE (it just requires installing the linux-generic-hwe-16.04 package), which currently provides the 4.8 kernel. There is even a "beta" version of HWE (the same package with -edge appended) for installing the 4.10 kernel (aka the kernel of 17.04) early; it will normally be released next month.

I just spotted various 4.10 kernel listings after checking in Synaptic, so they must have been added very recently. After that, there are two more scheduled kernel upgrades, as shown in the following link. Of course, HWE upgrades only the kernel; it does not upgrade any applications or user-level parts to a more recent version of Ubuntu.

https://wiki.ubuntu.com/Kernel/RollingLTSEnablemen...

CajunArson - Tuesday, July 11, 2017

Considering the similarities between RyZen and Haswell (that aren't coincidental at all), you are already seeing a highly optimized set of RyZen results.

But I have no problem seeing RyZen be tested with the newest distros, the only difference being that even Ubuntu 16.04 already has most of the optimizations for RyZen baked in.

coder543 - Tuesday, July 11, 2017

What similarities? They're extremely different architectures. I can't think of any obvious similarities. Between the CCX model, caches being totally different layouts, the infinity fabric, Intel having better AVX-256/512 stuff (IIRC), etc.

I don't think 16.04 is naturally any more optimized for Ryzen than it is for Skylake-SP.

CajunArson - Tuesday, July 11, 2017

Oh please, at the core level RyZen is a blatant copy-n-paste of Haswell, with the only exception being they just omitted half the AVX hardware to make their lives easier.

It's so obvious that if you followed any of the developer threads for people optimizing for RyZen, they say to just use the Haswell compiler optimizations, which actually work better than the official RyZen optimization flags.

ddriver - Tuesday, July 11, 2017

Can't tell if this post is funny or sad.

CajunArson - Tuesday, July 11, 2017

It's neither: it's accurate.

Don't believe me? Look at the differences in performance of the holy 1800X over multiple Linux distros ranging from pretty new (OpenSuse Tumbleweed) to pretty old (Fedora 23 from 2015): http://www.phoronix.com/scan.php?page=article&...

Nowhere near the variation that we see with Skylake X, since Haswell was already a solved problem long before RyZen launched.

coder543 - Tuesday, July 11, 2017

Right, of course. Ryzen is a copy-and-paste of Haswell.

Don't make me laugh.