Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

Apache Spark 2.1 Benchmarking

Apache Spark is the poster child of Big Data processing. Speeding up Big Data applications is the top-priority project at the university lab I work for (Sizing Servers Lab of the University College of West-Flanders), so we produced a benchmark that uses many of the Spark features and is based upon real-world usage.

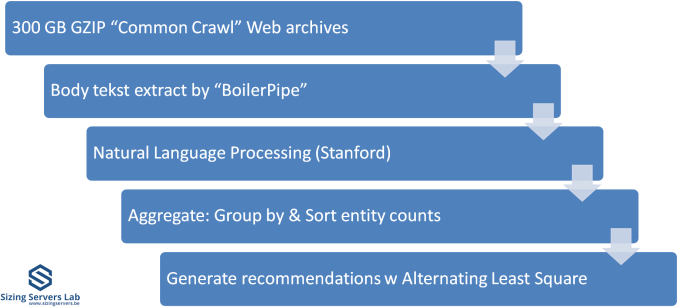

The test is described in the graph above. We start with 300 GB of compressed data gathered from the CommonCrawl. These compressed files hold a large collection of web archives. We decompress the data on the fly to avoid a long wait that is mostly storage related. We then extract the meaningful text data out of the archives by using the Java library "BoilerPipe". Using the Stanford CoreNLP Natural Language Processing Toolkit, we extract entities ("words that mean something") out of the text, and then count which URLs have the highest occurrence of these entities. The Alternating Least Squares algorithm is then used to recommend which URLs are the most interesting for a certain subject.
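To make the data flow concrete, here is a minimal single-machine sketch in plain Python. It is not the benchmark's code: `extract_text` and `extract_entities` are hypothetical stand-ins for BoilerPipe and CoreNLP (a tag stripper and a capitalized-word matcher), the input records are invented, and the Alternating Least Squares recommendation step is left out entirely.

```python
import gzip
import re
from collections import Counter


def extract_text(html):
    """Crude stand-in for BoilerPipe: drop tags and collapse whitespace."""
    no_tags = re.sub(r"<[^>]+>", " ", html)
    return re.sub(r"\s+", " ", no_tags).strip()


def extract_entities(text):
    """Crude stand-in for CoreNLP: treat capitalized words as entities."""
    return re.findall(r"\b[A-Z][a-z]+\b", text)


def count_entities(records):
    """Count entity occurrences per URL.

    records: iterable of (url, gzip-compressed HTML bytes).
    Returns a dict mapping entity -> Counter({url: occurrences}).
    """
    counts = {}
    for url, blob in records:
        # Decompress in memory ("on the fly") instead of unpacking to disk.
        html = gzip.decompress(blob).decode("utf-8")
        for entity in extract_entities(extract_text(html)):
            counts.setdefault(entity, Counter())[url] += 1
    return counts


def top_urls(counts, entity, n=3):
    """Return the n URLs where the entity occurs most often."""
    return counts.get(entity, Counter()).most_common(n)


if __name__ == "__main__":
    # Invented sample pages; the real input is 300 GB of CommonCrawl archives.
    records = [
        ("http://a.example/", gzip.compress(b"<p>Intel ships Xeon. Intel again.</p>")),
        ("http://b.example/", gzip.compress(b"<div>Xeon, Xeon and more Xeon</div>")),
    ]
    print(top_urls(count_entities(records), "Xeon", 2))
```

In a Spark job each of these steps would presumably run as a distributed transformation over partitions of the archive set; the sketch only shows the order of operations.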

In previous articles, we tested with Spark 1.5 in standalone mode (non-clustered). That worked out well enough, but we saw diminishing returns as core counts went up. In hindsight, just dumping 300 GB of compressed data in one JVM was not optimal for 30+ core systems. The high core counts of the Xeon 8176 and EPYC 7601 caused serious performance issues when we first continued to test this way. The 64-core EPYC 7601 performed like a 16-core Xeon, and the Skylake-SP system with 56 cores was hardly better than a 24-core Xeon E5 v4.

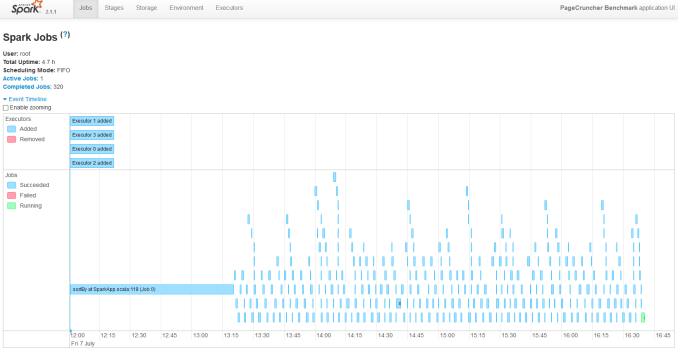

So we decided to turn our newest servers into virtual clusters. Our first attempt is to run with 4 executors. Researcher Esli Heyvaert also upgraded our Spark benchmark so it could run on the latest and greatest version: Apache Spark 2.1.1.
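The article does not say how the four executors were sized, so the following is only a sketch of one plausible approach: dividing a machine's cores and memory evenly. The function name `executor_settings` and the example figures passed to it are assumptions for illustration; the property names `spark.executor.cores`, `spark.executor.memory` and `spark.cores.max` are standard Spark configuration keys.

```python
def executor_settings(total_cores, total_mem_gb, executors=4):
    """Evenly divide cores and memory across executors (illustrative only)."""
    if executors <= 0:
        raise ValueError("executors must be positive")
    cores_each = total_cores // executors
    mem_each = total_mem_gb // executors
    if cores_each == 0 or mem_each == 0:
        raise ValueError("not enough resources for that many executors")
    return {
        "spark.executor.cores": str(cores_each),
        "spark.executor.memory": f"{mem_each}g",
        # Cap the application at the cores actually handed to executors.
        "spark.cores.max": str(cores_each * executors),
    }


if __name__ == "__main__":
    # Hypothetical inputs: 64 cores and 120 GB to share among 4 executors.
    for key, value in executor_settings(64, 120).items():
        print(f"--conf {key}={value}")
```

Splitting the work this way keeps each JVM heap smaller than one giant heap would be, which is one common reason to prefer several executors on a many-core box.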

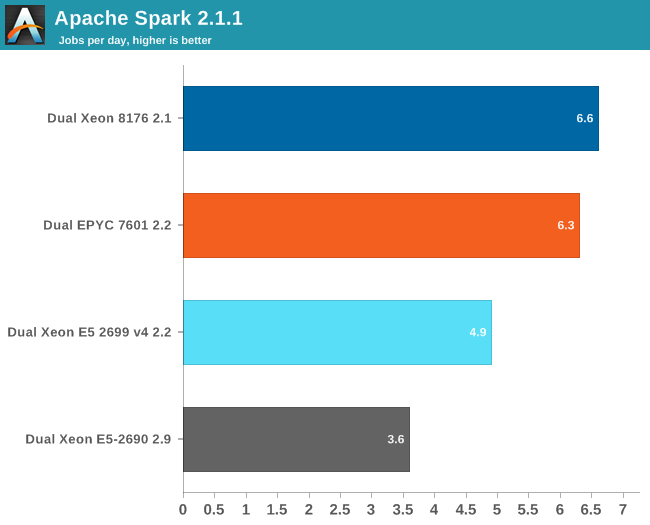

Here are the results:

If you wonder who needs such server behemoths besides the people who virtualize a few dozen virtual machines, the answer is Big Data. Big Data crunching has an insatiable hunger for (mostly integer) processing power. Even on our fastest machine, this test needs about 4 hours to finish. It is nothing less than a killer app.

Our Spark benchmark needs about 120 GB of RAM to run. The time spent on storage I/O is negligible. Data processing is very parallel, but the shuffle phases require a lot of memory interaction. The ALS phase does not scale well over many threads, but is less than 4% of the total testing time.
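A quick back-of-the-envelope check shows why a poorly scaling phase that takes under 4% of the run matters little. The sketch below applies the usual Amdahl-style formula to a single phase; the function names are made up for illustration, and 0.04 is used as the upper limit quoted above.

```python
def max_gain_from_phase(phase_fraction):
    """Upper bound on overall speedup if one phase took zero time."""
    if not 0.0 <= phase_fraction < 1.0:
        raise ValueError("phase_fraction must be in [0, 1)")
    return 1.0 / (1.0 - phase_fraction)


def total_speedup(phase_fraction, phase_speedup):
    """Overall speedup when only one phase is accelerated by phase_speedup."""
    if phase_speedup <= 0:
        raise ValueError("phase_speedup must be positive")
    return 1.0 / ((1.0 - phase_fraction) + phase_fraction / phase_speedup)


if __name__ == "__main__":
    # Even an infinitely fast ALS phase would shorten the run by about 4%.
    print(f"{max_gain_from_phase(0.04):.4f}")
    # Doubling ALS speed alone changes the total by roughly 2%.
    print(f"{total_speedup(0.04, 2.0):.4f}")
```

In other words, the benchmark's outcome is decided almost entirely by the parallel processing and shuffle phases.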

Given the higher clock speed in the lightly threaded and single-threaded parts, the faster shuffle phase probably gives the Intel chip an edge of only about 5%.

219 Comments


Panxa - Sunday, July 16, 2017 - link

"Competition has spoiled the naming convention Intels 14 === competetions 7 or 10"

The node naming convention used to be the gate length, however that has become irrelevant. Intel 14 nm gate length is about 1.5x and 10 nm about 1.8x. Companies and organizations have developed quite accurate models to assess process density, with equations based on process parameters like CPP and MPP, to what they call a "standard node".

"Intel used to maintain 2 year lead now grew that to 3-4year lead"

Don't believe Intel propaganda. Intel takes the lead in 2014 with their 14nm process with a standard node value of 12.1. Samsung and then TSMC take the lead in 2017 with their 10nm processes having standard node values of 11.2 and 10.3 respectively. Intel will retake the lead when they deliver their 10nm process with a standard node value of 8.3. However, it will be a short-lived lead: TSMC will retake the lead with their 7nm with a standard node of 7.9, before GLOBALFOUNDRIES takes the lead in 2018 with their 7nm process with a standard node value of 7.8. The gap is gone!!!

"yet their revenue profits grow year over year"

Wrong. Intel's revenue over the last few years has remained fairly constant:

2011 grow

2012 decline

2013 decline

2014 grow

2015 decline

2016 grow

All in all, from 2011 to 2016 revenue went from $54 billion to $59 billion. If we take inflation into account, $54 billion in 2011 is worth $58.70 billion today.

Not to mention that Samsung has overtaken Intel to become the world's No. 1 semiconductor company, and that a "pure play" foundry like TSMC has surpassed Intel in market cap.

johnp_ - Wednesday, July 12, 2017 - link

The Xeon Bronze Table on Page 7 seems to have an error. It lists the 4112 as having 5.50MB L3, but ark says it has 8.25MB, just like the 3104, so it looks like it has an above-average L3/Core: https://ark.intel.com/products/123551

Ian Cutress - Friday, July 14, 2017 - link

I've got Intel documents from our briefings that say it has the regular 1.375MB/core allocation, and others saying it has 8.25MB. I'm double checking.

johnp_ - Friday, July 21, 2017 - link

All commercial listings and most reviews I've seen online show the processor with 8.25MB as well. Do you have any further information from Intel?

pepoluan - Wednesday, July 12, 2017 - link

What I'm dying to know: Performance when running as virtualization host. Using Xen, VMware, and Hyper-V.

Threska - Saturday, July 22, 2017 - link

Virtualization itself, and more importantly virtualization security.

Sparkyman215 - Wednesday, July 12, 2017 - link

Typo here: Intel will seven different versions of the chipset, varying in 10G and QAT support, but also varying in TDP:

tmbm50 - Wednesday, July 12, 2017 - link

One thing to consider when considering value is the Microsoft Server 2016 core tax.....assuming your mission critical apps are still tied to MS ;-)

Server 2016 now charges per core, with an 8-core socket as the base. The Windows license for a 32-core server is NUTS.

I'm surprised AMD and Intel are not pushing Microsoft on this. For datacenters like ours, it's pushing us to 8-core SKUs with more 2U nodes.

msroadkill612 - Wednesday, July 12, 2017 - link

Aye, it's a funny world, lad.

The way the automobile panned out differently in different countries was largely due to fuel tax regimes, rather than technology.

i.e. what is the best way to cheat a bit on the incumbent tax rules of germany/france/uk vs a more laissez faire USA. In UK, u were taxed on horsepower, but u could cheat a bit w/ hi revs & more gears - that sort of thing.

rahvin - Wednesday, July 12, 2017 - link

Who runs any Windows service on bare metal these days? If you haven't virtualized your Windows servers running on KVM, you should.