Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST - Posted in CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

Floating Point

Normally our HPC benchmarking is centered around OpenFOAM, a CFD package we have used for a number of articles over the years. However, since we moved to Ubuntu 16.04, we have not been able to get it to work. So we decided to change our floating point intensive benchmarks for now. For this article, we're testing with C-ray, POV-Ray, and NAMD.

The idea is to measure:

- An FP benchmark that runs out of the L1 (C-ray)

- An FP benchmark that runs out of the L2 (POV-Ray)

- And one that uses the memory subsystem quite often (NAMD)

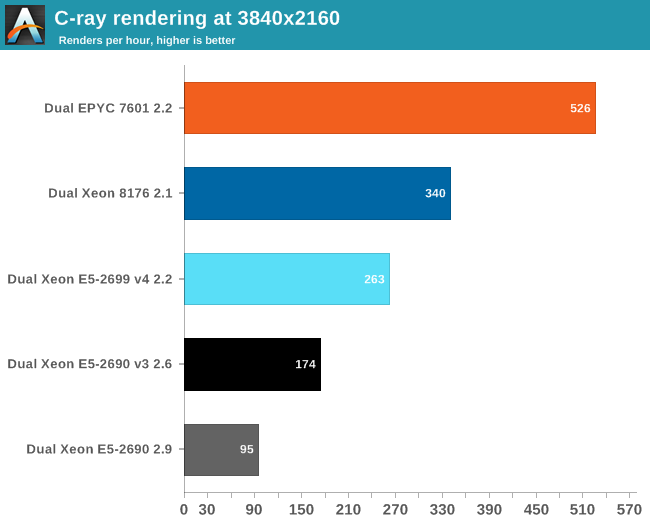

Floating Point: C-ray

C-ray is an extremely simple ray-tracer which is not representative of any real world raytracing application. In fact, it is essentially a floating point benchmark that runs out of the L1-cache. Luckily it is not as synthetic and meaningless as Whetstone, as you can actually use the software to do simple raytracing.

We use the standard benchmarking resolution (3840x2160) and the "sphfract" file to measure performance. The binary was precompiled.

Wow. What just happened? It looks like a landslide victory for the raw power of the four FP pipes of Zen: the EPYC chip is no less than 50% faster than the competition. Of course, it is easy to feed FP units if everything resides in the L1. Next stop, POV-Ray.

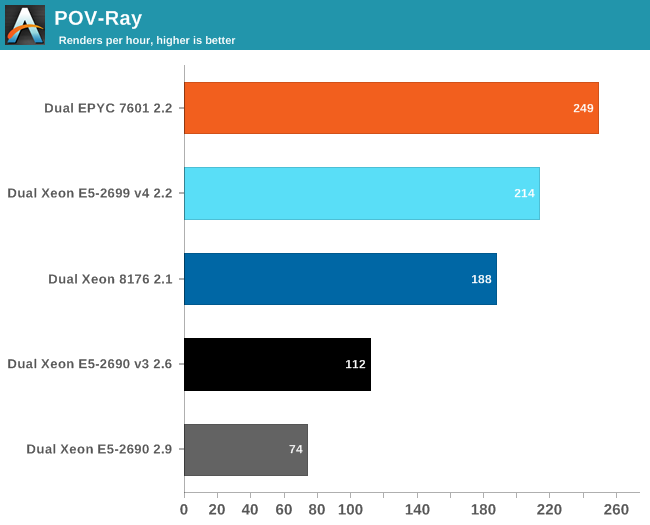

Floating Point: POV-Ray 3.7

POV-Ray is known to run mostly out of the L2-cache, so the massive DRAM bandwidth of the EPYC CPU does not play a role here. Nevertheless, the EPYC CPU performance is pretty stunning: about 16% faster than Intel's Xeon 8176. But what if AVX and DRAM access come into play? Let us check out NAMD.

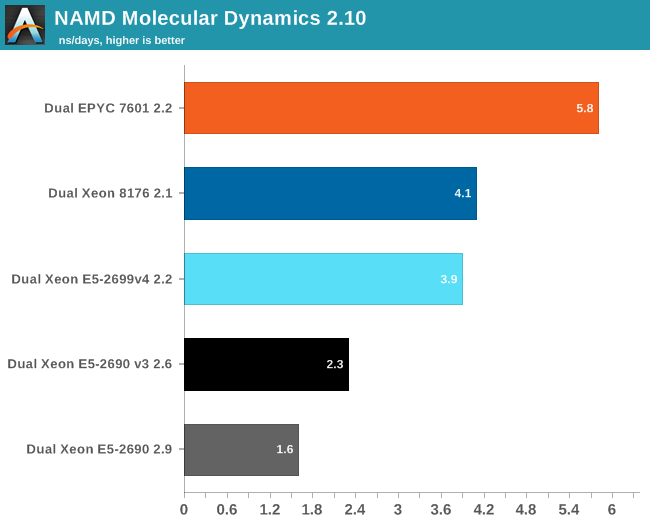

Floating Point: NAMD

Developed by the Theoretical and Computational Biophysics Group at the University of Illinois at Urbana-Champaign, NAMD is a set of parallel molecular dynamics codes for extreme parallelization on thousands of cores. NAMD is also part of SPEC CPU2006 FP. In contrast with the previous FP benchmarks, the NAMD binary is compiled with Intel ICC and optimized for AVX.

First, we used the "NAMD_2.10_Linux-x86_64-multicore" binary. We used the most popular benchmark load, apoa1 (Apolipoprotein A1). The results are expressed in simulated nanoseconds per wall-clock day. We measure at 500 steps.
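For readers who want to reproduce the metric, the conversion from time per step to nanoseconds per day is simple arithmetic. The sketch below is an illustration only, not part of NAMD: the function name `ns_per_day` is made up, and the timestep values in the example are assumptions, so check the `timestep` setting in the configuration file you actually run.

```python
SECONDS_PER_DAY = 86_400
FS_PER_NS = 1_000_000  # 1 nanosecond = 10^6 femtoseconds


def ns_per_day(seconds_per_step: float, timestep_fs: float) -> float:
    """Convert wall-clock seconds per simulation step into simulated ns/day.

    seconds_per_step: measured wall-clock time for one MD step.
    timestep_fs: simulated time covered by one step, in femtoseconds
                 (an assumption here; read it from your own config).
    """
    if seconds_per_step <= 0 or timestep_fs <= 0:
        raise ValueError("both arguments must be positive")
    steps_per_day = SECONDS_PER_DAY / seconds_per_step
    return steps_per_day * timestep_fs / FS_PER_NS


if __name__ == "__main__":
    # Hypothetical measurement, not a result from this article.
    print(f"{ns_per_day(0.0432, 2.0):.2f} ns/day")
```

Higher is better with this metric, so a chip that halves the time per step doubles the score.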

Again, the EPYC 7601 simply crushes the competition with 41% better performance than Intel's 28-core. Heavily vectorized code (like Linpack) might run much faster on Intel, but other FP code seems to run faster on AMD's newest FPU.

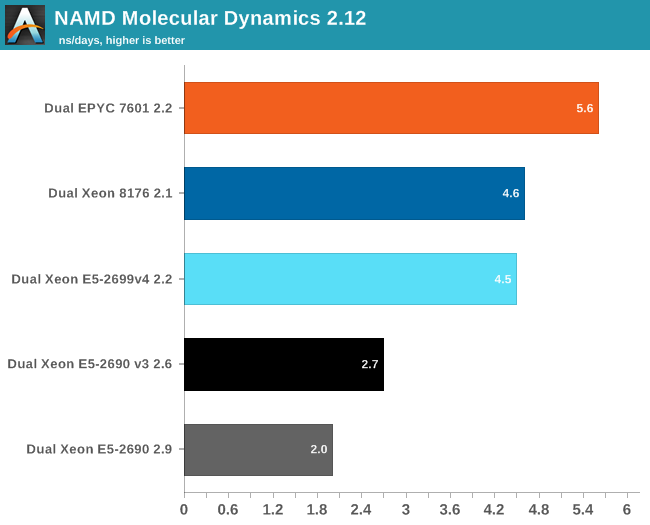

For our first shot with this benchmark, we used version 2.10 to be able to compare to our older data set. Version 2.12 seems to make better use of AVX on Intel hardware: "Intel's compiler vectorization and auto-dispatch has improved performance for Intel processors supporting AVX instructions". So let's try again:

The older Xeons see a performance boost of about 25%. The improvement on the new Xeons is a lot lower: about 13-15%. Remarkably, the new binary is about 4% slower on the EPYC 7601. That simply begs for more investigation, but the deadline was too close. Nevertheless, three different FP tests all point in the same direction: the Zen FP unit might not have the highest "peak FLOPs" in theory, but there is lots of FP code out there that runs best on EPYC.
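The percentages quoted for the two NAMD versions are relative changes in a higher-is-better score. A minimal sketch of that calculation follows; the helper name `percent_change` and the sample scores are hypothetical and are not taken from our charts.

```python
def percent_change(old_score: float, new_score: float) -> float:
    """Relative change from old_score to new_score, in percent.

    Positive means the new binary is faster (for a higher-is-better
    metric such as ns/day); negative means it is slower.
    """
    if old_score <= 0:
        raise ValueError("old_score must be positive")
    return (new_score - old_score) / old_score * 100.0


if __name__ == "__main__":
    # Hypothetical ns/day scores for illustration only.
    print(f"{percent_change(1.00, 1.25):+.1f}%")  # a gain
    print(f"{percent_change(1.00, 0.96):+.1f}%")  # a regression
```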

219 Comments

TheOriginalTyan - Tuesday, July 11, 2017 - link

Another nicely written article. This is going to be a very interesting next couple of months.

coder543 - Tuesday, July 11, 2017 - link

I'm curious about the database benchmarks. It sounds like the database is tiny enough to fit into L3? That seems like a... poor benchmark. Real world databases are gigabytes _at best_, and AMD's higher DRAM bandwidth would likely play to their favor in that scenario. It would be interesting to see different sizes of transactional databases tested, as well as some NoSQL databases.

psychobriggsy - Tuesday, July 11, 2017 - link

I wrote stuff about the active part of a larger database, but someone's put a terrible spam blocker on the comments system.

Regardless, if you're buying 64C systems to run a DB on, you likely will have a dataset larger than L3, likely using a lot of the actual RAM in the system.

roybotnik - Wednesday, July 12, 2017 - link

Yea... we use about 120GB of RAM on the production DB that runs our primary user-facing app. The benchmark here is useless.

haplo602 - Thursday, July 13, 2017 - link

I do hope they elaborate on the DB benchmarks a bit more or do a separate article on it. Since this is a CPU article, I can see the point of using a small DB to fit into the cache, however that is useless as an actual DB test. It's more an int/IO test.

I'd love to see a larger DB tested that can fit into the DRAM but is larger than available caches (32GB maybe?).

ddriver - Tuesday, July 11, 2017 - link

We don't care about real world workloads here. We care about making intel look good. Well... at this point it is pretty much damage control. So let's lie to people that intel is at least better in one thing.

Let me guess, the database size was carefully chosen to NOT fit in a ryzen module's cache, but small enough to fit in intel's monolithic die cache?

Brought to you by the self proclaimed "Most Trusted in Tech Since 1997" LOL

Ian Cutress - Tuesday, July 11, 2017 - link

I'm getting tweets saying this is a severely pro AMD piece. You are saying it's anti-AMD. ¯\_(ツ)_/¯

ddriver - Tuesday, July 11, 2017 - link

Well, it is hard to please intel fanboys regardless of how much bias you give intel, considering the numbers.

I did not see you deny my guess on the database size, so presumably it is correct then?

ddriver - Tuesday, July 11, 2017 - link

In the multicore 464.h264ref test we have 2670 vs 2680 for the xeon and epyc respectively. Considering that the epyc score is mathematically higher, how does it yield a negative zero?

Granted, the difference is a mere 0.3% advantage for epyc, but it is still a positive number.

Headley - Friday, July 14, 2017 - link

I thought the exact same thing