Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

Memory Subsystem: Latency

The performance of modern CPUs depends heavily on the cache subsystem, and some applications depend heavily on the DRAM subsystem as well. We used LMBench to measure cache and memory latency. The numbers we looked at were "Random load latency stride=16 Bytes".
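Random load latency tests of this kind are commonly built on a chain of dependent loads, where the address of each load comes from the result of the previous one, so the hardware cannot overlap or prefetch them. The sketch below illustrates only that access pattern; it is not LMBench's code, and the names `build_chase_ring`, `chase` and `ns_per_load` are hypothetical. In Python the interpreter overhead dwarfs the cache latency, so the timings it produces are not comparable to the numbers in this article.

```python
import random
import time


def build_chase_ring(n_slots, seed=0):
    """Return a list where ring[i] is the next slot to visit.

    Sattolo's algorithm produces a permutation with exactly one cycle,
    so a walk touches every slot once before returning to the start.
    """
    rng = random.Random(seed)
    ring = list(range(n_slots))
    for i in range(n_slots - 1, 0, -1):
        j = rng.randrange(i)  # 0 <= j < i, never i itself
        ring[i], ring[j] = ring[j], ring[i]
    return ring


def chase(ring, n_loads):
    """Issue n_loads dependent loads: each index comes from the previous load."""
    idx = 0
    for _ in range(n_loads):
        idx = ring[idx]
    return idx


def ns_per_load(ring, n_loads):
    """Average wall-clock time per load in nanoseconds (includes interpreter overhead)."""
    start = time.perf_counter_ns()
    chase(ring, n_loads)
    return (time.perf_counter_ns() - start) / n_loads
```

Growing `n_slots` until the ring no longer fits in a given cache level is what produces the latency steps that such a benchmark reports.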

| Mem Hierarchy | AMD EPYC 7601 DDR4-2400 | Intel Skylake-SP DDR4-2666 | Intel Broadwell Xeon E5-2699v4 DDR4-2400 |
| --- | --- | --- | --- |
| L1 Cache cycles | 4 | 4 | 4 |
| L2 Cache cycles | 12 | 14-22 | 12-15 |
| L3 Cache 4-8 MB - cycles | 34-47 | 54-56 | 38-51 |
| 16-32 MB - ns | 89-95 ns | 25-27 ns (+/- 55 cycles?) | 27-42 ns (+/- 47 cycles) |
| Memory 384-512 MB - ns | 96-98 ns | 89-91 ns | 95 ns |
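The table mixes cycles and nanoseconds, and the two are related only through the core clock. As a rough sketch of the conversion: the 2.1 GHz value below is an assumption chosen because it reproduces the "25-27 ns, about 55 cycles" annotation; this section does not state the clock at which the measurement ran.

```python
def ns_to_cycles(latency_ns, clock_ghz):
    """Convert a latency in nanoseconds to core cycles (1 GHz = 1 cycle per ns)."""
    return latency_ns * clock_ghz


def cycles_to_ns(cycles, clock_ghz):
    """Convert a latency in core cycles to nanoseconds."""
    return cycles / clock_ghz


ASSUMED_CLOCK_GHZ = 2.1  # assumption, not taken from the article

# Midpoint of the 25-27 ns range from the table.
approx_cycles = ns_to_cycles(26.0, ASSUMED_CLOCK_GHZ)
```

Because the result scales linearly with the clock, the cycle figures in the table are only as accurate as the clock assumed when converting them.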

Previously, Ian has described the AMD Infinity Fabric that stitches the two CCXes together in one die and interconnects the four "Zeppelin" dies in one MCM. The choice of using two CCXes in a single die is certainly not optimal for Naples. The local "inside the CCX" 8 MB L3-cache is accessed with very little latency. But once a core needs to access another L3-cache chunk, even one on the same die, unloaded latency is pretty bad: it's only slightly better than the DRAM access latency. Accessing DRAM is a naturally high-latency operation on all modern CPUs: signals have to travel from the memory controller over the memory bus, and the internal memory matrix of DDR4-2666 DRAM only runs at 333 MHz (hence the very high CAS latencies of DDR4). So it is surprising that accessing SRAM over an on-chip fabric requires so many cycles.
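The 333 MHz figure follows from how DDR4 is clocked: two transfers per I/O clock, and an 8n prefetch that lets the internal array run at one eighth of the transfer rate. A small sketch of that arithmetic is below. The function names are hypothetical, and the CL19 timing is an illustrative assumption, not the timing of the DIMMs used in our test systems.

```python
DDR4_PREFETCH = 8  # DDR4 fetches 8 words per internal array access (8n prefetch)


def dram_clocks_mhz(transfer_rate_mts):
    """Return (I/O clock, internal array clock) in MHz for a DDR4 transfer rate in MT/s."""
    io_clock = transfer_rate_mts / 2  # double data rate: two transfers per I/O clock
    array_clock = transfer_rate_mts / DDR4_PREFETCH
    return io_clock, array_clock


def cas_latency_ns(cl_cycles, transfer_rate_mts):
    """CAS latency in nanoseconds; CL is counted in I/O clock cycles."""
    io_clock_mhz, _ = dram_clocks_mhz(transfer_rate_mts)
    return cl_cycles / io_clock_mhz * 1000.0


io_mhz, array_mhz = dram_clocks_mhz(2666)  # DDR4-2666
example_cas_ns = cas_latency_ns(19, 2666)  # CL19 is an assumed, illustrative timing
```

With these inputs the array clock comes out at roughly 333 MHz, and a CL in the high teens translates to a column access time in the region of 14 ns before any controller or fabric overhead is added.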

What does this mean to the end user? The 64 MB L3 on the spec sheet does not really exist. In fact, even the 16 MB L3 on a single Zeppelin die consists of two 8 MB L3-caches. There is no cache that truly functions as a single, unified L3-cache on the MCM; instead there are eight separate 8 MB L3-caches.
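The arithmetic behind that statement can be written out as a short sketch. The constants come from the description above (four dies, two CCXes per die, 8 MB of L3 per CCX); the helper names `l3_summary` and `fits_local_l3` are hypothetical, and the check deliberately ignores everything except capacity.

```python
DIES_PER_MCM = 4   # "Zeppelin" dies in one EPYC package
CCX_PER_DIE = 2    # core complexes per die
L3_PER_CCX_MB = 8  # local L3 slice per CCX


def l3_summary():
    """Break the spec-sheet L3 figure down into its separate slices."""
    slices = DIES_PER_MCM * CCX_PER_DIE
    return {
        "slices": slices,
        "per_die_mb": CCX_PER_DIE * L3_PER_CCX_MB,
        "total_mb": slices * L3_PER_CCX_MB,
    }


def fits_local_l3(working_set_mb):
    """True if a thread's working set fits within its own CCX's L3 slice."""
    return working_set_mb <= L3_PER_CCX_MB
```

A 6 MB working set stays inside one slice and sees the low local latency, while a 24 MB working set has to reach into other slices even though it is well below the 64 MB on the spec sheet.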

That will work out fine for applications whose footprint fits within a single 8 MB L3 slice, such as virtual machines (JVMs and hypervisor-based VMs) and HPC/Big Data applications that work on separate chunks of data in parallel (for example, the "map" phase of "map/reduce"). However, this kind of setup will definitely hurt the performance of applications that need "central" access to one big data pool, such as database applications and big data applications in the "shuffle" phase.

Memory Subsystem: TinyMemBench

To double check our latency measurements and get a deeper understanding of the respective architectures, we also use the open source TinyMemBench benchmark. The source was compiled for x86 with GCC 5.4 and the optimization level was set to "-O3". The measurement is described well by the manual of TinyMemBench:

Average time is measured for random memory accesses in the buffers of different sizes. The larger the buffer, the more significant the relative contributions of TLB, L1/L2 cache misses, and DRAM accesses become. All the numbers represent extra time, which needs to be added to L1 cache latency (4 cycles).

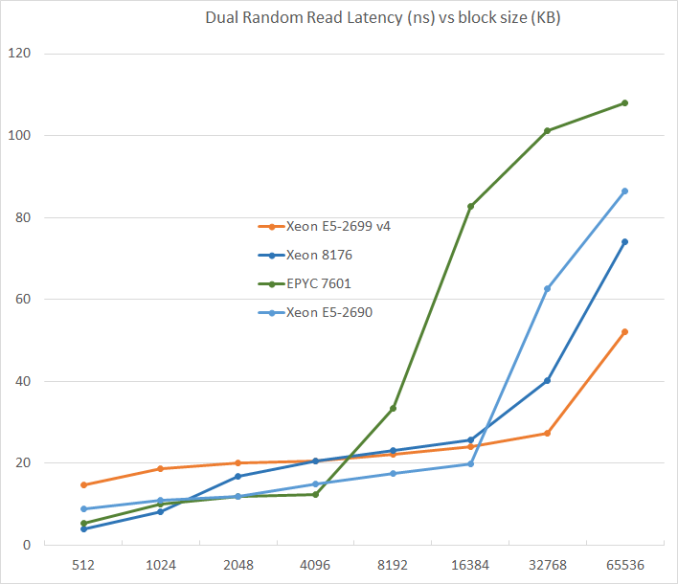

We tested with dual random read, as we wanted to see how the memory system coped with multiple read requests.

L3-cache sizes have increased steadily over the years. The Xeon E5 v1 had up to 20 MB, v3 came with 45 MB, and v4 "Broadwell EP" further increased this to 55 MB. But the fatter the cache, the higher the latency became. L3 latency doubled from Sandy Bridge-EP to Broadwell-EP. So it is no wonder that Skylake went for a larger L2-cache and a smaller but faster L3. The L2-cache offers 4 times lower latency at 512 KB.

AMD's unloaded latency is very competitive under 8 MB, and is a vast improvement over previous AMD server CPUs. Unfortunately, accessing more than 8 MB incurs worse latency than a Broadwell core accessing DRAM. Due to the slow L3-cache access, AMD's DRAM access is also the slowest. The importance of unloaded DRAM latency should of course not be exaggerated: in most applications, most of the loads are served from the caches. Still, it is bad news for applications with pointer chasing or other latency-sensitive operations.

219 Comments

extide - Tuesday, July 11, 2017 - link

PCPer made this same mistake -- Nehalem/Westmere used a crossbar memory bus -- not a ringbus. Only Nehalem/Westmere EX used the ringbus (the 6500/7500 series). The i7 and Xeon 5500 and 5600 series used the crossbar.

extide - Tuesday, July 11, 2017 - link

Sandy Bridge brought the ringbus down to Xeon EP and client chips.

Yorgos - Tuesday, July 11, 2017 - link

"With the complexity of both server hardware and especially server software, that is very little time. There is still a lot to test and tune, but the general picture is clear."

No wonder why we see ubuntu and ancient versions of gcc and the rest of the s/w stack.

Imagine if you tried to use debian or rhel, it would take you decades to get the review.

eligrey - Tuesday, July 11, 2017 - link

Why did you omit the Turbo frequencies for the Xeon Gold 6146 and 6144?

Intel ARK says that the 6146's turbo frequency is 4.2GHz and the 6144's is 4.5GHz.

eligrey - Tuesday, July 11, 2017 - link

Oops, I mean 4.2GHz for both.

boozed - Tuesday, July 11, 2017 - link

Need more Skylake-SP SKUs

rHardware - Tuesday, July 11, 2017 - link

For the purley system, It's listed that you used Chipset Intel Wellsburg B0

This information cannot be correct. Lewisburg Chipset is the name of the purley chipset. Also, B0 stepping lewisburg also wouldn't boot with the stepping of CPU you have.

rHardware - Tuesday, July 11, 2017 - link

That 0200011 microcode is also very old.

Rickyxds - Tuesday, July 11, 2017 - link

I'am a brazilian processors enthusiast and I'am very critic about intel and AMD processors, between 2012 and Q1 2017 AMD just doesn't existed, who bought AMD on that years, bougth just for love AMD and just it, doesn't for the price, doesn't for the high core count, doesn't for AMD is red, AMD was the worst performance processors. The A9 Apple dual core performance is better than FX 8150.

But now I am very surprise with the aggressive AMD prices. No one here Imagined get the Ryzen 7 performance for less than $500. And I don't know if this scenario brings profit to AMD, but for the image against the intel it's wonderful.

On the next years we will see.

krumme - Tuesday, July 11, 2017 - link

Thank you for quality stuff article especially given the short time. So thank you for booting up Johan !

Interesting and surpricing results.