Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST- Posted in

- CPUs

- AMD

- Intel

- Xeon

- Enterprise

- Skylake

- Zen

- Naples

- Skylake-SP

- EPYC

Apache Spark 2.1 Benchmarking

Apache Spark is the poster child of Big Data processing. Speeding up Big Data applications is the top priority project at the university lab I work for (Sizing Servers Lab of the University College of West-Flanders), so we produced a benchmark that uses many of the Spark features and is based upon real world usage.

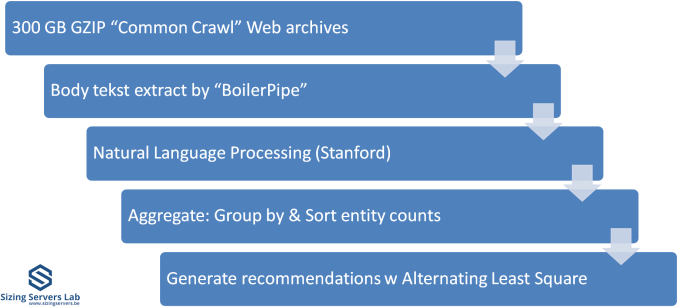

The test is described in the graph above. We first start with 300 GB of compressed data gathered from the CommonCrawl. These compressed files are a large amount of web archives. We decompress the data on the fly to avoid a long wait that is mostly storage related. We then extract the meaningful text data out of the archives by using the Java library "BoilerPipe". Using the Stanford CoreNLP Natural Language Processing Toolkit, we extract entities ("words that mean something") out of the text, and then count which URLs have the highest occurrence of these entities. The Alternating Least Square algorithm is then used to recommend which URLs are the most interesting for a certain subject.

In previous articles, we tested with Spark 1.5 in standalone mode (non-clustered). That worked out well enough, but we saw diminishing returns as core counts went up. In hindsight, just dumping 300 GB of compressed data in one JVM was not optimal for 30+ core systems. The high core counts of the Xeon 8176 and EPYC 7601 caused serious performance issues when we first continued to test this way. The 64 core EPYC 7601 performed like a 16-core Xeon, the Skylake-SP system with 56 cores was hardly better than a 24-core Xeon E5 v4.

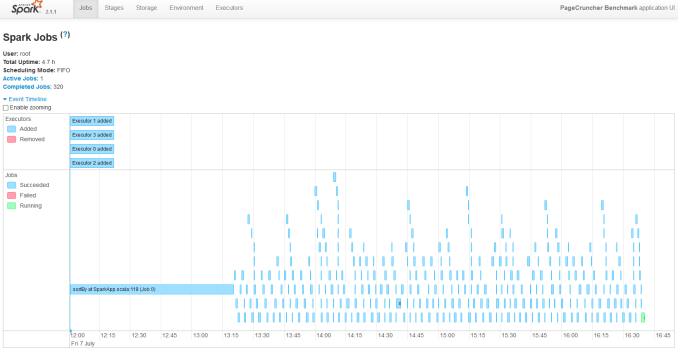

So we decided to turn our newest servers into virtual clusters. Our first attempt is to run with 4 executors. Researcher Esli Heyvaert also upgraded our Spark benchmark so it could run on the latest and greatest version: Apache Spark 2.1.1.

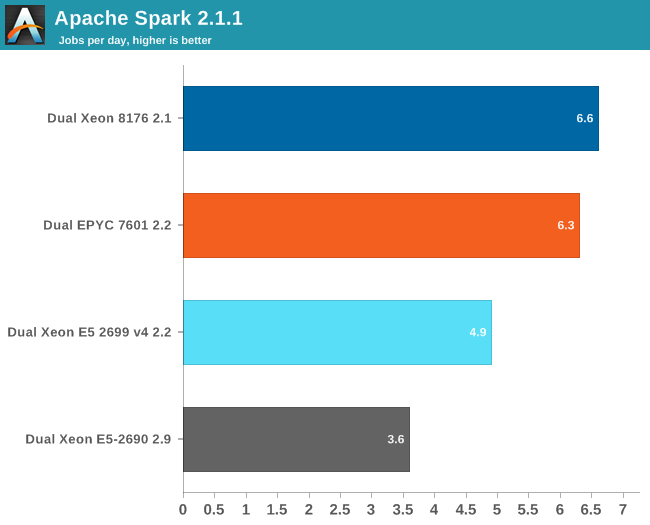

Here are the results:

If you wonder who needs such server behemoths besides the people who virtualize a few dozen virtual machines, the answer is Big Data. Big Data crunching has an unsatisfiable hunger for – mostly integer – processing power. Even on our fastest machine, this test needs about 4 hours to finish. It is nothing less than a killer app.

Our Spark benchmark needs about 120 GB of RAM to run. The time spent on storage I/O is negligible. Data processing is very parallel, but the shuffle phases require a lot of memory interaction. The ALS phase does not scale well over many threads, but is less than 4% of the total testing time.

Given the higher clockspeed in lightly threaded and single threaded parts, the faster shuffle phase probably gives the Intel chip an edge of only about 5%.

219 Comments

View All Comments

Shankar1962 - Wednesday, July 12, 2017 - link

AMD is fooling everyone one by showing more cores, pci lanes, security etcCan someone explain me why GOOGLE ATT AWS ALIBABA etc upgraded to sky lake when AMD IS SUPERIOR FOR HALF THE PRICE?

Shankar1962 - Wednesday, July 12, 2017 - link

Sorry its BaiduPretty sure Alibaba will upgrade

https://www.google.com/amp/s/seekingalpha.com/amp/...

PixyMisa - Thursday, July 13, 2017 - link

Lots of reasons.1. Epyc is brand new. You can bet that every major server customer has it in testing, but it could easily be a year before they're ready to deploy.

2. Functions like ESXi hot migration may not be supported on Epyc yet, and certainly not between Epyc and Intel.

3. Those companies don't pay the same prices we do. Amazon have customised CPUs for AWS - not a different die, but a particular spec that isn't on Intel's product list.

There's no trick here. This is what AMD did before, back in 2006.

blublub - Tuesday, July 11, 2017 - link

I kinda miss Infinity Fabric on my Haswell CPU and it seems to only have on die - so why is that missing on Haswell wehen Ryzen is an exact copy?blublub - Tuesday, July 11, 2017 - link

argh that post did get lost.zappor - Tuesday, July 11, 2017 - link

4.4.0 kernel?! That's not good for single-die Zen and must be even worse for Epyc!AMD's Ryzen Will Really Like A Newer Linux Kernel:

https://www.phoronix.com/scan.php?page=news_item&a...

Kernel 4.10 gives Linux support for AMD Ryzen multithreading:

http://www.pcworld.com/article/3176323/linux/kerne...

JohanAnandtech - Friday, July 21, 2017 - link

We will update to a more updated kernel once the hardware update for 16.04 LTS is available. Should be August according to Ubuntukwalker - Tuesday, July 11, 2017 - link

You mention an OpenFOAM benchmark when talking about the new mesh topology but it wasn't included in the article. Any way you could post that? We are trying to evaluate EPYC vs Skylake for CFD applications.JohanAnandtech - Friday, July 21, 2017 - link

Any suggestion on a good OpenFoam benchmark that is available? Our realworld example is not compatible with the latest OpenFoam versions. Just send me an e-mail, if you can assist.Lolimaster - Tuesday, July 11, 2017 - link

AMD's lego design where basically every CCX can be used in whatever config they want be either consumer/HEDT or server is superior in the multicore era.Cheaper to produce, cheaper to sell, huge profits.