Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

Testing Notes

For the EPYC launch, AMD sent us their best SKU: the EPYC 7601. Meanwhile, Intel gave us a choice between the top-bin Xeon 8180 and the Xeon 8176. Since the latter has a 165-173W TDP, similar to that of AMD's best EPYC, we felt that the Xeon 8176 was the better choice.

Unfortunately, our time testing the two platforms has been limited. In particular, we only received AMD's EPYC system last week, and the company did not put an embargo on the results. This means that we can release the data now, in time to compare it to the new Skylake-SP Xeons. However, it also means that we've only had a handful of days to work with the platform before writing all of this up for today's embargo. We're confident in the data, but we haven't had a chance to tease out the nuances of EPYC quite yet; that will have to wait for a future article.

Meanwhile, we should note that we've had to retire the bulk of our historical benchmark data, as we upgraded both our compiler and OS (see below). Because of this, we only had a very limited amount of time to run additional systems, and for that reason we've opted to include Intel's Xeon E5-2690. The Sandy Bridge-EP processor is about 5 years old, and for customers who aren't upgrading their servers every single generation, it's these servers that we believe are most likely to get upgraded in this round. So for server managers looking at finally buying into new hardware, you can get an idea of how much return on investment you would get.

Benchmark Configuration and Methodology

All of our testing was conducted on Ubuntu Server "Xenial" 16.04.2 LTS (Linux kernel 4.4.0 64 bit). The compiler that ships with this distribution is GCC 5.4.0.
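Since the compiler and OS change invalidated our older data, it can help to store the toolchain alongside every result. Below is a minimal sketch of how that could be done; the function name `describe_toolchain` is hypothetical and this is not the script used for this review. It only assumes that the compiler, if installed, answers to `--version`.

```python
import platform
import shutil
import subprocess


def describe_toolchain(compiler="gcc"):
    """Return the kernel release, machine type, and compiler version banner."""
    info = {
        "kernel": platform.release(),    # e.g. a 4.4.0-series release string
        "machine": platform.machine(),   # e.g. x86_64
        "compiler": None,                # stays None if the compiler is absent
    }
    path = shutil.which(compiler)
    if path is not None:
        result = subprocess.run(
            [path, "--version"], capture_output=True, text=True, check=False
        )
        lines = result.stdout.splitlines()
        # The first line of the banner normally carries the version number.
        info["compiler"] = lines[0] if lines else None
    return info


if __name__ == "__main__":
    for key, value in describe_toolchain().items():
        print(f"{key}: {value}")
```

Saving this output next to each benchmark run makes it easier to tell later whether two sets of numbers are comparable.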

You will notice that the DRAM capacity varies among our server configurations. The reason is that we had little time left before today's launch embargo. Removing any hardware is always a risk, so we decided to run our tests without significantly changing the internal hardware of the systems we received from AMD and Intel (SSDs were still replaced). As far as we know, all of our tests fit in 128 GB, so DRAM capacity should not have much influence on performance. But it will have an impact on total energy consumption, which we will discuss.
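As a quick sanity check on the capacity claim, the DIMM populations from the configuration tables in this section can be multiplied out and compared against the 128 GB working-set ceiling mentioned above. This is only an illustrative sketch; the dictionary labels and function names are our own.

```python
# DIMM populations as listed in the configuration tables: (DIMM count, GB per DIMM).
DIMM_CONFIGS = {
    "Xeon Platinum 8176": (12, 32),
    "EPYC 7601": (16, 32),
    "Xeon E5 (S2600WT)": (16, 16),
    "Xeon E5-2690 (HP G8)": (16, 32),
}

# Upper bound on the memory footprint of our tests, as stated in the text.
WORKING_SET_GB = 128


def total_capacity_gb(dimm_count, dimm_size_gb):
    """Total installed DRAM in GB for a uniform DIMM population."""
    return dimm_count * dimm_size_gb


def capacities(configs):
    """Map each server label to its total installed DRAM in GB."""
    return {name: total_capacity_gb(*dimms) for name, dimms in configs.items()}


if __name__ == "__main__":
    for name, capacity in capacities(DIMM_CONFIGS).items():
        fits = capacity >= WORKING_SET_GB
        print(f"{name}: {capacity} GB, working set fits: {fits}")
```

Every system has at least twice the 128 GB the tests need, which is why the capacity differences should matter for power draw rather than for performance.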

Last but not least, we want to note how the performance graphs have been color-coded. Orange is AMD's EPYC, dark blue is Intel's best (Skylake-SP), and light blue is the previous-generation Xeon (Xeon E5 v4). Gray has been used for the soon-to-be-replaced Xeon v1.

Intel's Xeon "Purley" Server – S2P2SY3Q (2U Chassis)

| Component | Details |
| --- | --- |
| CPU | Two Intel Xeon Platinum 8176 (2.1 GHz, 28c, 38.5MB L3, 165W) |
| RAM | 384 GB (12x32 GB) Hynix DDR4-2666 |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3710 800 GB (data) |
| Motherboard | Intel S2600WF (Wolf Pass baseboard) |
| Chipset | Intel Wellsburg B0 |
| BIOS version | 9/02/2017 |
| PSU | 1100W PSU (80+ Platinum) |

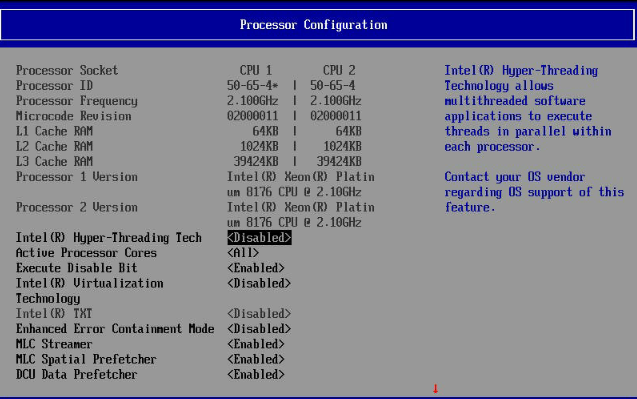

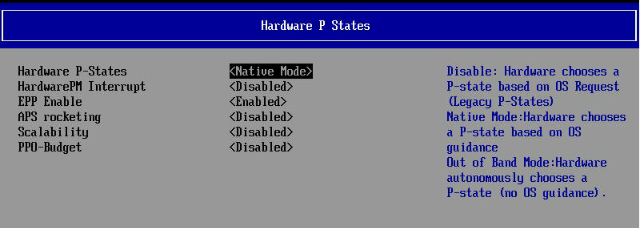

The typical BIOS settings can be seen below; we enabled hyperthreading and Intel virtualization.

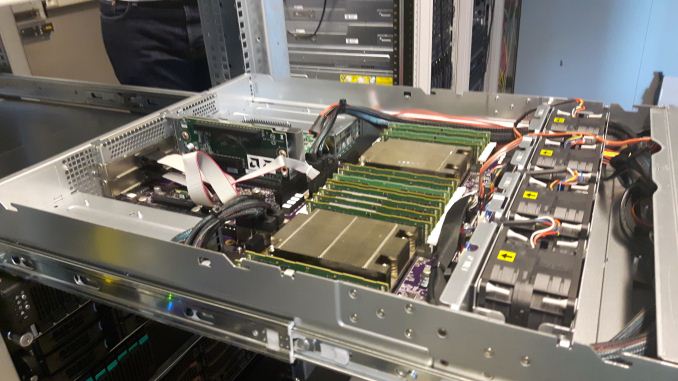

AMD EPYC 7601 – (2U Chassis)

Five years after our "Piledriver review", a new AMD server arrives in the Sizing Servers Lab.

| Component | Details |
| --- | --- |
| CPU | Two EPYC 7601 (2.2 GHz, 32c, 8x8MB L3, 180W) |
| RAM | 512 GB (16x32 GB) Samsung DDR4-2666 @2400 |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3710 800 GB (data) |
| Motherboard | AMD Speedway |
| BIOS version | To check. |
| PSU | 1100W PSU (80+ Platinum) |

Intel's Xeon E5 Server – S2600WT (2U Chassis)

| Component | Details |
| --- | --- |
| CPU | Two Intel Xeon processor E5-2699v4 (2.2 GHz, 22c, 55MB L3, 145W); Two Intel Xeon processor E5-2690v3 (2.3 GHz, 14c, 35MB L3, 120W) |
| RAM | 256 GB (16x16GB) Kingston DDR-2400 |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3700 800 GB (data) |
| Motherboard | Intel Server Board Wildcat Pass |
| BIOS version | 1/28/2016 |
| PSU | Delta Electronics 750W DPS-750XB A (80+ Platinum) |

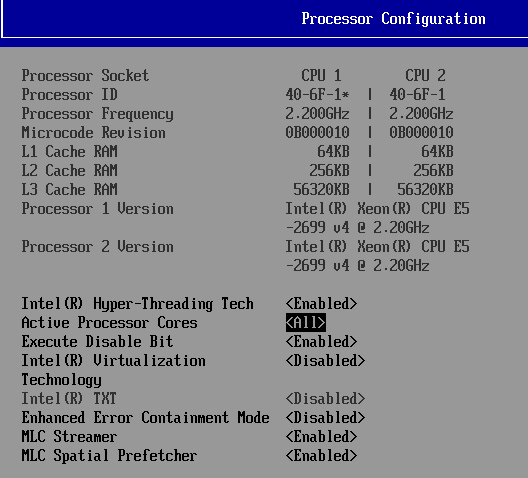

The typical BIOS settings can be seen below.

HP-G8 (2U Chassis) - Xeon E5-2690

| Component | Details |
| --- | --- |
| CPU | Two Intel Xeon processor E5-2690 (2.9GHz, 8c, 20MB L3, 135W) |
| RAM | 512 GB (16x32GB) Samsung DDR-3 LR-DIMM 1866 MHz @ 1333 MHz |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3700 800 GB (data) |
| Motherboard | HP G8 |
| BIOS version | 9/23/2016 |
| PSU | HP 750W (Gold) |

Other Notes

Both servers are fed by a standard European 230V (16 Amps max.) power line. The room temperature is monitored and kept at 23°C by our Airwell CRACs.
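To put the power feed in perspective, the ceiling of such a line follows directly from voltage times current. The sketch below works that out and compares it with the 1100W PSU rating listed for the newer servers. It assumes a power factor of 1, which is a simplification on our part, and the function names are hypothetical.

```python
LINE_VOLTAGE_V = 230   # standard European line voltage, from the text
MAX_CURRENT_A = 16     # maximum current of the line, from the text


def line_capacity_watts(voltage_v, current_a):
    """Upper bound on deliverable power, assuming a power factor of 1."""
    return voltage_v * current_a


def psu_share_of_line(psu_rating_w, voltage_v=LINE_VOLTAGE_V, current_a=MAX_CURRENT_A):
    """Fraction of the line's ceiling that one PSU could use at its rated load."""
    return psu_rating_w / line_capacity_watts(voltage_v, current_a)


if __name__ == "__main__":
    capacity = line_capacity_watts(LINE_VOLTAGE_V, MAX_CURRENT_A)
    print(f"Line ceiling: {capacity} W")
    print(f"1100W PSU share: {psu_share_of_line(1100):.1%}")
```

The line tops out at 3680 W under that assumption, so a single 2U server running at its full PSU rating would use well under a third of it.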

219 Comments

JohanAnandtech - Friday, July 21, 2017 - link

Thanks! It was a challenge, and we will update this article later on, when better kernel support is available.

serendip - Tuesday, July 11, 2017 - link

What idiot marketroid thought it was cool to have a huge list of SKUs and gimped "precious metals" branding? I'd like to see Epyc kicking Xeon butt simply because AMD has much more sensible product lists and there's not much gimping going on.

ParanoidFactoid - Tuesday, July 11, 2017 - link

Reading through this, the takeaway seems thus. Epyc has latency concerns in communicating between CCX blocks, though this is true of all NUMA systems. If your application is latency sensitive, you either want a kernel that can dynamically migrate threads to keep them close to their memory channel - with an exposed API so applications can request migration. (Linux could easily do this, good luck convincing MS). OR, you take the hit. OR, you buy a monolithic die Intel solution for much more capital outlay.

Further, the takeaway on Intel is, they have the better technology. But their market segmentation strategy is so confusing, and so limiting, it's near impossible to determine best cost/performance for your application. So you wind up spending more than expected anyway. AMD is much more open and clear about what they can and can't do. Intel expects to make their money by obfuscating as part of their marketing strategy.

Finally, Intel can go 8 socket, so if you need that - say, high core low latency securities trading - they're the only game in town. Sun, Silicon Graphics, and IBM have all ceded that market.

msroadkill612 - Wednesday, July 12, 2017 - link

"it's near impossible to determine best cost/performance for your application. So you wind up spending more than expected anyway. AMD is much more open and clear about what they can and can't do. Intel expects to make their money by obfuscating as part of their marketing strategy. Finally, Intel can go 8 socket, so if you need that - say, high core low latency securities trading - they're the only game in town. Sun, Silicon Graphics, and IBM have all ceded that market."

& given time is money, & intel wastes customers time, then intel is expensive.

Those guys will go intel anyway, but just sayin, there is already talk of a 48 core zen cpu, making 98 cores on a mere 2p mobo.

As i have posted b4, if wall street starts liking gpu compute for prompter answers, amdS monster apuS will be unanswerable.

nils_ - Wednesday, July 19, 2017 - link

98 cores on a 2p mobo isn't quite right if you keep in mind that the 32 core versions already constitute a 4 CPU system, unless AMD somehow manages to get more cores on a single die.

nils_ - Wednesday, July 19, 2017 - link

Good analysis, although Sun and IBM are still coming out with new CPUs and at least with IBM there is renewed interest in the POWER ecosystem.

eek2121 - Wednesday, July 12, 2017 - link

, but rather AMD's spanking new EPYC server CPU. Both CPUs are without a doubt very different: micro architecture, ISA extentions, <snip>

Should be extensions.

intelemployee2012 - Wednesday, July 12, 2017 - link

After looking at the number of people who really do not fully understand the entire architecture and workloads and thinking that AMD Naples is superior because it has more cores, pci lanes etc is surprising.

AMD made a 32 core server by gluing four 8core desktop dies whereas Intel has a single die balanced datacenter specific architecture which offers more perf if you make the entire Rack comparison. It's not the no of cores its the entire Rack which matters.

Intel cores are superior than AMD so a 28 core xeon is equal to ~40 cores if you compare again Ryzen core so this whole 28core vs 32core is a marketing trick. Everyone thinks Intel is expensive but if you go by performance per dollar Intel has a cheaper option at every price point to match Naples without compromising perf/dollar.

To be honest with so many Fabs, don't you think Intel is capable of gluing desktop dies to create a 32core,64core or evn 128core server (if it wants to) if thats the implementation style it needs to adopt like AMD?

The problem these days is layman looks at just numbers but that's not how you compare.

sharath.naik - Wednesday, July 12, 2017 - link

Agree, Most who look at these numbers will walk away thinking AMD is doing well with EPYC. The article points out the approach to testing and also states the performance challenges with EPYC, which can be missed when reading this review without the prior review on the older Xeons. For example the Big data test, I bet the newbies will walk away thinking EPYC beats the older XEONS E5 v4, as thats what the graphs show, without ever looking back at the numbers for a single 22 core Xeon e5 v4. So yes, a few back links in the article will be helpful.

warreo - Wednesday, July 12, 2017 - link

Not a fanboi of either company, but care to elaborate more? I checked the original Xeon E5 v4 review. It shows that a single Xeon E5 v4 performs about 10% slower than a dual setup. Extrapolating that here, that means the single Xeon E5 v4 setup would be right around 4.5 jobs per day, which would make it roughly 50% slower than the dual Epyc and Xeon 8176.

Sure, you could argue perf/dollar is better against a dual Epyc setup...but one could make the same argument against Intel's Skylake Xeons? I also wouldn't expect the performance to scale linearly anyway. Please let me know what I'm missing.