The Apple iPhone 6s and iPhone 6s Plus Review

by Ryan Smith & Joshua Ho on November 2, 2015 8:00 AM EST - Posted in

- Smartphones

- Apple

- Mobile

- SoCs

- iPhone 6s

- iPhone 6s Plus

Camera Architecture

As usual, it’s important to discuss some of the basics of the camera hardware before we move on to actual image and video quality tests, in order to better understand the factors that can affect overall camera quality. Of course, there’s much more to this than meets the eye, but for the most part details like the actual lens elements used are hard to determine without a device teardown.

| Apple iPhone Cameras | Apple iPhone 6 / iPhone 6 Plus | Apple iPhone 6s / iPhone 6s Plus |
|---|---|---|
| Front Camera | 1.2MP | 5.0MP |
| Front Camera - Sensor | ? (1.9 µm, 1/5") | ? (1.12 µm, 1/5") |
| Front Camera - Focal Length | 2.65mm (31mm eff) | 2.65mm (31mm eff) |
| Front Camera - Max Aperture | F/2.2 | F/2.2 |
| Rear Camera | 8MP | 12MP |
| Rear Camera - Sensor | Sony ??? (1.5 µm, 1/3") | Sony ??? (1.22 µm, 1/3") |
| Rear Camera - Focal Length | 4.15mm (29mm eff) | 4.15mm (29mm eff) |
| Rear Camera - Max Aperture | F/2.2 | F/2.2 |

At a high level, not a whole lot changes between the iPhone 6 and iPhone 6s. The aperture is unchanged, as is the focal length, and sensor size is also essentially the same as in the iPhone 6 line. Unfortunately, the iPhone 6s continues the trend of not having OIS, which significantly affects low light photography and all video recording. Of course, OIS alone isn’t going to make or break a camera, but it can make the difference between a competitive camera and a class-leading one.

I’m sure some are wondering why the aperture hasn’t gotten wider or the sensor larger, and it’s likely that either change would have significant knock-on effects. A wider aperture inherently makes optical aberrations worse; even in simple cases like chromatic aberration, the incoming light now reaches the lenses at more extreme angles. A larger sensor with all else equal would need a proportionally longer focal length to keep the same field of view, which would significantly increase the thickness of a camera module that is already near acceptable limits on the iPhone 6s. Even if you modified the lens design to prioritize z-height, the end result is a significantly shorter focal length relative to the sensor, and therefore a wider field of view. Even if you don’t think a wider field of view is a problem, distortion throughout the photo increases, which is likely to be unacceptable as well.
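To put rough numbers on the focal length argument, the sketch below uses the ideal rectilinear-lens formula for diagonal field of view, FOV = 2·atan(d / 2f), with the rear camera figures from the table (4.15mm actual, 29mm equivalent, F/2.2). The implied sensor diagonal is derived from the 29mm equivalent figure rather than measured, and the 1.5x larger sensor is purely hypothetical; real lens stacks don't scale perfectly with focal length, so treat this as an illustration of the geometry rather than a model of Apple's module.

```python
import math

# Diagonal of a 36x24 mm "full frame" sensor, the reference for "equivalent" focal lengths.
FULL_FRAME_DIAG_MM = math.hypot(36.0, 24.0)  # about 43.27 mm


def diagonal_fov_deg(focal_length_mm: float, sensor_diag_mm: float) -> float:
    """Diagonal field of view of an ideal rectilinear lens focused at infinity."""
    return math.degrees(2.0 * math.atan(sensor_diag_mm / (2.0 * focal_length_mm)))


def focal_for_same_fov(focal_length_mm: float, sensor_scale: float) -> float:
    """Focal length needed to keep the same FOV after scaling the sensor diagonal."""
    return focal_length_mm * sensor_scale


# Rear camera figures from the table: 4.15 mm actual, 29 mm equivalent, F/2.2.
focal_mm = 4.15
crop_factor = 29.0 / focal_mm                      # about 7.0
sensor_diag_mm = FULL_FRAME_DIAG_MM / crop_factor  # implied diagonal, about 6.2 mm
aperture_diameter_mm = focal_mm / 2.2              # entrance pupil, about 1.9 mm
current_fov = diagonal_fov_deg(focal_mm, sensor_diag_mm)

# Hypothetical: a sensor with a 1.5x larger diagonal.
scale = 1.5
bigger_diag_mm = sensor_diag_mm * scale
same_fov_focal = focal_for_same_fov(focal_mm, scale)   # longer lens, thicker module
wide_fov = diagonal_fov_deg(focal_mm, bigger_diag_mm)  # same lens, wider view

print(f"crop factor: {crop_factor:.2f}, implied sensor diagonal: {sensor_diag_mm:.2f} mm")
print(f"entrance pupil at F/2.2: {aperture_diameter_mm:.2f} mm")
print(f"current diagonal FOV: {current_fov:.1f} deg")
print(f"1.5x sensor, same FOV needs f = {same_fov_focal:.2f} mm")
print(f"1.5x sensor, same 4.15 mm lens gives FOV: {wide_fov:.1f} deg")
```

Keeping the field of view on the larger sensor means a proportionally longer lens (and a thicker module), while keeping the existing 4.15mm lens produces a noticeably wider view.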

In effect, the major changes here are pixel size/resolution and the ISP, which is a black box but, as far as I can tell, is new for the A9 SoCs. Instead of the 1.5 micron pixel size we’ve seen before, Apple has moved to a 1.22 micron pixel size for the iPhone 6s and 6s Plus. There’s been a perennial debate about what the “right” pixel size is, and the research I’ve done indicates that the answer changes with technology. In photos taken with strong, even lighting, noise is almost entirely due to the fact that light is composed of discrete photons. This shot noise is an unavoidable fact of life, but in low light the sensor’s own noise, which is affected by factors like die temperature, also becomes noticeable. The problem here is that in CMOS sensors each pixel has circuitry that independently converts the number of electrons collected into a corresponding voltage, which means that for the same sensor size, increasing the number of pixels also increases the total read noise.
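A simple way to see why pixel count matters mostly in low light is to model a fixed patch of sensor area, with shot noise (Poisson, so its variance equals the signal in electrons) plus independent read noise from every pixel inside the patch. The sketch below compares the 1.5 micron and 1.22 micron pitches; the 3 e- read noise, 50% quantum efficiency, patch size and photon levels are hypothetical values chosen for illustration, and the model assumes both pitches have identical per-pixel read noise and efficiency while ignoring dark current and any of Apple's sensor changes.

```python
import math


def patch_snr(photons_per_um2: float, patch_area_um2: float, pixel_pitch_um: float,
              read_noise_e: float = 3.0, quantum_efficiency: float = 0.5) -> float:
    """SNR of a fixed patch of sensor area when all pixels inside it are summed.

    Shot noise is Poisson, so its variance equals the collected signal (in electrons).
    Each pixel adds independent read noise, so read-noise variances add per pixel:
    more (smaller) pixels in the same area means more total read noise.
    Default read noise and quantum efficiency are hypothetical.
    """
    signal_e = photons_per_um2 * patch_area_um2 * quantum_efficiency
    n_pixels = patch_area_um2 / pixel_pitch_um ** 2
    noise_e = math.sqrt(signal_e + n_pixels * read_noise_e ** 2)
    return signal_e / noise_e


PATCH_UM2 = 100.0    # hypothetical 10 x 10 micron patch of sensor area
OLD_PITCH_UM = 1.5   # iPhone 6 rear camera pixel pitch
NEW_PITCH_UM = 1.22  # iPhone 6s rear camera pixel pitch

results = {}
for label, photons in (("bright", 1000.0), ("dim", 2.0)):  # hypothetical photons per um^2
    snr_old = patch_snr(photons, PATCH_UM2, OLD_PITCH_UM)
    snr_new = patch_snr(photons, PATCH_UM2, NEW_PITCH_UM)
    results[label] = snr_new / snr_old
    print(f"{label:>6}: SNR 1.5um = {snr_old:6.1f}, 1.22um = {snr_new:6.1f}, "
          f"ratio = {results[label]:.3f}")
```

In bright light the two pitches come out nearly identical because shot noise dominates; in dim light the extra pixels' read noise pulls the smaller-pixel SNR down noticeably, which is the trade-off described above.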

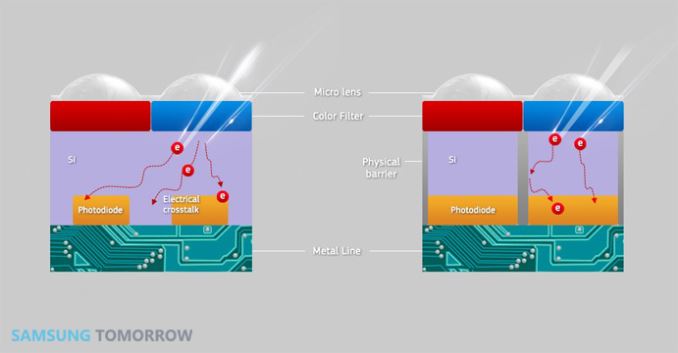

As a result, while in theory a smaller pixel size (down to a certain limit) has no downsides, in practice the way CMOS image sensors are made forces a trade-off between daytime and low light image quality. Apple claims that it avoids this trade-off through new sensor technology. One of the key changes is deep trench isolation, which we’ve seen in sensors like Samsung’s ISOCELL. This helps reduce crosstalk between pixels, where charge generated by a photon that hits one pixel ends up being detected by a neighboring one. The iPhone 6s’ image sensor also has modifications to the color filter array which are designed to reduce thickness requirements by supporting a larger chief ray angle.
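The effect of pixel isolation on color can be illustrated with a toy one-dimensional crosstalk model: each pixel keeps a fraction (1 - leak) of its charge and leaks the rest equally to its two neighbors. Under pure red light, only the red-filtered pixels in an alternating red/green row should see any signal, so any charge that shows up in the green pixels is color contamination. The leak values are hypothetical (they are not measurements of the iPhone 6s or ISOCELL sensors), the row wraps around at the ends to avoid edge effects, and a real Bayer mosaic is two-dimensional, so this only shows the direction of the effect.

```python
def apply_crosstalk(charges: list[float], leak: float) -> list[float]:
    """Toy 1D crosstalk: each pixel keeps (1 - leak) of its charge and sends
    leak / 2 to each neighbor. The row wraps around so every pixel has two
    neighbors; total charge is conserved."""
    n = len(charges)
    out = [0.0] * n
    for i, q in enumerate(charges):
        out[i] += q * (1.0 - leak)
        out[(i - 1) % n] += q * leak / 2.0
        out[(i + 1) % n] += q * leak / 2.0
    return out


def green_contamination(leak: float, red_signal_e: float = 1000.0, width: int = 8) -> float:
    """Ratio of spurious green signal to red signal under pure red light."""
    # Alternating filters (even index = red, odd index = green), like one row of a
    # Bayer mosaic. Under pure red light only the red pixels collect charge.
    row = [red_signal_e if i % 2 == 0 else 0.0 for i in range(width)]
    measured = apply_crosstalk(row, leak)
    red = measured[0::2]
    green = measured[1::2]
    return (sum(green) / len(green)) / (sum(red) / len(red))


for leak in (0.20, 0.05):  # hypothetical leak fractions, not measured values
    print(f"leak {leak:.0%}: false green = {green_contamination(leak):.1%} of red")
```

With these made-up numbers the false green signal works out to leak / (1 - leak) of the red signal, so cutting the leak from 20% to 5% drops the contamination from 25% to roughly 5%.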

Camera UX

Moving on to the camera UI, iOS has basically kept the same design we’ve seen since iOS 7. Given its relative simplicity, there’s not much for me to complain about. The one major change I’ll mention is the Live Photos button, which lights up to indicate when the camera is capturing a live photo. The one usability problem worth noting is that the camera doesn’t stop capturing a live photo even when the phone is lowered, so live photos often end with a shot of the ground or some fingers. Otherwise, the experience is exactly like taking a normal photo.

Other than the Live Photos button, 3D Touch brings some subtle additions to the camera UI. Peeking on the image thumbnail shows the last 20 images captured on the phone, and popping opens the gallery in an interesting dark theme, which is slightly odd and inconsistent but otherwise a nice addition. A 3D Touch press on the Camera app icon gives quick access to some common modes without any extra steps after opening the app.

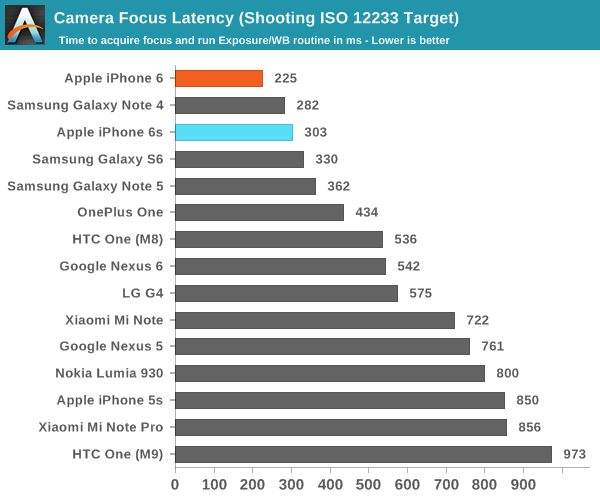

Of course, the other question that lingers is how fast the iPhone camera is. To test this, we continue to use our ISO chart under strong studio lighting to get an idea of best-case focus and capture latency. As the ISO chart is an extremely high-contrast object, this test avoids unduly favoring phase-detect and laser AF mechanisms relative to traditional contrast-detect focusing.

When it comes to focus latency, the iPhone 6s is essentially identical to the iPhone 6. At this point we're looking at testing variance, as a 64ms difference is only about 4 frames on a 60Hz display. Something as simple as a small difference in initial focus position is going to affect the result, because the iPhone 6s moves straight to the correct focus position in testing. Pretty much every other smartphone is behind here because they all seem to overshoot the correct focus point before coming back, apparently to verify that PDAF or laser AF is giving an accurate result.
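The conversion from milliseconds to display frames is simple arithmetic; the small helper below makes it explicit, assuming a 60Hz display (a refresh interval of about 16.7ms). The function name is hypothetical and only for illustration.

```python
def latency_in_frames(latency_ms: float, refresh_hz: float = 60.0) -> float:
    """Express a latency in milliseconds as a number of display refresh intervals."""
    frame_interval_ms = 1000.0 / refresh_hz
    return latency_ms / frame_interval_ms


frames = latency_in_frames(64.0)
print(f"64 ms is {frames:.2f} frames at 60 Hz")  # prints "64 ms is 3.84 frames at 60 Hz"
```

Rounded, that is the roughly 4 frames quoted above, well within what small differences in starting focus position can account for.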

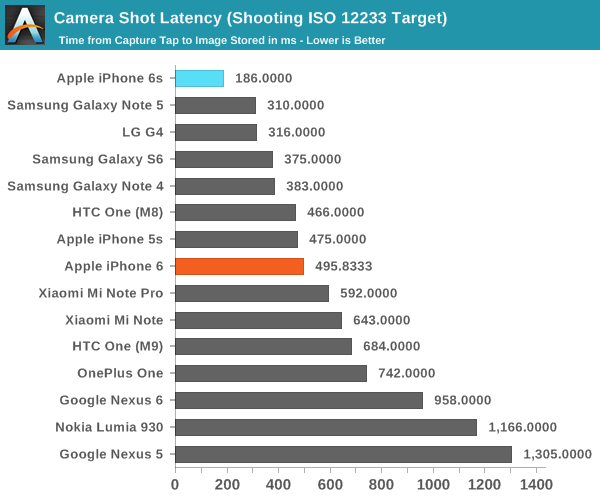

In the shot latency test, we're really seeing the value of Apple's NVMe mobile NAND solution, as the iPhone 6s captures a single image in roughly 200 ms less time than the iPhone 6. Of course, this assumes a situation in which shutter speed isn't the dominant factor in shot latency, so in low light these differences are going to be hard to spot.

Live Photos

Live Photos is a new feature in the iPhone 6s that effectively tries to capture a moment within a photo. At a technical level, Live Photos captures a photo and a video simultaneously, with the video lasting up to three seconds. The first half of the video covers the moment immediately before the shutter is tapped, and the second half covers the moment right after. The video has a resolution of 1440x1080 to match the 4:3 aspect ratio of the stills, and appears to vary in frame rate from about 12 to 15 FPS, with a bitrate of roughly 8Mbps and H.264 High Profile encoding.

These are all technical details, but what really matters here is that the frame rate is relatively low, so Live Photos aren’t necessarily the greatest at capturing something that passes through the frame within a second. It’s likely that this is at least partially necessary to make sure that Live Photos don’t take up a huge amount of storage, and I suspect the same logic explains why the resolution is closer to a video than a photo. The frame rate is low enough, though, that low light photos aren’t going to be limited by the need to keep the video at an acceptable frame rate. This is important to note, mostly because the whole point of a live photo is kind of ruined if you have to remember to turn the feature on before you can use it.
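Using the figures quoted earlier (roughly 8Mbps, up to three seconds of video, and 12 to 15 FPS), a quick back-of-the-envelope calculation shows both the storage cost of the video portion and how much exposure time each frame is allowed. This is only a sketch: it treats 8Mbps as a constant average bitrate, ignores container overhead and the still image itself, and uses 1 MB = 10^6 bytes; the helper names are hypothetical.

```python
def clip_size_mb(bitrate_mbps: float, duration_s: float) -> float:
    """Approximate size in MB (10**6 bytes) of a clip at a constant average bitrate."""
    return bitrate_mbps * duration_s / 8.0  # megabits -> megabytes


def max_frame_exposure_ms(fps: float) -> float:
    """Longest per-frame exposure that still sustains the given frame rate."""
    return 1000.0 / fps


video_mb = clip_size_mb(8.0, 3.0)
for fps in (12.0, 15.0, 30.0):
    frame_count = int(round(fps * 3.0))
    print(f"{fps:>4.0f} FPS: {frame_count} frames, "
          f"up to {max_frame_exposure_ms(fps):.1f} ms per frame")
print(f"video portion: about {video_mb:.1f} MB")
```

At 15 FPS each frame can be exposed for up to about 67ms, twice the budget of a 30 FPS video, which helps explain why the video doesn't need to hold back low light stills, while the video itself stays at around 3 MB.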

Ultimately, with features like this it is insufficient to focus on the technical details of the implementation, even if they matter. What really matters is the user experience, and to that end Live Photos removes a lot of the friction that was present with HTC’s Zoes. I loved the idea of Zoes when I first got the HTC One M7, but after a few months I found I just wasn’t using the feature, because it was too much effort to pre-emptively plan for a shot that would work well as a Zoe. It was also difficult to deal with the fixed recording time, a higher minimum shutter speed in low light, and the need to keep the phone raised for the entire time the Zoe was recording.

In some ways, Apple has solved these problems with Live Photos. It’s entirely possible to keep the mode enabled all the time, and with the recent release of iOS 9.1 it seems Apple has implemented an algorithm that dynamically shortens the recording if the camera is suddenly lowered in the middle of it. Due to the relatively low frame rate there’s also no need to worry about worse low light performance or similar compromises, which makes it easier to keep the feature enabled all the time, even if it means that motion isn’t as fluid as it would be in a 30 or 60 FPS video. The end result is that you can basically take photos as usual and serendipitously discover that one of them resulted in a great live photo.

I really like the idea of Live Photos, and in practice I had a lot of fun playing with the feature to capture various shots and see the results. Even though I’ve spent plenty of time with the iPhone 6s, I still don’t know whether I’ll continue using the feature in any real capacity. I definitely used HTC’s Zoe feature for the first few weeks I spent with the One M7, but as time went on I forgot that it ever existed.

531 Comments


Der2 - Monday, November 2, 2015

It's about time.

zeeBomb - Monday, November 2, 2015

Oh man...oh man it's finally here. I just wanted to say thank you for faithfully incorporating all your findings into this review. It may have taken a little longer than expected, but hey, this is probably the first AnandTech review I've camped out waiting for, lol...thanks again Joshua and Brandon!

zeeBomb - Monday, November 2, 2015

Ugh. I meant Ryan Smith...sorry! Waking up at 5 isn't the ideal way to go...

Samus - Thursday, November 5, 2015

That's what she said, Der bra.

zeeBomb - Sunday, November 8, 2015

Very valid point. Speaking of valid points... 500!

trivor - Thursday, November 5, 2015

Have to disagree with your statement that the high end Android phone space has stood still. With this round of phones the Android OEMs have all upped their game to approximate parity with the iPhones, and in some cases exceed the performance and quality of images taken by an iPhone. In addition, on phones like the LG G4 the option of having manual control of your picture taking and supporting RAW/JPEG simultaneously is a huge advance for smartphones. Add to that phase detection focusing, a laser rangefinder for close focus, generous internal storage (32 GB) and micro SD expansion (which works quite well on Lollipop - not sure about Marshmallow yet) and you have a great camera phone. It also has OIS 2.0 (whatever that means) at a significantly lower cost than even the low end (16 GB) iPhone 6s: $450-500 for the G4 versus $650 for the iPhone. iOS apps seem to get updated a little quicker, look nicer from what I've heard, and seem to be a little more feature rich. Conversely, the Material Design language has greatly improved the state of Android interfaces and given Android OEMs a much more stable OS - although the first builds of Lollipop were not ready for prime time. Also, let's not forget that Android dominates the low-to-middle range of smartphones below $400, with near flagship specs and excellent cameras in phones like the Moto X Style (Pure Edition in the US), the Moto X Play (apparently the base model for Verizon's Droid Maxx 2), a number of the Asus ZenFones, and the Moto G and E. Also, the new Nexus phones (6P and 5X) are both competitive across the board, with new cameras whose 1.55 micron pixels let in significantly more light than the 1.12 micron pixels in other cameras; they are competitively priced (especially the 6P @ $499) and are overall very nice handsets. Finally, the customizability and wide variety of handsets at EVERY PRICE POINT make Android a compelling choice for many consumers.

Fidelator - Friday, November 6, 2015

I couldn't agree more. The Android space has not stayed still; if anything, most of the problems on that side were due to Qualcomm's lack of a good offering this year. Still, the phones were further refined in other areas. Saying this is overall the best camera phone, when its only advantage over the competition is reduced motion blur, is complete bull. The UI is far from the best, given that auto mode on both the SGS6/Note 5 and the G4 is as effective, yet those phones still offer great manual settings. The barely-over-720p display on the 6S is inexcusable for 2015, and given the starting price of the 6S it should not be passed off as an acceptable display, let alone a good one.

Where Apple deserves credit is with the A9. It is miles ahead of anything the competition currently offers; they have made some fantastic design choices, and it is simply on another level.

robertthekillertire - Monday, November 9, 2015

I'm actually very happy with Apple's decision to stick with a lower-resolution screen. Which would you rather have: a smartphone with an insanely high pixel count that your eyes probably can't appreciate anyway, or a smartphone with a (barely perceptibly) lower PPI that gets better battery life and has smoother UI and game performance because it's not trying to push an absurd number of pixels at any given moment? The tradeoff just doesn't seem worth it to me.

MathieuLF - Tuesday, November 10, 2015

But your eyes can tell the difference... When I had my iPhone 6+ and Nexus 6P side by side, I could see right away that the Nexus has more pixels.

Cantona7 - Tuesday, December 1, 2015

But the difference is not large enough to justify higher power consumption and greater graphics requirements. I agree that more pixels are certainly more pleasant to the eyes, but I'd rather have greater battery life. If the Nexus 6P had a lower resolution screen, it would have even greater battery life, which would be awesome.