HGST And Mellanox Show Off SAN Fabric Backed By Phase Change Memory

by Billy Tallis on August 14, 2015 8:00 AM EST

Posted in: Storage, HGST, Mellanox, PCM, InfiniBand

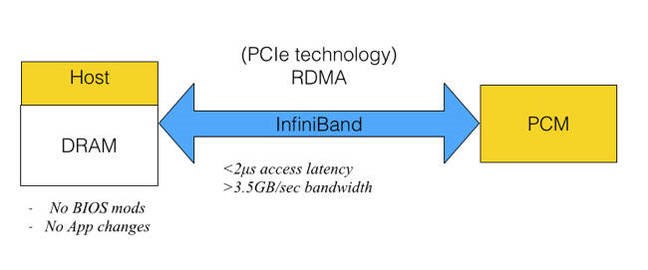

It seems that NAND flash memory just isn't fast enough to show off the full performance of the latest datacenter networking equipment from Mellanox. They teamed up with HGST at Flash Memory Summit to demonstrate a Storage Area Network (SAN) setup that used Phase Change Memory to attain speeds that are well out of reach of any flash-based storage system.

Last year at FMS, HGST showed a PCIe card with 2GB of Micron's Phase Change Memory (PCM). That drive used a custom protocol to achieve lower latency than possible with NVMe: it could complete a 512-byte read in about 1-1.5µs, and delivered about 3M IOPS for queued reads. HGST hasn't said how the PCM device in this year's demo differs, if at all. Instead, they're exploring what kind of performance is possible when accessing the storage remotely. Their demo has latency of less than 2µs for 512-byte reads and throughput of 3.5GB/s using Remote Direct Memory Access (RDMA) over Mellanox InfiniBand equipment. By comparison, NAND flash reads take tens of microseconds without counting any protocol overhead.
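To put those figures in perspective, here is a rough back-of-envelope sketch in Python. It uses only the numbers quoted above, plus one labeled assumption: NAND read latency is taken as 20µs, a stand-in for "tens of microseconds". The function and constant names are illustrative and do not come from HGST or Mellanox.

```python
# Back-of-envelope arithmetic on the figures quoted in the article.
# Illustrative only; names here are hypothetical, not from any vendor tool.

READ_SIZE_BYTES = 512            # read size quoted for both demos
LAST_YEAR_IOPS = 3_000_000       # "about 3M IOPS for queued reads"
LAST_YEAR_LATENCY_S = 1.5e-6     # upper end of "about 1-1.5µs"
RDMA_LATENCY_S = 2e-6            # "less than 2µs", treated as an upper bound
NAND_LATENCY_S_ASSUMED = 20e-6   # ASSUMPTION: stand-in for "tens of microseconds"


def bandwidth_gb_per_s(iops: float, io_size_bytes: int) -> float:
    """Data rate in GB/s (10^9 bytes) implied by an IOPS figure."""
    return iops * io_size_bytes / 1e9


def reads_in_flight(iops: float, latency_s: float) -> float:
    """Little's law: average concurrency = throughput * latency."""
    return iops * latency_s


def serial_iops(latency_s: float) -> float:
    """Reads per second if each read waits for the previous one to finish."""
    return 1.0 / latency_s


if __name__ == "__main__":
    bw = bandwidth_gb_per_s(LAST_YEAR_IOPS, READ_SIZE_BYTES)
    depth = reads_in_flight(LAST_YEAR_IOPS, LAST_YEAR_LATENCY_S)
    print(f"3M IOPS of 512-byte reads: {bw:.3f} GB/s")
    print(f"Reads in flight needed at 1.5 us each: {depth:.1f}")
    print(f"One-at-a-time reads over RDMA: {serial_iops(RDMA_LATENCY_S):,.0f}/s")
    print(f"One-at-a-time reads from NAND (assumed 20 us): "
          f"{serial_iops(NAND_LATENCY_S_ASSUMED):,.0f}/s")
```

By this arithmetic, last year's 3M IOPS of 512-byte reads works out to roughly 1.5GB/s and needs only four or five reads in flight at a time. The article does not say what transfer size the 3.5GB/s figure was measured with, so the sketch derives no IOPS number from it.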

This presentation from February 2014 provides a great summary of where HGST is going with this work. It's been hard to tell which non-volatile memory technology is going to replace NAND flash. Just a few weeks ago Intel and Micron announced their 3D XPoint memory, which immediately took its place as one of the most viable alternatives to NAND flash without the companies even officially saying what kind of memory cell it uses. Rather than place a bet on which new memory technology will pan out, HGST is trying to ensure that they're ready to exploit the winner's advantages over NAND flash.

None of the major contenders are suitable for directly replacing DRAM, due to limited endurance (even if it is much higher than flash), poor write performance, or vastly insufficient capacity. At the same time, ST-MRAM, CBRAM, PCM, and others are all much faster than NAND flash, and none of the current interfaces other than a DRAM interface can keep pace. HGST chose to develop a custom protocol over standard PCIe as more practical than trying to make a PCM SSD that works as a DIMM connected to existing memory controllers.

Last year's demo showed that they were ready to deliver better-than-flash performance as soon as the new memory technology becomes economical. This year's demo shows that they can retain most of that performance while putting their custom technology behind an industry-standard RDMA interface to create an immediately deployable solution, and in principle it can all work just as well for 3D XPoint memory as for Phase Change Memory.

19 Comments

Shadow7037932 - Friday, August 14, 2015 - link

This is pretty exciting. I wonder if we'll see future SSDs with PCM/Xpoint to act as cache esp. since it's non volatile compared to DRAM that is being used currently for caching.

Flunk - Friday, August 14, 2015 - link

I could see something like that being available on the enterprise level some time soon.

Einy0 - Friday, August 14, 2015 - link

Funny, I was just thinking about that a few weeks ago when I read the Xpoint article. It only seems logical to see it first on high-end RAID controllers that currently rely on batteries to maintain their DRAM cache in the case of power failure. At work the only time our IBM i Series server gets powered down is to change out the internal RAID controller batteries. This happens every few years as routine maintenance. It's always a big deal because we have to schedule it for down time and everything.

Samus - Friday, August 14, 2015 - link

Those aren't hot swap? You can use a RAID utility to flush the RAID cache (LSI\Areca this is done via command line) then pull the battery and replace it real quick. This is even more comfortable if you have dual power supplies that are connected to a UPS.

I've worked on servers with hot swap memory and it works the same way...you use a utility or flip a switch on the motherboard to put it into memory swap mode, then get to it. Always do this stuff at night during a maintenance window, but there is no need for real downtime. It's the 21st century.

jwhannell - Saturday, August 15, 2015 - link

or just put the host into maintenance mode, move all the VMs off, and perform the work at your leisure ;)

boeush - Friday, August 14, 2015 - link

Cache needs even more rewrite endurance than RAM (every cache line hash-maps to more than one RAM address.) As stated above, most of these NAND alternatives have "limited endurance (even if it is much higher than flash)".

Billy Tallis - Friday, August 14, 2015 - link

Caches that sit between the CPU and DRAM need to be fast and sustain constant modification, but those aren't the only caches around. Almost all mass storage devices and a lot of other peripherals have caches and buffers for their communication with the host system. An SSD's write cache only needs to have the same total endurance as the drive itself, so if you've got a new memory technology with 1000x the endurance of NAND but DRAM-like speed, then you could put 1GB of that on a 1TB SSD without having to worry about burning out your cache. (Provided that you do wear-leveling for your cache, such as by using it as a circular buffer.)

XZerg - Friday, August 14, 2015 - link

Depending on what the cache is for... if it is a cache for say network card then it would only be "utilized" when there is network related traffic. whereas, ram is the "layer" to cpu and as such would have much higher writes. your point is valid when comparing the cache to the final destination that it would need more endurance than the destination, eg: hdd.

boeush - Friday, August 14, 2015 - link

I was admittedly thinking more in terms of node cache in large clustered systems with shared memory topology over networked fabric (the access latency of PCM already rules it out as a SRAM or even DRAM replacement.)

On flash drives, I'm not aware of SLC write-caches currently being a performance bottleneck...

frenchy_2001 - Friday, August 14, 2015 - link

The solution is DRAM, a smaller battery and a new memory type to write the cache into faster.

Sandforce 2XXX controllers had NO DRAM cache at all, so I guess it is possible to make it work with either little cache or non-volatile memory as cache.

ReRAM or PCM have 100k write cycles as endurance.