The Intel Xe-LP GPU Architecture Deep Dive: Building Up The Next Generation

by Ryan Smith on August 13, 2020 9:00 AM EST

Posted in: GPUs, Intel, Tiger Lake, Xe, Xe-LP, DG1, Intel Arch Day 2020, SG1

Xe-LP Execution Units: It Takes Two

Diving down a level deeper, we have the smallest thread-level building block of the Xe-LP GPU architecture, the venerable Execution Unit. Intel has tweaked these a few times over the years, and for Xe-LP they are getting tweaked once again.

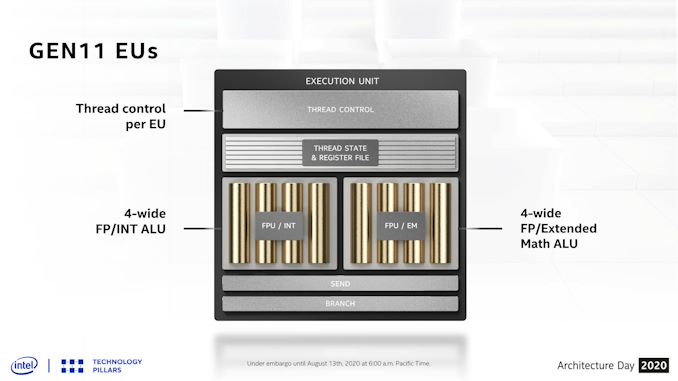

As a quick refresher, as of Intel’s Gen11 GPU architecture, an EU is comprised of a single thread control unit and two sets of 4-wide SIMDs. One block handles floating point and integer math, while the other block can handle floating point and special functions, which Intel refers to as “extended math”. Despite this, Gen11’s smallest wavefront width is 8 threads wide (SIMD8), so it can take multiple clock cycles to execute a single wavefront, with Intel interleaving multiple wavefronts as a form of latency hiding.

Xe-LP, meanwhile, brings several important changes to the design of the EUs. First and foremost, a single EU is no longer a stand-alone block; instead two EUs now share a single thread control unit. As a result, a thread control unit now gets the combined resources of two EUs to spread work over, instead of just one. And while the SIMD arrays themselves have also changed – further complicating matters – the impact here is that there are now fewer thread control units in a GPU, which should reduce the number of wavefronts that are in-flight at any given moment.

In fact there’s an argument to be had whether calling these dual EU setups two EUs is even the most accurate way to describe them; it might be better to instead bundle them together as a single fat EU, since neither half is truly independent. But absent further low-level details, and what I’m sure is a desire by Intel to be semi-consistent in counting EUs, they are going with 96 thin EUs.

Meanwhile regardless of how you bundle the EUs, there’s also the matter of what’s in the EUs themselves. For Xe-LP, Intel has reorganized the SIMD blocks. Gone are the pair of feature-differentiated SIMD4 blocks in favor of a SIMD8 block and a SIMD2 block. The larger SIMD8 essentially combines all of the floating point and integer ALUs that previously were split between Gen11’s two SIMD4 blocks, and makes a single SIMD8 out of them. The net result is that the number of FPU ALUs hasn’t changed – it’s still 8 ALUs per EU – however the number of pipes that can process integers has changed from 4 to 8. Meanwhile, although not made clear in Intel's slides, the number of ALUs that can process extended math is staying constant: Gen11 had two EM-capable pipes, and Xe-LP does as well.

Speaking of which, extended math has now been moved to its own SIMD2, and every EU gets one. This means that executing extended math functions no longer directly blocks the execution of floating point arithmetic, as was the case for Gen11 – the EU doesn’t have to give up FP pipes to do this. Further underscoring this point, the EU can co-issue instructions to both the FP/INT SIMD8 and the EM SIMD2 at the same time, meaning that under at least some circumstances, doing extended math also won’t indirectly block FP/INT arithmetic.

As always with co-issuing, the devil is in the details – at this point it’s not clear to us just what the co-issuing limitations are – but it’s still very likely to be a better fit to the kind of workloads Intel is actually seeing. AMD and NVIDIA also use dedicated EM/SFU units, and in similarly small ratios, all of which seems to work out well for those two companies. So in that respect Intel’s ALU setup is looking a lot more like its contemporary competitors’. And this, I suspect, is also one of the forms of bottleneck optimization that Intel has gone through to get more work out of the same number of FLOPs on Xe-LP.

These ALU changes also impact how wavefronts will move through the GPU. With a SIMD8 being the smallest ALU array for normal arithmetic, Intel’s minimum wavefront size is now the same size as the underlying hardware. This means that Xe-LP no longer needs multiple cycles to execute a single instruction from a wavefront, at least for the smallest wavefront size. In Gen11 Intel also allowed SIMD16 and SIMD32 wavefronts, and I’m waiting on the Xe-LP whitepaper to confirm whether those have been retained – in which case they’d still need multiple cycles – or if Intel is forcing everything to be SIMD8.
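As a rough illustration of the cycle counts described above, here is a minimal sketch. It assumes that one wavefront instruction runs on a single SIMD block and that the block retires one full-width pass per clock; the function name is hypothetical, and the SIMD16 and SIMD32 rows for Xe-LP only apply if Intel has retained those wavefront sizes.

```python
def cycles_per_instruction(wavefront_width: int, simd_width: int) -> int:
    """Clock cycles to push one wavefront instruction through one SIMD block.

    Assumption: the block processes `simd_width` lanes per clock.
    """
    if wavefront_width <= 0 or simd_width <= 0:
        raise ValueError("widths must be positive")
    # Ceiling division: a partially filled final pass still costs a full cycle.
    return -(-wavefront_width // simd_width)


GEN11_SIMD_WIDTH = 4  # Gen11: 4-wide SIMD blocks
XE_LP_SIMD_WIDTH = 8  # Xe-LP: 8-wide FP/INT SIMD block

for width in (8, 16, 32):
    gen11 = cycles_per_instruction(width, GEN11_SIMD_WIDTH)
    xe_lp = cycles_per_instruction(width, XE_LP_SIMD_WIDTH)
    print(f"SIMD{width} wavefront: Gen11 {gen11} cycles, Xe-LP {xe_lp} cycles")
```

Under these assumptions a SIMD8 wavefront takes two cycles per instruction on Gen11's 4-wide hardware and one cycle on Xe-LP's 8-wide block.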

It’s worth noting that this change is fairly similar to what AMD did last year with its RDNA (1) architecture, eliminating the multi-cycle execution of a wavefront by increasing their SIMD size and retuning their wavefront size. In AMD’s case this was done to help keep their SIMD slots occupied more often and reduce instruction latency, and I wouldn’t be surprised if it’s a similar story for Intel.

A further benefit of this reorganization is that Intel has been able to simplify their thread scheduling hardware overall. As recently as Gen11, Intel was still using hardware score boarding to determine when to run threads and when threads’ data would be ready. But with Xe-LP, score boarding has been moved into software, becoming a responsibility of Intel’s compiler.

While the move to software score boarding means that scheduling has to be determined in advance by the software – and thus becomes static and potentially results in less-than-optimal scheduling – the payoff is that hardware score boarding is fairly expensive from a die area and power standpoint. So moving to software score boarding allows for smaller and more power efficient EUs, which feeds back into Intel’s ability to build a larger number of EUs, and to improve their overall energy efficiency. Overall, this mirrors changes NVIDIA made to their architecture almost a decade ago with Kepler, where they similarly switched to software score boarding to the great benefit of their energy efficiency (and while retaining their high performance).
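To make the idea of static scheduling a little more concrete, here is a toy sketch of a compiler-style pass that decides ahead of time how long each instruction must wait for its inputs. This is not Intel's compiler or its actual mechanism: the instruction names, register names, latencies, and the single-issue in-order model are all assumptions chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instr:
    name: str
    dest: str      # register written by this instruction
    srcs: tuple    # registers read by this instruction
    latency: int   # cycles until dest is readable (hypothetical values)


def annotate_waits(program):
    """Return [(instruction name, stall cycles)] computed before execution.

    Toy model: in-order, one instruction issued per cycle, fixed latencies.
    """
    ready_at = {}  # register -> cycle at which its value becomes available
    cycle = 0      # earliest cycle at which the next instruction may issue
    plan = []
    for ins in program:
        # An instruction can issue once all of its sources are ready.
        earliest = max([ready_at.get(reg, 0) for reg in ins.srcs] + [cycle])
        plan.append((ins.name, earliest - cycle))
        ready_at[ins.dest] = earliest + ins.latency
        cycle = earliest + 1
    return plan


program = [
    Instr("load", "r0", (), 4),
    Instr("mul", "r1", ("r0",), 2),
    Instr("add", "r2", ("r1", "r0"), 1),
    Instr("mov", "r3", (), 1),
]

for name, stall in annotate_waits(program):
    print(f"{name}: wait {stall} cycles before issue")
```

The point of the sketch is the trade-off described above: the waits are fixed when the program is compiled, so the hardware does not need to track dependencies itself, but the schedule cannot adapt if real latencies differ from what the compiler assumed.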

EU Throughput: By the Numbers

Now that we’ve had a chance to see all the changes made at the EU level, let’s talk about what this means for the actual throughput rates of the EUs.

Intel GPU Compute Throughput Rates (FMA, Ops/clock/EU)

| | Xe-LP | Gen11 |
|---|---|---|
| FP32 | 16 | 16 |
| FP16 | 32 | 32 |
| INT32 | 8 | 4 |
| INT16 | 32 | 16 |
| INT8 | 64 (DP4A) | N/A |

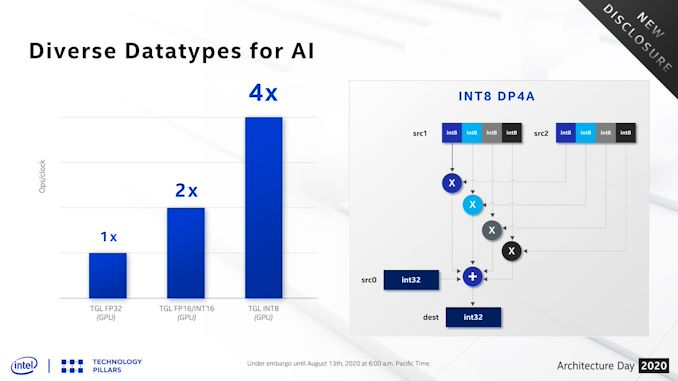

Starting with floating point, things are simple here. Despite the ALU reorganization, the number of FP ALUs per EU is still 8. And as a result, FP throughput per EU remains at 16 FP32 ops/clock and 32 FP16 ops/clock, just like Gen11.

For integer throughput on the other hand, the number of integer-capable ALUs has been doubled from 4 to 8 relative to the Gen11 architecture. As a result, integer throughput has also doubled: Xe-LP can put away 8 INT32 ops or 32 INT16 ops per clock cycle, up from 4 and 16 respectively on Gen11. This does mean, however, that Xe-LP retains Gen11’s unusual INT32 handicap; the INT32 rate is only half the FP32 rate, whereas the INT16 rate is equal to the FP16 rate.
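For a sense of scale, the per-EU rates can be multiplied out across a whole GPU. The sketch below copies the FP and INT rates from the table above and uses the 96 EU count mentioned earlier in the article; the 1.0 GHz clock is a placeholder assumption, not a figure from Intel, and the function names are hypothetical.

```python
# Per-EU rates from the table above (ops/clock/EU, with an FMA counted as 2 ops).
RATES = {
    "Xe-LP": {"FP32": 16, "FP16": 32, "INT32": 8, "INT16": 32},
    "Gen11": {"FP32": 16, "FP16": 32, "INT32": 4, "INT16": 16},
}

XE_LP_EU_COUNT = 96           # EU count quoted in the article
HYPOTHETICAL_CLOCK_GHZ = 1.0  # assumption for illustration only


def gpu_ops_per_clock(arch: str, data_type: str, eu_count: int) -> int:
    """Whole-GPU operations per clock for a given architecture and data type."""
    return RATES[arch][data_type] * eu_count


def peak_tera_ops(arch: str, data_type: str, eu_count: int, clock_ghz: float) -> float:
    """Peak tera-ops per second: ops/clock * (GHz * 1e9 clocks/s) / 1e12."""
    return gpu_ops_per_clock(arch, data_type, eu_count) * clock_ghz / 1000.0


for data_type in RATES["Xe-LP"]:
    per_clock = gpu_ops_per_clock("Xe-LP", data_type, XE_LP_EU_COUNT)
    tera = peak_tera_ops("Xe-LP", data_type, XE_LP_EU_COUNT, HYPOTHETICAL_CLOCK_GHZ)
    ratio = RATES["Xe-LP"][data_type] / RATES["Gen11"][data_type]
    print(f"{data_type}: {per_clock} ops/clock, {tera:.3f} Tops/s at the assumed clock, "
          f"{ratio:.1f}x Gen11 per EU")
```

With 96 EUs this works out to 1,536 FP32 ops per clock, so each 1 GHz of clockspeed would correspond to roughly 1.5 TFLOPS of peak FP32 throughput.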

Finally, it’s worth noting that Xe-LP doesn’t have anything equivalent to a tensor core or other systolic array of ALUs for doing dense math, which have become all the rage for neural network training and inference. This hardware will be coming to the Xe family in later parts as the Xe Matrix eXtensions (XMX), but for now Xe-LP has to make do with its regular EUs.

But for that reason, Intel has added one more feature to their EU SIMD: support for INT8 dot products. INT8 has become increasingly popular for neural network inference over the past few years, and dot products are in turn a very common operation in that process. So adding support for INT8 dot products gives Xe-LP a big boost in this form of AI execution. With the DP4A instruction, the INT8 throughput rate can get as high as 64 ops/clock, twice the INT16 rate.
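For readers unfamiliar with the operation, a DP4A-style instruction takes two groups of four 8-bit integers, multiplies them pairwise, and adds the four products to a 32-bit accumulator. The sketch below is a plain software model of that idea, not Intel's instruction definition: the function name is hypothetical, and wrapping on overflow (rather than saturating) is an assumption.

```python
def dp4a(a, b, acc):
    """Software model of a 4-element INT8 dot product with 32-bit accumulate.

    a, b: sequences of four signed 8-bit integers (-128..127).
    acc:  signed 32-bit accumulator value.
    Overflow handling (wrap-around) is an assumption of this sketch.
    """
    if len(a) != 4 or len(b) != 4:
        raise ValueError("dp4a operates on exactly four element pairs")
    for value in list(a) + list(b):
        if not -128 <= value <= 127:
            raise ValueError("inputs must fit in a signed 8-bit integer")
    total = acc + sum(x * y for x, y in zip(a, b))
    # Wrap the result into the signed 32-bit range.
    return ((total + 2**31) % 2**32) - 2**31


# One plausible accounting for the 64 ops/clock figure (an assumption):
# 4 multiplies + 4 adds per lane, across the 8 lanes of the SIMD8 block.
OPS_PER_LANE = 4 + 4
LANES = 8
print(dp4a([1, 2, 3, 4], [5, 6, 7, 8], 10))
print(OPS_PER_LANE * LANES)
```

Counting each multiply and each add as one operation gives 8 ops per lane, which across 8 lanes lines up with the 64 ops/clock rate in the table.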

33 Comments

mode_13h - Thursday, August 13, 2020 - link

As always, thanks for the deep coverage.

Not finished reading, but I already have one complaint:

> Gen11’s smallest wavefront width is 8 threads wide (SIMD8), so it can take multiple clock cycles to execute a single wavefront, with Intel interleaving multiple threads as a form of latency hiding.

Wow. Mixing 2 different definitions of "thread" in the same sentence? Please don't.

Last I checked Nvidia is the only one talking about SIMD lanes as if they're threads. In Intel's Gen 9 whitepaper, it uses "threads" in a manner equivalent to CPU threads, and they talk about SIMD lanes as SIMD lanes.

And speaking of Gen 9, they claim it has 7-way SMT. Did they ever specify this, for Gen 11? I don't recall seeing it in their Gen 11 whitepaper, which went into significantly less detail on the EUs than previous whitepapers.

mode_13h - Thursday, August 13, 2020 - link

I guess your article could be self-consistent by replacing the second use of "thread" in that quoted sentence with "wavefront"?

Although, "wavefront" is an AMD term (Nvidia calls them "Warps"). However, Intel's slides suggest they still call them "threads".

Ryan Smith - Thursday, August 13, 2020 - link

"I guess your article could be self-consistent by replacing the second use of "thread" in that quoted sentence with "wavefront"?"

You are correct sir! That was supposed to be "wavefront".

And Intel tends to use "wave" in its literature, though I prefer to collapse it down to just wavefront to keep things reasonably consistent. We don't need 2 nearly-identical terms for the same thing.

mode_13h - Thursday, August 13, 2020 - link

Cool. Thanks for the reply!

BTW, I don't mind the term "wavefront" - I said that more to point it out to those who might not know.

mode_13h - Thursday, August 13, 2020 - link

IMO, the reason Nvidia has long called their Warp elements "threads" is so they can claim that each SIMD lane is a "core", to make their GPUs *sound* more impressive.

Since Volta finally fixed their per-lane IP register (which is basically just a fancy form of branch predication), there's almost a touch of truth in that characterization, and I'd finally agree that their ISA is more than just a straight-forward combination of SIMD + SMT.

xenol - Thursday, August 13, 2020 - link

AMD feels more confusing. Their base unit is a "stream processor" which seems to suggest something larger than it really is. But a group of stream processors is called a Compute Unit, which that seems to suggest something smaller than it really is.

Though looking at some of the programming literature for GPUs, I can see where the "thread" terminology comes from. So this looks more like a problem of someone coming up with their own language instead of the industry coming together to standardize on it. However, given that NVIDIA, AMD, and Intel have their own way of doing things, it may not be possible to do that and for the sake of clarity, having their own terminology is more or less correct.

mode_13h - Thursday, August 13, 2020 - link

Since Nvidia's Fermi and AMD's GCN, their architectures basically amount to SIMD + SMT. I'm not sure exactly when Intel added SMT.

Anyway, I wouldn't characterize their architectures as fundamentally different. Intel is traditionally the most distinct, among the three.

jim bone - Friday, August 14, 2020 - link

recent editions of Hennessy and Patterson have a nice table mapping the CPU terminology to nvidia’s GPU terminology:

https://books.google.ca/books?id=cM8mDwAAQBAJ&...

jim bone - Friday, August 14, 2020 - link

and yes for reasons nvidia calls a vertical slice of simd instructions a thread

kpx86 - Thursday, August 13, 2020 - link

I believe the SW libraries like DirectX and OpenGL use threads this way.

From MSFT website: The maximum number of threads is limited to D3D11_CS_4_X_THREAD_GROUP_MAX_THREADS_PER_GROUP (768) per group.