Hot Chips 31 Live Blogs: Intel Optane

by Dr. Ian Cutress on August 19, 2019 2:55 PM EST

02:55PM EDT - This year at Hot Chips, Intel is presenting the latest updates to its Optane DCPMM strategy.

02:57PM EDT - Intel Optane DC Persistent Memory

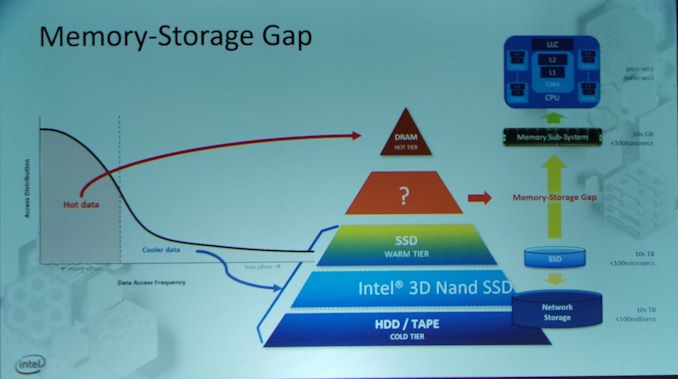

02:57PM EDT - The memory-storage gap slide where Optane goes

02:57PM EDT - Oh wow, they've made the same mistake again. This machine doesn't have the Intel font so everything looks off

02:59PM EDT - SSD-like capacity to DRAM

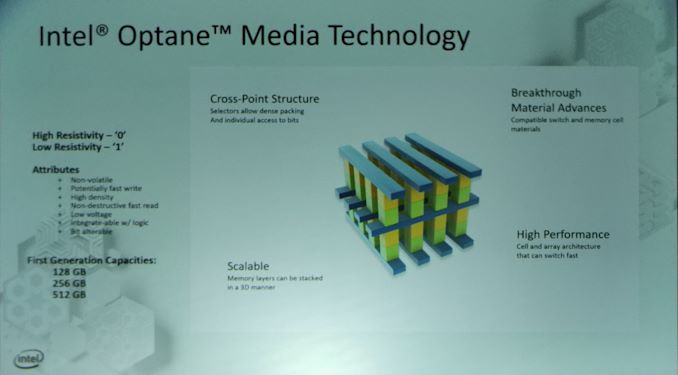

03:00PM EDT - We've heard the architecture of Optane before - cross-point structure, non-volatile, bit-alterable, up to 512GB in first generation

03:00PM EDT - Designed to scale in layers

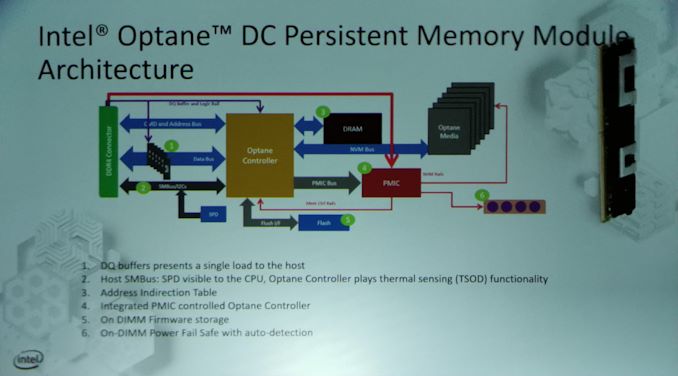

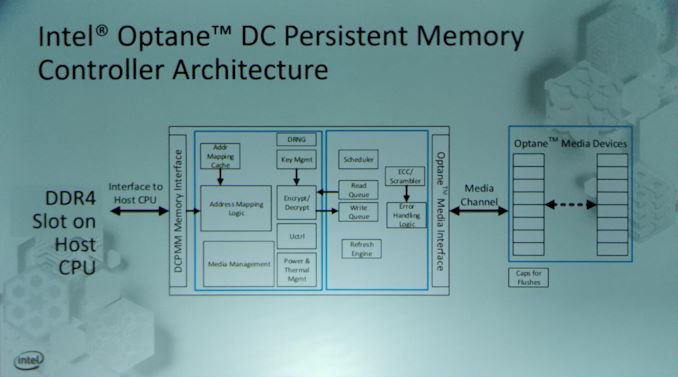

03:02PM EDT - DRAM module architecture

03:03PM EDT - Sorry the picture is bad, click through for full resolution

03:03PM EDT - Onboard Optane controller with firmware and integrated PMIC

03:04PM EDT - Built in ECC

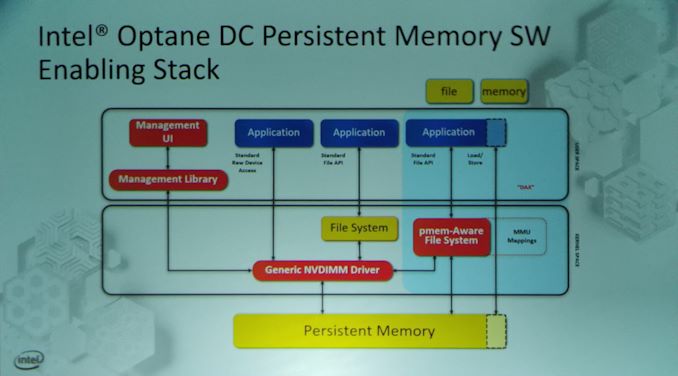

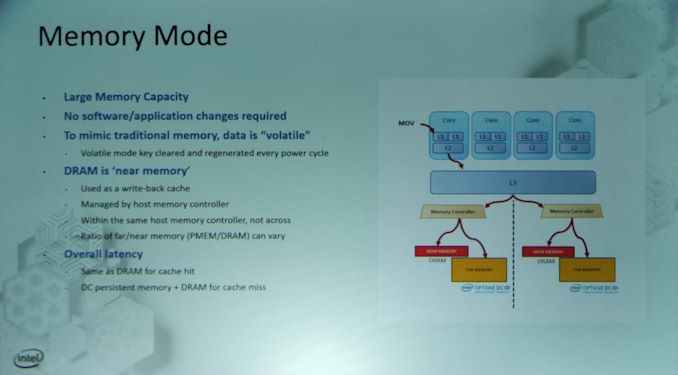

03:05PM EDT - Two modes, Storage mode or Memory mode

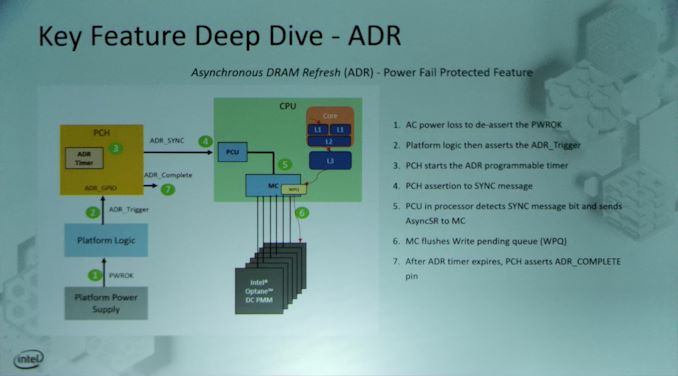

03:05PM EDT - Asynchronous DRAM Refresh (ADR) - protection against power failure

03:07PM EDT - In memory mode, DRAM is used as a write-back cache and treated like a near memory. Optane is far memory.

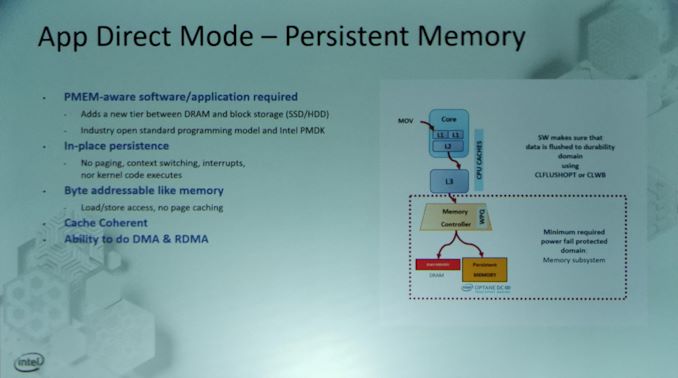

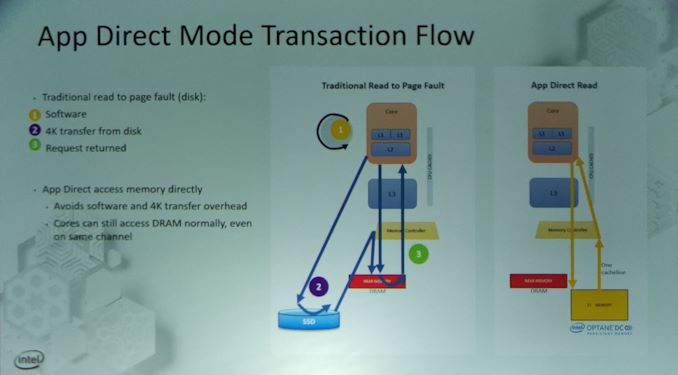

03:07PM EDT - Software/App Direct Mode presents the Optane as storage

03:08PM EDT - Support for DMA and RDMA, cache coherent, byte-addressable, in-place persistence
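To make "byte-addressable, in-place persistence" a little more concrete, here is a minimal sketch of the programming style it implies: map a region, change a few bytes where they live, then flush. It is not Intel's API. The `update_counter` helper and the file layout are made up for illustration, and an ordinary temporary file stands in for persistent memory so the example runs anywhere. On a real system the file would presumably sit on a DAX-mounted persistent-memory filesystem.

```python
import mmap
import os
import struct
import tempfile

def update_counter(path: str, size: int = 4096) -> int:
    """Increment an 8-byte counter stored at offset 0 of a memory-mapped file.

    Hypothetical helper: the counter is updated in place through the mapping,
    with no read()/write() calls and no serialisation step.
    """
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        os.ftruncate(fd, size)  # ensure the file is large enough to map
        with mmap.mmap(fd, size) as region:
            (value,) = struct.unpack_from("<Q", region, 0)  # load 8 bytes
            struct.pack_into("<Q", region, 0, value + 1)    # store 8 bytes in place
            region.flush()  # ask the OS to make the update durable
            return value + 1
    finally:
        os.close(fd)

if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        demo_path = os.path.join(tmp, "counter.bin")
        print(update_counter(demo_path))
        print(update_counter(demo_path))
```

The second call sees the value written by the first, because the data lives in the mapped region itself rather than in a separate in-memory copy.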

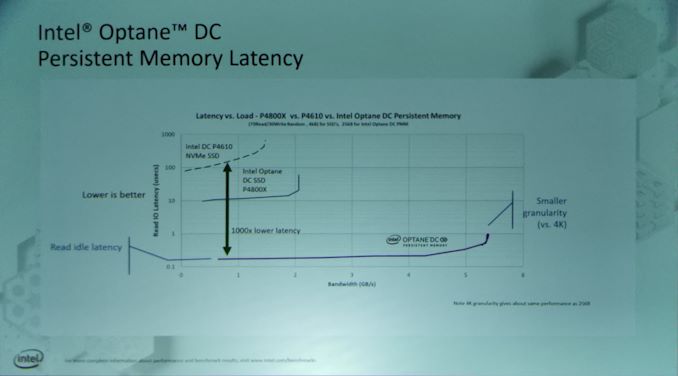

03:08PM EDT - Now Performance

03:08PM EDT - Latency vs SSD

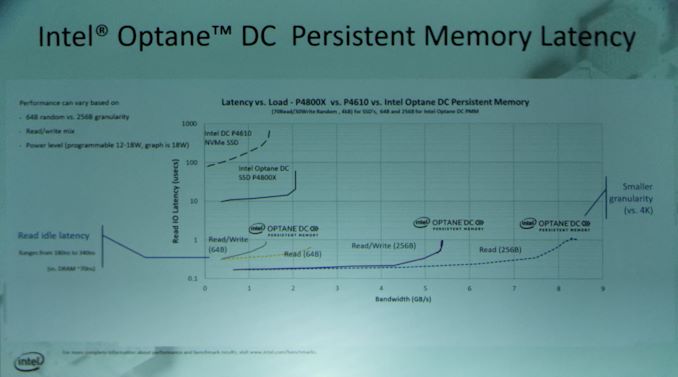

03:09PM EDT - 100 microseconds for normal SSD vs Optane SSD (10 microseconds) vs Optane DCPMM (0.2 microseconds)
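As a quick sanity check on those figures, the ratios can be worked out directly. This is only arithmetic on the three numbers quoted above; the dictionary keys and the `speedup` helper are names made up for this sketch.

```python
# Latencies as quoted in the talk, converted to nanoseconds so the math stays in integers.
LATENCY_NS = {
    "NAND SSD": 100_000,   # 100 microseconds
    "Optane SSD": 10_000,  # 10 microseconds
    "Optane DCPMM": 200,   # 0.2 microseconds
}

def speedup(slower: str, faster: str) -> float:
    """How many times lower the latency of `faster` is compared with `slower`."""
    return LATENCY_NS[slower] / LATENCY_NS[faster]

if __name__ == "__main__":
    for name in ("Optane SSD", "Optane DCPMM"):
        print(f"NAND SSD vs {name}: {speedup('NAND SSD', name):.0f}x")
```

By these numbers the Optane SSD is 10x quicker than a normal SSD, and the DCPMM is 500x quicker.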

03:09PM EDT - 180 nanoseconds vs 70 on DRAM

03:10PM EDT - This is a R/W mix of traffic

03:10PM EDT - Read idle latency peaks at 340ns

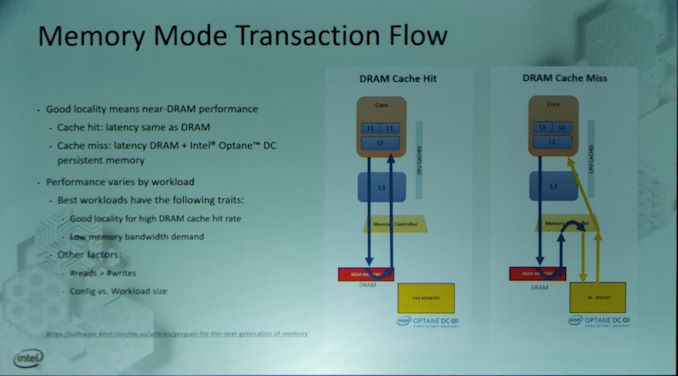

03:11PM EDT - Memory Mode transaction flow between CPU and DCPMM vs standard

03:11PM EDT - DRAM cache hit vs cache miss

03:12PM EDT - transparent to software
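A rough way to reason about the hit/miss flow is a weighted average of the two paths. The sketch below is a simplistic model, not Intel's data. It takes the 70 and 180 figures quoted earlier as nanoseconds (consistent with the 0.2 microsecond DCPMM number) and assumes a miss pays for the DRAM lookup plus the Optane access. The function name and the hit rates are illustrative.

```python
def effective_latency_ns(hit_rate: float,
                         near_ns: float = 70.0,
                         far_ns: float = 180.0) -> float:
    """Average read latency when DRAM acts as a cache in front of Optane.

    hit_rate is the fraction of accesses served from the DRAM cache.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    # Assumption: a miss first checks DRAM, then goes out to Optane.
    miss_ns = near_ns + far_ns
    return hit_rate * near_ns + (1.0 - hit_rate) * miss_ns

if __name__ == "__main__":
    for rate in (1.0, 0.9, 0.5, 0.0):
        print(f"hit rate {rate:.0%}: {effective_latency_ns(rate):.0f} ns")
```

Under these assumptions a workload that mostly fits in the DRAM cache sees close to DRAM latency, and the average climbs as the miss rate grows.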

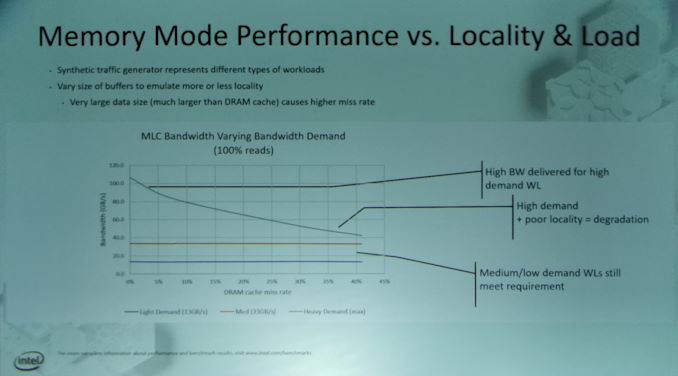

03:13PM EDT - Bandwidth is near DRAM; as you miss, there is some degradation

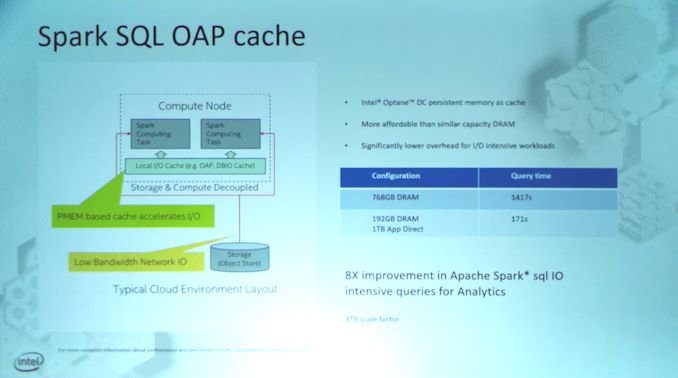

03:14PM EDT - A lot of workloads don't require full bandwidth all the time

03:14PM EDT - Up to 6TB in dual socket
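The 6TB figure lines up with the 512GB module size mentioned earlier if each socket carries six modules. The six-per-socket count is an assumption here (one module per memory channel), not something stated on the slide, and the helper name is made up for this sketch.

```python
def optane_capacity_tb(sockets: int = 2,
                       modules_per_socket: int = 6,  # assumption: one module per memory channel
                       module_gb: int = 512) -> float:
    """Total Optane capacity in TB (1 TB = 1024 GB) for a given configuration."""
    return sockets * modules_per_socket * module_gb / 1024

if __name__ == "__main__":
    print(f"Dual socket: {optane_capacity_tb():.0f} TB")
```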

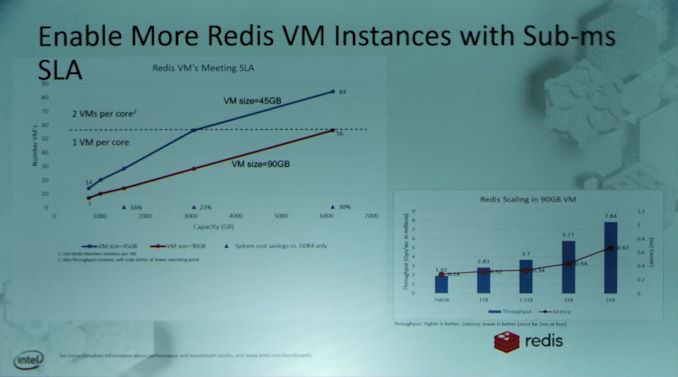

03:15PM EDT - Enables consolidation with larger memory per server

03:15PM EDT - Increases virtualized capacity based on memory

03:16PM EDT - Redis examples show under 1ms SLA quality even with max VMs in 6TB setup

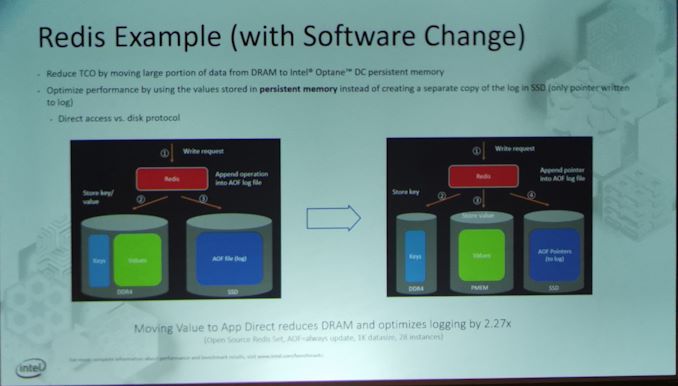

03:17PM EDT - App Direct Mode

03:18PM EDT - To take full advantage of App Direct, software adjustments are required

03:19PM EDT - In Redis with logging enabled, moving the Value section to App Direct Optane reduces DRAM use and optimizes logging by 2.27x

03:21PM EDT - Q&A time

03:24PM EDT - Q: Spare capacity? A: There is spare capacity and wear levelling across all chips

03:25PM EDT - Q: Can you bring the Optane on package with CPU? A: It's possible, but not today

03:28PM EDT - Q: Why is 64B a slower latency than 256B? A: Our buffers are based on 256B, so on 64B reads you get buffering which increases latency. If you run at 256B, you get additional benefits from our buffer sizes
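One way to picture that answer is to count how many 256B buffers a request touches and how much of the fetched data is actually used. This is an illustrative model only, assuming every request pulls whole 256B buffers; it says nothing about how Intel's controller really schedules accesses, and the function names are made up.

```python
BUFFER_BYTES = 256  # buffer size quoted in the answer

def buffers_touched(offset: int, length: int, buffer_bytes: int = BUFFER_BYTES) -> int:
    """Number of whole buffers covered by a read of `length` bytes at `offset`."""
    if length <= 0:
        raise ValueError("length must be positive")
    first = offset // buffer_bytes
    last = (offset + length - 1) // buffer_bytes
    return last - first + 1

def useful_fraction(offset: int, length: int, buffer_bytes: int = BUFFER_BYTES) -> float:
    """Share of the fetched bytes that the request actually asked for."""
    return length / (buffers_touched(offset, length, buffer_bytes) * buffer_bytes)

if __name__ == "__main__":
    for size in (64, 128, 256):
        print(f"{size}B aligned read uses {useful_fraction(0, size):.0%} of what is fetched")
```

In this model an aligned 64B read uses a quarter of the buffer it pulls in, while a 256B read uses all of it.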

03:28PM EDT - That's a wrap. Time for lunch. The next live blog is on MLPerf at 3:15pm PT.

21 Comments

azfacea - Monday, August 19, 2019 - link

we knew intel couldnt make sillicon but looks like they cant even make slides anymore LUL

vFunct - Monday, August 19, 2019 - link

These are phenomenal for databases, since databases are random access lookups. I'd like to see Optane DCPMM benchmarks with Postgres.

johannesburgel - Monday, August 19, 2019 - link

At the current price levels the only use case for Optane DIMMs is restarting the database system or the whole server system regularly. In all other cases a couple of NVMe SSDs and more RAM are much cheaper. This has been true since Optane DIMMs came to market.

vFunct - Monday, August 19, 2019 - link

That's a use case for small-scale workstations. For price-doesn't-matter servers, Optane Memory means data sets stored in much bigger main memory. And eventually the prices will go down as well.

azfacea - Monday, August 19, 2019 - link

It wont matter if the storage across the network. It would only be faster if its instance storage and you are commiting to a single node. the moment you wanna commit to 2 or more nodes (mongodb replicasets for example) or storage is across the network (AWS RDS) it wont matter. Faster transaction processing is purely theoretical and completely meaningless in practice. Optane's only advantage is 2x better density for a 3x worse latency. and with dram prices where they are its not enough to make ppl rewrite software.

azfacea - Monday, August 19, 2019 - link

correction:

+2x better density

-3x worse latency

-10x worse random bandwidth

- who knows about wear and tear (much worse than DRAM)

azfacea - Monday, August 19, 2019 - link

why no edit feature?

III-V - Monday, August 19, 2019 - link

Am I reading this correctly? They're just re-iterating the benefits of Gen 1 3D XPoint? Good grief, everything at Intel's delayed, not just stuff relying on 10nm.

azfacea - Monday, August 19, 2019 - link

its actually an order magnitude downgrade on what they were saying in 2015/16

extide - Monday, August 19, 2019 - link

Note really -- they said 1000x earlier, and they are showing 500x here: "100 microseconds for normal SSD vs Optane SSD (10 microseconds) vs Optane DCPMM (0.2 microseconds)"