Marrying Vega and Zen: The AMD Ryzen 5 2400G Review

by Ian Cutress on February 12, 2018 9:00 AM EST

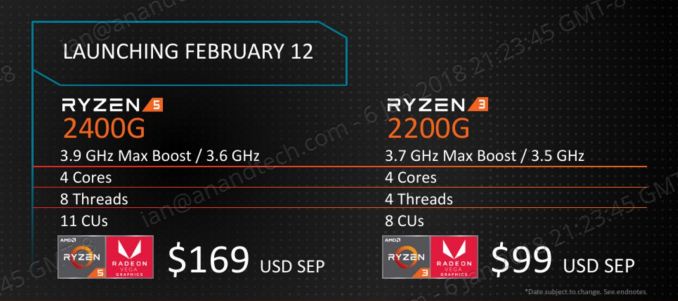

AMD's new APU launch caps the 2017 designs that turned the balance sheet black. After a return to high performance on x86 with the Ryzen CPU product line, and the 'we can't produce enough' Vega graphics, AMD has introduced several product lines that combine the two. Today is the launch of the desktop socket edition APUs, with four Zen cores and up to 11 Vega compute units. AMD has historically been aggressive in the low-end desktop space, effectively killing the sub-$100 discrete graphics market. The new APUs now set the bar even higher. In this review we focus on the Ryzen 5 2400G, but also test the Ryzen 3 2200G.

Ryzen 5 2400G and Ryzen 3 2200G: The Ryzen 2000 Series

The two APUs that AMD is launching today are the Ryzen 5 2400G, a $169 14nm quad-core Zen processor with simultaneous multithreading and ‘Vega 11’ graphics, and the Ryzen 3 2200G, a $99 14nm quad-core Zen processor without simultaneous multithreading and with ‘Vega 8’ graphics. Both parts are distinguished from the Ryzen processors without integrated graphics by the ‘G’ suffix, which is similar to how Intel is marketing its own Vega-enabled processors.

| AMD Ryzen 2000-Series APUs | Ryzen 5 2400G with Vega 11 | Ryzen 3 2200G with Vega 8 |
|---|---|---|
| CPU Cores/Threads | 4 / 8 | 4 / 4 |
| Base CPU Frequency | 3.6 GHz | 3.5 GHz |
| Turbo CPU Frequency | 3.9 GHz | 3.7 GHz |
| TDP @ Base Frequency | 65 W | 65 W |
| Configurable TDP | 46-65 W | 46-65 W |
| L2 Cache | 512 KB/core | 512 KB/core |
| L3 Cache | 4 MB | 4 MB |
| Graphics | Vega 11 | Vega 8 |
| Compute Units | 11 CUs | 8 CUs |
| Streaming Processors | 704 SPs | 512 SPs |
| Base GPU Frequency | 1250 MHz | 1100 MHz |
| DRAM Support | DDR4-2933 Dual Channel | DDR4-2933 Dual Channel |
| OPN PIB | YD2400C4FBBOX | YD2200C5FBBOX |
| OPN Tray | YD2400C5M4MFB | YD2200C4M4MFB |
| Price | $169 | $99 |
| Bundled Cooler | AMD Wraith Stealth | AMD Wraith Stealth |

Most of the following analysis in this section was taken from our initial APU Ryzen article.

Despite the Ryzen 5 2400G being classified as a ‘Ryzen 5’, the specifications of the chip are pretty much the peak specifications that the silicon is expected to offer. AMD has stated that at this time no Ryzen 7 equivalent is planned. The Ryzen 5 2400G has a full complement of four cores with simultaneous multi-threading, and a full set of 11 compute units on the integrated graphics. This is one compute unit more than the Ryzen 7 2700U Mobile processor, which only has 10 compute units but is limited to 15W TDP. The 11 compute units for the 2400G translate to 704 streaming processors, compared to 640 SPs on the Ryzen 7 2700U or 512 SPs on previous generation desktop APUs: an effective automatic 37.5% increase from generation to generation of desktop APU without factoring in the Vega architecture or the frequency improvements.
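The stream-processor arithmetic in this paragraph can be checked with a short sketch. It assumes 64 streaming processors per compute unit, which is the ratio implied by the specification table (11 CUs for 704 SPs, 8 CUs for 512 SPs); the function names are illustrative only.

```python
# Assumption: 64 streaming processors (SPs) per compute unit (CU),
# the ratio implied by the spec table (11 CUs -> 704 SPs, 8 CUs -> 512 SPs).
SPS_PER_CU = 64


def streaming_processors(compute_units: int) -> int:
    """Return the SP count for a given number of compute units."""
    return compute_units * SPS_PER_CU


def percent_increase(new: float, old: float) -> float:
    """Return the relative increase of `new` over `old`, in percent."""
    return (new - old) / old * 100.0


ryzen_5_2400g = streaming_processors(11)
ryzen_7_2700u = streaming_processors(10)
previous_desktop_apu = 512  # SP count quoted in the text

print(f"Ryzen 5 2400G: {ryzen_5_2400g} SPs, Ryzen 7 2700U: {ryzen_7_2700u} SPs")
print(f"vs previous desktop APUs: +{percent_increase(ryzen_5_2400g, previous_desktop_apu):.1f}%")
print(f"vs Ryzen 7 2700U: +{percent_increase(ryzen_5_2400g, ryzen_7_2700u):.1f}%")
```

This compares raw SP counts only; it says nothing about per-SP throughput, clock speed, or memory bandwidth, all of which also differ between these parts.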

The integrated graphics frequency will default to 1250 MHz and the total chip TDP is 65W. Maximum supported memory frequency will vary depending on how much memory is used and what type, but AMD lists DDR4-2933 as the support for one single-sided module per channel. Aside from the full set of hardware, the CPU frequency of the 2400G is very high, similar to the standard Ryzen 7 desktop processors: a base frequency of 3.6 GHz and a turbo of 3.9 GHz will leave little room for overclocking. (Yes, that means these chips are overclockable.)

The Ryzen 5 2400G effectively replaces the Ryzen 5 1400 at the $169 price point. Both chips will continue to be sold, but at this price point AMD will be promoting the 2400G over the 1400. The 2400G has a higher set of frequencies (3.6 GHz vs 3.2 GHz base frequency, 3.9 GHz vs 3.4 GHz turbo frequency), higher memory support (DDR4-2933 vs DDR4-2666), and no cross-CCX latency between sets of cores, but has less L3 cache per core (1 MB vs 2 MB). In virtually all scenarios, even if a user does not use the Ryzen 5 2400G integrated graphics, the Ryzen 5 2400G seems the better option on paper.

The cheaper $99 processor is the Ryzen 3 2200G. The specifications follow the other Ryzen 3 processors already in the market: four cores, and no simultaneous multi-threading. The rated frequencies, 3.5 GHz for base and 3.7 GHz for turbo, are slightly below those of the Ryzen 5 2400G but are still reasonably high. With this chip rated for 65W, the same as the Ryzen 5 2400G, users might expect it to turbo for longer within its power window as long as it stays within its thermal boundaries (we do see this in some benchmarks in the review). The suggested retail price of $99 means that this is the cheapest Ryzen desktop processor on the market, and it crosses a fantastic line for consumers: four high-performance x86 cores under the $100 mark. The integrated graphics provide 512 streaming processors, identical to the $169 processors from previous generations, but this time upgraded with the Vega architecture.

Within the presentations at Tech Day, AMD typically provides plenty of performance data from their own labs. Of course, we prefer to present our own data obtained in our labs, but combing through AMD’s numbers provided a pointed example of just how confident AMD is in even its low-end unit: using the 3DMark 11 Performance benchmark, the Ryzen 3 2200G (according to AMD) scored 3366 points, while on the same benchmark Intel’s best integrated graphics offering, the Core i7-5775C with embedded DRAM, scored only 3094. If we took this data point as the be-all and end-all, it would come across that AMD has broken Intel’s integrated graphics strategy. We have some other interesting numbers in today’s review.

One of the other important elements to the Ryzen APU launch is that both processors, including the Ryzen 3 2200G for $99, will be bundled with AMD’s revamped Wraith Stealth (non-RGB) 65W cooler. This isn’t the high-end AMD cooler, but as far as stock coolers go, it easily introduces a $30 saving to any PC build, reducing the need to buy a hefty standard cooler.

Combining Performance with Performance: A Winning Strategy (on Paper)

Over the last 10 years, joining a CPU and a GPU together, either as two bits of silicon in a package or both on the same bit of silicon, filled a hole that boosted the low-end market. It completely cut the need for a discrete graphics card if all a user needed was a basic desktop experience. This also had a knock-on effect for mobile devices, reducing the total power requirements even under light workload scenarios. Since then, however, integrated graphics have been continually asked to do more. Aside from 2D layering, we are now asking them to deal with interactive webpages, new graphics APIs, and new video decode formats. The march to higher resolution displays means that new, complex ways of encoding video information have been developed to minimize file size while keeping quality. These can stretch a basic integrated graphics solution, resulting in dedicated decode hardware being added to later versions of the hardware.

The Sisyphean task, the Holy Grail for graphics, has always been gaming. Higher fidelity, higher resolutions, and more immersive environments like virtual reality are well beyond the purview of integrated graphics. For the most part, the complex tasks still are today - don't let me fool you on this. But AMD did set out to change the status quo when it introduced its later VLIW designs, followed by its GCN graphics architecture, several generations ago. The argument at the time was that most users were budget limited, and by saving money with a decent integrated graphics solution, the low-end gamer could get a much better experience. This did seem odd at the time, given AMD's success in the low-end discrete graphics market - they were cannibalizing sales of one product for another with a more complex design and lower margins. This was clearly apparent during our review analysis at the time.

Over several years of Bulldozer processing cores and integrated graphics designs, AMD competed on two main premises: performance per dollar, and peak performance. In this market the competition was Intel, with its 'Gen' graphics design. Both companies made big strides in graphics, but a bifurcation soon started to develop: Intel's Gen graphics were easily sufficient for office work in mobile devices, were paired with a higher performance processor, and were more power efficient on the CPU side by a good margin. AMD competed more for desktop market share, where power limits were less of a concern, and gave similar or better peak graphics performance at a much lower cost. For the low-end graphics market, this suited them fine, although AMD was still behind on general CPU performance, which did put certain segments of users off.

What AMD did notice is that one of the limits for these integrated designs was memory bandwidth. For several years, they continually released products that had higher base memory support than Intel: when Intel still had DDR3-1600 listed as the supported frequency, AMD was moving up to DDR3-2133, which boosted graphics performance by a fair margin. You can see in our memory scaling article with Intel's Haswell products that DDR3-1600 was effectively a black hole for integrated graphics performance, especially when it came to minimum frame rates.
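To put these memory speeds in perspective, theoretical peak bandwidth can be estimated from the transfer rate. The sketch below assumes a standard 64-bit (8-byte) DDR channel and a dual-channel configuration, and it uses the nominal rate in each memory name; the function name is illustrative, and real-world throughput is lower than these peaks.

```python
# Assumptions: each DDR channel is 64 bits (8 bytes) wide and the platform
# runs two channels. These are theoretical peaks, not measured throughput.
BYTES_PER_TRANSFER = 8


def peak_bandwidth_gbs(mega_transfers_per_s: int, channels: int = 2) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    return mega_transfers_per_s * 1e6 * BYTES_PER_TRANSFER * channels / 1e9


# Nominal transfer rates (MT/s) taken from the memory names in the article.
for name, rate in [("DDR3-1600", 1600), ("DDR3-2133", 2133), ("DDR4-2933", 2933)]:
    print(f"{name}: {peak_bandwidth_gbs(rate):.1f} GB/s")
```

Because the integrated GPU shares this bandwidth with the CPU cores, each step up in supported memory speed raises the ceiling for both.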

At this stage in history, memory bandwidths to the CPU were around 20 GB/s, compared to discrete graphics that were pushing 250 GB/s. The memory bandwidth issue did not go unnoticed by Intel, and so with Broadwell they introduced the 'Crystalwell' line of Broadwell processors: these featured the largest implementation of Intel's latest graphics design, paired with embedded DRAM silicon in the package. This 'eDRAM', up to 128 MB of it, was a victim cache, allowing the processor to re-use data (like textures) that had already been fetched from memory and used, at a rate of 50 GB/s (bi-directional). The ability to hold data relevant to graphics rendering closer to the processor, at a higher bandwidth than main memory, paired with Intel's best integrated graphics design, heralded a new halo product in the category. This eDRAM processor line also gave speed-ups for other memory bandwidth limited tasks that reused data, as stated when we reviewed it. The big downside to this was price: adding a new bit of silicon to the package was, by some accounts, fairly cheap, but Intel sold the parts at a high premium, aimed at one specific customer with a fruit logo. Some parts were also made available to end-users, very briefly before being removed from sale, and it was quoted in other press that OEMs did not like the price.

AMD's response, given its R&D budgets and the manufacturing agreements it had in place, was not to compete with a similar technology. The solution with the resources at hand was to dedicate more silicon space to graphics. This meant the final APUs on the FM2+ platform, using Bulldozer-family CPU cores, offered 8 compute units (512 SPs) at a high frequency, with DDR3-2133 support, for under half the price. For peak performance, AMD was going toe-to-toe, but winning on price and availability.

Fast forward almost two years, to the start of 2018. Intel did have a second generation eDRAM product, where that 128 MB of extra memory acted like a true level 4 cache, allowing it to be used a lot more. However, the release was muted and very limited: for embedded systems only, and again, focused on one customer. The integrated graphics in other Intel solutions have focused more on video encode and decode support, rather than peak graphics performance. AMD had also released a platform only to OEMs, called Bristol Ridge. This used the latest Excavator-based Bulldozer-family cores, paired with 8 compute units (512 SPs) of GCN, but with DDR4-2133. The new design pushed integrated performance again, but AMD was not overly keen on promoting the line: it only had an official consumer launch significantly later, and no emphasis was placed in the media on its use. AMD has been waiting for the next generation product to make another leap in integrated graphics performance.

During 2017, AMD launched its Ryzen desktop processors, using the new Zen x86 microarchitecture. This was a return to high performance, with AMD quoting a 52% gain over its previous generation at the same frequency, by fundamentally redesigning how a core should be made. We reviewed the Ryzen 7 processor line, as well as Ryzen 5, Ryzen 3, Ryzen Threadripper, and the enterprise EPYC processors, all built with the same core layout, concluding that AMD now had a high-performance design within a shout of competing in a market that values single-threaded performance. AMD also heavily competed on performance per dollar, undercutting the competition and making the Ryzen family headline a number of popular Buyer's Guides, including our own.

AMD also launched a new graphics design, called Vega. AMD positioned the Vega products to be competitive against NVIDIA dollar for dollar, and although the power consumption for the high-end models (up to 64 compute units) was questionable, AMD currently cannot make enough Vega chips to fulfil demand, as certain workloads perform best on Vega. In a recent financial call, CEO Dr. Lisa Su stated that they are continually ramping (increasing) the production of Vega discrete graphics cards because of that demand.

Despite the power consumption for graphics workloads on the high-end discrete graphics, it has always been accepted that the peak efficiency point for the Vega design is something smaller and lower frequency. It would appear that Intel in part agrees with this statement, as it has recently introduced the Intel Core with Radeon RX Vega graphics processor, combining its own high-performance cores with a mid-sized Vega chip, powered by high-bandwidth memory. The reason for choosing an AMD graphics chip rather than rolling its own, according to Intel, is that it is the right part for that product segment.

Similar reasoning applies to today’s launch: combine a high-performance CPU core with a high-performance graphics core. For the new Ryzen Desktop APUs being launched today, AMD has combined four of its high-performance x86 Zen cores and a smaller version of Vega graphics into the same piece of silicon. As with all silicon manufacturing, the APU design has to hit the right point on performance, power, die area, and cost, and with these products AMD is focusing squarely on the entry-level gaming performance metric, for users that are spending $400-$600 on the entire PC, including motherboard, memory, case, storage, power supply, and operating system. The idea is that high-performance processor cores, combined with high-performance graphics, can create a product that has no equal in its market.

177 Comments

Gideon - Monday, February 12, 2018

BTW Octane 2.0 is retired for Google (just check their github), and even they endorse using Mozillas Speedometer 2.0 (darn can't find the relevant blog post).

Ian Cutress - Monday, February 12, 2018

I know; in the same way we have legacy benchmarks up, some people like to look at the data.

Not directed to you in general, but don't worry if 100% of the benchmarks aren't important to you: If there's 40 you care about, and we have 80 that include those 40, don't worry that the other 40 aren't relevant for what you want. I find it surprising how many people want 100% of the tests to be relevant to them, even if it means fewer tests. Octane was easy to script up and a minor addition, just like CB11.5 is. As time marches on, we add more.

kmmatney - Monday, February 12, 2018

In this case, a few 720p gaming benchmarks would have been useful, or even 1080p at medium or low settings.

III-V - Tuesday, February 13, 2018

Who uses 720p and is in the market for this?

PeachNCream - Tuesday, February 13, 2018

I'm happy with 1366x768 and I'm seriously considering the 2400G because it looks like it can handle max detail settings at that resolution. I'm not interested in playing at high resolutions, but I do like having all the other non-AA eye candy turned on.

atatassault - Tuesday, February 13, 2018

People who buy sub $100 monitors.

WorldWithoutMadness - Tuesday, February 13, 2018

Just google GDP per capita and you'll find huge market for 720p budget gaming pc.

Sarah Terra - Wednesday, February 14, 2018

Wow, i just came here after not visiting in ages, really sad to see how far this site has fallen.

Ian Cutress was the worst thing that ever happened to Anandtech.

At one point AT was the defacto standard for tech news on the web, but now it has simply become irrelevant.

Unless things change i see AT slowly but surely dying

lmcd - Friday, March 22, 2019

Wow, I just came to this article after not visiting for ages, really sad to see how the comment section has fallen

mikato - Thursday, February 15, 2018

Me. My TV is 720p and still kicking after many years. These CPUs would make for a perfect high end HTPC with some solid gaming ability. Awesome.