Dissecting Intel's EPYC Benchmarks: Performance Through the Lens of Competitive Analysis

by Johan De Gelas & Ian Cutress on November 28, 2017 9:00 AM EST - Posted in

- CPUs
- AMD
- Intel
- Xeon
- Skylake-SP
- Xeon Platinum
- EPYC
- EPYC 7601

Enterprise & Cloud Benchmarks

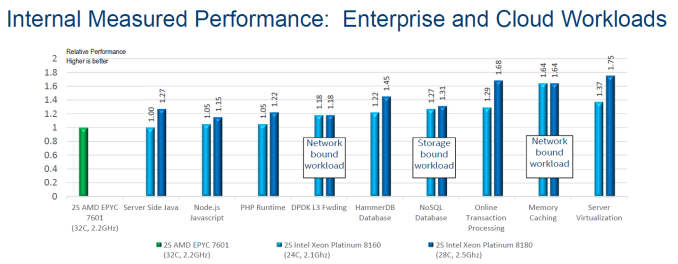

The chart shows Intel's internal benchmark numbers. The EPYC 7601 is the reference (performance = 1), the Xeon Platinum 8160 is represented by the light blue bars, and the top-of-the-line 8180 by the dark blue bars. On a performance-per-dollar basis, the light blue bars are the ones worth watching.

Java benchmarks are typically tuned to unrealistic levels, so the writing is on the wall when an experienced benchmarking team cannot make the Intel 8160 shine: it is highly likely that the AMD 7601 is faster in real-world use.

The Node.js and PHP runtime benchmarks are a very different story. Both are open-source server-side platforms used, for example, to generate dynamic page content. Intel uses a client load generator to create a realistic workload. In the PHP runtime test, MariaDB 10.2.8 (a MySQL derivative) is the backend.

In the Node.js test, MongoDB is the database. A Node.js server spawns many single-threaded processes, which is rather ideal for the AMD EPYC processor: each process keeps its data close to the core it runs on. These benchmarks are much harder to skew towards a particular CPU family. In fact, Intel's benchmarks seem to indicate that the AMD EPYC processors are pretty interesting alternatives. If Intel can only show a 5% advantage with a 10% more expensive processor, chances are that the two perform very much alike in the real world. In that case, AMD has a small but tangible performance-per-dollar advantage.
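A minimal sketch of the performance-per-dollar arithmetic behind that claim, treating the EPYC 7601 as the reference (performance = 1, price = 1) as Intel's chart does. The 1.05 and 1.10 inputs are the hypothetical "5% faster, 10% more expensive" case from the text, not measured prices, and `relative_perf_per_dollar` is an illustrative helper.

```python
def relative_perf_per_dollar(perf: float, price: float,
                             ref_perf: float = 1.0, ref_price: float = 1.0) -> float:
    """Performance per dollar of a part, normalized so the reference part = 1.0."""
    return (perf / price) / (ref_perf / ref_price)

# EPYC 7601 is the reference (performance = 1.0, price = 1.0).
# Hypothetical Xeon case: 5% faster (1.05) at a 10% higher price (1.10).
xeon = relative_perf_per_dollar(perf=1.05, price=1.10)

print(f"Xeon perf/$ relative to EPYC: {xeon:.3f}")       # 0.955
print(f"EPYC perf/$ advantage: {1 / xeon - 1:.1%}")       # 4.8%
```

A price premium larger than the performance lead always leaves the reference part ahead on this metric, which is why the light blue bars matter more than the dark blue ones.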

DPDK layer 3 network packet forwarding is what most of us know as routing IP packets. This benchmark is based on Intel's own Data Plane Development Kit, so it is not a valid benchmark for an AMD/Intel comparison.

We'll discuss the HammerDB, NoSQL, and transaction processing database workloads in a moment.

Intel recorded its second-largest performance advantage with memcached, the distributed object caching layer. As Intel notes, this is not a processing-intensive workload but a network-bound one. Because AMD puts four dies on each processor, and each die has its own subset of PCIe lanes, a dual-socket EPYC system behaves like a virtual 8-socket system, which likely puts AMD at a disadvantage here.
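To put the "virtual 8-socket" point in numbers, here is a deliberately simplified model (an assumption, not Intel's methodology): if packet-processing threads are spread evenly over all NUMA nodes with no NUMA-aware pinning, the share of packets handled away from the NIC's node is (N - 1)/N. It says nothing about how large the penalty of each remote hop is.

```python
from fractions import Fraction

def remote_fraction(numa_nodes: int) -> Fraction:
    """Share of packets processed on a node other than the NIC's node,
    assuming threads are spread uniformly and nothing is NUMA-pinned."""
    if numa_nodes < 1:
        raise ValueError("need at least one NUMA node")
    return Fraction(numa_nodes - 1, numa_nodes)

# Dual-socket Xeon: one NUMA node per socket.
xeon_2s = remote_fraction(2)
# Dual-socket EPYC: four dies per socket, each exposed as its own NUMA node.
epyc_2s = remote_fraction(2 * 4)

print(f"2S Xeon remote share: {float(xeon_2s):.1%}")  # 50.0%
print(f"2S EPYC remote share: {float(epyc_2s):.1%}")  # 87.5%
```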

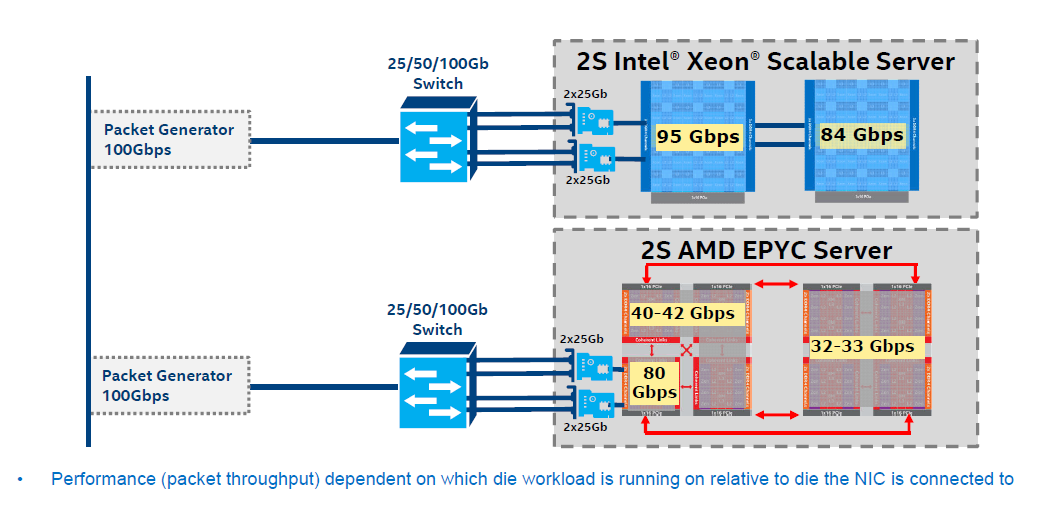

Intel's example of network bandwidth limitations in a pseudo-socket configuration

Suppose you have two NICs, which is very common. Data from the first NIC may, for example, arrive at NUMA node 1 on socket 1, only to be accessed by NUMA node 4 on the same socket, incurring additional latency. In Intel's case, you can dedicate one NIC to each socket. With AMD, this has to be programmed locally to ensure that packets arriving at each NIC are processed on the virtual node that NIC is attached to, and even then this might incur some additional slowdown.
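On Linux, that local programming largely comes down to finding the NUMA node a NIC is attached to and keeping the threads that serve it on that node's cores. The sketch below assumes a Linux host and uses the standard sysfs files `/sys/class/net/<iface>/device/numa_node` and `/sys/devices/system/node/node<N>/cpulist` plus Python's `os.sched_setaffinity`; the helper names are hypothetical, and steering the NIC's interrupts (IRQ affinity) is a separate step not shown here.

```python
from __future__ import annotations

import os
from pathlib import Path

def parse_cpulist(text: str) -> set[int]:
    """Parse a Linux cpulist string such as '0-7,64-71' into a set of CPU ids."""
    cpus: set[int] = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            lo, hi = part.split("-")
            cpus.update(range(int(lo), int(hi) + 1))
        else:
            cpus.add(int(part))
    return cpus

def nic_numa_node(iface: str) -> int:
    """NUMA node the NIC's PCIe device is attached to (-1 if the kernel doesn't know)."""
    return int(Path(f"/sys/class/net/{iface}/device/numa_node").read_text())

def node_cpus(node: int) -> set[int]:
    """CPUs belonging to one NUMA node, read from sysfs."""
    return parse_cpulist(Path(f"/sys/devices/system/node/node{node}/cpulist").read_text())

def pin_to_nic_node(iface: str) -> set[int]:
    """Restrict the calling process to the CPUs local to `iface`'s NUMA node."""
    node = nic_numa_node(iface)
    if node < 0:
        raise RuntimeError(f"kernel reports no NUMA locality for {iface}")
    cpus = node_cpus(node)
    if not cpus:
        raise RuntimeError(f"NUMA node {node} has no CPUs")
    os.sched_setaffinity(0, cpus)  # Linux-only; 0 means the calling process
    return cpus
```

A worker process serving a placeholder interface such as `eth0` would call `pin_to_nic_node("eth0")` at startup; running one such worker per NIC keeps each NIC's traffic on its own die.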

The real question is whether you should bother using a 2S system for memcached at all. After all, it is a distributed cache layer that scales well over many nodes, so we would prefer a more compact 1S system anyway. In fact, AMD might have an advantage here: in the real world, memcached deployments are limited more by RAM capacity than by network or CPU bottlenecks, and EPYC supports more memory per socket (eight memory channels versus six on Skylake-SP). Missing out on the additional RAM-as-cache is much more dramatic than waiting a bit longer for a cache hit from another server.
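The capacity argument can be checked with the usual weighted-average model of cache latency, average = h × t_hit + (1 - h) × t_miss. The latencies and hit ratios below are made-up illustrative values, not measurements from Intel's or our testing.

```python
def avg_lookup_ms(hit_ratio: float, hit_ms: float, miss_ms: float) -> float:
    """Expected lookup latency: hits come from the cache, misses go to the backend."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * hit_ms + (1.0 - hit_ratio) * miss_ms

# Illustrative numbers only, not measurements.
baseline = avg_lookup_ms(hit_ratio=0.95, hit_ms=0.5, miss_ms=10.0)
slower_hits = avg_lookup_ms(hit_ratio=0.95, hit_ms=0.6, miss_ms=10.0)    # hits 20% slower
smaller_cache = avg_lookup_ms(hit_ratio=0.90, hit_ms=0.5, miss_ms=10.0)  # 5 points fewer hits

for name, ms in [("baseline", baseline), ("slower hits", slower_hits),
                 ("smaller cache", smaller_cache)]:
    print(f"{name:13s} {ms:.3f} ms ({ms / baseline - 1:+.1%})")
```

With these assumed values, making every hit 20% slower adds under 10% to the average lookup time, while losing five points of hit ratio adds almost 50%.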

The virtualization benchmark is the most impressive one for the Intel CPUs: the 8160 shows a 37% performance improvement. We are willing to believe that all of Intel's virtualization improvements have found their way into the ESXi kernel and that the Xeon can deliver more performance. However, most virtualization systems run out of DRAM before they run out of CPU processing power. The benchmarking scenario also raises a big question mark: according to the footnotes on the slides, Intel achieved this victory by placing 58 VMs on the Xeon 8160 setup versus 42 VMs on the EPYC 7601 setup. This is a highly odd approach to this benchmark.
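If the chart's score is aggregate throughput across all VMs (an assumption; the slides' footnotes are only summarized here), the unequal VM counts nearly account for the gap. A minimal sketch normalizing the score per VM, using the 1.37 result and the 58/42 VM counts quoted above; `per_vm_ratio` is an illustrative helper.

```python
def per_vm_ratio(score_a: float, vms_a: int, score_b: float, vms_b: int) -> float:
    """Per-VM throughput of system A relative to system B, assuming each
    score is the aggregate across all VMs running on that system."""
    return (score_a / vms_a) / (score_b / vms_b)

XEON_SCORE, XEON_VMS = 1.37, 58  # Xeon 8160: +37% vs. EPYC, 58 VMs
EPYC_SCORE, EPYC_VMS = 1.00, 42  # EPYC 7601 reference, 42 VMs

print(f"VM count ratio: {XEON_VMS / EPYC_VMS:.3f}")  # 1.381
print(f"Per-VM ratio:   {per_vm_ratio(XEON_SCORE, XEON_VMS, EPYC_SCORE, EPYC_VMS):.3f}")  # 0.992
```

Under that assumption, the 37% lead shrinks to roughly parity per VM, which is why the difference in VM counts matters so much.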

Of course, EPYC's lack of a track record is a disadvantage in the more conservative (VMware-based) virtualization world anyway.

105 Comments


CajunArson - Tuesday, November 28, 2017

The whole "pricetag" thing is not really an issue when you start to look at what *really* costs money in many servers in the real world. Especially when you consider that there's really no need to pay for the highest-end Xeon Platinum parts to compete with Epyc in most real-world benchmarks that matter. In general, even the best Epyc 7601 is roughly equivalent to similarly priced Xeon Gold parts there.

If you seriously think that even $10000 for a Xeon Platinum CPU is "omg expensive"... try speccing out a full load of RAM for a two or four socket server sometime and get back to me with what actually drives up the price.

In addition to providing actually new features like AVX-512, Intel has already shown us very exciting technologies like Optane that will allow for lower overall prices by reducing the need to buy gobs and gobs of expensive RAM just to keep the system running.

eek2121 - Tuesday, November 28, 2017

One thing is clear: you've never touched enterprise hardware in your life. You don't build servers, you typically buy them from companies like Dell, etc. Also, RAM prices are typically not a big issue. Outfitting any of these systems with 128GB of ECC memory costs around $1,500 tops, and that's before any volume discounts the company in question may get. Altogether, a server with a 32-core AMD EPYC, 128GB of RAM, and an array of 2-4TB drives will cost under $10,000 and may typically be less than $8,000 depending on the drive configuration, so YES, price is a factor when the CPU makes up half the machine's budget.

CajunArson - Tuesday, November 28, 2017

"One thing is clear, you've never touched enterprise hardware in your life. You don't build servers, you typically buy them from companies like Dell, etc"

Well, maybe *you* don't build your own servers. But my point is 100% valid for pre-bought servers too; just check Dell's prices on RAM if you are such an expert.

Incidentally, you also fell into the trap of assuming that the MSRPs of Intel CPUs are what big companies like Dell are actually paying & charging. That clearly shows that *you* don't really know much about how the enterprise hardware market actually works.

eek2121 - Tuesday, November 28, 2017

As someone who has purchased servers from Dell for 'enterprise', I know exactly how much large enterprise users pay. I was using the prices quoted here for comparison. I don't know of a single large enterprise company that builds their own servers. I have a very long work history with multiple companies.

sor - Tuesday, November 28, 2017

There are plenty. I’ve been in data centers filled with thousands of Supermicro or Chenbro servers, built and maintained in-house. The cost savings are immense, even if you partner with a systems integrator to assemble and warranty the builds you design. Anyone doing scalable cloud/web/VM work probably has thousands of cheap servers rather than HP/Dell.

IGTrading - Tuesday, November 28, 2017

This is one thing I HATE :)

When AMD had total IPC and power consumption superiority, people said "yeah buh software's optimized for Intel so better buy Xeon".

When AMD had complete superiority with higher IPC and a platform so mature they were building Supercomputers out of it, people said "yeah buh Intel has a tiny bit better power consumption and over the long term..."

Over the long term "nothing" would be the truth. :)

Then Bulldozer came and AMD's IPC took a hit, power consumption as well, but they were still doing OK in general and had some applications where they did excel, such as encryption and integer calculations, plus they had the cost advantage.

People didn't even listen ... and went Xeon.

Now Intel comes and says that they've lost the power consumption crown, the core count crown, the PCIe I/O crown, the RAID crown, the RAM capacity crown, the FPU crown and the platform cost crown.

But they come with this compilation of particular cases where Xeon has a good showing and people say: "uh oh, you see ?! EPYC is still immature, we go Xeon".

What ?!

Is this even happening ?! How many crowns AMD needs to win to be accepted as the better choice overall ?! :)

Really ?! Intel writes a mostly marketing compilation of particular use cases and people take it as gospel ?!

Honestly ... how many crowns does AMD need to win ?! :)

In the end, I would like to point out that in an AMD EPYC article there was no mention of EPYC's two SPEC world records .....

Not that it does anything to affect Intel's particular benchmarking, but really ?!

You write an AMD EPYC article less than a week after HP announces two world records with EPYC and you don't even put it in the article ?!?

This is the nth AMD-bashing piece from AnandTech :(

I still remember how they said that AMD's R290 for 550 USD was "not recommended" despite beating nVIDIA's 1000 USD Titan because "the fan was loud" :)

This coming after nVIDIA's driver update resulting in dead GPUs ....

But AMD's fan was "loud" :)

WTF ?! ...

For 21 years I've been in this industry and I'm really tired of having to stomach these ...

And yeah... I'm not bashing AnandTech at all. I'll keep reading it like I have since 1998, when I first found it.

But I really see the difference between an independent AnandTech article and this one, where I'm VERY sure some Intel PR put in "a good word" and politely asked for a "positive spin".

0ldman79 - Tuesday, November 28, 2017

I agree with a lot of your points in general, though not really as directed toward AnandTech.

The article sounded pretty technical and unbiased, and the final page listed the facts: the server CPUs are similar, and Intel showed a lot of benches that show the similarities while ignoring the fact that their CPU costs twice as much.

In all things, which CPU works best comes down to the actual app used. I was browsing the benches the other day: the FX six-core actually beats the Ryzen quad- and six-core in a couple of benches (like literally one or two), so if that is your be-all end-all program, Ryzen isn't worth it.

So far it looks like AMD has a good server product. Much like the FX line, it looks like EPYC is going to be better at load bearing than maximum speed, and honestly I'm okay with that.

IGTrading - Friday, December 1, 2017

I've just realized something even more despicable in this marketing compilation of particular use cases:

1) Intel built a 2S Intel-based server that is comparable in price with the AMD build.

2) That Intel build gets squashed in almost all benchmarks or barely overtakes AMD in some.

3) But then Intel added, on all graphs, a build that is 11,000 USD more expensive and that also performed better, without clearly stating just how much more expensive that system is.

4) Also, Intel says that its per-core performance in some special use cases is 38% better, without saying that AMD offers 33% more cores that, overall, overtake the Intel build.

In conclusion, the more you look at it, the more this becomes clear as an elaborate marketing trick that has been treated like "technical" information by websites like AnandTech.

It is not. It is an elaborate marketing trick that doesn't clearly state that the system that looks so good in these particular benchmarks is 11,000 USD more expensive. That's over 60% extra money.

Like I've said, AnandTech needs to be more critical of these marketing ploys.

They should be informing and educating us, their readers, not dishing out marketing info and making it look technical and objective when it clearly is not.

Johan Steyn - Monday, December 18, 2017

The only reason I still sometimes read AnandTech's articles is because some of the readers, like you, are not stupid enough to fall for this rubbish. I get more info from the comments than from the articles themselves. WCCF has great news posts, but the comments are like from 12-year-olds.

AnandTech used to be a top-rated review site, and therefore some of the old die-hard readers are still commenting on these articles.

submux - Thursday, November 30, 2017

I think that the performance crown which AMD has just won unfortunately has some catches.

First of all, I'll probably start working with ARM and Intel as opposed to AMD and Intel... not because AMD is not a good source, but because from a business infrastructure perspective, Intel is better positioned to provide support. In addition, I'm looking into FPGA + CPU solutions, which AMD does not offer and does not even have on its road map.

Where AMD really missed the mark this time is that even if AMD delivers better performance with 64 cores than Intel does with 48 cores, with current storage technologies both CPUs are probably facing starvation anyway. The performance difference doesn't count anymore. If there's no data to process, there's no point in upping the core performance.

The other huge problem is that software is licensed on core count, not on sockets. As such, requiring 33% more cores to accomplish the same thing... even if it's faster can cost A LOT more money. I suppose if AMD can get Microsoft, Oracle, SAP, etc... to license on users or flops... AMD would be better here. But with software costs far outweighing hardware costs, fake cores (hyperthreading) are far more interesting than real cores from a licensing angle.
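A minimal sketch of the per-core licensing arithmetic in this comment, using the 2S core counts under discussion (2 × 32 cores for the EPYC 7601, 2 × 24 for the Xeon Platinum 8160). The per-core price is a hypothetical placeholder, and the model ignores the per-server minimums and edition rules that real core-based licensing schemes add.

```python
def per_core_license_cost(cores: int, price_per_core: float) -> float:
    """Software licence cost when the vendor charges per physical core."""
    return cores * price_per_core

PRICE_PER_CORE = 1_000.0  # hypothetical price per core, not a real quote

epyc_2s = per_core_license_cost(2 * 32, PRICE_PER_CORE)  # 2x EPYC 7601
xeon_2s = per_core_license_cost(2 * 24, PRICE_PER_CORE)  # 2x Xeon Platinum 8160

print(f"EPYC 2S: ${epyc_2s:,.0f}")                       # $64,000
print(f"Xeon 2S: ${xeon_2s:,.0f}")                       # $48,000
print(f"Extra licensing: {epyc_2s / xeon_2s - 1:.1%}")   # 33.3%
```

Under linear per-core pricing, the licence bill scales with the 33% core-count difference regardless of the price per core chosen.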

We already have virtualization sorted out. We have our Windows Servers running as VMs and unless you have far too many VMs for no particular reason or if you're simply wasting resources for no reason, you probably are far over-provisioned anyway. I know very few enterprises who really need more than two half-racks of servers to handle their entire enterprise workload... and I work with 120,000+ employee enterprises regularly.

So, then there's the other workload. There's Cloud. Meaning business systems running on a platform which developers can code against and reliably expect it to run long term. For these systems, all the code is written in interpreted or JITed languages. These platforms look distinctly different than servers. You would never for example use a 64-core dual socket server in a cloud environment. You would instead use extremely low cost, 8-16 core ARM servers with low cost SSD drives. You would have a lot of them. These systems run "Lambdas" as Amazon would call them and they scale storage out across many nodes and are designed for massive failure and therefore don't need redundancy like a virtualized data center. A 256 node cluster would be enough to run a massive operation and cost less than 2 enterprise servers and a switch. It would have 64TB aggregate storage or about 21TB effective storage with incredible response times that no VM environment could ever dream of providing. You'd buy nodes with long term support so that you can keep buying them for 10-20 years and provide a reliable platform that a business could run on for decades.
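The 64TB aggregate versus roughly 21TB effective figures in this comment are consistent with 256 nodes of 250GB each, with every object stored three times. Both the per-node size and the 3-way replication are inferred assumptions, not stated in the comment; this sketch just makes the division explicit.

```python
def effective_capacity_tb(nodes: int, tb_per_node: float, replicas: int) -> float:
    """Usable capacity when every object is stored `replicas` times across the cluster."""
    if replicas < 1:
        raise ValueError("replicas must be >= 1")
    return nodes * tb_per_node / replicas

raw = 256 * 0.25  # assumed 250 GB per node -> 64 TB aggregate
usable = effective_capacity_tb(256, 0.25, replicas=3)  # assumed 3-way replication

print(f"raw {raw:.0f} TB, usable {usable:.1f} TB")  # raw 64 TB, usable 21.3 TB
```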

So, again, AMD doesn't really have a home here... not unless they will provide a sub-$100 cloud node (storage included).

I'm a huge fan of competition, but I just simply don't see outside of HPC where AMD really fits right now. I don't know of any businesses looking to increase their Windows/VMWare licensing costs based on core count. (each dollar spent on another core will cost $3 on software licensed for it). It's a terrible fit for OpenStack which really performs best on $100 ARM devices. I suppose it could be used in Workstations, but they are generally GPU beasts not CPU. If you need CPU, you'd prefer MIC which is much faster. There are too many storage and RAM bottlenecks to run 64-core AMD or 48-core Intel systems as well.

Maybe it would be suitable for a VDI environment. But we've learned that VDI doesn't really fit anywhere in enterprise. And to be fair, this is another place where we've learned that GPU is far more valuable than CPU as most CPU devoted to VDI goes to waste when there is GPU present.

You could have a point... but I wonder if it's just too little too late.

I also question the wisdom of investing tens of thousands of dollars in an untested platform. Consider that even if AMD is a better choice, running even a basic test would require investing in 12 sockets' worth of these chips. Testing it properly would require a minimum of $500,000 worth of hardware and, let's assume, about $200,000 in human resources to get a lab up and running. If software is licensed (not trial), consider another $900,000 for VMware, Windows and maybe Oracle or SQL Server. That's a $700,000 investment ($1.6 million with licensed software) to see if you can save a few thousand on CPUs.

While Fortune 500s could probably waste that kind of money on an experiment, I don't see it making sense to smaller organizations who can go with a proven platform instead.

I think these processors will probably find a good home in Microsoft's Azure, Google's Cloud and Amazon AWS and I wish them well and really hope AMD and the cloud beasts profit from it.

In the meantime, I'll focus on moving our platforms to cloud systems, which generally work best on Raspberry Pi-style systems.